Author: Kuri, Deep Tide TechFlow

A few days ago, a photo went viral online.

India held an AI summit, and Prime Minister Modi stood on stage flanked by a row of Silicon Valley heavyweights. During the photo session, Modi took the hand of the person next to him and raised it over his head. The others followed suit, joining hands in a show of unity.

Only two people did not hold hands.

The CEOs of OpenAI and Anthropic, the companies behind ChatGPT and Claude, stood next to each other, each raising a fist.

No hand-holding, no eye contact, like two rivals a teacher had forced to share a desk.

The two companies have been fierce competitors for years; Anthropic was founded by a team that left OpenAI. They compete for users, enterprise clients, and funding. During this year's Super Bowl, Anthropic even paid for an ad mocking ChatGPT for bringing in advertising.

So it was no surprise that they didn't hold hands.

Today, though, they joined hands. The reason: the Pentagon.

Here’s what happened.

Last year, Anthropic, the company behind Claude, signed a contract worth up to $200 million with the U.S. Department of Defense. Claude became the first AI model deployed on the U.S. military's classified networks, helping with tasks such as intelligence analysis and mission planning.

But Anthropic drew two red lines in the contract:

Claude may not be used for mass surveillance of U.S. citizens, and it may not be used in fully autonomous weapons that operate without human involvement. (Reference: The Seventy-Two Hours of Anthropic's Identity Crisis)

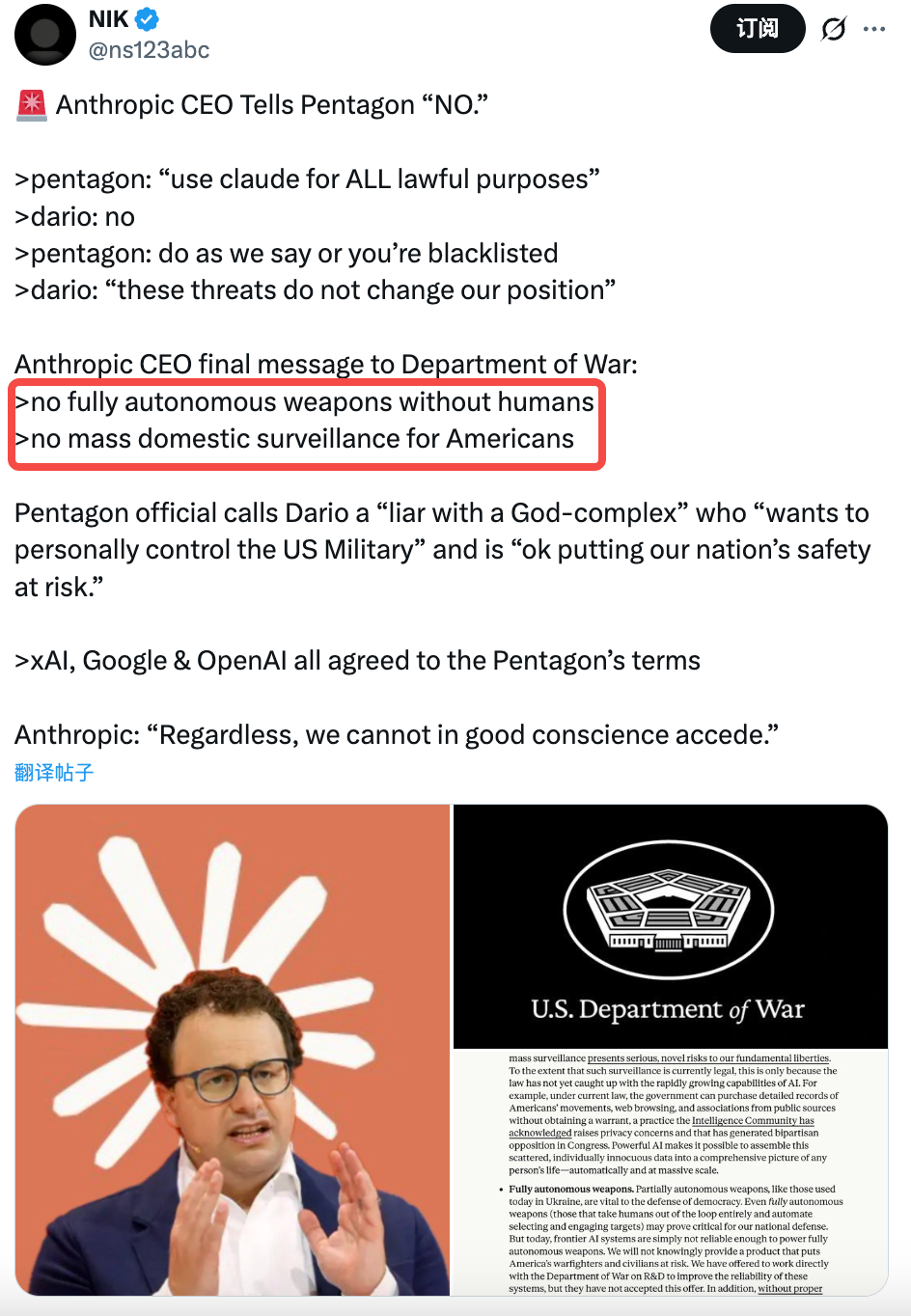

The Pentagon would not accept this.

Its demand boiled down to two words: no restrictions. Once a tool has been bought, the buyer should be free to use it as it sees fit; what right does a tech company have to tell the U.S. military what it can and cannot do?

Last Tuesday, Defense Secretary Hegseth gave Anthropic's CEO an ultimatum: agree before 5:01 p.m. on Friday, or face the consequences.

Anthropic did not agree.

Its CEO issued a public statement saying that the company fully understands the importance of AI to U.S. defense, but that in a small number of cases AI can undermine, rather than uphold, democratic values, and that it cannot in good conscience accept this demand.

The Pentagon's lead negotiator, Under Secretary of Defense Emil Michael, then called him a liar on social media, saying he had a God complex and was playing games with national security.

Brief Hand-Holding

Then, something unexpected happened.

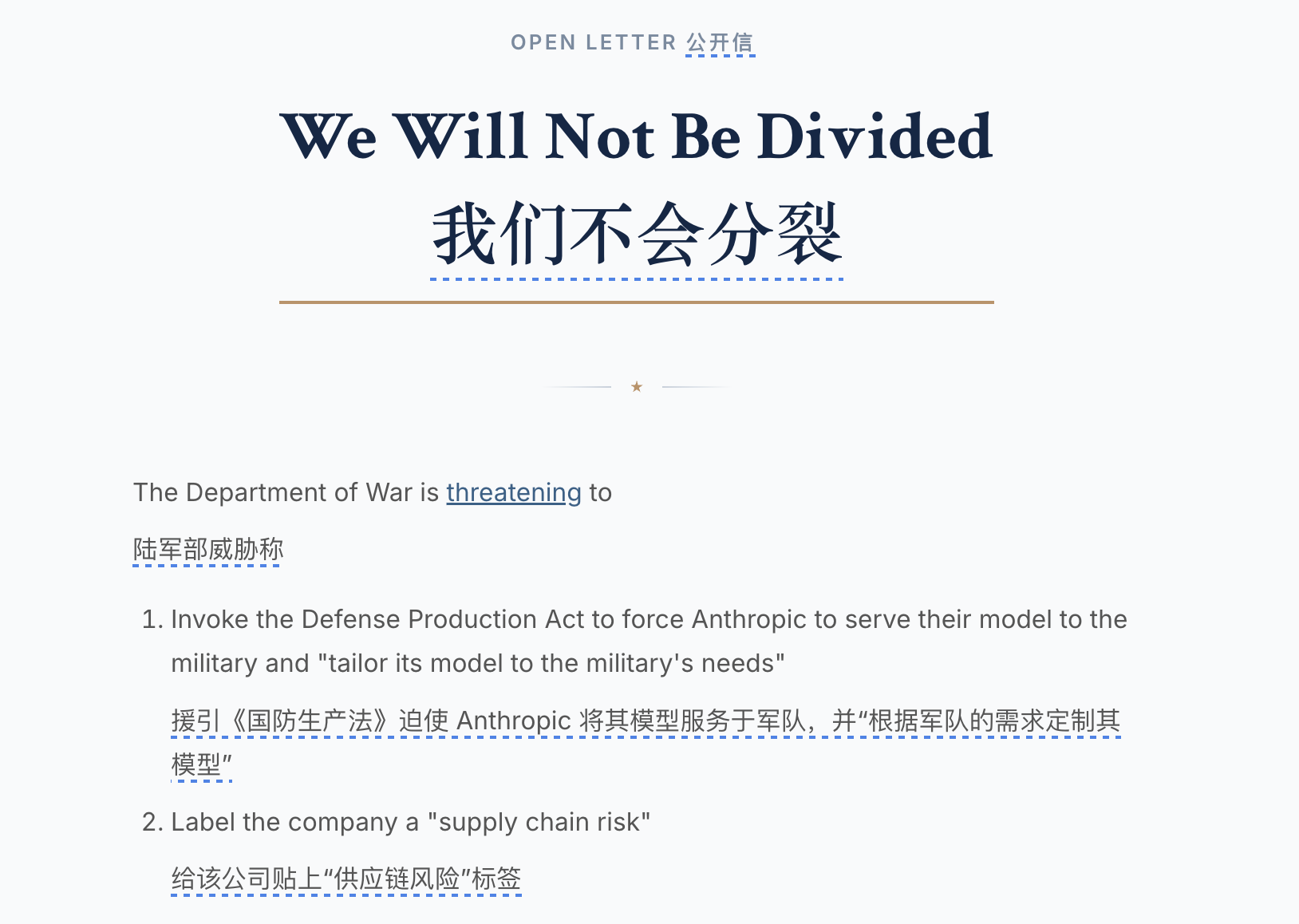

More than 400 employees of OpenAI and Google signed a joint open letter titled "We Will Not Be Divided."

The letter accused the Pentagon of negotiating with AI companies one by one, trying to get the others to accept the terms Anthropic had refused and using fear to divide them.

OpenAI's CEO also sent an internal memo to all staff, saying that OpenAI has the same red lines as Anthropic:

No large-scale surveillance, no autonomous lethal weapons.

Two companies that had refused to hold hands just days earlier suddenly found themselves on the same side, thanks to the Pentagon.

That unity, however, lasted only a matter of hours.

At 5:01 p.m. on Friday, the Pentagon's ultimatum expired. Anthropic did not sign.

A $380 billion American tech company, facing the loss of a $200 million contract, said no to the U.S. Department of Defense. In the past, a dispute like this would simply have ended with the Pentagon switching suppliers. This time, however, Washington's response went far beyond the commercial.

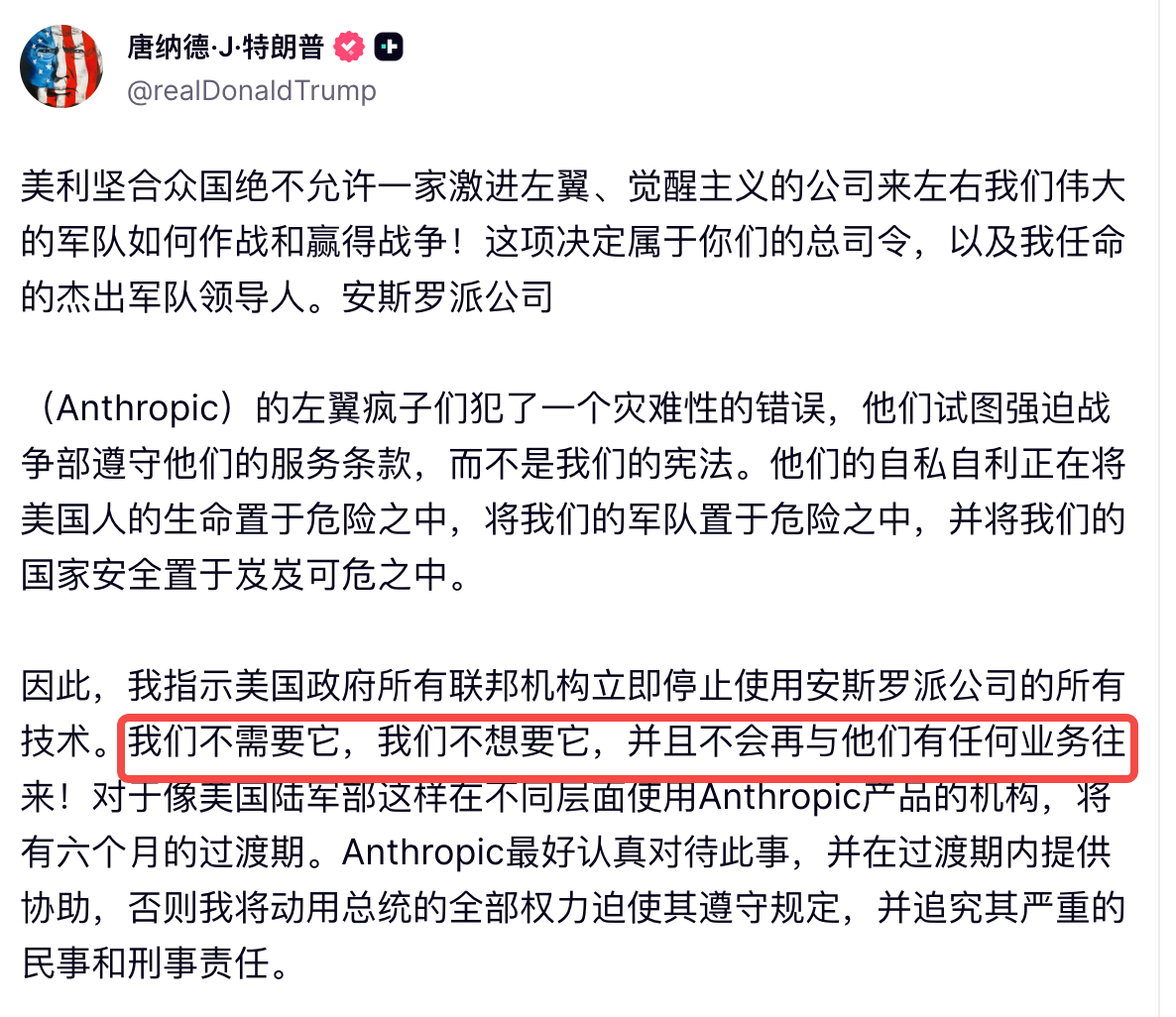

About an hour later, Trump posted on Truth Social, calling Anthropic "leftist lunatics" and accusing the company of trying to place itself above the Constitution and of putting the lives of American soldiers at risk.

He ordered all federal agencies to stop using Anthropic's technology immediately.

Defense Secretary Hegseth then announced that Anthropic would be designated a "supply chain security risk," a label usually reserved for companies like Huawei. The implication is clear: no contractor doing business with the U.S. military may use Anthropic's products.

Anthropic said it would sue.

That same evening, OpenAI, which had just declared the very same stance, signed an agreement with the Pentagon.

Ideological Issues

What did OpenAI gain?

The seat Claude had just been pushed out of: AI supplier for the U.S. military's classified networks. But OpenAI set three conditions for the Pentagon: no mass surveillance, no autonomous weapons, and human involvement in high-risk decisions.

The Pentagon agreed.

You read that right. Conditions the Pentagon had refused to accept from Anthropic for weeks were agreed within days once a different company proposed them.

To be fair, the two companies' proposals were not identical.

Anthropic wanted one more layer of protection. It argues that current law has not kept pace with AI: for example, your location data, browsing history, and social media information can legally be bought and then aggregated with AI, producing what amounts to surveillance even though no single step is illegal.

In Anthropic's view, simply writing "no surveillance" into the contract is useless unless this loophole is closed. OpenAI did not press the point; it accepted the Pentagon's argument that existing law is sufficient.

But it would be naive to see this as a mere dispute over contract clauses. From the start, this negotiation was never only about the terms.

White House AI czar David Sacks has publicly accused Anthropic of building "woke AI" (putting ideology and political correctness first); senior Pentagon officials have told the media that the problem with Anthropic CEO Dario Amodei is ideological: "We know who we are dealing with."

Elon Musk, whose xAI competes directly with Anthropic, attacked the company repeatedly on X this week, saying it "hates Western civilization."

Moreover, Anthropic's CEO did not attend Trump's inauguration last year. OpenAI's CEO did.

Making an Example

So let's summarize what happened.

Same principles, same red lines. Anthropic asked for one extra layer of safeguards, stood on the wrong side, and struck the wrong pose, and it was branded a so-called national security threat on a par with Huawei.

OpenAI asked for one layer less, kept up good relations, and won the contract. Is this a victory for principles, or a price tag on them?

This is not the first time a Pentagon contract has met resistance.

In 2018, more than 4,000 Google employees signed a petition, and about a dozen resigned, in protest against the company's work on a Pentagon program called Project Maven. The project used AI to analyze drone footage, helping the military identify targets faster.

Google ultimately backed out and did not renew the contract. The employees won.

Eight years later, the same controversy has returned, but this time the rules have changed completely. An American company said it would do business with the military but would not do two things, and the U.S. government responded by expelling it from the entire federal system.

And the "supply chain security risk" label does far more damage than the loss of a $200 million contract.

Anthropic's revenue this year is around $14 billion, against which $200 million barely counts as pocket change. The label, however, means that no company doing business with the military can use Claude.

Those companies do not have to agree with the Pentagon; they only have to weigh the risk. Keep using Claude and risk losing government contracts; switch to another model and nothing happens.

The choice is easy. That is the real signal sent by this episode.

Whether Anthropic can survive this matters less than whether the next company will dare to take a stand. That company will look at this outcome, weigh the cost of sticking to its principles, and make a very rational decision.

Look back at that photo from India: everyone else with joined hands raised overhead, and only those two standing with clenched fists.

Perhaps this is the new norm.

AI companies may share the same principles and still be unable to hold hands.

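Disclaimer: This article represents only the author's personal views and does not represent the position or views of this platform. It is provided for information sharing only and does not constitute investment advice to anyone. Any dispute between users and the author has nothing to do with this platform. If any article or image published on this page infringes your rights, please email proof of rights and proof of identity to support@aicoin.com, and the platform's staff will review it.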