Author: Curry, Deep Tide TechFlow

OpenAI recently released a report detailing how people misused ChatGPT for malicious purposes and were caught.

The report is lengthy and lists numerous cases of AI abuse. They include Russian actors running disinformation campaigns and suspected spies using social engineering, but today I want to discuss one specific case:

The Cambodian pig butchering scheme.

Pig butchering itself is nothing new; everyone has heard plenty of stories about Cambodian scam compounds, the so-called "parks." What is remarkable is the role AI plays in it.

In this scam ring, ChatGPT handled the romance conversations, translated supervisors' instructions, wrote the daily work reports, and estimated the value of each victim.

Inside the operation, that last figure has a name: "kill value," an estimate of how much money can be extracted from you.

Throughout the entire production line, ChatGPT might be the busiest employee.

OpenAI codenamed this case Operation Date Bait.

The process goes like this.

The scam group first created a fake high-end dating service called Klub Romantis, with a logo made by ChatGPT. They then paid for advertisements on social media, choosing keywords like golf, yachts, and upscale restaurants, specifically targeting young men from Indonesia.

You click on the ad and start by chatting with an AI chatbot. The bot plays the role of a sultry receptionist, letting you choose what type of girls you like, and after you finish, it gives you a Telegram link with a unique invite code.

After arriving on Telegram, a real person takes over.

The receptionist continues to use ChatGPT to generate suggestive messages, becoming increasingly explicit, and then guides you to two fake dating platforms, one called LoveCode and the other called SexAction.

On these platforms, there are numerous fake female profiles, along with a scrolling news ticker continuously announcing "Congratulations to someone for completing a task and unlocking a bonus." All of this is fabricated. Experienced internet users would spot it at a glance, but not every target is that cautious.

Once the conversation reaches the right point, the receptionist hands you over to a "mentor." The mentor then begins assigning you "tasks," each requiring payment, with the amounts progressively increasing: buying VIP cards, voting for your "dream girl," paying hotel deposits, and so on.

The final step is known internally as the "kill."

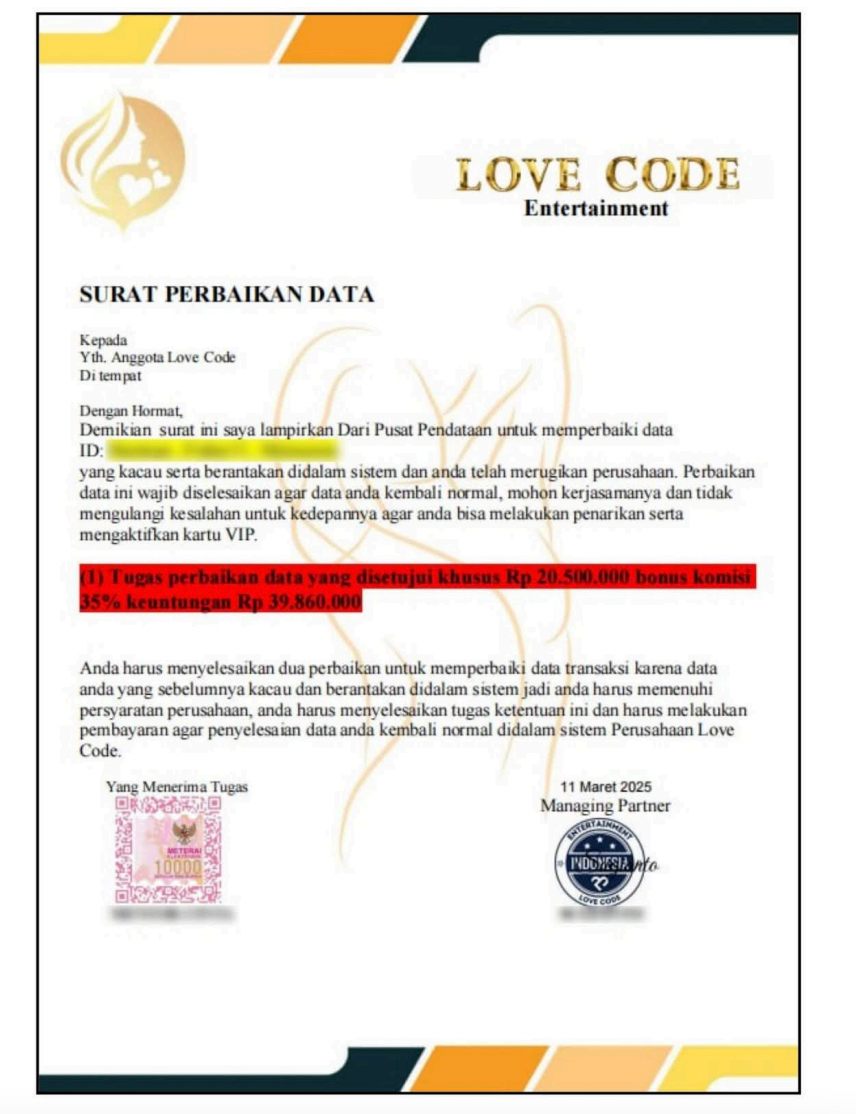

The scammers invent an excuse, such as a data processing error or a verification deposit, and persuade you to transfer a large sum all at once. OpenAI's report includes a letter the scam group sent to a victim demanding payment of 20.5 million Indonesian rupiah (roughly $1,200 at recent exchange rates) and claiming that a 35% bonus would be refunded after payment.

Once the money is received, the scammer blocks you on Telegram and marks the case as closed.

You might think there's nothing shocking here.

The scam itself isn't new; the tactics of pig butchering schemes have been exposed many times over the years. What is truly striking is what goes on behind the scenes.

OpenAI's investigators were able to piece together a complete corporate structure from the usage records of these ChatGPT accounts:

The scam park is divided into three departments: the traffic generation team, the reception team, and the supervisor team. The traffic generation team is responsible for running ads to attract people, the reception team handles chatting to build trust, and the supervisor team is in charge of the final harvest.

There are daily reports. Each report lists every victim being processed, the name of the person responsible, the stage of progress, and that number:

Kill value.

That is, the amount the supervisor estimates can ultimately be extracted from that person.

They also used ChatGPT to analyze financial accounts and generate work reports, and even asked it how to connect to APIs and change the code of the dating site. Where the supervisors spoke Chinese and the employees spoke Indonesian, ChatGPT translated between the two sides.

Ironically, one of the scam workers asked ChatGPT how to handle taxes on the income earned, and honestly entered "scammer" as the occupation.

OpenAI's report uses restrained language. It notes that, based on the scam group's own inputs, the group may have been handling hundreds of targets simultaneously and raking in thousands of dollars each day. But the report also states that OpenAI cannot independently verify whether these numbers are accurate.

However, I think it’s not necessary to get tangled in whether the numbers are real or not; just looking at this management process is enough:

Traffic generation, conversion, average revenue per customer, daily reports, departmental division—swap out the terminology, and you might think you’re reading the operational manual of a SaaS company.

And with dating chats, translation, report writing, code changes, accounting and more, over half of the work in this park is done through ChatGPT.

The story isn’t over yet.

In the same report, OpenAI also uncovered a second operation, codenamed Operation False Witness, also originating from Cambodia.

This one targets not ordinary people, but those who have already been scammed.

The logic is simple: you were scammed in a pig butchering scheme, you want to get your money back, and you search online for solutions.

Then you see an ad for a law firm that says it specializes in helping scam victims recover their losses. You click through.

The website looks credible. The lawyer photos are either real profile pictures stolen from social media or AI-generated. Each law firm has an address, a license, and an introduction. ChatGPT was used to generate membership cards for the New York State Bar Association and even fake lawyer registration records.

OpenAI discovered at least six fake law firms in total.

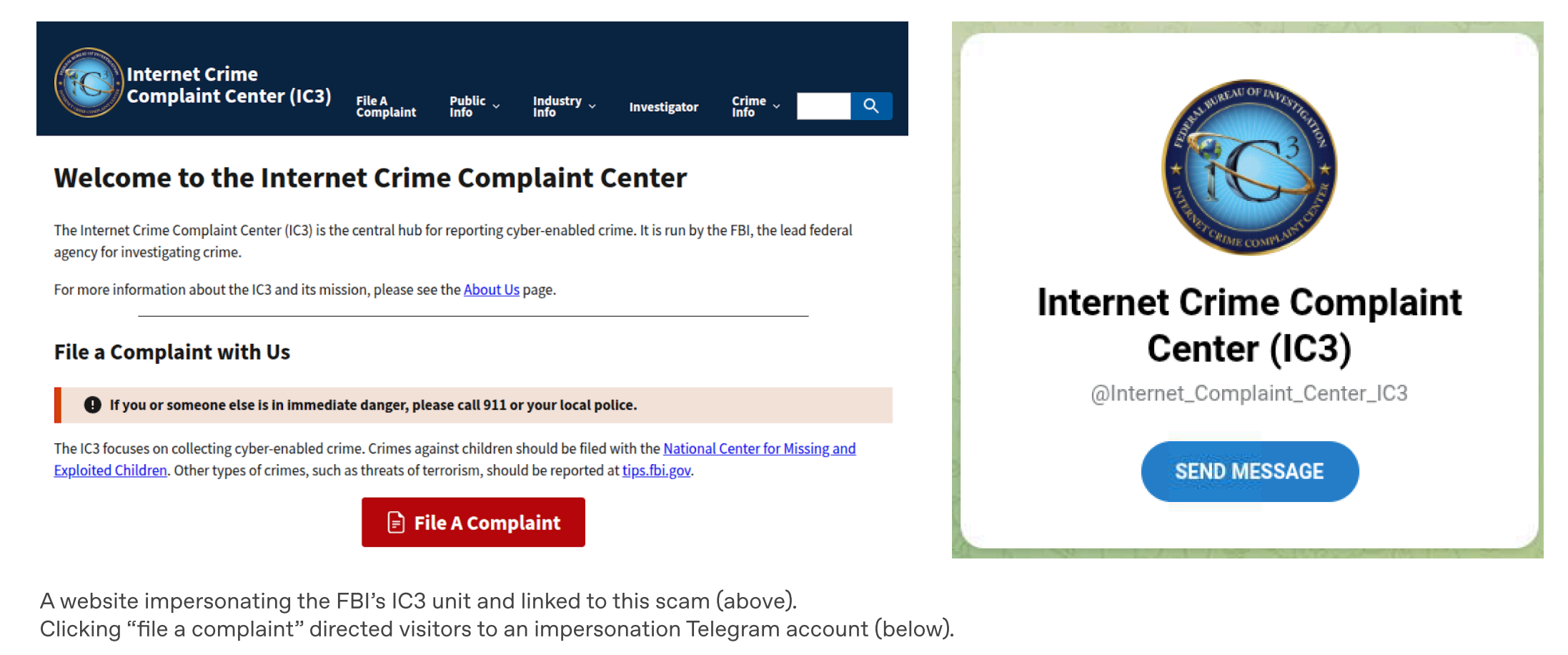

There is also a website that directly impersonated the FBI's Internet Crime Complaint Center. The page has a "Submit Complaint" button; clicking it redirects you to a Telegram account.

Once you are on Telegram, the "lawyer" starts chatting with you. The script is generated by ChatGPT and specifically crafted in "American English" to mimic a professional lawyer's tone. They tell you that they have a partnership with the International Criminal Court and do not charge until funds are recovered.

However, you must first pay a 15% service fee to activate the account, payable in cryptocurrency.

They also ask you to sign a confidentiality agreement. The agreement, likewise written by ChatGPT, exists to keep you from seeking verification elsewhere.

The FBI later issued a public warning specifically about this matter, stating that the scam mainly targets the elderly and exploits their eagerness to recover their losses.

After reading these two cases, here is what strikes me as most ironic in a world where AI has become standard equipment:

The first time you were scammed, you were a target. The second time you were scammed, you were a better target, because you have already proven that you can be deceived.

Finally, the report distills the scam process into three steps.

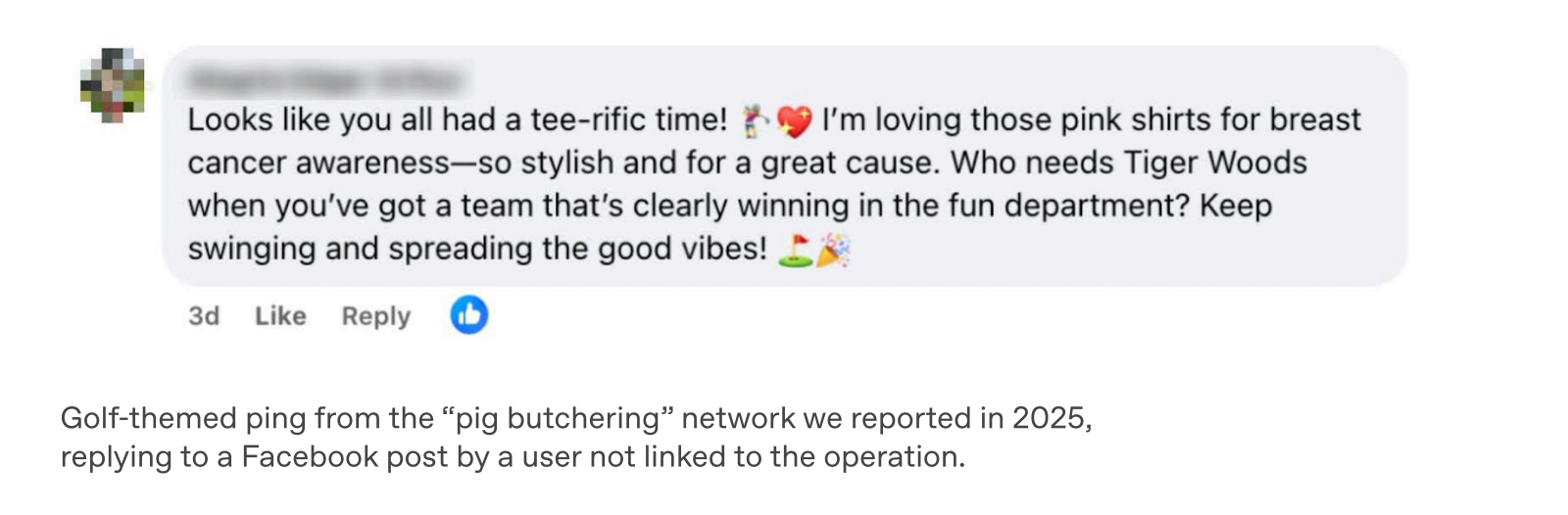

The first step is the "ping": cold outreach, finding ways to get the target's attention. The second is the "zing": stirring up emotion so that you feel excited or fearful. The third is the "sting": harvesting the money.

It is a neat framework. Look closely: which of these three steps can AI not do?

In the past, the largest cost of a pig butchering scheme was labor. You had to hire a room full of people to chat in front of computers, and they had to speak the target's language. In the early days, Cambodian parks would even recruit specifically for good English skills and pay high wages for them.

Now look at the dating scam case in the report: the supervisors speak Chinese, while the employees and the targets speak Indonesian. With supervisors and staff lacking a common language, this work would have been nearly impossible to carry out before. Add ChatGPT, and it all becomes seamless.

Language is just one aspect.

The report includes another detail: scam workers even asked ChatGPT how to connect to OpenAI’s API, intending to fully automate the chatting process.

This means that AI does not make scams more sophisticated; the scams remain the same. AI makes scams cheaper.

According to OpenAI, this group may have been handling hundreds of scam cases simultaneously. As the scale increases, the labor cost per victim falls, allowing the group to scam more people at lower cost.

There’s one more issue that I think is worth pondering.

OpenAI was able to discover all this because the scam group used ChatGPT, and the chat records are stored on OpenAI's servers.

What about those using locally deployed open-source models?

This report can only show the small piece of the puzzle that OpenAI is able to see. How vast the areas it cannot see are, nobody knows.
