The Next Generation of AI Infrastructure Is Agent Harness

Written by: Zoey Zhou, Foresight Ventures

For the past two years, the AI industry has been dominated by a single question: whose model is stronger?

This question is certainly important. Improving model capabilities was the starting point of this wave of AI. But as models gradually become the underlying capability for all products and workflows, the focus of competition is beginning to shift. More and more companies are finding that the real challenge is not just getting the model to answer better, but getting it to complete tasks stably, reliably, and continuously in real-world environments.

This is also why we have recently been increasingly focused on Agent Harness.

If models are the brains of agents, then Harness is the system that allows this brain to truly enter the world and start working. It includes context, tools, runtime environments, permissions, risk control, evaluation, tracing, and feedback. Without these elements, no matter how strong the model is, it stays confined to a chat window; with them, agents can move from "can answer" to "can execute."

This article does not discuss yet another agent application opportunity, but rather a more fundamental question: as the software world begins to be rewritten for agents, which infrastructure will become important? Which opportunities are worth investing in? Which look hot but may struggle to form long-term barriers?

We will start from a new coordinate system, To-Agent, then discuss why Harness will become a key layer of the agent economy, along with the investment opportunities and risks we see. Finally, we will use trading agents as a vertical scenario to illustrate why execution trajectories and result feedback may become the most valuable data assets in the next generation of AI infrastructure.

1. From To-B/To-C to To-Agent

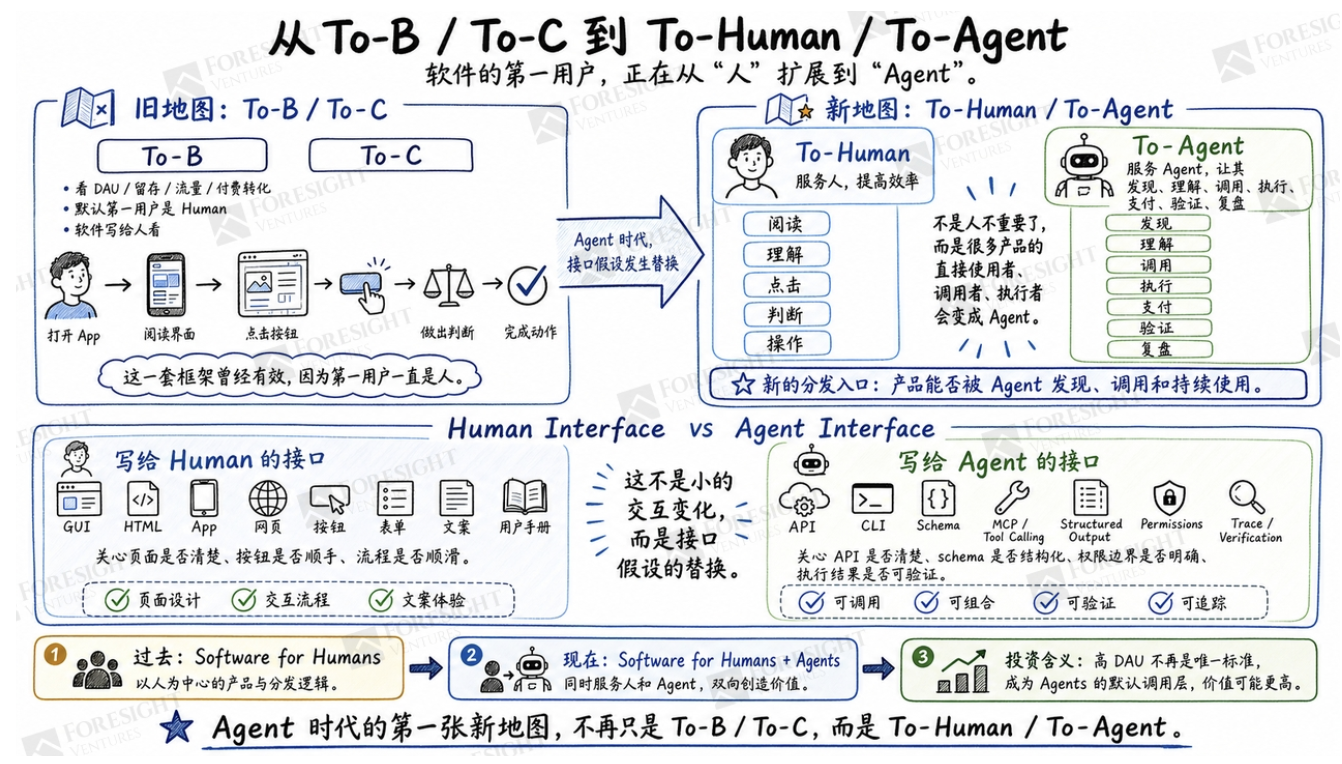

Many judgment frameworks from the internet era are becoming insufficient today.

In the past, when assessing a product, we would ask whether it was To-B or To-C, what the DAU was, what retention looked like, where the traffic came from, and how well it converted to paid. This method was effective because the primary users of software have always been people. People open apps, read interfaces, click buttons, make judgments, and then take action.

But the agent era is changing this assumption.

In more and more scenarios, what actually enters the system, reads information, calls tools, and initiates operations is no longer a person directly, but an agent acting on behalf of a person or organization. An agent does not care whether the page is beautifully designed, nor does it need a traditional user manual. What it cares about is whether the API is clear, whether the schema is structured, whether permission boundaries are explicit, whether tool descriptions are readable, and whether execution results can be verified.
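To make these criteria concrete, here is a minimal sketch of what an agent-facing tool description might look like. Everything in it is an assumption for illustration: `ToolSpec`, `call_tool`, the `market:read` scope, and the stubbed `get_price` tool are hypothetical names, not the API of MCP or of any real product.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class ToolSpec:
    """Hypothetical machine-readable description of one callable tool."""
    name: str
    description: str          # what the agent reads instead of a user manual
    params: dict[str, type]   # structured schema: parameter name -> expected type
    required_scope: str       # explicit permission boundary
    handler: Callable[..., dict[str, Any]]


def call_tool(spec: ToolSpec, granted_scopes: set[str], **kwargs: Any) -> dict[str, Any]:
    """Check permissions and arguments against the spec, then run the tool."""
    if spec.required_scope not in granted_scopes:
        raise PermissionError(f"{spec.name} requires scope {spec.required_scope!r}")
    missing = set(spec.params) - set(kwargs)
    extra = set(kwargs) - set(spec.params)
    if missing or extra:
        raise ValueError(f"bad arguments: missing={sorted(missing)}, extra={sorted(extra)}")
    for key, expected in spec.params.items():
        if not isinstance(kwargs[key], expected):
            raise TypeError(f"{key} must be {expected.__name__}")
    return spec.handler(**kwargs)


def get_price(symbol: str) -> dict[str, Any]:
    # Stub data for illustration only; a real tool would query a live system.
    return {"symbol": symbol, "price": 100.0, "verified": True}


price_tool = ToolSpec(
    name="get_price",
    description="Return the latest price for a trading symbol.",
    params={"symbol": str},
    required_scope="market:read",
    handler=get_price,
)

# The caller holds the required scope and passes well-typed arguments.
result = call_tool(price_tool, {"market:read"}, symbol="BTCUSDT")
```

The point of the sketch is that the description, the schema, and the permission boundary all live in data the agent can inspect before it calls anything, rather than in a page designed for human eyes.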

This is not a minor change in interaction but a replacement of the interface assumption itself.

In the past, software was written for people to see, so we optimized pages, buttons, copy, and processes; now, more and more systems need to be written for agents to use, so they must become callable, composable, verifiable, and traceable.

Therefore, beyond To-B and To-C, a new dimension is becoming important: To-Agent.

To-Agent does not mean that people are no longer important; rather, many of the direct users, callers, and executors of products will become agents. The ultimate payer may still be a business or an individual, but whether the product can be discovered, called, and continuously used by agents will become the new distribution entry point.

This has a significant impact on investment judgments. A product may not have a high DAU in the traditional sense, but if it becomes the default calling layer for a large number of agents, its value will be very high. Conversely, a product that looks complete to human users but cannot be read, called, and composed by agents may be bypassed by new infrastructure in the future.

This is also why we believe that the first new map in the age of agents is not To-B/To-C but To-Human/To-Agent. The former serves people, increasing their efficiency; the latter serves agents, allowing agents to discover, understand, call, execute, pay, verify, and review.

2. The Giants Are Not Competing for Model Functions but for Agent Entry

Over the past year, several leading companies have appeared to take different actions: some are working on protocols, some on skills, some on agent builders, some on payment channels, and some on wallet tools. Looking at them individually, these seem like scattered feature releases; viewed together, they are actually competing for the same position: the entry point for agents into real systems.

Anthropic is the clearest example.

MCP addresses how agents connect to external systems. When Anthropic released MCP, it defined it as an open standard for establishing secure bidirectional connections between AI-powered tools and external data sources. In other words, what MCP aims to solve is not the chat experience itself, but how agents enter into data sources, development environments, and business systems.

Agent Skills address another problem: how agents acquire specialized workflows. Anthropic's explanation of Skills is intuitive: it likens building a skill to writing an onboarding guide for a new employee. A skill can contain instructions, scripts, reference materials, and processes that let agents perform specific tasks more reliably and that can be reused across tasks.

The significance of Claude Code also lies here. It is not a simple coding assistant but a system for executing long-running tasks. It can read code, modify files, call tools, run tests, handle errors, and loop continuously until tasks are completed. Coding is one of the earliest workflows in which agents have been fully validated because it inherently has structured environments, clear feedback, executable tools, and relatively clear result verification.

Anthropic's rise owes much to designing agents as systems for executing long-running tasks rather than simply adding more features. Its core advantages lie not only in its models but also in the systems around the models that make execution possible.

OpenAI's AgentKit is moving in the same direction. It bundles the tools needed to build, deploy, and optimize agents, aiming to make it easier for businesses and developers to get from prototype to production. The key terms in OpenAI's own introduction are not chat, but builder, connectors, eval, deployment, and optimization.

Coinbase and Cloudflare's promotion of x402 indicates that the agent economy requires not only tool invocation but also payment and settlement channels. When introducing the x402 Foundation, Coinbase mentioned that x402 embeds payments into web interactions through the HTTP "402 Payment Required" status code, enabling AI agents, APIs, and apps to complete value exchanges just like exchanging data. Cloudflare also describes x402 as a framework for clients and services to exchange value using a common language. Coinbase AgentKit further transforms wallets and on-chain interactions into components that AI agents can call, emphasizing a framework-agnostic and wallet-agnostic crypto-native agent toolkit.

Bitget's actions represent a different kind of entry-point competition, in the vertical scenario of trading agents. Unlike x402, which is focused on the payment protocol, and Coinbase AgentKit, which is focused on on-chain wallets and interactions, Bitget Agent Hub is aimed directly at trading execution. Bitget combines MCP, API, Skills, and CLI into one invocation system that connects AI models, development tools, and live trading execution. The Agent Hub is positioned as AI-era crypto trading infrastructure, providing trading capabilities to developers and Vibe Coders.

The significance of this is not just that "exchanges have added a few AI tools." More accurately, Bitget is attempting to answer a more specific question: if a trading agent is to truly enter the CEX scenario, what kind of complete harness does it need? It needs tools, interfaces, strategy skills, command-line invocation, and permission isolation, and going forward it will also need execution trajectories, result evaluations, and risk control feedback. Bitget has taken the first step, but this path has no industry standards yet. Whoever makes the entire chain from discovery, access, and authorization through execution, verification, and review more complete is more likely to win the entry point in the high-value scenario of trading agents.

Looking at these actions together shows that AI infrastructure is expanding from the Model Stack to the Agent Stack. Future competition will involve not only model capabilities but also how agents connect to systems, obtain permissions, call tools, pay, execute, verify, and learn.

This also raises the central question of this article: when agents are no longer just chat windows but start entering real systems to perform tasks, what exactly is missing in between?

3. From Can Answer to Can Execute, What’s Missing in Between is Harness

To understand the agent era, one can remember a simple formula:

The model provides intelligence; the Harness provides the execution system.

In the past, everyone focused on the model, which is reasonable. Without sufficiently strong models, there would be no agents today. But as model capabilities continue to improve, many bottlenecks begin to shift. The real concerns for enterprises become: can this agent be integrated into my system? Can it obtain appropriate permissions? If something goes wrong, can responsibilities be traced? Is the execution process visible? Who judges whether the results are good or not? Can it learn from failures?

These questions all belong to Harness.

A complete Harness is not just a tool invocation framework. Agents need context: an understanding of the current task, business background, historical state, and organizational rules. They need tools and skills to call external systems and reuse workflows that are already established in a given domain. They need runtime and orchestration to handle long tasks, multi-step executions, failure recovery, and parallel collaboration. They need identity and permissions that make clear whom they represent, what they can do, and what they cannot do. They also need guardrails, evaluation, trace, and feedback to record the execution process, assess results, and improve future executions.

This is why we tend to view Harness as the OS layer rather than an ordinary SaaS function.

Today, there are many single-point products on the market: memory tools, tool-calling tools, orchestration tools, observability tools, and evaluation tools. They all have value, and many good companies will emerge from them in the early stages. But in the long run, both the risk and the value of agents come from the closed loop. A single layer that cannot connect with the other layers is easily reduced to a transitional tool.

When tools lack permissions and risk control, the stronger the automation, the greater the risk. Tracing without identity and authorization collects only low-quality logs. Orchestration without evaluation can lead agents to go wrong with confidence. Memory without feedback grows increasingly cluttered. Evaluation that cannot influence runtime decisions is limited to after-the-fact scoring.

The value of an operating system has never been a single module but the stable collaboration formed between various modules. Agent Harness is the same.

4. Our View on Investment Opportunities

Within this framework, Agent Harness is not a single track but a group of infrastructure opportunities.

The first type of opportunity is at the discovery and protocol layer. Agents need to locate external systems, understand what they can do, and call them in a standardized manner. MCP servers, tool registries, skill marketplaces, structured documentation, and machine-readable APIs will become new distribution entry points. The potential here is significant, but it is also easy to overstate. Simply creating directories or protocols does not necessarily yield long-term value. The real value lies in the usage, workflows, and data built on top of these protocols.

The second type of opportunity exists in the context and skill layer. General models know a lot, but real work relies on a vast amount of organizational and industry context. Law, finance, healthcare, security, chip design, auditing: every industry has its own processes, terminology, templates, compliance requirements, and exceptions. Those who can structure this experience into context and skills usable by agents are likely to form barriers in vertical fields.

The third type of opportunity is found in runtime and orchestration layers. Agents need state management, failure recovery, cost control, sandbox environments, and multi-agent collaboration to undertake long tasks. This layer’s demand is clear, but the competition will be fierce. Model companies, cloud providers, and open-source frameworks will all enter. We are more focused on teams that capture specific high-value scenarios rather than companies that generically build workflow builders.

The fourth type of opportunity is in evaluation, observability, and trace. We think this is an underrated layer. Many agent projects fail not because the model cannot perform, but because enterprises do not know why the agent is doing what it does, which step it has reached, where it went wrong, where costs were incurred, and whether the results are credible. Trace and evaluation may look like developer tools in the short term but could become the data infrastructure of the agent era in the long term. Trace without eval is just logging; when trace and eval are combined, they may turn into a training and judgment system.
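The difference between trace alone and trace plus eval can be sketched in a few lines. This is an illustrative example under our own assumptions: the `Step`, `Trace`, and `evaluate` names, the field layout, and the invoice task are all hypothetical, and the evaluation rule (the first failed step determines the label) is deliberately simplistic.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class Step:
    name: str
    ok: bool
    cost_usd: float


@dataclass
class Trace:
    """Raw trace: on its own, this is just a structured log."""
    task: str
    steps: list[Step] = field(default_factory=list)

    def record(self, name: str, ok: bool, cost_usd: float) -> None:
        self.steps.append(Step(name, ok, cost_usd))


def evaluate(trace: Trace) -> dict[str, Any]:
    """Attach a judgment to the trace: how far it got, where it failed, what it cost."""
    failed_at = next((s.name for s in trace.steps if not s.ok), None)
    return {
        "task": trace.task,
        "steps_completed": sum(s.ok for s in trace.steps),
        "failed_at": failed_at,
        "total_cost_usd": round(sum(s.cost_usd for s in trace.steps), 4),
        "label": "fail" if failed_at else "pass",
    }


# Hypothetical three-step task in which the last step fails.
trace = Trace(task="reconcile_invoice")
trace.record("fetch_invoice", ok=True, cost_usd=0.002)
trace.record("extract_fields", ok=True, cost_usd=0.010)
trace.record("post_to_ledger", ok=False, cost_usd=0.004)

report = evaluate(trace)
```

Here `report` answers the questions listed above (which step was reached, where it went wrong, where cost was incurred) and carries a label, which is what turns a log line into a potential training or judgment record.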

The fifth type, and the one we are most optimistic about, is the vertical harness. It is not about creating a vertical agent app, but about connecting context, tools, workflows, permissions, guardrails, evaluations, traces, and feedback loops within a high-value field. Fields like law, healthcare, finance, trading, security, and chip design are particularly suitable because their tasks are high in value, errors are costly, processes are deep, results are assessable, and closed data loops are very valuable.

Correspondingly, we remain cautious about several directions.

A generic agent framework may seem to occupy a large position, but it risks being squeezed from above by managed agents from model companies and eaten from below by vertical workflow companies. Without a strong ecosystem, strong distribution, or real execution data, the middle layer is difficult to defend in the long term.

Pure model-wrapper applications should also be approached cautiously. Applications without workflow ownership, exclusive context, execution data, or distribution barriers are increasingly likely to be absorbed by model upgrades and platform capabilities.

Protocol narratives cannot be judged on popularity alone. Directions like MCP, x402, and A2A are worth tracking, but a protocol is not itself a business model. Ultimately, what matters is real adoption by developers, call volume, whether the protocol makes it into mainstream agent builders, and whether it becomes part of the actual execution chain.

Lastly, the data flywheel. Many companies will claim to have a data flywheel, but a true data flywheel requires data rights, a structured schema, and evaluation labels. Data collected without user authorization and compliance boundaries may ultimately be a risk rather than a barrier.

5. Trading Agent as a Stress Test for Harness

Trading agents are one of the best vertical cases for understanding Agent Harness.

The reason is simple: trading scenarios magnify all the issues with Harness at once.

Trading involves real funds and high error costs. Execution requires authorization, risk control must be real-time, the process must be auditable, results can be quantified, and markets operate 24/7. The value of a trading agent should not be judged only by its ability to place orders. More importantly, does it know whom it represents, which accounts it can access, how much risk it can take, which products it can trade, when it must stop, and how good the execution results are?

Therefore, a mature Trading Agent Harness should encompass a complete chain.

The agent must first be able to discover trading systems and understand interfaces and tools. Next, it needs identity and permissions, knowing who it represents and what it can do. Only then can it call functions such as market data, account management, order placement, order cancellation, position management, stop-loss orders, and hedging. Further, it needs to break down trading tasks into research, planning, execution, and verification phases to avoid any single agent holding excessive permissions and self-evaluation rights simultaneously.
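The phase split described above can be expressed as a simple separation-of-duties check. The sketch below is only an illustration under our own assumptions: the `Phase` enum, the scope strings such as `order:write` and `eval:write`, and the `CONFLICTING` set are hypothetical, and a real exchange would define its own permission model.

```python
from enum import Enum


class Phase(Enum):
    RESEARCH = "research"
    PLANNING = "planning"
    EXECUTION = "execution"
    VERIFICATION = "verification"


# Hypothetical scope names; each phase gets only what it needs.
PHASE_SCOPES: dict[Phase, frozenset[str]] = {
    Phase.RESEARCH: frozenset({"market:read"}),
    Phase.PLANNING: frozenset({"market:read", "account:read"}),
    Phase.EXECUTION: frozenset({"order:write"}),
    Phase.VERIFICATION: frozenset({"order:read", "eval:write"}),
}

# Scopes that no single phase may hold together:
# the right to trade and the right to grade the trade.
CONFLICTING: frozenset[str] = frozenset({"order:write", "eval:write"})


def separation_holds(phase_scopes: dict[Phase, frozenset[str]]) -> bool:
    """True if no phase holds all of the conflicting scopes at once."""
    return all(not CONFLICTING <= scopes for scopes in phase_scopes.values())


def authorize(phase: Phase, scope: str) -> bool:
    """True if the given phase is allowed to use the given scope."""
    return scope in PHASE_SCOPES[phase]


config_is_safe = separation_holds(PHASE_SCOPES)
executor_can_trade = authorize(Phase.EXECUTION, "order:write")
researcher_can_trade = authorize(Phase.RESEARCH, "order:write")
```

Checking the configuration once at startup, rather than trusting each agent to restrain itself, is one way to keep execution rights and self-evaluation rights from ending up in the same hands.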

Risk control is core to trading agents. Budget limits, maximum leverage, maximum drawdown, product restrictions, frequency limits, and anomaly triggers must all be written into the system rather than left to after-the-fact reminders. Evaluation cannot focus only on PnL; short-term profits might come from excessive risk-taking, while short-term losses could be the result of prudent risk control. A more rational evaluation should include slippage, risk-adjusted returns, adherence to user objectives, and abnormal behavior under extreme conditions.
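As a minimal sketch of what "written into the system" could mean, the check below runs before any order is sent and blocks it if a limit is violated. The `RiskLimits`, `Order`, and `check_order` names, the limit values, and the equity curve are assumptions made up for illustration; frequency limits and budget tracking are left out to keep the example short.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskLimits:
    max_order_usd: float
    max_leverage: float
    max_drawdown: float              # fraction of peak equity, e.g. 0.10 for 10%
    allowed_symbols: frozenset[str]


@dataclass(frozen=True)
class Order:
    symbol: str
    notional_usd: float
    leverage: float


def max_drawdown(equity_curve: list[float]) -> float:
    """Largest peak-to-trough decline, as a fraction of the running peak."""
    peak = equity_curve[0]
    worst = 0.0
    for value in equity_curve:
        peak = max(peak, value)
        worst = max(worst, (peak - value) / peak)
    return worst


def check_order(order: Order, limits: RiskLimits, equity_curve: list[float]) -> list[str]:
    """Return the violated rules; an empty list means the order may proceed."""
    violations = []
    if order.symbol not in limits.allowed_symbols:
        violations.append("symbol_not_allowed")
    if order.notional_usd > limits.max_order_usd:
        violations.append("order_too_large")
    if order.leverage > limits.max_leverage:
        violations.append("leverage_too_high")
    if max_drawdown(equity_curve) >= limits.max_drawdown:
        violations.append("drawdown_limit_hit")
    return violations


# Hypothetical limits and account history, for illustration only.
limits = RiskLimits(
    max_order_usd=1_000.0,
    max_leverage=3.0,
    max_drawdown=0.10,
    allowed_symbols=frozenset({"BTCUSDT", "ETHUSDT"}),
)
equity = [10_000.0, 10_500.0, 9_800.0, 10_100.0]  # drawdown of about 6.7%

accepted = check_order(Order("BTCUSDT", 500.0, 2.0), limits, equity)
rejected = check_order(Order("DOGEUSDT", 5_000.0, 10.0), limits, equity)
```

The same `max_drawdown` figure can feed the evaluation side as well, so that a profitable run that came close to the drawdown limit is scored differently from one that did not.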

Most importantly, trace.

Every agent trade generates a trace: market conditions, decision-making processes, tool calls, risk control checks, execution actions, trade results, and subsequent attribution. Ordinary trading logs record only what happened, while trading agent traces also document why the actions were taken and how the results turned out.

This is what we mean when we say that the Harness itself is a Dataset.

If a Trading Foundation Model emerges in the future, it will need not just market data and trade data, but trading trajectories that carry strategic intent, risk boundaries, execution paths, and result labels. Such data must come from real executions and must be captured in reusable schemas from day one. Otherwise, three years from now, all that will be left is a pile of untrainable logs.
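A reusable schema of this kind might look like the sketch below. It is not a proposed standard: the `TradeTrace` class, its field names, the `SCHEMA_VERSION` tag, and the sample values are all hypothetical, chosen only to show intent, risk boundaries, execution path, and result labels living in one versioned record.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Any

SCHEMA_VERSION = "0.1"  # hypothetical version tag, bumped whenever fields change


@dataclass
class TradeTrace:
    trace_id: str
    agent_id: str
    intent: str                                   # strategic intent, in plain words
    risk_limits: dict[str, Any]                   # risk boundaries in force
    market_snapshot: dict[str, Any]               # market conditions at decision time
    tool_calls: list[dict[str, Any]] = field(default_factory=list)   # execution path
    risk_checks: list[dict[str, Any]] = field(default_factory=list)
    result: dict[str, Any] = field(default_factory=dict)
    labels: dict[str, Any] = field(default_factory=dict)             # evaluation labels
    schema_version: str = SCHEMA_VERSION

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, raw: str) -> "TradeTrace":
        data = json.loads(raw)
        if data.get("schema_version") != SCHEMA_VERSION:
            raise ValueError(f"unsupported schema_version: {data.get('schema_version')!r}")
        return cls(**data)


# Sample record with made-up values.
trace = TradeTrace(
    trace_id="t-0001",
    agent_id="agent-demo",
    intent="reduce BTC exposure ahead of a volatility event",
    risk_limits={"max_order_usd": 1000.0, "max_leverage": 3.0},
    market_snapshot={"symbol": "BTCUSDT", "mid_price": 100.0},
)
trace.tool_calls.append({"tool": "place_order", "args": {"side": "sell", "notional_usd": 500.0}})
trace.risk_checks.append({"rule": "max_order_usd", "passed": True})
trace.result = {"filled_usd": 500.0, "avg_price": 99.9}
trace.labels = {"slippage_bps": 10.0, "followed_user_goal": True}

restored = TradeTrace.from_json(trace.to_json())
```

Carrying an explicit `schema_version` in every record is the design choice that matters here: it lets traces written today be read, migrated, or rejected deliberately later, instead of becoming logs that nobody can parse.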

This is also why the competition among trading agents may, in the long term, shift from API competition to execution data competition.

6. The Position of Exchanges and Bitget's Early Exploration

In the trading agent scenario, exchanges hold a unique position.

Model companies can provide reasoning, middleware companies can deliver workflows, wallets can offer signatures and on-chain permissions, and cloud platforms can provide runtime capabilities. However, centralized exchanges control another set of assets: custodial accounts, order and transaction data, market depth, risk control systems, clearing systems, permission structures, and 24/7 operational infrastructure.

These assets provide exchanges with structural opportunities to build Trading Agent Harness. They are closest to real execution and real results and are most likely to accumulate high-quality execution data.

Of course, this does not mean that exchanges will naturally win. Exchanges are typically not strong at building developer ecosystems, and their documentation and API experience are not necessarily agent-friendly. Compliance requirements may make product iteration more cautious, and user trust in automated trading has to be built gradually. More importantly, any use of trading data must be designed around strict authorization, anonymization, privacy, and compliance.

Therefore, if exchanges want to enter the agent era, they cannot just expose their APIs. They need to undergo a deeper upgrade: from execution backend to trusted harness layer.

Bitget is an early case in this direction. The Bitget Agent Hub has already begun to expand around MCP, API, Skills, CLI, and other modules, attempting to connect AI models, developer tools, and real trading execution so that developers and AI agents can access market data, run strategies, and execute trades. Bitget has also disclosed that the Agent Hub covers multiple capability modules and 58 tools, including spot, contracts, copy trading, and wealth management.

This indicates that Bitget has taken the first step: making the exchange's capabilities more visible and callable for agents.

However, from an industry perspective, this still represents an early stage. Tool exposure is just the starting point; the real watershed is ahead. Whether an exchange can become a Trading Agent Harness depends on whether it can resolve several deeper questions: can agents discover it by default, are the key and signature boundaries clear, are permissions sufficiently granular, are trading behaviors traceable, are results verifiable, can failures be written back into guardrails, and can execution traces form data assets?

If Bitget or other exchanges continue to evolve towards trace, evaluation, guardrails, KYA (Know Your Agent), and feedback loops, exchange competition may expand from liquidity, product depth, and user scale to agent-native trust and execution data.

This will be a more critical question than "who has more MCP and Skills."

Conclusion: The Next Generation of AI Infrastructure Is Agent Harness

The real change in the agent era is not that AI can speak better, but that AI starts to take action.

Once AI begins to take action, the infrastructure will be rewritten. An agent needs to discover tools, gain permissions, invoke systems, complete payments, execute actions, undergo audits, be evaluated, and improve from failures.

Together, these capabilities make up Agent Harness.

From an investment perspective, we are more focused on companies that can enter high-value workflows and continuously accumulate context, workflows, traces, evaluations, and outcome data. They may not look the hottest at first, nor have the most attractive frontend products, but if they position themselves correctly, they could become the true infrastructure of the agent era.

The trading agent is just one case. The same logic will play out in legal, medical, security, chip design, corporate compliance, and financial workflows.

Future AI competition will move through three phases.

The first phase focuses on whose model is stronger.

The second phase focuses on whose agent can more reliably perform real work.

The third phase focuses on whose executions can be crystallized into data assets that make future executions better.
