Author: New Intelligence Source

Introduction: Who would have thought that a startup founded by OpenAI's core team would be turned away by 21 top VCs? Five years later, that same crowd paid a 300-fold premium just to get in.

In 2021, Anjney Midha took Anthropic's business plan to 22 meetings with top VCs and was rejected 21 times.

Fast forward to January 2026, and Anthropic's latest round of financing reached $25 billion, with a valuation skyrocketing to $350 billion.

What does this mean? It is equivalent to roughly ten OpenAIs at that company's 2023 valuation.

The investment giants who welded the door shut in the name of "risk control" are probably crying their eyes out now.

This is not just a slap in the face; it is the most expensive collective "IQ tax" in this century.

21 Rejection Letters: The Top VCs' "Blind" Moment

Those who rejected Anthropic were all the "heroes" of the industry in Midha's eyes.

Look at Anthropic's lineup at the time: senior people who had left OpenAI, the very team that built GPT-3.

Today, a team like that would have money wired before the pitch deck was finished.

Midha thought it was a sure thing, but reality hit back hard.

Back in 2021, VCs saw large models as a bottomless pit.

On top of that, the Anthropic team had an almost obsessive commitment to "AI safety" and even a nonprofit background, which mainstream VCs could not make sense of. Traditional capital simply labeled them "high-risk outliers."

Only when Spark Capital led the Series C did this crowd finally wake up. Jason Shuman later had to admit:

The facts show that projects everyone can understand in the early stages rarely turn into great successes.

How much did this "cognitive dullness" cost?

In May 2021, Anthropic accepted a $124 million Series A led by Jaan Tallinn.

Set against today's $350 billion valuation, that $124 million round is nearly 3,000 times smaller, a rough measure of the upside those 21 institutions passed up.

Risk Control is the Biggest Risk

In this drama, Sequoia Capital gave a textbook demonstration of what it means to "lose out."

According to reports, Roelof Botha, then Sequoia's global head, repeatedly declined to lead the investment.

The reason sounded particularly grand: "concentration risk." The implication was that the firm was afraid of hanging its whole portfolio on a single AI tree and upsetting the balance of its asset allocation.

That kind of correct but empty traditional-finance talk is a disaster when applied to exponentially growing AI.

Sequoia changed its tune only after taking a hard slap. By early 2026, AI investment was contributing roughly 40% of U.S. GDP growth.

At that point, who still talks about allocation? These were life-saving assets! So Sequoia's management went through a major overhaul, and once Alfred Lin and Pat Grady took over, they quickly overturned Botha's conservative doctrine.

Roelof Botha publicly responded to the leadership change at Disrupt 2025 and defended Sequoia's "free speech" culture.

In January 2026, Sequoia finally gritted its teeth and squeezed into Anthropic's latest round.

The awkward part was that by then the valuation had surged from $1 billion at the Series A to $350 billion.

In the name of "risk avoidance," Sequoia stood outside watching for five years and ended up tearfully paying a "cognitive premium" of more than 300 times.

This is not just Sequoia's problem. The picture at the time was painful:
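
The multiples thrown around in this piece are easy to check. What follows is a minimal sketch that uses only the figures quoted in this article (the $124 million Series A, the $1 billion Series A valuation, and the $350 billion valuation in January 2026); the variable and function names are made up for illustration, and the figures themselves are not independently verified.

```python
# Figures as quoted in the article (assumptions, not independently verified).
SERIES_A_ROUND_USD = 124e6       # size of the May 2021 Series A
SERIES_A_VALUATION_USD = 1e9     # Series A valuation cited in the article
LATEST_VALUATION_USD = 350e9     # January 2026 valuation cited in the article


def multiple(later: float, earlier: float) -> float:
    """Return how many times larger `later` is than `earlier`."""
    return later / earlier


# Valuation-to-valuation comparison: the "over 300 times" figure.
valuation_multiple = multiple(LATEST_VALUATION_USD, SERIES_A_VALUATION_USD)

# Valuation-to-round-size comparison: the "nearly 3,000 times" figure.
round_size_multiple = multiple(LATEST_VALUATION_USD, SERIES_A_ROUND_USD)

print(f"Valuation vs. Series A valuation:  {valuation_multiple:,.0f}x")
print(f"Valuation vs. Series A round size: {round_size_multiple:,.0f}x")
```

The two numbers measure different things: the first compares valuation with valuation, while the second compares today's valuation with the amount raised in 2021. Neither accounts for dilution from later rounds, so an early investor's actual return would likely be lower than either headline multiple.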

Before Spark Capital entered the fray, the vast majority of VCs would rather back unremarkable SaaS products than touch Anthropic, which burns tens of billions in compute costs each year.

More than "investing wrongly," what these people feared was being the "early bird" who ends up as the punchline, caught "swimming naked" when the tide turns.

How "Non-Mainstream" Capital Outclassed the Field

While mainstream VCs were still calculating ROI, who saved Anthropic?

It was a group of "madmen."

Jaan Tallinn, who led the Series A in May 2021, is a co-founder of Skype and a fervent believer in AI safety. He turned Wall Street's investment logic completely upside down:

I'm not investing to profit from large models; I'm afraid that AI slipping out of control will kill humanity.

His logic was "fund replacement": using money that cares about human survival to crowd out money that only looks at financial statements.

Also investing at the time were former Google CEO Eric Schmidt and Facebook co-founder Dustin Moskovitz.

What these people had in common was obvious: they were wealthy, headstrong, technically savvy, and had no LPs to answer to.

This also explains why the AI safety obsession that institutional investors saw as "poison" in 2021 was seen by real technology heavyweights as the strongest moat.

Without money like Tallinn's, money that was "buying for the survival of humanity," Anthropic would probably have died at the Series A stage.

It was this lifeline that let them survive two years without commercial pressure and settle the core logic of the Claude series models.

Ironically, the money that was initially considered "charity" earned exploding returns in 2026.

The Harsh Truth of 2026: Not Investing in AI is Waiting to Die

By 2026, capital was scrambling frantically for Anthropic not just for profit but for survival.

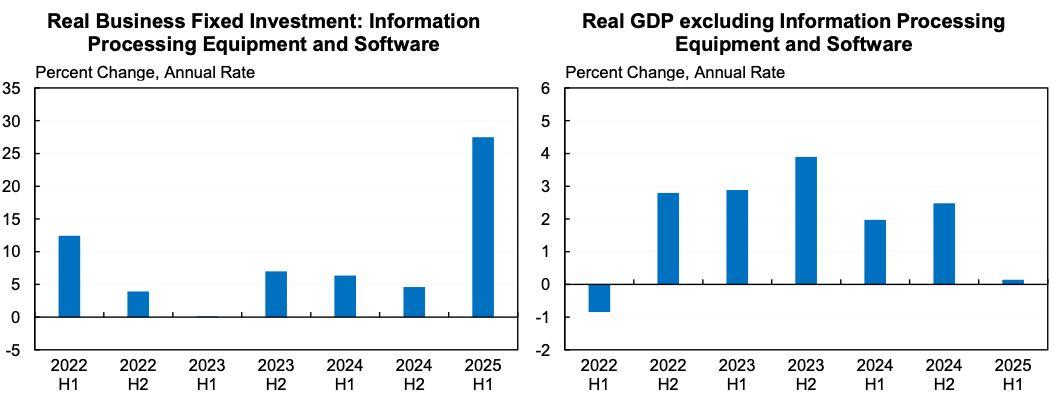

Macroeconomic data show that if you strip out AI, U.S. GDP growth would fall below 0.7%.

AI is no longer a trend; it is the only life support for the American economy. Analyst Siddharth's metaphor is blunt:

Pull out AI's oxygen tube, and the economy's heart stops immediately.

In the first half of 2025, once information processing equipment and software (IPE&S, i.e., AI infrastructure investment) was stripped out, real U.S. GDP growth was close to 0%. Over the same period, IPE&S investment surged by 28%.

Venture capital logic has completely changed. In 2026, capital began shifting en masse from general-purpose models to vertical AI agents.

Amit Goel pointed out that VCs finally discovered that companies with deep roots in a vertical field, and no need to write code, were the new gold mines.

This is yet another ironic cycle.

In 2021, VCs rejected Anthropic because they couldn't understand "safety" and "large models."

In 2026, they are once again being left behind by a new generation of boutique funds because they can't understand "vertical domain knowledge."

This five-year cognitive war proves that capital never creates the future; it only spends big to buy a standing ticket when the future becomes unavoidable.

From 21 rejection letters to a $350 billion valuation, Anthropic has torn away the venture capital world's most respectable facade with hard numbers.

And now that AI has become the only pillar holding up GDP growth, capital's entry is no longer about insight; it is purely survival instinct.

Stop mythologizing VCs' foresight. Those 21 rejection letters are concrete evidence: the vast majority of that $350 billion is the "cognitive tax" paid by the latecomers.

This is the reality: either you understood it and bet on it in 2021, or you kneel and pay up in 2026.
