You thought Qwopus was cool because it merged Qwen and Opus? Well, Kyle Hessling, an AI engineer with a lot of knowledge and free time, just took that recipe and threw GLM, one of the best reasoning models out there, into the mix. The result is an 18-billion-parameter frankenmerge that fits on a cheap GPU and outperforms Alibaba's newest 35B model.

For those who don't know, parameters are the numerical values baked into a neural network during training, like dials that a neural network can adjust — the more of them, the more knowledge and complexity the model can handle, and the more memory it needs to run.
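As a rough illustration of why parameter count drives memory use, the sketch below estimates the size of a model's weights from its parameter count and the number of bits stored per weight. The function name and the bit widths are illustrative assumptions, not figures from Hessling's release, and real runtimes also need memory for activations and the attention cache, so actual usage is higher.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    # Illustrative bit widths only; real quantization formats mix precisions.
    for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
        size = weight_memory_gb(18e9, bits)
        print(f"18B parameters at {label}: ~{size:.0f} GB")
```

Halving the bits per weight halves the footprint, which is why aggressive quantization is what lets a model of this size fit on a consumer GPU at all.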

Hessling, an AI infrastructure engineer, stacked two of Jackrong's Qwen3.5 finetunes on top of each other: layers 0 through 31 from Qwopus 3.5-9B-v3.5, which distills Claude 4.6 Opus's reasoning style into a Qwen base model, and layers 32 through 63 from Qwen 3.5-9B-GLM5.1-Distill-v1, trained on reasoning data from z.AI's GLM-5.1 teacher model on top of the same Qwen base.

The hypothesis: Give the model Opus-style structured planning in the first half of the reasoning and GLM's problem decomposition scaffold in the second—64 layers total, in one model.

The technique is called a passthrough frankenmerge—no blending, no averaging of weights, just raw layer stacking. Hessling had to write his own merge script from scratch because existing tools don't support Qwen 3.5's hybrid linear/full attention architecture. The resulting model passed 40 out of 44 capability tests, beating Alibaba's Qwen 3.6-35B-A3B MoE—which requires 22 GB of VRAM—while running on just 9.2 GB in Q4_K_M quantization.
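To make "raw layer stacking" concrete, here is a minimal sketch of a passthrough merge on toy data. It is not Hessling's script: the `model.layers.N.` key format is an assumption borrowed from common checkpoint naming, the strings stand in for real weight tensors, the choice to take embeddings from the first model is arbitrary, and nothing here handles the hybrid linear/full attention layout mentioned above.

```python
import re

# Assumed key format for decoder layers: "model.layers.<index>.<rest>"
LAYER_KEY = re.compile(r"^(model\.layers\.)(\d+)(\..+)$")


def passthrough_stack(front: dict, back: dict, layers_per_model: int) -> dict:
    """Place every decoder layer of `back` after the layers of `front`.

    Weights are copied unchanged (no blending or averaging); only the layer
    index in each key from `back` is shifted by `layers_per_model`.
    Non-layer entries (embeddings and so on) come from `front`.
    """
    merged = dict(front)  # front layers keep their original indices
    for key, tensor in back.items():
        match = LAYER_KEY.match(key)
        if match is None:
            continue  # skip non-layer entries from the second model
        prefix, index, suffix = match.groups()
        merged[f"{prefix}{int(index) + layers_per_model}{suffix}"] = tensor
    return merged


def toy_model(tag: str, n_layers: int) -> dict:
    """Hypothetical stand-in for a checkpoint: strings instead of tensors."""
    state = {"model.embed_tokens.weight": f"{tag}-embed"}
    for i in range(n_layers):
        state[f"model.layers.{i}.mlp.weight"] = f"{tag}-L{i}"
    return state


if __name__ == "__main__":
    merged = passthrough_stack(toy_model("opus", 2), toy_model("glm", 2), 2)
    for key in sorted(merged):
        print(key, "<-", merged[key])
```

With two 32-layer sources and `layers_per_model=32`, the same renumbering would yield merged layers 0 through 63, assuming each 9B finetune contributes all of its layers.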

An NVIDIA RTX 3060 handles it fine… theoretically.

Hessling explains that making this model wasn't easy. The raw merge initially produced garbled code. Even so, the test models he published went somewhat viral among enthusiasts.

Hessling's final fix was a "heal fine-tune": basically a QLoRA pass (a technique that freezes a quantized copy of the model and trains small adapter matrices attached to it, which then steer the final output) targeting all attention and projection layers.
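For a sense of why this kind of fine-tune is cheap, the sketch below counts the trainable parameters in a single LoRA adapter, the low-rank component that QLoRA trains on top of frozen, quantized weights. The layer size (4096 x 4096) and rank (16) are illustrative assumptions, not values from Hessling's training run.

```python
def lora_adapter_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters in one adapter: A is (rank x d_in), B is (d_out x rank)."""
    return rank * d_in + d_out * rank


def full_layer_params(d_in: int, d_out: int) -> int:
    """Parameters in the frozen weight matrix the adapter is attached to."""
    return d_in * d_out


if __name__ == "__main__":
    d_in, d_out, rank = 4096, 4096, 16  # assumed sizes, for illustration only
    adapter = lora_adapter_params(d_in, d_out, rank)
    full = full_layer_params(d_in, d_out)
    print(f"adapter: {adapter:,} params, full layer: {full:,} params")
    print(f"trainable share: {adapter / full:.2%}")
```

Under these assumed sizes the adapter holds less than 1% of the parameters of the layer it modifies, which is what makes a short healing run practical on modest hardware.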

We tried it, and even though the idea of having Qwen, Claude Opus, and GLM 5.1 running locally on our potato is beyond tempting, in practice we found that the model is so good at reasoning through things that it ends up overthinking.

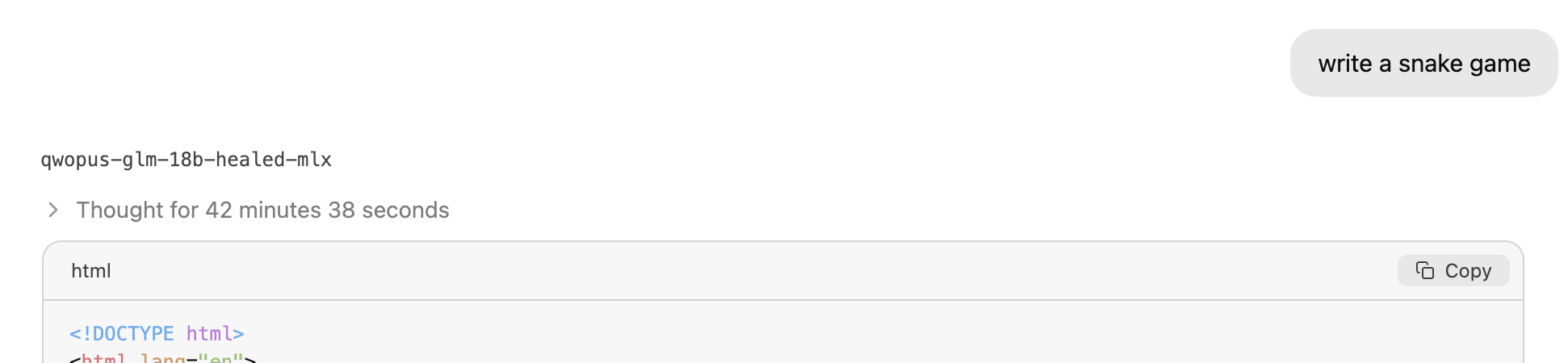

We tested it on an M1 MacBook running an MLX quantized version (a model optimized to run on Macs). When prompted to generate our usual test game, the reasoning chain ran so long that it hit the token limit, leaving us with a long piece of reasoning and no working result in a zero-shot interaction. That's a daily-use blocker for anyone wanting to run this locally on consumer hardware for any serious application.

We tried a simpler prompt and things were still challenging. A basic "write a Snake game" request took over 40 minutes of reasoning... lots of it.

You can see the results in our GitHub repository.

This is a known tension in the Qwopus lineage: Jackrong's v2 finetunes were built to address Qwen 3.5's tendency toward repetitive internal loops and to "think more economically." Stacking 64 layers of two reasoning distills appears to amplify that behavior on certain prompts.

That's a solvable problem, and the open-source community will likely solve it. What matters here is the broader pattern: a pseudonymous developer publishes specialized finetunes with full training guides, another enthusiast stacks them with a custom script, runs 1,000 healing steps, and lands a model that outperforms a 35-billion-parameter release from one of the world's largest AI labs. The whole thing fits in a small file.

This is what makes open source worth watching: not just the big labs releasing weights, but the layer-by-layer solutions, the specialization happening below the radar. The gap between a weekend project and a frontier deployment narrows as more developers join the community.

Jackrong has since mirrored Hessling's repository, and the model had accumulated over three thousand downloads within its first two weeks of availability.
