Author: Vince Ultari

Compiled by: Shenchao TechFlow

Shenchao Guide: With the same subscription fee of 20 dollars, which should you choose, ChatGPT Plus or Claude Pro? This author bought both and compared them side by side for 30 days. The conclusion is contrary to common sense: there is no winner. ChatGPT is the all-purpose Swiss Army knife, offering a large message quota, image generation, and voice; Claude is a scalpel for deeper writing and coding, but its usage limits are tightly constrained. If you are willing to spend 40 dollars a month, subscribing to both is the optimal solution for 2026.

The short version: ChatGPT Plus and Claude Pro both cost 20 dollars per month. ChatGPT gives you a larger message quota, image generation, voice mode, and the most comprehensive feature set; Claude provides better writing, deeper reasoning, a larger context window, and the coding Agent that performed best in blind tests. Neither company wins outright. Which one to choose depends on whether you want a Swiss Army knife or a scalpel. Most heavy users will be subscribing to both in 2026. The most important part to read is the coding comparison below, where the biggest differences lie. Not suitable for: readers expecting a clean answer, because there isn't one here.

Everyone is asking the same question: which to choose in 2026, ChatGPT or Claude? Both are 20 dollars per month, with the same price and the same promises, but the experiences are completely different.

Opinions vary online. Reddit is buzzing, and red arrows on YouTube thumbnails point to various benchmark charts. The vast majority are useless because they compare specs on paper rather than performance in actual work.

What I did was this: I used ChatGPT Plus and Claude Pro together for 30 days. The same prompts, the same tasks, the same expectations. The final conclusion is not the kind that both marketing teams would write.

Every Pricing Tier Calculated for You

The 20 dollar tier is the starting point for most people. But the other tiers above and below this line reveal how both companies define their target users.

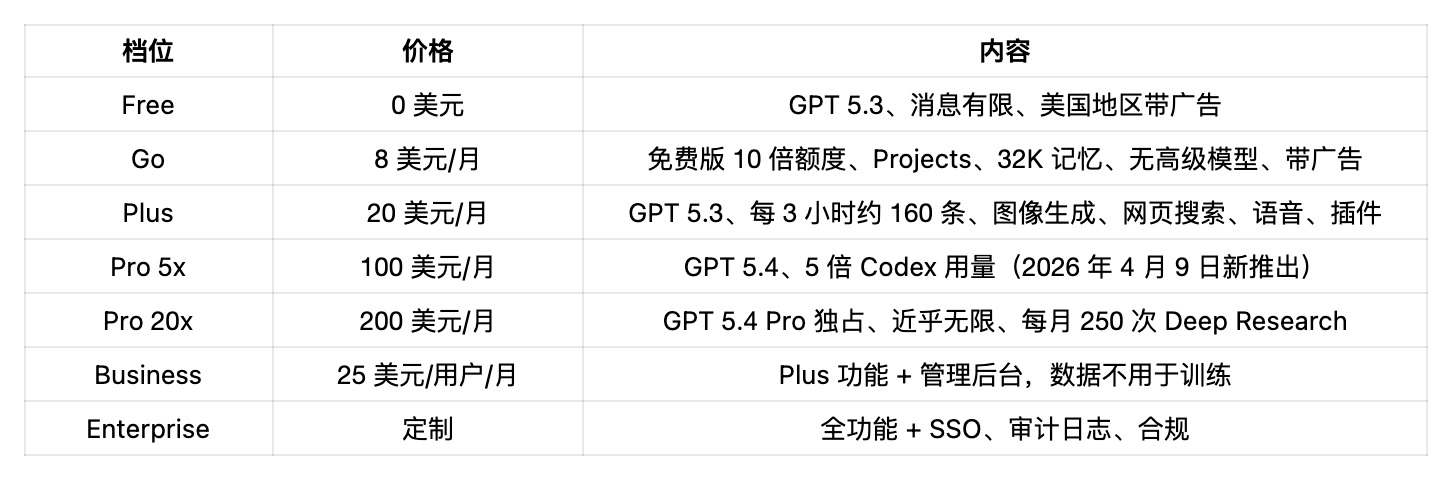

ChatGPT Pricing Tiers (April 2026)

On April 9, OpenAI split Pro into two tiers. The new Pro 5x is priced at 100 dollars, directly competing with Claude Max: same price point, same positioning, more Codex usage. The 200 dollar Pro 20x retains exclusive access to the GPT 5.4 Pro model.

The Go tier at 8 dollars removes advanced reasoning, Codex, Agent Mode, Deep Research, and Tasks. What remains is an ad-supported, enhanced free version with a larger quota. If you just want a better chatbot and don't need productivity tools, it's sufficient. But anyone reading a comparison this detailed needs to be on Plus.

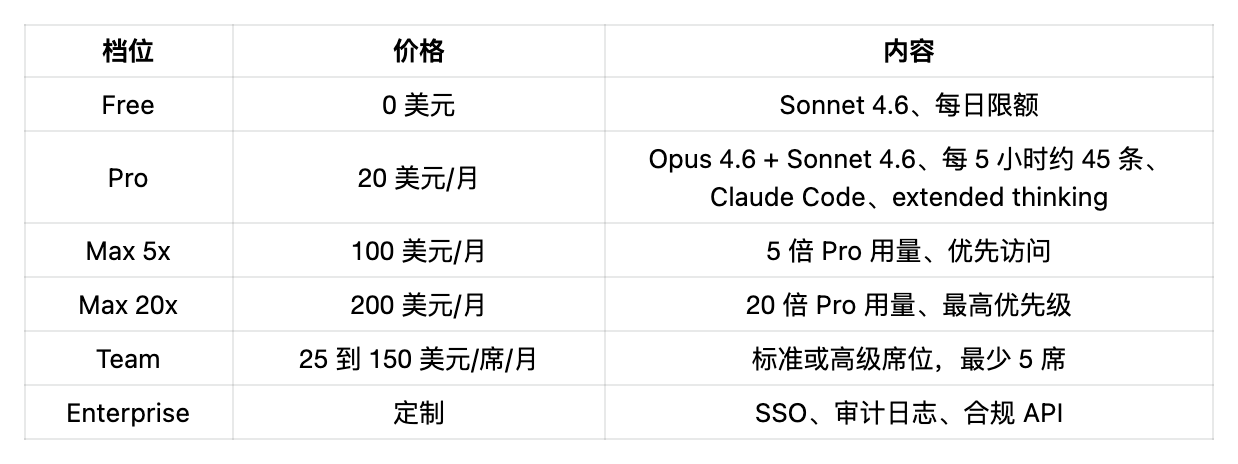

Claude Pricing Tiers (April 2026)

Anthropic does not have a cheap tier. It's either free or starts at 20 dollars. The Max tier exists because Claude Pro’s usage limits are really tight: a single complex Claude Code session can burn 50% to 70% of the 5-hour quota. That’s no small complaint. This is the number one grievance in every Claude community.

100 Dollar Tier: Direct Confrontation

OpenAI's new Pro 5x and Anthropic's Max 5x now compete head to head at 100 dollars: same price, same customer base. OpenAI gives you GPT 5.4 plus five times the Codex usage (raised to ten times until May 31 as an onboarding benefit). Anthropic gives you five times the Pro usage plus priority access. For developers, the additional Codex usage in the 100 dollar tier is the more practical benefit. For everyone else, Claude's output quality per message is already higher, so with five times the usage it may be the more cost-effective choice.

Same 20 Dollars, Who Gives More?

ChatGPT Plus: About 160 messages every 3 hours under GPT 5.3. Across the eight 3-hour windows in a full 24-hour day, that is a ceiling of about 1,280 messages; over an 8-hour workday it is closer to 430.

Claude Pro: About 45 messages every 5 hours, roughly 200 messages a day. However, this number drops sharply with longer conversations, file attachments, and Claude Code usage. PYMNTS reports that AI usage rationing has become the new normal, and Claude is a typical representative.
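The daily figures for both plans come from simple window arithmetic. The sketch below reproduces them, assuming fixed back-to-back windows that are always fully used (a simplification; real rolling limits, long conversations, and attachments will lower the totals). The function name and parameters are illustrative and not part of either product.

```python
def messages_per_day(messages_per_window: int,
                     window_hours: int,
                     hours_per_day: int = 24) -> float:
    """Upper bound on messages if every quota window is fully used.

    Assumption: windows are fixed and back to back, which is only an
    approximation of how either service actually meters usage.
    """
    return messages_per_window * hours_per_day / window_hours


# Quotas quoted in the article.
chatgpt_plus_24h = messages_per_day(160, 3)                    # 1280.0
claude_pro_24h = messages_per_day(45, 5)                       # 216.0

# The same quotas over an 8-hour workday instead of a full day.
chatgpt_plus_8h = messages_per_day(160, 3, hours_per_day=8)    # about 427
claude_pro_8h = messages_per_day(45, 5, hours_per_day=8)       # 72.0

print(f"ChatGPT Plus: {chatgpt_plus_24h:.0f}/24h, {chatgpt_plus_8h:.0f}/8h")
print(f"Claude Pro:   {claude_pro_24h:.0f}/24h, {claude_pro_8h:.0f}/8h")
```

Under these assumptions, the 1,280 figure corresponds to a full 24-hour day, and the ratio between the two plans stays at roughly six to one whichever time span you pick.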

In terms of message volume alone, ChatGPT Plus wins, and it's not by a small margin.

But volume does not equal quality. The complexity lies here.

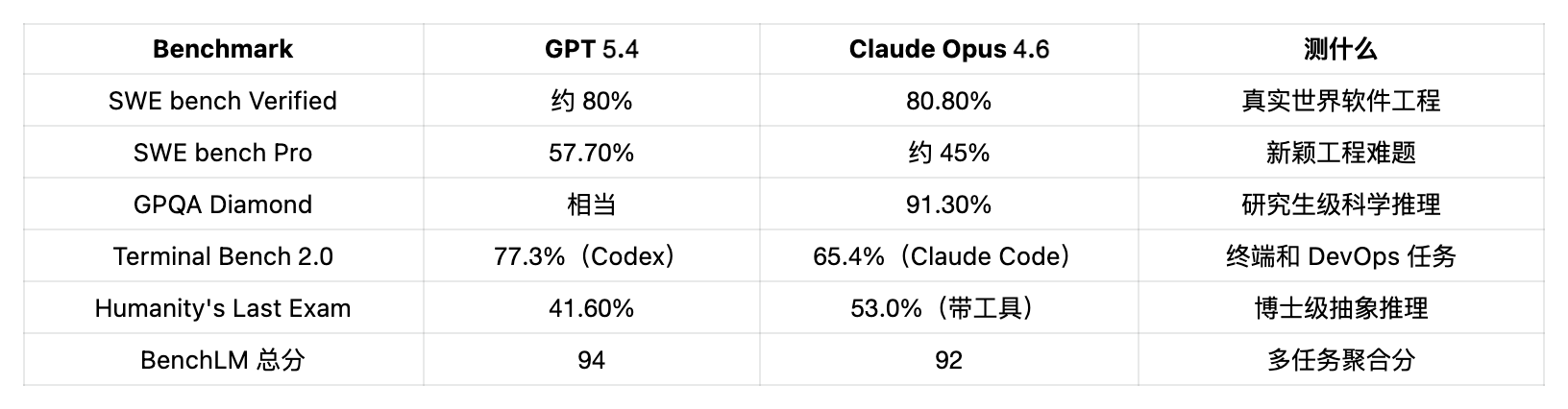

Model Showdown: GPT 5.4 vs Claude Opus 4.6

Both companies released significant updates at the beginning of 2026. The actual situation now is as follows:

(Source: BenchLM, Scale Labs HLE, Terminal Bench)

In practice, GPT 5.4 wins in breadth (comprehensive scoring, terminal tasks), while Claude Opus 4.6 wins in depth (complex coding, scientific reasoning, problem-solving with tool support). Neither crushes the other across the board; each has been optimized for a different type of intelligence.

Additionally, the 200K token context window in Claude's consumer tiers is noticeably larger than ChatGPT's 128K. When loading entire codebases, long documents, or research papers, the difference becomes apparent. Claude made the 1M context fully available on March 13 at a flat rate. GPT 5.4's 1M context is only supported via API, and the price doubles beyond 272K tokens.

Both Are Echo Chambers, Neither Has Fixed Issues

A Stanford study published in Science in March tested 11 mainstream models, including GPT 5, Claude, and Gemini. It concluded that AI chatbots affirm user input 49% more often than humans do, even when the user is clearly wrong. Users who receive affirming replies are also significantly less likely to apologize or reconsider their stance.

This is not a problem with ChatGPT or Claude. It’s a problem for the entire industry. We’ve written separately about the complete study and its implications.

The Stanford HAI 2026 report measured 26 models, with hallucination rates ranging from 22% to 94%. GPT 4o's accuracy dropped from 98.2% to 64.4% under adversarial conditions. The conclusion is the same for both tools: verify every output.

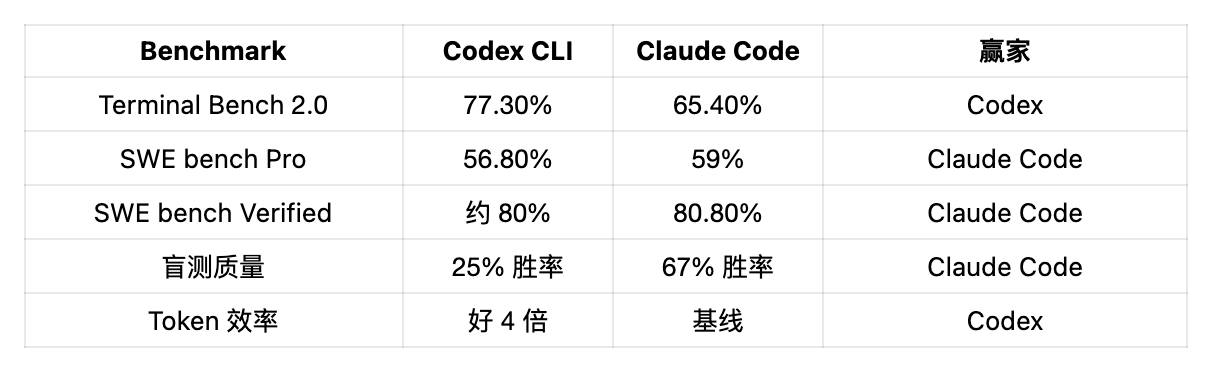

Claude Code vs Codex: The Most Contentious Battlefield

If you're writing code, this section is more important than everything above combined.

A survey of over 500 Reddit developers showed that 65% prefer Codex CLI. However, in 36 rounds of blind tests—where developers did not know which tool produced the code—Claude Code won 67% of the time, while Codex won 25%.

This gap between preference and quality explains the whole issue.

Why Developers Prefer Codex

The first reason is token efficiency. Codex consumes about a quarter of the tokens that Claude Code does per task. In one benchmark, Claude Code used 6.2 million tokens for a task, while Codex used only 1.5 million for the same task. At API prices, that works out to about 15 dollars for Codex and about 155 dollars for Claude Code: roughly a tenfold cost difference for the same output.
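The token gap and the cost gap in that benchmark are not the same size, and a few lines of arithmetic show why. The sketch below uses only the four figures quoted above; the "blended price per million tokens" it derives is an implied average, not an official price, and the function name is illustrative.

```python
def blended_price_per_million(cost_usd: float, tokens: int) -> float:
    """Implied average price per million tokens for a whole task."""
    return cost_usd * 1_000_000 / tokens


# Benchmark figures quoted in the article.
claude_code_tokens, claude_code_cost = 6_200_000, 155.0
codex_tokens, codex_cost = 1_500_000, 15.0

token_ratio = claude_code_tokens / codex_tokens   # about 4.1x more tokens
cost_ratio = claude_code_cost / codex_cost        # about 10.3x more dollars

claude_blended = blended_price_per_million(claude_code_cost, claude_code_tokens)
codex_blended = blended_price_per_million(codex_cost, codex_tokens)
price_ratio = claude_blended / codex_blended      # 2.5x higher per token

print(f"tokens: {token_ratio:.1f}x, cost: {cost_ratio:.1f}x")
print(f"implied price per 1M tokens: {claude_blended:.0f} vs {codex_blended:.0f} dollars")
```

In other words, the roughly tenfold cost difference is the product of two effects: about four times as many tokens, each costing about two and a half times as much on average.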

@theo tweeted: “Anthropic sent a DMCA complaint against my Claude Code fork project.

…The project doesn’t even contain Claude Code's source code. I just modified a skill PR a few weeks ago.

It’s truly sad.”

The second reason is usage limits. On the 20 dollar Plus tier, Codex users report they can write code all day without hitting barriers. Claude Code users report that just one or two complex prompts can burn through their 5-hour quota. A comment on Reddit with 388 upvotes put it bluntly: one complex prompt can consume 50% to 70% of the limit.

The Claude Code Desktop Version Adds More Chaos

The situation is getting worse. The newly released Claude Code desktop version is redesigned to add multi-session support, meaning that four instances of Claude can run simultaneously. The problem is: each session has its own independent context window. Each of the four sessions loading 100,000 tokens of context adds up to 400,000 tokens. Users on X report that the entire 5-hour quota can be burned within 4 to 8 minutes. Anthropic engineers themselves described this rewrite as “a complete redo,” while community feedback labels it “burns tokens faster.”

@theo tweeted: Claude Code is now basically unusable. I’m done.

Lastly, there's speed. Codex focuses on autonomous execution: set the task, hand it over, and come back to look at the results. In February, OpenAI launched a Codex desktop application (macOS) that organizes tasks in a cloud sandbox by project. GPT 5.3 Codex Spark running on Cerebras can execute over 1000 tokens per second, which is 15 times the standard speed.

Why Claude Code Won Blind Tests

Looking at code quality from the opposite angle, the story is completely different. Claude Code produces more comprehensive and more deterministic results and can catch edge cases. In a widely referenced example, Claude Code identified a race condition that Codex completely missed.

The depth of reasoning is also notable. Claude Code acts more like a collaborative partner, reviewing changes step by step with you, asking clarifying questions, and explaining trade-offs. This is vital for complex refactoring and architectural decision-making.

In terms of features, Claude Code has hooks, rewind, Chrome extensions, plan mode, and the most mature MCP ecosystem in the industry. Codex features reasoning levels (low, medium, high, minimal), cloud sandbox execution, and background tasks. OpenAI even released an official Codex Plugin for Claude Code, allowing developers to assign tasks to different Agents within the same split screen terminal. Both tools are converging towards a technology stack that no one has fully planned, but everyone is using.

The shorthand in the developer community is: “Codex handles the keystrokes, Claude Code handles the commits.”

For quick iterations, boilerplate code, and tasks sensitive to speed and token cost, use Codex. For high-risk scenarios, switch to Claude Code: production deployments, security-sensitive code, or complex debugging where missing an edge case could get you paged at midnight.
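If you script your workflow, the rule of thumb above can be written down as a small routing table. This is only a sketch of the article's heuristic: the category names and the `pick_tool` function are hypothetical and do not correspond to any API in Codex or Claude Code.

```python
# Hypothetical task categories, paraphrasing the rule of thumb above.
FAST_AND_CHEAP = {"quick iteration", "boilerplate", "cost-sensitive"}
HIGH_RISK = {"production deployment", "security-sensitive", "complex debugging"}


def pick_tool(category: str) -> str:
    """Return the tool the article's heuristic would suggest for a task."""
    if category in HIGH_RISK:
        return "Claude Code"
    if category in FAST_AND_CHEAP:
        return "Codex"
    # The article gives no rule for anything else.
    return "either"


for category in ("boilerplate", "security-sensitive", "documentation"):
    print(f"{category}: {pick_tool(category)}")
```

High-risk categories are checked first so that a task tagged as risky is never routed to the cheaper tool by accident.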

The biggest complaint about Claude Code is rate limiting. The biggest complaint about Codex is instability in long conversations. Pick one poison, or subscribe to both for 40 dollars a month, and avoid both issues.

(How Claude Code can fit into a more complete productivity stack can be seen in our GitHub repository guide.)

Feature Comparison: Skipping Scores

Writing Quality

Claude wins, and the gap is not small. In a blind test with 134 participants, Claude won 4 out of 8 rounds, while ChatGPT only won 1 round. Claude's writing rhythm is more natural, paragraph transitions are better, and the vocabulary range is wider. ChatGPT writes competently but feels formulaic. Generating a paragraph with ChatGPT and then editing out the AI flavor takes longer than writing it yourself.

In any situation where voice and nuance are important—marketing copy, editorial content, creative writing—choose Claude. For quick drafts, brainstorming, and large-scale structured content, choose ChatGPT.

Image Generation

ChatGPT wins by default. Claude does not have native image generation. That's it. ChatGPT’s DALL-E integration and native image capabilities in GPT 5 allow you to generate, edit, and iterate images directly within conversations. If visual content is a part of your workflow, this is enough to determine the winner.

Web Search and Research

Both have built-in web search. ChatGPT's integration is smoother, and the returns are faster. Claude offers a more layered and structured synthesis of the content found. When conducting deep research that requires holding multiple sources simultaneously, Claude's larger context window holds an advantage. Use ChatGPT for quick information checks.

Voice Mode

ChatGPT's advanced voice mode is clearly superior. Real-time dialogue, emotional tone variation, and interruption handling are significantly better. Claude's voice capabilities are relatively rudimentary. If voice interaction is important, ChatGPT is the only real option among the consumer tiers.

Memory

ChatGPT maintains a persistent memory across conversations and can set custom instructions. Claude has Projects (grouping conversations by shared context) and a memory function that is improving but still not mature enough. In actual experience, ChatGPT better “remembers you” long-term, while Claude better recalls your project context within a single conversation.

Computer Operations

Claude’s Cowork and Dispatch allow it to operate your desktop directly: click, input, switch between applications. It’s still in early stages but already functional. The computer operations done by ChatGPT through Codex are limited to cloud sandboxes. For desktop automation, Claude’s approach is more aggressive.

API and Developer Tools

Claude API pricing: Opus 4.6 costs 5/25 dollars (input/output) per million tokens, Sonnet 4.6 costs 3/15 dollars, and Haiku 4.5 costs 1/5 dollars. OpenAI's GPT 5.3 Codex Mini costs 1.50/6.00 dollars per million tokens, which makes high-volume API usage significantly cheaper.
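To see what those per-million prices mean for a concrete job, here is a minimal cost calculator. The prices are the ones quoted above; the workload (1 million input tokens, 200,000 output tokens) is a made-up example, and `api_cost` is an illustrative helper, not a vendor SDK call.

```python
# (input, output) price in dollars per million tokens, as quoted in the article.
PRICES = {
    "Opus 4.6": (5.00, 25.00),
    "Sonnet 4.6": (3.00, 15.00),
    "Haiku 4.5": (1.00, 5.00),
    "GPT 5.3 Codex Mini": (1.50, 6.00),
}


def api_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of one workload at the listed per-million-token prices."""
    price_in, price_out = PRICES[model]
    return (input_tokens * price_in + output_tokens * price_out) / 1_000_000


# Hypothetical workload: 1M input tokens and 200K output tokens.
for model in PRICES:
    print(f"{model}: {api_cost(model, 1_000_000, 200_000):.2f} dollars")
```

The result depends heavily on the input/output mix, because output tokens cost four to five times as much as input tokens for every model listed.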

Claude’s MCP ecosystem is more mature for Agent workflows. If you’re exploring open-source Agent alternatives, OpenClaw is worth checking out. OpenAI adopted Anthropic's MCP standard at DevDay in October 2025. This protocol created by Anthropic is now used by over 70 AI clients across both platforms.

The Same Prompt, Two Answers

“Write me a 1500-word blog about remote work trends”

ChatGPT delivers a well-structured, slightly generic article in about 45 seconds. The subheadings are tidy, the logic flows, and the basics are covered. It reads like a competent product from a content factory.

Claude’s output presents clearer viewpoints and more specific details, reading less like a product of a committee. It takes about 60 seconds to deliver. There are fewer edits needed before sending it out.

“Analyze this 40-page PDF and summarize key findings”

Claude performs better because its 200K context window can fit the entire document in one go, and when cross-referencing different sections, it doesn’t lose the thread. ChatGPT can run it but starts losing context on long documents with cross-page references.

“Help me debug this infinite re-rendering React component”

Both can pinpoint the missing dependency array in useEffect. However, Claude’s response also explains why it enters a re-render cycle and gives broader architectural refactoring suggestions. ChatGPT provides quicker fixes but with less context.

“Help me plan a 6-month product roadmap for a SaaS startup”

This is where the differences in usage limits hurt. ChatGPT lets you iterate repeatedly: draft, rewrite, refactor, regenerate, without worrying about quota after 30 exchanges. Claude’s roadmap will be deeper—more reasonable prioritization, a more realistic timeline, sharper trade-off analysis—but you may hit your limit after three or four rounds of edits.

“Summarize this 80-page legal contract and highlight high-risk clauses”

Claude pulls ahead here. Its context window can accommodate the entire contract, cross-referencing clause 47 with the indemnity clause on page 12 without losing any clues. ChatGPT’s 128K handles most contracts adequately, but very long or citation-dense documents will start losing context.
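A back-of-the-envelope estimate helps judge whether a document fits in a given context window. The sketch below assumes about 500 words per page and about 1.3 tokens per English word; both are rough rules of thumb chosen for illustration, not figures from either vendor, and real tokenizers, dense legal formatting, and the conversation itself will shift the numbers.

```python
WORDS_PER_PAGE = 500     # assumption: a fairly dense page of prose
TOKENS_PER_WORD = 1.3    # assumption: rough average for English text


def estimated_tokens(pages: int) -> int:
    """Very rough token count for a document of the given length."""
    return round(pages * WORDS_PER_PAGE * TOKENS_PER_WORD)


def fits(pages: int, context_window: int) -> bool:
    """True if the estimated document size is within the context window."""
    return estimated_tokens(pages) <= context_window


# Context window sizes quoted in the article.
CLAUDE_WINDOW = 200_000
CHATGPT_WINDOW = 128_000

for pages in (40, 80, 250):
    print(pages, "pages:", estimated_tokens(pages), "tokens,",
          "Claude fits" if fits(pages, CLAUDE_WINDOW) else "Claude overflows", "/",
          "ChatGPT fits" if fits(pages, CHATGPT_WINDOW) else "ChatGPT overflows")
```

Under these assumptions an 80-page contract is well within both windows, which is consistent with the observation that 128K handles most contracts; the larger window matters for much longer documents or when the prompt, instructions, and follow-up questions also have to share the space.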

Who Should Choose Which

Choose ChatGPT Plus if: you need image generation, prefer voice interaction, place more value on message volume rather than individual quality, intend to use multiple AI features daily (search, image, voice, plugins), are looking for the most affordable entry tier (the 8 dollar Go), and require the broadest plugin ecosystem.

Choose Claude Pro if: you make a living through writing, care about output quality, are doing serious coding and want to use Claude Code, frequently handle long documents (200K context), value depth of reasoning more than breadth of functionality, can accept tighter usage limits, and want the best MCP and Agent workflow tools.

If you can spend 40 dollars a month, subscribing to both is what more and more people are doing: Codex for speed + Claude Code for quality, Claude for first drafts + ChatGPT for illustrations, with each task assigned to the tool that excels at it.

This mixed-use approach is becoming the norm among heavy users. In March 2026, the search volume for “Claude vs ChatGPT” reached an average of 110,000 per month, an elevenfold increase year-on-year. People are not just curious anymore; they are choosing daily tools, and many find the final answer is to use both.

If you are building automation workflows around these two tools, the question has shifted from “which AI to choose” to “which tasks to assign to which AI.” This is the true answer for 2026.

The Bottom Line

ChatGPT is the Swiss Army knife. It can do everything: text, images, voice, search, plugins, Agents. None of its functions are top-notch, but none are bad either. If you want to cover all AI scenarios with one subscription, it is the most stable choice.

Claude is the scalpel. It does fewer things, but in the few it does (writing, coding, reasoning, long-context analysis), ChatGPT can't keep up. The trade-offs are real: tighter limits, no image generation, underdeveloped voice capabilities, and a narrower feature set.

If I must choose one for 20 dollars based on usage: for writing? Claude. For creative tasks? ChatGPT. For development? Start with Claude Code and switch to Codex if you hit limits. On a tight budget? ChatGPT's Go tier at 8 dollars is the cheapest usable AI assistant entry point.

The best answer for April 2026 is as uncomfortable as ever: it depends.

But now you know what specifics to look for.

FAQ

Which is better for coding in 2026, ChatGPT or Claude?

Claude Code won 67% of blind tests, and its SWE bench Verified score is also higher (80.8% vs about 80%). However, Codex CLI consumes about a quarter of the tokens per task, and its usage limits in the 20 dollar tier are much more generous. Choose Claude for code quality, choose Codex for cost and throughput. Many professional developers use both.

How many messages do ChatGPT Plus and Claude Pro provide per month?

Both plans meter usage in rolling windows rather than per month. ChatGPT Plus allows about 160 messages every 3 hours under GPT 5.3. Claude Pro allows about 45 messages every 5 hours, and that number drops significantly with lengthy conversations, attachments, and Claude Code usage. At the same price point, ChatGPT's raw message volume is significantly higher.

Is the 8 dollar Go tier of ChatGPT worth it?

The Go tier gives you 10 times the quota of the free version, project organization, and a 32K memory window for 8 dollars a month. However, it lacks advanced reasoning models, Codex, Agent Mode, Deep Research, and Tasks, and it comes with ads. It suffices if you just want a better chatbot without productivity features.

Can Claude generate images like ChatGPT?

No. As of April 2026, Claude does not have native image generation capabilities. ChatGPT integrates DALL-E and native image generation. If image generation is a part of your workflow, you can only choose ChatGPT.

Are AI chatbots sycophants?

Yes. A Stanford study published in Science in March 2026 tested 11 mainstream models and found that AI affirms user input 49% more often than humans do, even when the user is wrong. This is a widespread issue in the industry, not specific to any one company.

Which AI is better for writing in 2026?

Claude is the consensus choice among professional writers. It generates more natural-sounding results, better transitions, and richer vocabulary. Choose Claude for any context where voice is important and choose ChatGPT for batch structured content.

Should I subscribe to both ChatGPT and Claude?

If you can commit 40 dollars a month, subscribing to both provides access to each one's strongest aspects. Assign writing and complex coding to Claude, and handle images, voice, quick queries, and high-volume tasks with ChatGPT. This is a stable solution for most heavy users in 2026.
