Written by: TinTinLand

The rapid development of AI technology is no longer a celebration for niche enthusiasts. It has become a productivity revolution reaching households across the country.

Remember a few months ago, when hundreds of users lined up with laptops outside the Tencent building in Shenzhen just to get a spot to deploy OpenClaw? While the whole internet was buzzing about the "crawfish" craze around OpenClaw, professionals were using it to handle reports and write code automatically, and companies were building intelligent assistants that execute tasks autonomously. AI has permeated every corner of work and life. Meanwhile, AIGC applications have spread ever faster: from AI-generated artwork and smart customer service to enterprise-level intelligent agents, their traces are now everywhere in our lives.

According to industry data, the global AI market is expected to exceed $900 billion by 2026, China's core AI industry is projected to reach 12 trillion yuan, 88% of enterprises report that AI has helped increase their annual revenue, and 76% of large companies have deployed AI applications. Meanwhile, as OpenClaw drives a paradigm upgrade for AI Agents, global token consumption more than quadrupled within a single month, and global monthly token consumption is expected to keep growing exponentially through the end of 2026. AI is shifting from a conversational tool to a productivity engine, profoundly changing the cost structure of businesses and the way individuals work.

However, behind these fast-growing figures, many users only scratch the surface of AI. Faced with buzzwords like Prompt, Token, and RAG, they are either bewildered or only half understand them, which makes it hard to unlock the full value of AI.

We interact with AI every day yet are often confused by a flood of jargon. For example, when using OpenClaw, if you don't understand the Context Window, you cannot make effective use of its persistent memory to complete multi-step tasks; if you don't understand Plugins, you won't know how to extend its functionality to fit your needs. When generating AI copy, if you don't understand Prompt engineering, you won't be able to write precise instructions. So rather than blindly following trends in AI tools, it is better to proactively grasp the core concepts of AI technology and seize the initiative in the AI wave. TinTinLand has prepared this guide, "Core Basic Concepts of AI That Even Novices Can Understand," so that after reading it you can grasp the complete logic of how AI works and no longer be intimidated by terminology!

Base Layer —— The Foundation of AI Technology

The base layer is the foundation of AI. Like the foundation and building materials of a house, it directly determines the technical ceiling AI can reach and is the starting point for all AI applications.

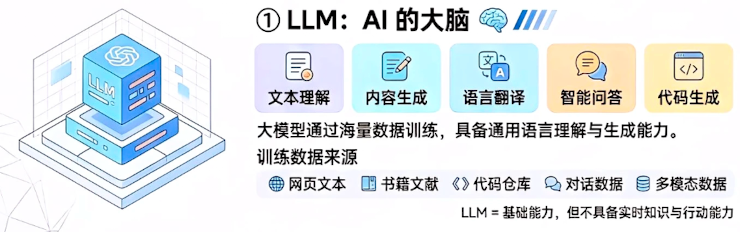

LLM: Large Language Model, AI's Super Brain

Many people think that ChatGPT and similar products represent the entirety of AI, but this is only half right. The foundation of these applications is the LLM (Large Language Model), a natural language processing system built on deep learning. At its core, an LLM is pre-trained on vast amounts of text and learns the grammar, semantics, and logic of human language on its own. The result is a model that can understand context, generate text appropriate to that context, and complete complex language tasks, making it the "core brain" of all generative AI.

In simple terms, AI writing tools rely on LLMs to generate logically coherent text, and code generation tools rely on LLMs to understand programming syntax and requirements. In just one year, enterprise LLM deployments grew by 187%, spanning sectors such as finance, healthcare, and education. In practice, users rarely need to build an LLM from scratch: they can call mature models directly, and enterprises can fine-tune open-source LLMs to build solutions tailored to their own business scenarios.
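To make "learn from text, then generate" concrete, here is a deliberately tiny sketch. It is a word-level bigram model, not how a real LLM is built: real LLMs are deep neural networks (typically Transformers) with billions of parameters trained on enormous corpora. The toy shares only the core idea: count which word tends to follow which in the training text, then generate by repeatedly sampling a plausible next word. The function names `train_bigram` and `generate` are our own, for illustration.

```python
import random
from collections import Counter, defaultdict


def train_bigram(corpus: str) -> dict[str, Counter]:
    """'Pre-training' in miniature: count which word follows each word."""
    words = corpus.split()
    model: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        model[current][nxt] += 1
    return model


def generate(model: dict[str, Counter], start: str, length: int, seed: int = 0) -> list[str]:
    """Generate up to `length` more words, sampling each next word
    in proportion to how often it followed the previous word."""
    rng = random.Random(seed)  # seeded so runs are reproducible
    out = [start]
    for _ in range(length):
        followers = model.get(out[-1])
        if not followers:
            break  # this word was never followed by anything in training
        words, counts = zip(*followers.items())
        out.append(rng.choices(words, weights=counts, k=1)[0])
    return out
```

For example, after `m = train_bigram("the cat sat on the mat the cat ate")`, the word "on" was only ever followed by "the", so `generate(m, "on", 1)` returns `["on", "the"]`. A real LLM conditions on the whole preceding context rather than just the last word, which is what lets it stay coherent over long passages.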

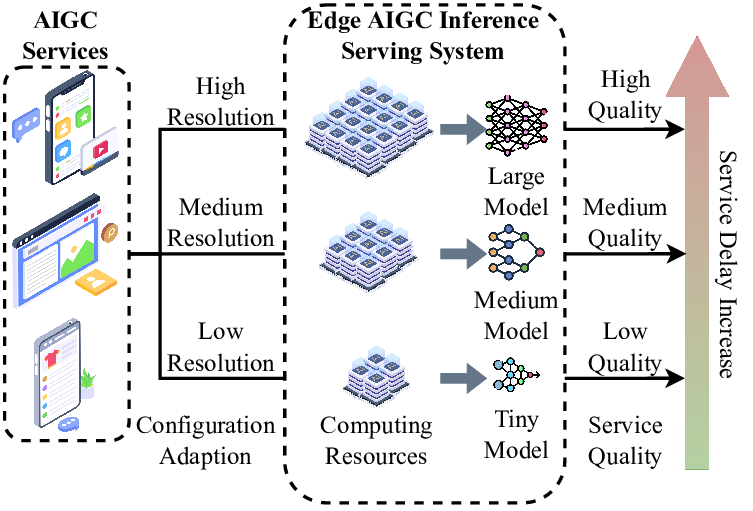

AIGC: Generative AI, The Creativity Engine

AIGC (AI Generated Content) refers to technologies that use AI to automatically generate text, images, audio, video, code, and other content. It breaks the inherent limitation of traditional AI, which could "only analyze, not create," and is key to AI's transition from a tool to a creative force. Users type a text prompt and any reference material into a dialogue box, the AI model processes these requirements to generate text, images, or video, and humans then refine the output into a finished product.

Currently popular AIGC applications and websites include Midjourney, Stable Diffusion, and Runway. With such tools, manual labor input has fallen by about 30%, while content is generated 5 to 10 times faster than by hand, greatly unleashing application potential and expanding product coverage in the design and cultural industries.

Interaction Layer —— Enabling Effective Command of AI by Humans

The base-layer AI is powerful, but it needs the interaction layer to translate human needs so that AI can understand and carry out tasks effectively. This layer directly determines how efficiently and effectively we communicate with AI.

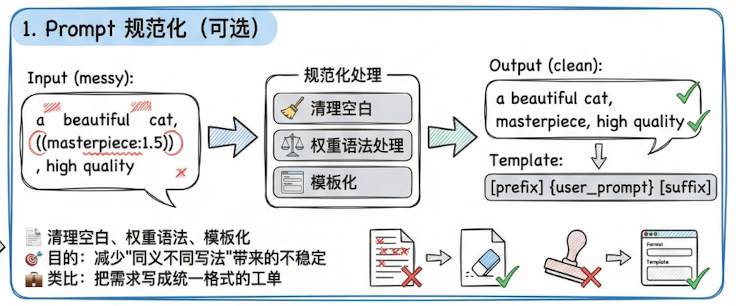

Prompt: The Instruction, Telling AI Exactly What You Want

A Prompt is the set of instructions a human gives to AI, including a description of the need, scenario constraints, format requirements, and so on. Its purpose is to make the task objective clear so that AI produces the expected result. Whenever users ask AI for something, the instructions they write are Prompts. High-quality Prompts lead AI to output content that is more accurate and closer to what users expect.

Common structural elements of a Prompt include Role, Tools (the tools available to the model), Goal, Output Format, Rules & Steps, and Example. In real AI conversations, a ready-made Prompt rarely works perfectly; it usually has to be adjusted based on actual results until it performs as intended.
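The six structural elements can be assembled mechanically, which makes it easy to tweak one element at a time while iterating. The sketch below is a minimal illustration: the `PromptSpec` fields mirror the elements listed above, but the `# Heading` section layout is our own assumption, not a format any particular model requires.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """One field per structural element; only role and goal are mandatory here."""
    role: str
    goal: str
    tools: list[str] = field(default_factory=list)
    output_format: str = ""
    rules: list[str] = field(default_factory=list)
    example: str = ""


def build_prompt(spec: PromptSpec) -> str:
    """Join the non-empty elements in the order Role, Tools, Goal,
    Output Format, Rules & Steps, Example."""
    sections = [f"# Role\n{spec.role}"]
    if spec.tools:
        sections.append("# Tools\n" + "\n".join(f"- {t}" for t in spec.tools))
    sections.append(f"# Goal\n{spec.goal}")
    if spec.output_format:
        sections.append(f"# Output Format\n{spec.output_format}")
    if spec.rules:
        numbered = "\n".join(f"{i}. {r}" for i, r in enumerate(spec.rules, start=1))
        sections.append("# Rules & Steps\n" + numbered)
    if spec.example:
        sections.append(f"# Example\n{spec.example}")
    return "\n\n".join(sections)
```

Keeping each element in its own field means that when a result is off, you can change only the Rules or only the Example and compare outputs, which matches the trial-and-adjust way Prompts are refined in practice.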

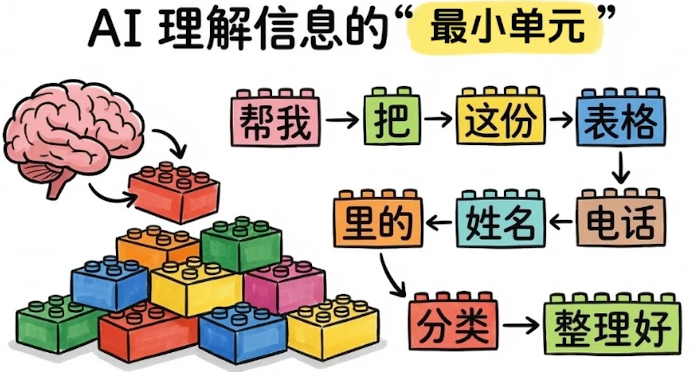

Token: The Lexeme, Grasping AI's Minimum Comprehension Unit

In AI applications, a Token is the smallest unit of text a model processes, acting as the "atom" of how AI understands and handles language. AI does not read complete sentences or words directly; instead, it breaks text into individual Tokens for computation and understanding. Depending on the model, a Token may be a whole word, part of a word, a single character, or a punctuation mark. (Note that "token" also has an unrelated meaning in software: an authentication token, used in scenarios such as API access control.)
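To see how text breaks into Tokens, here is a toy tokenizer. It is a simplification under stated assumptions: it uses greedy longest-match over a small hand-picked vocabulary (`VOCAB` is hypothetical), whereas production tokenizers such as BPE learn their vocabularies from large corpora and differ in the details. Characters not in the vocabulary fall back to single-character tokens.

```python
def tokenize(text: str, vocab: set[str]) -> list[str]:
    """Greedy longest-match tokenization over a fixed vocabulary.

    At each position, take the longest vocabulary piece that matches;
    if nothing matches, emit a single character as its own token.
    """
    max_len = max(1, max((len(piece) for piece in vocab), default=1))
    tokens: list[str] = []
    i = 0
    while i < len(text):
        # Try the longest candidate first and shrink until a hit;
        # a single character is always accepted, so the loop always advances.
        for size in range(min(max_len, len(text) - i), 0, -1):
            piece = text[i:i + size]
            if size == 1 or piece in vocab:
                tokens.append(piece)
                i += size
                break
    return tokens


# Hypothetical vocabulary for illustration only.
VOCAB = {"un", "believ", "able", " ", "token", "s"}
```

With this vocabulary, `tokenize("unbelievable tokens", VOCAB)` yields six tokens: `"un"`, `"believ"`, `"able"`, `" "`, `"token"`, `"s"`. Two English words become six Tokens, which is why Token counts (and therefore costs) are usually higher than word counts.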

As a core metric of AI computing cost, China's daily Token consumption soared from about 100 billion at the beginning of 2024 to more than 30 trillion by the end of June 2025, a roughly 300-fold increase that directly reflects how quickly AI applications are spreading. Many expect that data centers will no longer be mere storage warehouses but will become "smart factories" that produce Tokens.

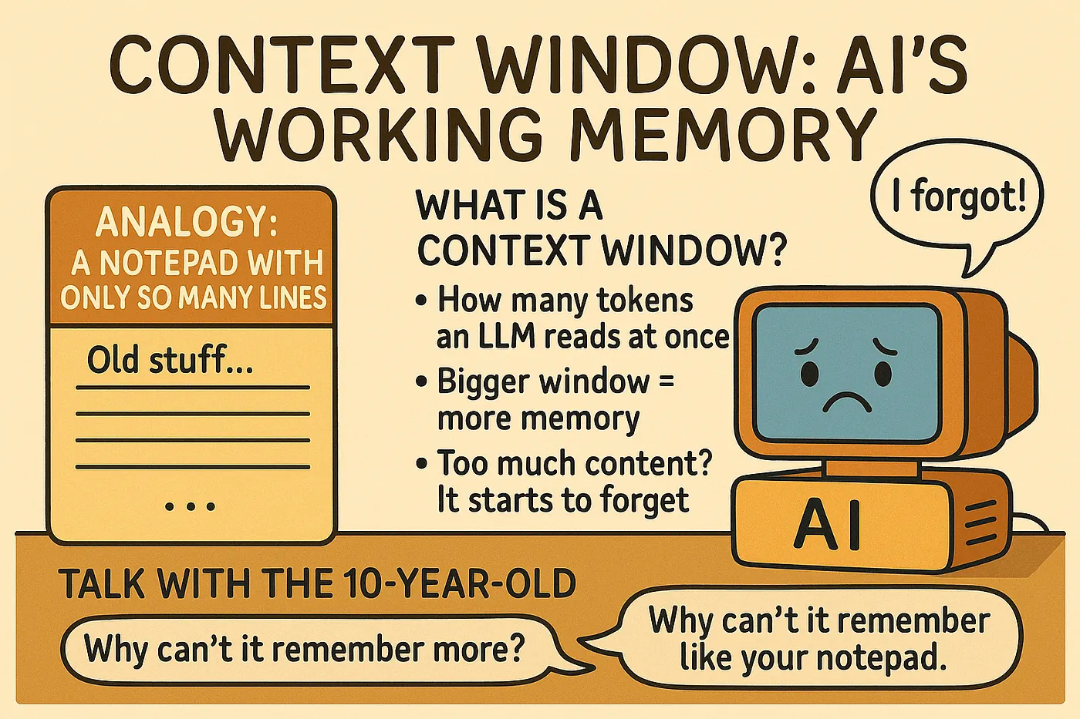

Context Window: Short-Term Memory of AI

The Context Window is the maximum number of Tokens a model can consider at once, covering both the input (the Prompt and conversation history) and the output it generates. It directly affects long-text processing and the multi-turn dialogue experience. For example, a 5,000-character Chinese article comes to roughly 3,000 Tokens; if the model's context window is only 2,048 Tokens, the article cannot fit all at once, so the output may become disjointed and the model may fail to take in the latter part of the article. Only when the Context Window is long enough to hold all the information can the content be processed continuously; otherwise, the AI may "forget" earlier information.

When handling long texts, we can choose models with large context windows (such as GPT-4 Turbo or Doubao's long-text model) or split the text into segments and process them one by one. In a long multi-turn dialogue, including a brief recap of key information in the Prompt helps prevent the AI from losing track of earlier context.
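The "split the text into segments" approach can be sketched as follows, using the numbers from the example above (3,000 Tokens, a 2,048-Token window). This is an illustrative sketch, not any vendor's chunking method: the `reserve` parameter (room kept free for instructions and the model's answer) and `overlap` (Tokens repeated between neighboring chunks so sentences are not cut off without context) are our own assumptions.

```python
def chunk_tokens(tokens: list[str], window: int,
                 reserve: int = 0, overlap: int = 0) -> list[list[str]]:
    """Split a token list into chunks that each fit in the context window.

    window  - the model's context window size, in tokens
    reserve - tokens kept free for instructions and the generated answer
    overlap - tokens shared between consecutive chunks for continuity
    """
    budget = window - reserve  # tokens available for the text itself
    if overlap < 0 or budget <= overlap:
        raise ValueError("window too small for the requested reserve/overlap")
    step = budget - overlap
    chunks: list[list[str]] = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + budget])
        if start + budget >= len(tokens):
            break  # this chunk already reaches the end of the text
    return chunks
```

For a 3,000-Token article with `window=2048`, `reserve=48`, and `overlap=200`, each chunk may hold 2,000 Tokens, so the article splits into two chunks of 2,000 and 1,200 Tokens, with the second starting 200 Tokens before the first ends. Larger overlaps preserve more context across the cut but cost more Tokens in total.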

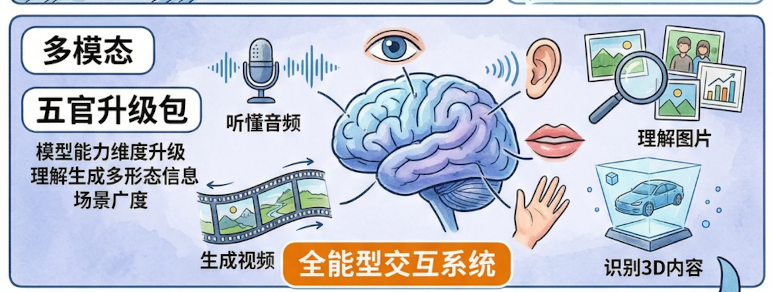

Multimodal: The Sensory Capability of AI

Multimodal refers to AI's ability to process and understand text, images, audio, video, and other types of information together, breaking the limits of text-only interaction and closely mimicking the human senses of "seeing, hearing, speaking, and reading." This is one of the core development directions of AI today. For example, Baidu's ERNIE 4.5 Turbo (Wenxin large model 4.5 Turbo), a multimodal model, is trained on mixed text, image, and video data, and its multimodal understanding has reportedly improved by over 30%.

As multimodal technology matures, AI can align more closely with how humans naturally interact. For instance, you can send AI an image plus a text prompt such as "Help me turn this landscape photo into a watercolor style and write a caption," and AI will understand both the image content and the text request, completing the creative task in one go.

Application Layer —— Making AI a Tool for Practical Work

With the base layer as the brain and the interaction layer as the bridge, the application layer is the toolkit that brings AI into specific scenarios to solve real-world problems. Its core is turning AI capabilities into products or services that can be used directly.

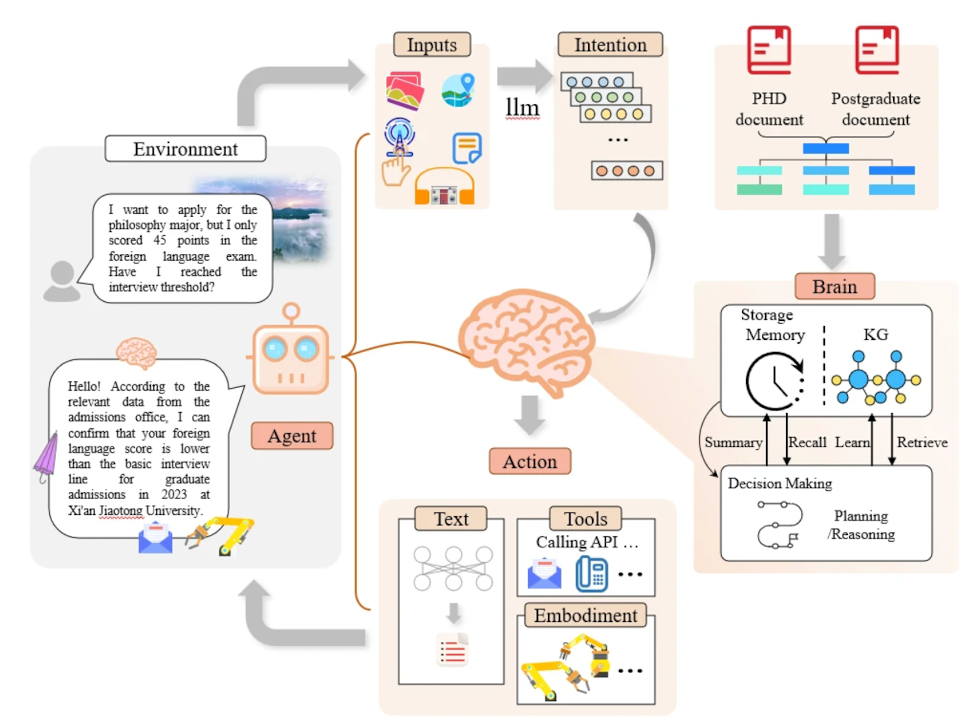

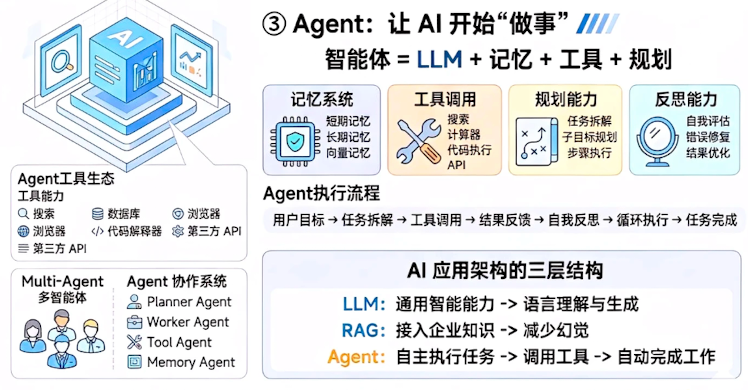

Agent: The Intelligent Entity, AI's Automated Worker

An Agent (AI agent) is an AI system capable of making its own decisions, planning dynamically, and executing autonomously, much like a worker who needs no supervision. You only need to provide the final goal; it breaks the task down, invokes tools, and solves problems on its own, without human guidance at every step. In complex and uncertain scenarios, an Agent can analyze the task goal by itself and run a feedback loop of acting, reflecting on the results, and improving.

Adapting to user habits, an Agent can remember personal preferences, such as favorite hotels, travel destinations, and preferred routes, to tailor its searches and actions, and can even learn from past mistakes to improve future results.
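The plan, act, observe loop behind an Agent can be sketched in a few lines. Everything here is hypothetical and simplified: the two tools are stubs returning canned text, and `plan_next_step` is a hard-coded stand-in for the LLM that a real Agent would ask "what should I do next?" at each step. The sketch does show the three ingredients described above: a goal, tool invocation, and a memory of preferences (here, `preferred_hotel`).

```python
from typing import Callable, Optional

# Hypothetical stub tools; a real Agent would call search or booking services.
TOOLS: dict[str, Callable[[str], str]] = {
    "search_hotels": lambda city: f"hotels in {city}: Harbor Inn, Central Lodge",
    "book_hotel": lambda name: f"booked {name}",
}


def plan_next_step(goal: str, history: list[tuple[str, str]],
                   memory: dict[str, str]) -> Optional[tuple[str, str]]:
    """Rule-based stand-in for the LLM planner.

    A real Agent would send the goal, history, and memory to a model and
    parse the tool call it proposes. Returns (tool, argument), or None when done.
    """
    done = dict(history)  # tool name -> observation it produced
    if "search_hotels" not in done:
        return ("search_hotels", memory.get("city", "Shenzhen"))
    if "book_hotel" not in done:
        # Read the options out of the previous observation.
        options = [h.strip() for h in done["search_hotels"].split(":", 1)[1].split(",")]
        preferred = memory.get("preferred_hotel")
        return ("book_hotel", preferred if preferred in options else options[0])
    return None


def run_agent(goal: str, memory: dict[str, str],
              max_steps: int = 5) -> list[tuple[str, str]]:
    """Plan -> act -> observe, capped at max_steps to avoid runaway loops."""
    history: list[tuple[str, str]] = []
    for _ in range(max_steps):
        step = plan_next_step(goal, history, memory)
        if step is None:
            break
        tool, arg = step
        history.append((tool, TOOLS[tool](arg)))
    return history
```

Calling `run_agent("Book me a hotel", {"city": "Shenzhen", "preferred_hotel": "Central Lodge"})` searches first and then books "Central Lodge" because it is remembered as the preference; without that memory entry, the Agent books the first option found. The `max_steps` cap is a common safeguard so an Agent that keeps failing cannot loop forever.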

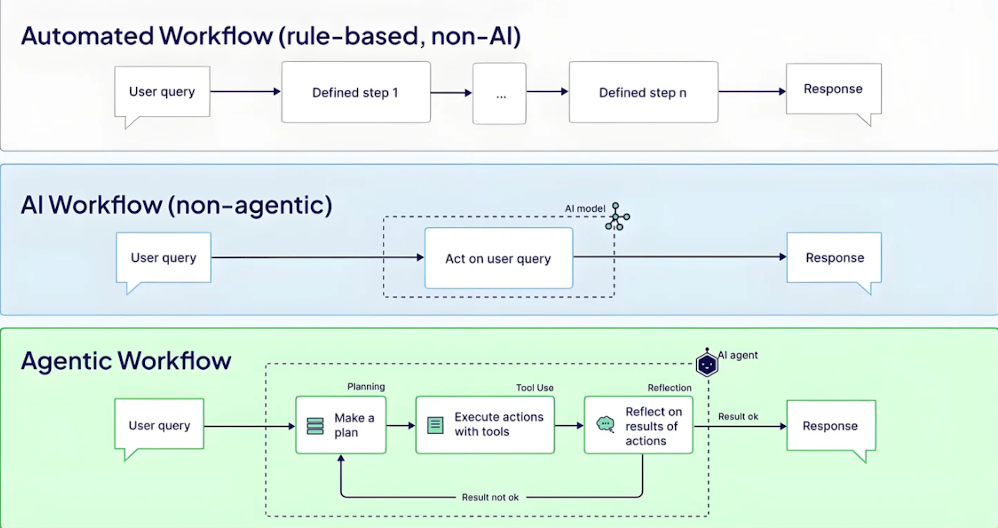

Workflow: Standardized Processing Flow of AI

A Workflow breaks an AI task into standardized, repeatable steps and specifies the execution order, the responsible party, and the output of each step, much like an assembly line that lets AI execute tasks efficiently and reliably. By designing the execution steps in advance, an AI Workflow lets both users and large models carry out tasks according to a predefined SOP (standard operating procedure), improving production efficiency.

For instance, at a craft products company, an AI drawing tool has built more than 120 standardized workflows covering "creative inspiration → style transfer → product editing → 3D presentation," producing deliverable renderings end to end from natural language descriptions. This cut the time for a single design task from 5 days to 1.5 days, a 70% reduction.
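A Workflow is essentially a fixed, ordered list of steps where each step's output feeds the next. The sketch below models the four stages from the example as plain functions; the stage bodies are hypothetical placeholders (in practice each would call an AI drawing or 3D tool), and the job fields (`brief`, `style`, and so on) are our own invention. It also includes the arithmetic behind the 70% figure: (5 − 1.5) / 5 = 0.7.

```python
from typing import Callable

Job = dict[str, str]
Step = Callable[[Job], Job]


# Hypothetical placeholders for the four stages; each returns a new job dict.
def creative_inspiration(job: Job) -> Job:
    return {**job, "concept": f"concept sketch for '{job['brief']}'"}


def style_transfer(job: Job) -> Job:
    return {**job, "styled": f"{job['concept']} in {job.get('style', 'default')} style"}


def product_editing(job: Job) -> Job:
    return {**job, "edited": f"{job['styled']}, fitted to product template"}


def presentation_3d(job: Job) -> Job:
    return {**job, "deliverable": f"3D render of {job['edited']}"}


# The SOP: a fixed order that every job follows.
WORKFLOW: list[tuple[str, Step]] = [
    ("creative inspiration", creative_inspiration),
    ("style transfer", style_transfer),
    ("product editing", product_editing),
    ("3D presentation", presentation_3d),
]


def run_workflow(job: Job, steps: list[tuple[str, Step]]) -> tuple[Job, list[str]]:
    """Run every step in order, passing each output to the next, and log the steps."""
    log: list[str] = []
    for name, step in steps:
        job = step(job)
        log.append(name)
    return job, log


def time_reduction(before_days: float, after_days: float) -> float:
    """Fraction of time saved: (before - after) / before."""
    return (before_days - after_days) / before_days
```

Because the order is fixed and every step is logged, the same brief always goes through the same stages, which is what makes a Workflow repeatable and auditable, unlike an Agent that chooses its own next step. `time_reduction(5, 1.5)` evaluates to 0.7, matching the 70% figure.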

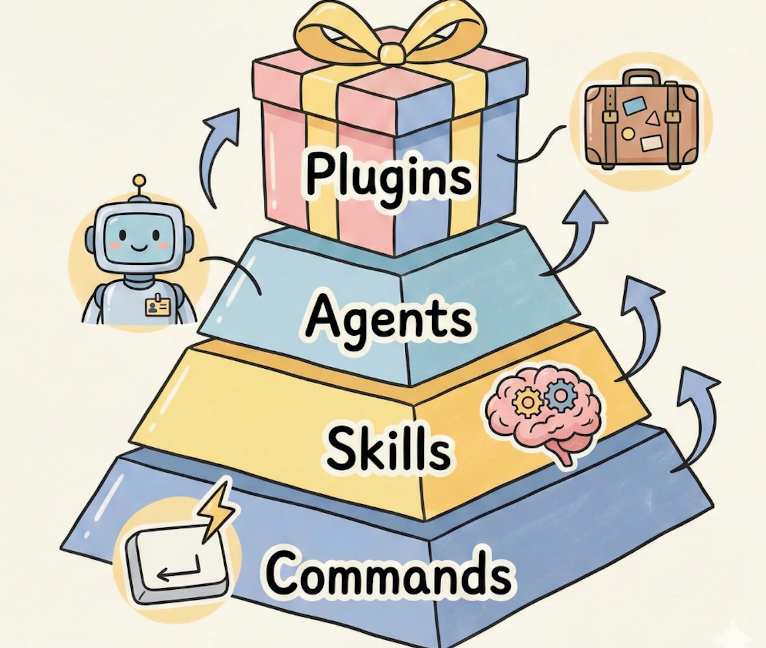

Plugin: Expanding AI Capabilities Efficiently

A Plugin is a small add-on that gives AI a specific extra function, extending what it can do. By installing plugins, users can quickly unlock new capabilities without retraining the model. In practice, ordinary users can install plugins as needed, and enterprises can develop custom plugins for their business scenarios, significantly lowering the cost of deploying AI applications.

Specifically, AI uses Skills to reason through a task and invokes a Plugin when it needs to retrieve information or take an action. Plugins can follow a unified protocol such as MCP, which makes them plug-and-play and easy to swap, and they can connect to third-party services and APIs, making them a powerful extension mechanism for the whole system.
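"Plug-and-play and easy to swap" comes down to one idea: every plugin exposes the same interface, so the host can install, replace, or remove plugins without changing its own code. The sketch below illustrates that pattern with a registry; the `Plugin` interface, the `run(query)` method, and the two weather stubs are hypothetical and are not the MCP protocol itself.

```python
from typing import Protocol


class Plugin(Protocol):
    """The one interface every plugin must expose (hypothetical)."""
    name: str

    def run(self, query: str) -> str: ...


class WeatherStub:
    name = "weather"

    def run(self, query: str) -> str:
        return f"weather for {query}: sunny (stub data)"


class WeatherStubV2:
    """A drop-in replacement from a different 'provider'."""
    name = "weather"

    def run(self, query: str) -> str:
        return f"weather for {query}: cloudy (stub data from another provider)"


class PluginRegistry:
    def __init__(self) -> None:
        self._plugins: dict[str, Plugin] = {}

    def install(self, plugin: Plugin) -> None:
        # Installing under an existing name swaps the old plugin out.
        self._plugins[plugin.name] = plugin

    def uninstall(self, name: str) -> None:
        self._plugins.pop(name, None)

    def call(self, name: str, query: str) -> str:
        """What the AI does when it decides it needs a capability."""
        if name not in self._plugins:
            raise KeyError(f"plugin {name!r} is not installed")
        return self._plugins[name].run(query)
```

After installing `WeatherStub()`, `registry.call("weather", "Paris")` returns the sunny stub; installing `WeatherStubV2()` replaces it without touching any calling code. A shared protocol like MCP plays the same role across processes and vendors rather than inside one program.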

Patch Layer —— Efficient Correction Mechanism for AI

AI can make mistakes and produce nonsense. The core function of the patch layer is to correct these errors, improving the accuracy and reliability of AI output and making AI more trustworthy.

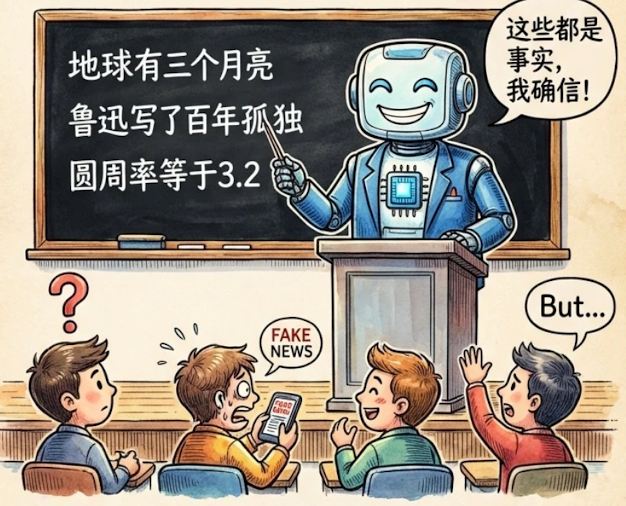

Hallucination: Does AI Really Speak Nonsense?

Hallucination (AI Hallucination) refers to AI-generated content that appears reasonable and fluent but is actually inaccurate, fabricated, or inconsistent with facts. Worse, AI presents such errors confidently, which makes hallucination one of the main pain points of current generative AI. Common cases include false academic citations, fabricated data, misstated facts, and invented people or events. For example, an unoptimized LLM may give incorrect medical advice in response to health questions, posing potentially serious risks.

Calling tools in real time and constraining the model's output can effectively reduce how often AI hallucinates. The industry currently tackles the problem mainly through RAG, confidence calibration, source-tracing labels, and real-time feedback correction. RAG is the most widely used and effective of these, reportedly cutting hallucination-related error rates by more than 70%.

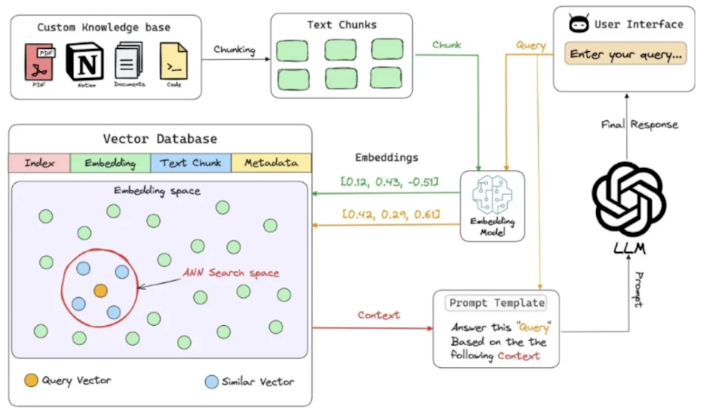

RAG: Retrieval-Augmented Generation, the AI Information Retrieval Tool

RAG (Retrieval-Augmented Generation) is a key technology for tackling AI hallucination and outdated knowledge. Simply put, before generating content, the AI first retrieves accurate information from an external knowledge base, then combines that retrieved knowledge with its own capabilities to produce the answer.
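The two RAG stages, retrieve first and then generate, can be sketched as follows. To keep the sketch self-contained, retrieval here scores documents by simple word overlap; that is a stand-in we chose for illustration, as production systems usually rank by embedding similarity in a vector database. The "generate" half is shown as the augmented prompt that would be sent to an LLM, with source ids so answers can be traced. The document ids and texts are hypothetical.

```python
import re


def words(text: str) -> set[str]:
    """Lower-cased word set used for the toy relevance score."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[tuple[str, str]]:
    """Return up to k (doc_id, text) pairs sharing the most words with the query."""
    q = words(query)
    ranked = sorted(docs.items(), key=lambda item: len(q & words(item[1])), reverse=True)
    return [(doc_id, text) for doc_id, text in ranked[:k] if q & words(text)]


def build_rag_prompt(query: str, docs: dict[str, str], k: int = 2) -> str:
    """Put the retrieved sources in front of the question, tagged with their ids."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in retrieve(query, docs, k))
    return ("Answer using only the sources below and cite them by id. "
            "If the sources do not contain the answer, say so.\n\n"
            f"Sources:\n{context}\n\nQuestion: {query}")


# Hypothetical knowledge base.
DOCS = {
    "policy-2025": "The 2025 refund policy allows returns within 30 days.",
    "faq": "Shipping takes 5 business days.",
    "about": "Our company was founded in 2010.",
}
```

For the question "What is the refund policy for returns?", only `policy-2025` shares words with the query, so it is the only source placed in the prompt. Updating the knowledge base is just editing `DOCS`; no model retraining is involved, which is why RAG can keep answers current.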

In healthcare, adding patient records and medical guidelines to an external knowledge base through RAG has raised the accuracy of LLM-generated treatment recommendations from 65% to 92%. In finance, RAG combines the latest policies and market data to produce compliant, accurate industry analysis reports, reducing errors by 80%. Compared with traditional generative AI, RAG-enhanced systems shorten the knowledge update cycle from months to minutes, because updating the knowledge base does not require retraining the model. This significantly lowers deployment costs and makes generated content traceable enough to meet audit requirements.

Connectivity Layer —— Achieving Interconnected AI Systems

The modules of an AI system rely on the connectivity layer to pass data and capabilities between them smoothly. This is key to applying AI at scale.

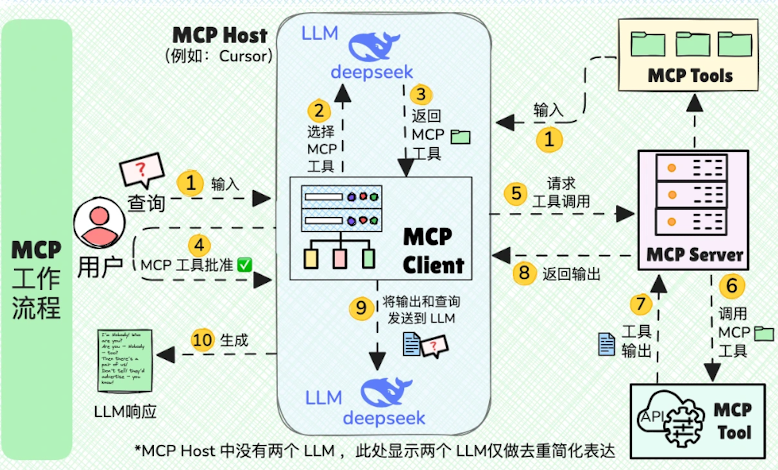

MCP: Model Context Protocol, AI's Standardized Interface

MCP (Model Context Protocol) is an open standard proposed and open-sourced by Anthropic to standardize how large language models interact with external data sources and tools. It is often described as the "USB-C port" for AI applications: just as USB-C gives devices a standard way to connect peripherals, MCP gives AI models a unified interface for connecting to different data sources and tools.

MCP pushes past the capability boundaries of a standalone LLM, letting AI applications access local and remote resources in a relatively uniform way. This makes integration more efficient and flexible and lowers the cost of connecting AI to external tools. You can currently try MCP at the Volcano Engine Ark (Volcano Ark) experience center, which supports multiple models, multiple MCP servers, and a range of tools.
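What does the "unified interface" look like on the wire? MCP messages follow JSON-RPC 2.0: a client can ask a server which tools it offers with a `tools/list` request and run one with a `tools/call` request whose params carry the tool `name` and its `arguments`. The sketch below only builds those request messages. It omits the connection handshake and the transport (for example stdio or HTTP), and the tool name `get_weather` is hypothetical.

```python
import json
from typing import Any, Optional


def jsonrpc_request(request_id: int, method: str,
                    params: Optional[dict[str, Any]] = None) -> str:
    """Serialize a JSON-RPC 2.0 request, the message format MCP builds on."""
    message: dict[str, Any] = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


def call_tool_request(request_id: int, tool_name: str, arguments: dict[str, Any]) -> str:
    """Build a 'tools/call' request asking an MCP server to run one tool."""
    return jsonrpc_request(request_id, "tools/call",
                           {"name": tool_name, "arguments": arguments})
```

For example, `jsonrpc_request(1, "tools/list")` produces a discovery request, and `call_tool_request(2, "get_weather", {"city": "Shenzhen"})` produces a call request. Because every MCP server speaks this same message shape, a client written once can talk to any of them, which is the point of the USB-C comparison.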

API: Application Programming Interface, AI's Data Channel

An API (Application Programming Interface) has long served as a data channel between different software and systems, enabling data exchange and functional integration without building everything from scratch. Nearly all AI application scenarios depend on APIs: enterprises quickly build intelligent customer service by integrating ChatGPT's API into their customer service systems; content creators' platforms call AIGC APIs to generate copy and images in batches; and e-commerce platforms integrate AI translation APIs to automatically translate product descriptions into multiple languages and reach more overseas markets.

Ordinary developers can quickly build AI applications by calling public APIs instead of building the underlying models. Enterprises can tightly integrate AI capabilities with their business systems through APIs to automate processes. Mainstream AI API call latency has now dropped below 100 ms, with stability (availability) reaching 99.9%, meeting enterprise-level requirements.
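Calling an AI API usually means sending an authenticated HTTP POST with a JSON body and reading a JSON reply. The sketch below uses only Python's standard library. The endpoint URL, the `model` name, and the body shape (a `messages` list of role/content pairs, a common chat-API convention) are all assumptions for illustration; consult your provider's API reference for the real ones. The `Authorization: Bearer …` header is where an API access token goes.

```python
import json
import urllib.request

API_URL = "https://api.example.com/v1/chat"  # hypothetical endpoint


def build_chat_request(api_key: str, user_text: str,
                       model: str = "example-model") -> urllib.request.Request:
    """Build (but do not send) a JSON POST request to a chat-style AI API."""
    body = {"model": model, "messages": [{"role": "user", "content": user_text}]}
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",  # access token for the API
        },
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 10.0) -> dict:
    """Send the request and decode the JSON reply; raises on network/HTTP errors."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

Building the request separately from sending it keeps the payload easy to inspect and test, and the explicit `timeout` stops a slow API from hanging the calling application indefinitely. In production you would also add retries and read the API key from configuration rather than hard-coding it.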

Conclusion: Embrace the Intelligent Era, Seize High Ground in the AI Technology Wave

The tide of technological change never stops, but it is often those who understand the underlying principles who master technology best. This article on core AI concepts aims to help everyone understand the underlying logic and key vocabulary of AI technology, not only to keep pace with the times but also to help more people use AI precisely in their work and creative projects, truly turning AI tools into core productivity that boosts efficiency.

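Disclaimer: This article represents only the author's personal views and does not represent the position or views of this platform. It is for information sharing only and does not constitute investment advice to anyone. Any dispute between users and the author is unrelated to this platform. If any article or image published on this page involves infringement, please send proof of rights and proof of identity to support@aicoin.com, and the platform's staff will review it.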