Original Title: "Anthropic Launched a New Product Today That May Cause Some Teams Working on AI Infrastructure to Become Unemployed"

Original Author: Baoyu, AI Engineer

The product is called Claude Managed Agents. In short: you tell Anthropic what kind of AI agent you want, and Anthropic runs it in the cloud for you, with all the infrastructure included and billing based on usage. Sentry launched a fully automated bug-fixing pipeline in just a few weeks, and Rakuten deploys a specialized agent every week. Previously, work like this took a whole engineering team several months.

Meanwhile, Anthropic's annual recurring revenue has just surpassed $30 billion, triple the figure from last December, with most of the growth coming from enterprise customers. Wall Street is already getting nervous: the WSJ reports that investors are growing more cautious about the stocks of traditional SaaS companies, fearing that products like Anthropic's will make some traditional software services obsolete.

What exactly is this product? How does it differ from the Claude Code you are already using? How is it technically achieved?

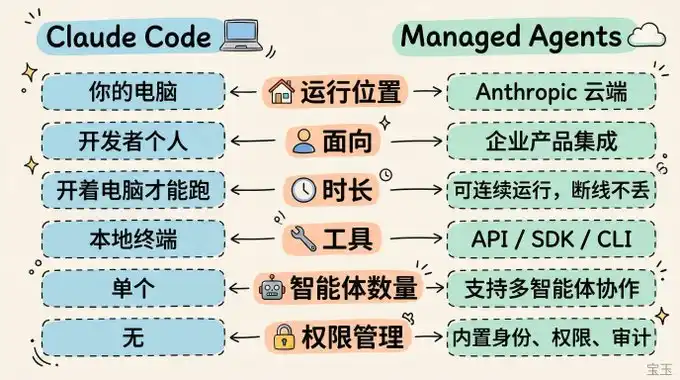

What is it? How does it differ from Claude Code?

If you have used Claude Code, you know how AI agents work: you give it a task, and it plans steps, calls tools, writes code, modifies files, and completes the task step by step.

Claude Code runs on your own computer and is a command-line tool for individual developers. If you turn off your computer, it stops.

Managed Agents run in Anthropic's cloud and are an API service for enterprises. They can run around the clock, a dropped connection does not cost them their progress, and you can embed agent capabilities directly into your own products.

Notion does this: users assign tasks to Claude agents within Notion, the agents finish the work in the background and return the results, all without the users having to leave Notion.

Several typical use cases:

· Event-triggered: System detects a bug, automatically dispatches an agent to fix it and submit a PR, with no human intervention needed in between.

· Scheduled: Automatically generate GitHub activity summaries or team work briefs every morning.

· Set-it-and-forget-it: Assign a task to the agent in Slack, and it returns completed spreadsheets, PPTs, or apps.

· Long-running: Deep research or code refactoring that runs for several hours.

How is it different from the cloud agents built by enterprises themselves?

Enterprises can build their own, but it is expensive and slow.

A production-ready agent takes much more than just calling an API: sandbox environments (secure, isolated spaces where the AI runs code and modifies files without touching real outside systems, much like giving the AI its own dedicated virtual computer), credential management, state recovery, permission control, end-to-end tracing...

Many enterprise clients previously needed a whole engineering team dedicated to these tasks. Now it’s plug-and-play, allowing engineers to focus on the core parts of the product.

But the pain points that Managed Agents solve go beyond just saving manpower.

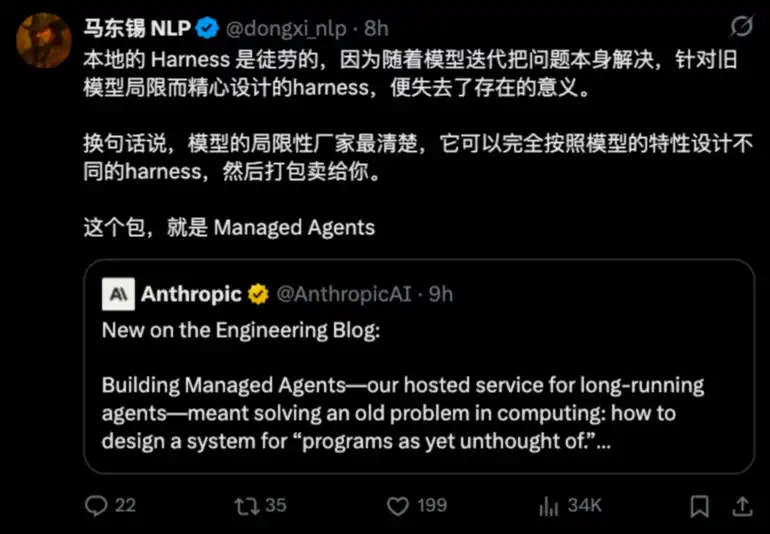

Ma Dongxi (@dongxi_nlp) has provided a keen summary:

There is a specific example in Anthropic's engineering blog:

When Claude Sonnet 4.5 approaches its context window limit, it becomes "anxious" and hastily ends tasks. They added context resets in the scheduling framework to address this. But when Claude Opus 4.5 came out, this issue disappeared, rendering the previous patch redundant.

If you build your own scheduling framework, you have to keep updating it with every model upgrade. Hand it over to Anthropic, and they optimize it for you; more precisely, they optimize it and then sell it to you.

Who is using it? How are they using it?

Notion lets users assign work such as coding, building PPTs, and organizing spreadsheets to Claude directly within the workspace, running dozens of tasks in parallel while the whole team collaborates on the same outputs. Notion Product Manager Eric Liu said that users can delegate open-ended, complex tasks without leaving Notion.

Sentry has built a fully automated pipeline from bug discovery to submitting a fix. Their AI debugging tool Seer identifies the root cause, and Claude writes the patch and opens a PR (pull request). Engineering Director Indragie Karunaratne said they went live in just a few weeks and also eliminated the ongoing operational cost of maintaining self-built infrastructure.

Atlassian has integrated it into Jira, allowing developers to assign tasks directly to Claude agents within Jira.

Asana has introduced AI Teammates, AI collaborators inside project management that can take on tasks and produce deliverables.

General Legal (a legal tech company) has the most interesting approach: their agents can create tools on the fly to query data based on the user's question. Previously, every kind of question had to be anticipated and a retrieval tool built for it in advance; now the agent generates the tool on demand. The CTO said development time has been cut roughly tenfold.

Rakuten has deployed specialized agents across its engineering, product, sales, marketing, and finance departments. Each went live within a week, takes tasks through Slack and Teams, and delivers results as spreadsheets, PPTs, or apps.

Technical Principle: Decoupling the Brain from the Hands

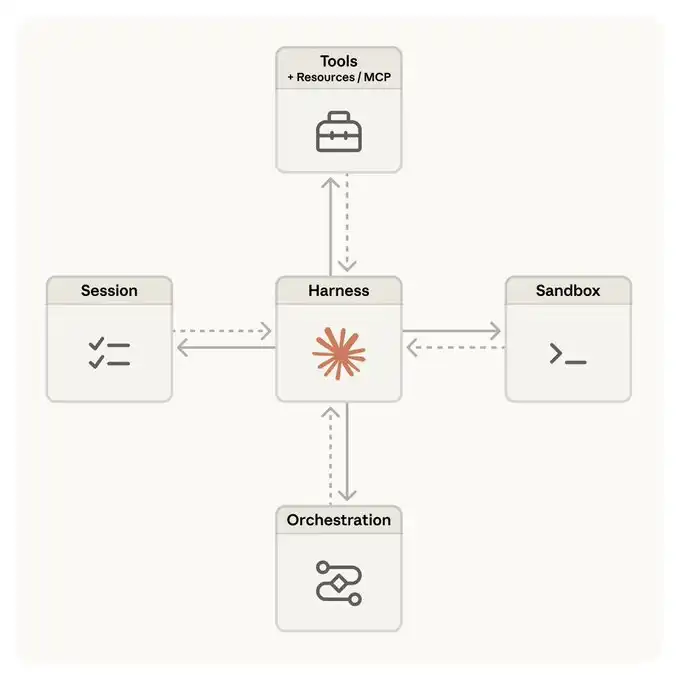

The Anthropic engineering team wrote a technical blog titled "Scaling Managed Agents: Decoupling the Brain from the Hands" discussing the architectural evolution behind Managed Agents.

Initially, they packed everything into one container: the AI's reasoning loop, code execution environment, session records, all together. The benefit is simplicity; the downside is all eggs in one basket. If the container fails, the entire session is lost, and there’s no way to replace a specific part independently.

Later they made a crucial split:

· The "Brain" is Claude and its scheduling framework, responsible for thinking and decision-making.

· The "Hands" are the sandbox and various tools, responsible for executing specific operations.

· The "Memory" is an independent session log that records everything that happens.

The three are independent; if one fails, the other two remain unaffected.
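The split above can be sketched in a few lines of Python. This is only an illustration of the idea, not Anthropic's implementation: the class names `SessionLog`, `Sandbox`, and `Brain` and their methods are hypothetical, and the "tool" is a trivial string function standing in for real work.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class SessionLog:
    """The "memory": an append-only record that lives outside brain and hands."""
    events: list[dict] = field(default_factory=list)

    def append(self, event: dict) -> None:
        self.events.append(event)


class Sandbox:
    """The "hands": executes named tools (hypothetical stand-in for a real sandbox)."""

    def __init__(self, tools: dict[str, Callable[[str], str]]):
        self.tools = tools

    def execute(self, name: str, tool_input: str) -> str:
        return self.tools[name](tool_input)


class Brain:
    """The "brain": keeps no state of its own, so it can be rebuilt around the log."""

    def __init__(self, log: SessionLog, hands: Sandbox):
        self.log = log
        self.hands = hands

    def step(self, name: str, tool_input: str) -> str:
        result = self.hands.execute(name, tool_input)
        self.log.append({"tool": name, "input": tool_input, "output": result})
        return result


log = SessionLog()
brain = Brain(log, Sandbox({"upper": str.upper}))
brain.step("upper", "fix bug")

# Simulate losing the brain and the sandbox: both are discarded, the log survives.
del brain
recovered = Brain(log, Sandbox({"upper": str.upper}))
recovered.step("upper", "open pr")
```

Because the log is the only component that holds history, a replacement brain and a fresh sandbox can pick up the session where the previous pair left off.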

This separation brings several practical benefits:

Fast

Not every task needs a full sandbox environment, so the sandbox now starts only when the AI actually has to run code. Median first-response latency has dropped by about 60%, and in extreme cases by more than 90%.
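A minimal sketch of this lazy start, assuming a hypothetical `LazySandbox` class and a `handle_turn` helper (neither is a real API). The expensive startup is replaced by a flag so the control flow is easy to follow.

```python
class LazySandbox:
    """Defers the expensive environment start until code actually has to run."""

    def __init__(self) -> None:
        self.started = False
        self.start_count = 0

    def _ensure_started(self) -> None:
        if not self.started:
            # Placeholder for the slow part, e.g. provisioning an environment.
            self.started = True
            self.start_count += 1

    def run_code(self, source: str) -> str:
        self._ensure_started()
        return f"ran {len(source)} chars"


def handle_turn(sandbox: LazySandbox, needs_code: bool, text: str) -> str:
    # Pure-reasoning turns answer immediately and never touch the sandbox.
    if not needs_code:
        return f"answer: {text}"
    return sandbox.run_code(text)


sandbox = LazySandbox()
first = handle_turn(sandbox, needs_code=False, text="hi")
started_after_first = sandbox.started
second = handle_turn(sandbox, needs_code=True, text="print(1)")
```

Turns that only need reasoning pay no startup cost at all, which is the kind of saving a lower first-response latency reflects.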

Safe

AI-generated code runs inside the sandbox, while the credentials for external systems are kept in a secure vault outside it, so the two are physically isolated. For a Git repository, for example, the system clones the code at initialization and the AI can use git push/pull as usual, but the token itself is never visible to the AI. Services such as Slack and Jira are connected through MCP (Model Context Protocol): requests pass through a proxy layer that fetches credentials from the vault and calls the service, so the AI never handles the credentials directly.
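The proxy pattern described here might look roughly like the following. Everything in it is an assumption made for illustration: `Vault`, `CredentialProxy`, and `fake_send` are hypothetical names, the token is a dummy value, and no real Slack or MCP call is made.

```python
from typing import Callable


class Vault:
    """Holds secrets outside the sandbox (hypothetical stand-in for a secret store)."""

    def __init__(self, secrets: dict[str, str]):
        self._secrets = secrets

    def get(self, service: str) -> str:
        return self._secrets[service]


class CredentialProxy:
    """Sits between the agent and external services and attaches credentials itself."""

    def __init__(self, vault: Vault, send: Callable[[str, dict, dict], str]):
        self._vault = vault
        self._send = send  # performs the real outbound call

    def call(self, service: str, payload: dict) -> str:
        # The token is looked up here, on the proxy side, never inside the sandbox.
        headers = {"Authorization": f"Bearer {self._vault.get(service)}"}
        return self._send(service, headers, payload)


sent: list[tuple[str, dict, dict]] = []


def fake_send(service: str, headers: dict, payload: dict) -> str:
    # Records what would go over the wire instead of calling a real service.
    sent.append((service, headers, payload))
    return "ok"


proxy = CredentialProxy(Vault({"slack": "dummy-token"}), fake_send)

# This payload is all the agent ever sees or produces: it contains no credential.
agent_payload = {"channel": "#bugs", "text": "patch ready"}
result = proxy.call("slack", agent_payload)
```

The agent only supplies the service name and the payload; the credential exists solely in the outbound request that the proxy builds.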

Flexible

The brain doesn't care what the hands are. One line in the engineering blog puts it memorably: the scheduling framework doesn't know whether the sandbox is a container, a mobile phone, or a Pokémon simulator. Anything that satisfies the "name and input in, string out" interface will do.

This also means multiple brains can share hands, and one brain can hand over its hands to another brain, laying the groundwork for multi-agent collaboration.
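The "name and input in, string out" contract can be written down as a small interface. The sketch below uses Python's `typing.Protocol`; the `Hands` protocol, the two implementations, and `run_plan` are hypothetical names chosen for illustration, not part of any Anthropic SDK.

```python
from typing import Protocol


class Hands(Protocol):
    """Anything that takes a tool name plus input and returns a string."""

    def execute(self, name: str, tool_input: str) -> str: ...


class ContainerHands:
    def execute(self, name: str, tool_input: str) -> str:
        return f"[container] {name}({tool_input})"


class EmulatorHands:
    def execute(self, name: str, tool_input: str) -> str:
        return f"[emulator] {name}({tool_input})"


def run_plan(hands: Hands, plan: list[tuple[str, str]]) -> list[str]:
    # The caller (the "brain") depends only on the interface, not on the backend.
    return [hands.execute(name, tool_input) for name, tool_input in plan]


plan = [("bash", "ls"), ("press", "A")]
container_out = run_plan(ContainerHands(), plan)
emulator_out = run_plan(EmulatorHands(), plan)
```

Since `run_plan` never inspects the concrete type, the same hands object could just as well be passed to a second caller, which is the sharing and handover the paragraph above describes.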

Limitations

Managed Agents are not omnipotent. Here are a few points to note:

Some features are still in research preview. Multi-agent collaboration, advanced memory tools, and self-assessment iteration (letting agents evaluate the quality of their own work and improve it over repeated passes) are not yet generally available; you have to apply for access.

Platform lock-in. Choosing Managed Agents ties your agent infrastructure to the Anthropic ecosystem. If you later want to switch models or platforms, the migration cost will not be trivial.

Context management remains a challenge. Although session logs are stored independently, determining which information should be retained and which should be discarded during long-duration tasks still involves irreversible decisions. This is an ongoing challenge; their current approach is to separate context storage and context management: storage ensures nothing is lost, while management policies are adjusted as the model evolves.
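One way to picture the storage/management split is a store that never deletes anything plus interchangeable policies that decide what the model sees. This is a sketch under that assumption only; `SessionStore`, `last_n`, `pinned_plus_recent`, and the `pinned` flag are hypothetical and do not come from the engineering blog.

```python
from typing import Callable

Event = dict
Policy = Callable[[list[Event]], list[Event]]


class SessionStore:
    """Storage: append-only, so nothing is ever lost."""

    def __init__(self) -> None:
        self._events: list[Event] = []

    def append(self, event: Event) -> None:
        self._events.append(event)

    def all(self) -> list[Event]:
        return list(self._events)


def last_n(n: int) -> Policy:
    """Management policy A: keep only the n most recent events."""
    def policy(events: list[Event]) -> list[Event]:
        return events[-n:] if n > 0 else []
    return policy


def pinned_plus_recent(n: int) -> Policy:
    """Management policy B: always keep pinned events, then add recent ones."""
    def policy(events: list[Event]) -> list[Event]:
        pinned = [e for e in events if e.get("pinned")]
        recent = [e for e in (events[-n:] if n > 0 else []) if not e.get("pinned")]
        return pinned + recent
    return policy


def build_context(store: SessionStore, policy: Policy) -> list[Event]:
    # Selection works on a copy; the stored history is never modified.
    return policy(store.all())


store = SessionStore()
store.append({"id": 0, "text": "task definition", "pinned": True})
for i in range(1, 5):
    store.append({"id": i, "text": f"step {i}"})

ctx_a = [e["id"] for e in build_context(store, last_n(2))]
ctx_b = [e["id"] for e in build_context(store, pinned_plus_recent(2))]
```

Swapping the policy changes what reaches the model without touching the stored history, so a choice that turns out to be wrong can be revisited later.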

Cost predictability. $0.08 per session hour doesn't sound like much, but for complex tasks that run for several hours, total token consumption plus runtime can add up to a significant bill. Enterprises need to budget carefully.

Managed Agents also show that most enterprises still have a long way to go before they can fully put AI agents to work.

The infrastructure barrier has dropped, but how to define good tasks, how to design good workflows, and how to build enough trust to let AI access core business data are questions Managed Agents cannot answer for you.

The "AWS Moment" for AI Agent Infrastructure

Managed Agents appear to be following the path AWS took: first came raw computing power, then the runtime environment was packaged on top of it.

Ten years ago, companies were debating whether to move to the cloud; now they are debating whether to build or outsource agent infrastructure. History suggests that most companies will ultimately outsource, because infrastructure has never been a core competitive advantage. OpenAI has also launched its own agent platform, Frontier, and the competition in this field has only just begun.

From a technical perspective, the "decoupling the brain from the hands" architectural concept is worth paying attention to. It allows each part of the system to evolve independently: when the model is upgraded, the brain is swapped out; when new tools are needed, a new set of hands can be added; when storage solutions change, the memory layer can be replaced.

An analogy in the engineering blog captures this well: an operating system's read() call doesn't care whether it is accessing a 1970s disk or a modern SSD; as long as the abstraction layer is stable, the underlying implementations can be swapped freely.

From a usage perspective, if you are an enterprise developer looking to embed AI agent capabilities into your products, Managed Agents could save you months of infrastructure work.

SDKs are available in six languages (Python, TypeScript, Java, Go, Ruby, PHP). If you are already using Claude Code, update to the latest version and run /claude-api managed-agents-onboarding to get started.

If you are an ordinary AI enthusiast, the most intuitive feeling in the short term may be: in the SaaS products you use, there will be increasingly more AI agents working behind the scenes, and these agents are likely running on Managed Agents.

Pricing reference: Token costs follow standard Anthropic API pricing, runtime is $0.08 per session hour (idle time is not charged), and web searches cost $10 per thousand searches.
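These numbers are easy to turn into a rough budget estimate. In the sketch below the two rates come from the pricing above; the function name `estimate_cost` and the example workload (5 active hours, 200 searches, $12.00 of tokens) are made-up illustration values, and token cost is passed in as a dollar amount because it depends on the model and usage.

```python
RUNTIME_USD_PER_SESSION_HOUR = 0.08  # rate quoted in the article
WEB_SEARCH_USD_PER_THOUSAND = 10.0   # rate quoted in the article


def estimate_cost(active_hours: float, web_searches: int, token_cost_usd: float) -> float:
    """Rough session cost in USD; idle time is excluded because it is not billed."""
    runtime = active_hours * RUNTIME_USD_PER_SESSION_HOUR
    searches = web_searches / 1000 * WEB_SEARCH_USD_PER_THOUSAND
    return round(runtime + searches + token_cost_usd, 2)


# Hypothetical workload: 5 active hours, 200 web searches, $12.00 of tokens.
total = estimate_cost(active_hours=5, web_searches=200, token_cost_usd=12.00)
runtime_only = estimate_cost(active_hours=5, web_searches=0, token_cost_usd=0.0)
```

In this made-up example the runtime fee is $0.40 of a $14.40 total, so tokens and searches rather than session hours would dominate the bill.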

Do you think the infrastructure for AI agents will eventually be monopolized by a few large companies, similar to cloud computing?
