According to several reports, Anthropic filed two lawsuits on March 9 in federal court challenging actions taken by the U.S. Department of Defense and the Trump administration, arguing the government unlawfully retaliated against the company after it refused to remove safeguards from its Claude artificial intelligence system. The suits target a designation that typically applies to foreign adversaries suspected of espionage or sabotage, not domestic technology firms.

The dispute traces back to contract negotiations between Anthropic and the Pentagon over how the company’s Claude AI model could be used by U.S. defense agencies. Anthropic had previously supported national security initiatives and became the first frontier AI company to deploy models on classified U.S. government networks in June 2024, assisting analysts and military planners with intelligence review, simulations, operational planning and cybersecurity work.

Tensions escalated when the Defense Department demanded unrestricted access to Claude for “all lawful purposes” as part of a contract renewal. Anthropic agreed to most conditions but insisted on two restrictions: prohibiting the use of its AI for mass domestic surveillance of Americans and preventing deployment in fully autonomous lethal weapons systems.

Company executives argued that those guardrails were necessary because current frontier AI models remain too unreliable for autonomous weapons and because large-scale surveillance programs could conflict with constitutional protections. Anthropic stated the restrictions had never interfered with any military mission during its prior work with the government.

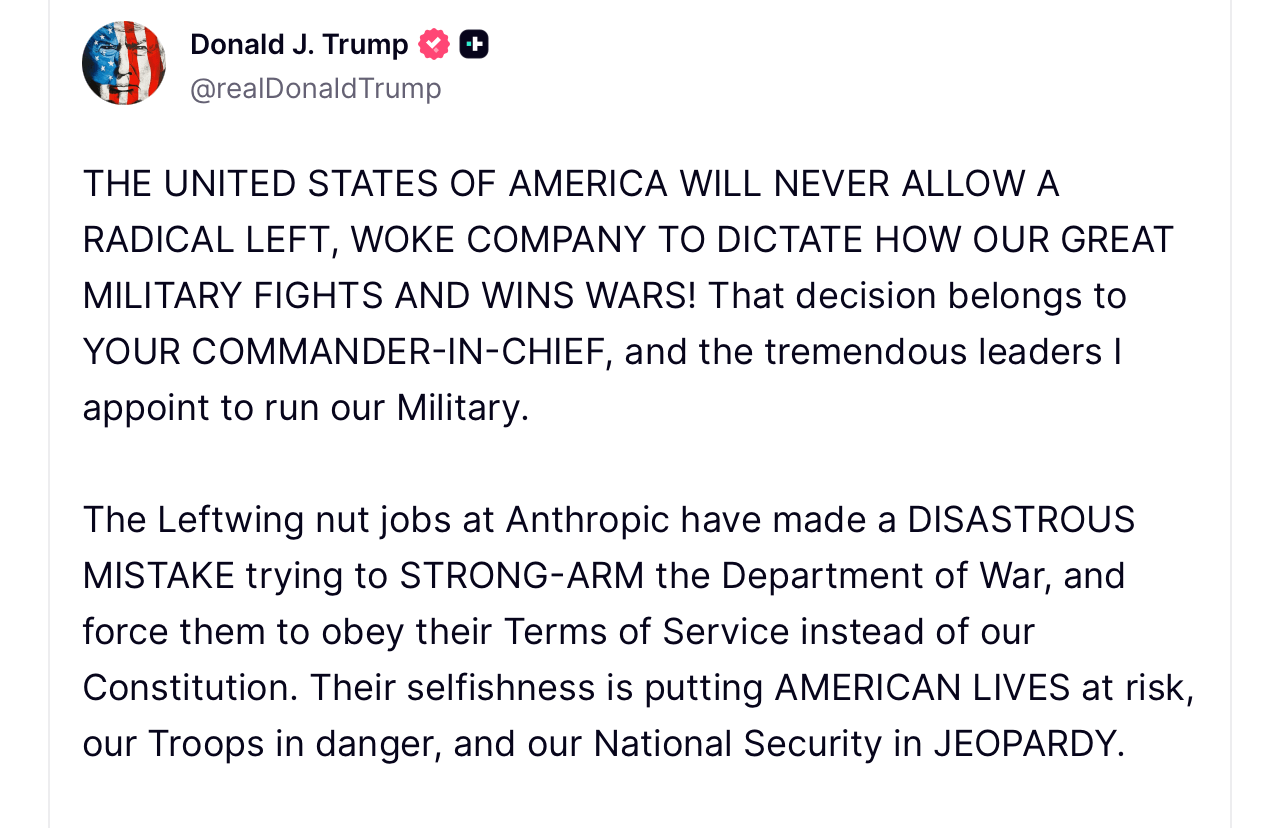

Negotiations collapsed following a Feb. 24 meeting between Anthropic CEO Dario Amodei and Defense Secretary Pete Hegseth. Days later, on Feb. 27, President Donald Trump posted on Truth Social directing federal agencies to "immediately cease" using Anthropic technology and calling continued reliance on the company a "disastrous mistake."

President Trump's Truth Social post on the White House's dispute with Anthropic.

Soon afterward, Hegseth moved to classify Anthropic as a supply chain risk under federal procurement authority outlined in 10 U.S.C. § 3252. The designation, confirmed in a letter sent to the company around March 4, restricts certain government contractors from using technology considered vulnerable to sabotage or foreign influence.

Anthropic argues the move stretches the statute far beyond its intended purpose. In court filings, the company contends the designation was meant for foreign-linked security threats rather than policy disagreements with domestic suppliers. The lawsuits claim the government failed to use the “least restrictive means” required by law and instead imposed what the company describes as an unofficial blacklist.

The legal filings also raise constitutional concerns. Anthropic argues the designation violates the First Amendment by punishing the company for publicly advocating limits on AI uses such as autonomous weapons and domestic surveillance. According to the complaint, labeling the firm a national security risk damages its reputation and could threaten hundreds of millions of dollars in contracts.

Anthropic is asking courts to block enforcement of the designation, order federal agencies to withdraw directives halting business with the company, and prevent similar actions in the future. The company said its goal is not to force the government to purchase its technology but to prevent retaliation over policy differences.

“Seeking judicial review does not change our longstanding commitment to harnessing AI to protect our national security,” an Anthropic spokesperson said in a statement. “But this is a necessary step to protect our business, our customers, and our partners.”

Pentagon officials have not publicly commented on the litigation, citing policy regarding active court cases. Some defense leaders have previously argued that military agencies must retain full operational authority over contractor technologies during emergencies and cannot allow vendors to dictate how systems are used.

The dispute arrives as the race to secure military AI contracts intensifies. Rival firms, including OpenAI, reached agreements with the Pentagon around the same time Anthropic's negotiations broke down. Meanwhile, major technology partners such as Google and Microsoft have indicated they intend to continue working with Anthropic on commercial services unrelated to defense.

Industry analysts say the outcome of the case could establish a precedent for how the federal government pressures AI companies to modify safety policies when national security interests are involved. For now, Anthropic’s consumer products and commercial AI services remain available, while the legal battle over the Pentagon designation begins working its way through the courts.

- Why did Anthropic sue the U.S. government?

Anthropic filed lawsuits claiming the Pentagon unlawfully labeled the company a national security supply chain risk after it refused to remove AI safety restrictions.

- What triggered the dispute between Anthropic and the Pentagon?

The conflict began during contract negotiations when the Defense Department demanded unrestricted use of Anthropic's Claude AI system.

- What does the "supply chain risk" designation mean?

The label can limit government contractors from using a company's technology if officials believe it poses security or procurement risks.

- Will Anthropic's AI services still operate during the lawsuit?

Yes, Anthropic's commercial AI products and consumer services remain available while the legal case proceeds.
