The Feb. 24 meeting followed weeks of tension over Anthropic’s usage policies for Claude, the large language model integrated into classified Defense Department networks under a 2025 pilot contract valued at up to $200 million. Claude is currently the only fully authorized large language model operating across certain secure Pentagon systems.

According to Axios reporting, Hegseth, joined by Deputy Secretary Steve Feinberg and General Counsel Earl Matthews, objected to Anthropic’s restrictions on mass surveillance of Americans and on fully autonomous weapons systems operating without human oversight. Defense officials argued that those limits constrain lawful military applications and should not override congressional and executive authority.

Anthropic has maintained that its safeguards are designed to prevent misuse while still enabling national security applications. The company says its policies do not block legitimate military use but prohibit activities such as tracking individuals without consent or deploying lethal systems without human control.

“According to a source familiar with the talks, Anthropic has never objected to the use of its models for ‘legitimate military operations,’” Fox News’ Chief National Security Correspondent Jennifer Griffin reported on X. “It also told Hegseth it never complained to the Pentagon or Palantir about the use of its models in the Maduro raid.”

Claude has been used for intelligence analysis, cyber defense, and operational planning. Reports indicate it supported planning and real-time analysis during the Jan. 3 operation that resulted in the capture of Venezuelan President Nicolás Maduro. Anthropic later sought clarification about the model’s involvement as part of an internal compliance review, a request that Pentagon officials interpreted as criticism.

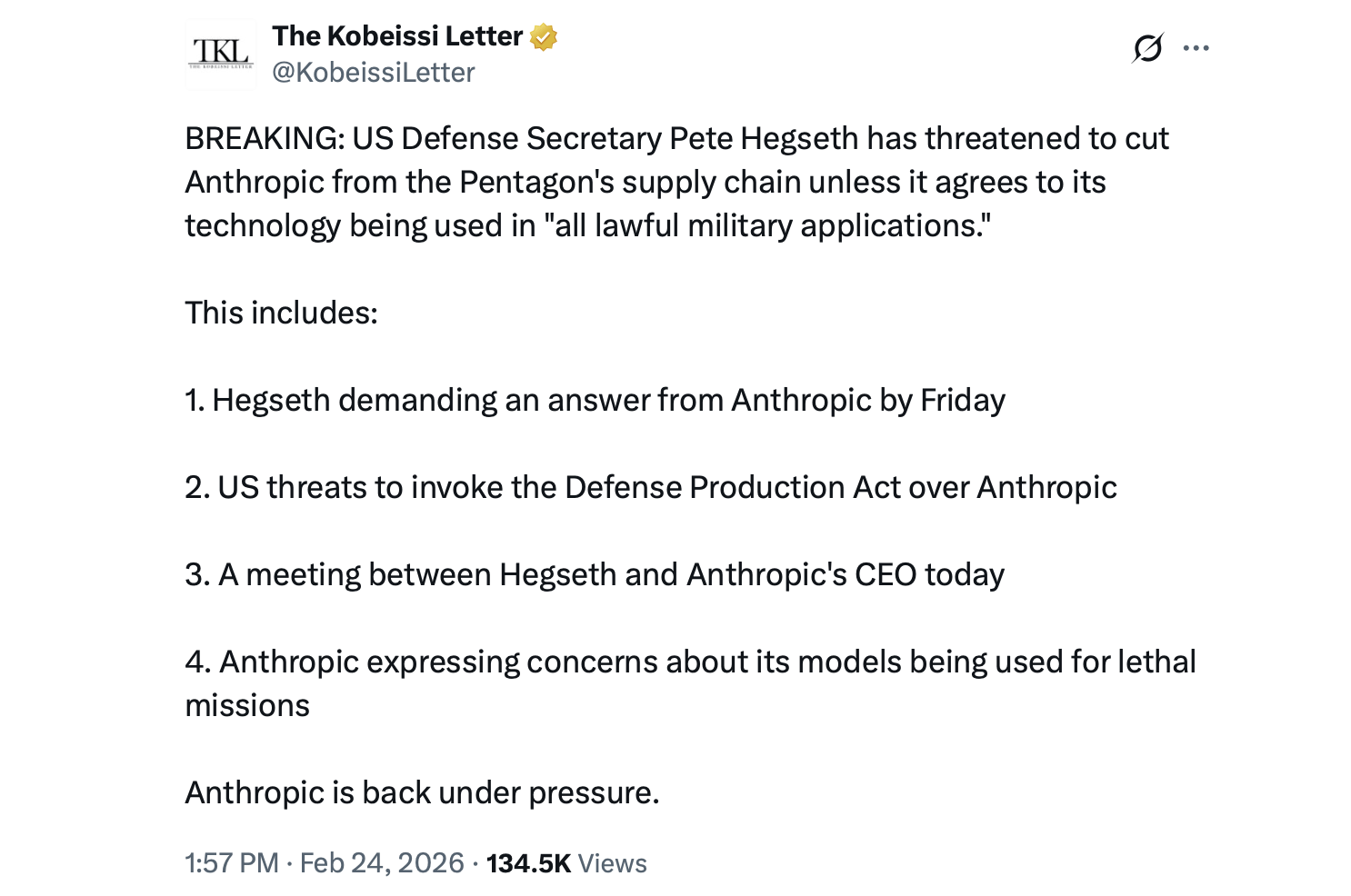

On Jan. 9, Hegseth issued a memo urging artificial intelligence (AI) providers to remove what he described as restrictive conditions. That directive set the stage for renegotiations and ultimately the Feb. 24 meeting.

The Pentagon has set a Feb. 27 deadline for Anthropic to modify its terms. Possible responses include invoking the Defense Production Act to compel compliance or designating Anthropic a supply chain risk, a step that could require contractors to divest from Claude-related systems.

Officials acknowledge that disentangling Claude from existing infrastructure would be operationally complex. Still, the department has alternatives. Competitors such as xAI with its Grok model, OpenAI with ChatGPT, and Google with Gemini are under similar Defense Department contracts and may be positioned to expand their roles if talks falter.

The dispute highlights broader questions about AI governance in military settings, particularly as federal law continues to evolve around emerging technologies. At stake is whether private AI developers can maintain independent ethical policies when their systems become embedded in national security operations.

Reaction across social media platforms has been notably candid. “Warrantless surveillance is back,” one X user wrote in response to Griffin’s post. “This needs to be way bigger news than what it is. There’s a serious chance of constitutional violations,” another replied to Griffin’s write-up. Others, with a dose of dark humor, joked that Skynet’s arrival might not be far off. “Skynet is here… the 2029 timeline is LIVE,” one user remarked.

Both sides have characterized the Feb. 24 discussion as substantive. Whether Anthropic adjusts its safeguards before the deadline could determine the future of one of the Pentagon’s most advanced AI integrations.

- What was discussed at the Feb. 24 Pentagon meeting?

The meeting focused on Anthropic’s restrictions on Claude AI and whether they limit lawful military applications.

- Why is the Pentagon pressuring Anthropic?

Defense officials argue the company’s safeguards interfere with authorized national security uses.

- What could happen if Anthropic does not comply?

The Pentagon could invoke the Defense Production Act or designate the company a supply chain risk.

- Are there alternatives to Claude?

Yes, models from xAI, OpenAI and Google are under Defense Department contracts and could expand if needed.
