Author: Sun Pengyue Editor: Da Feng

Source: Zinc Finance

Image source: Generated by Wujie AI

On August 8th, the Association for Computing Machinery's SIGGRAPH conference, billed as the world's most important roundtable in the computer industry, officially opened.

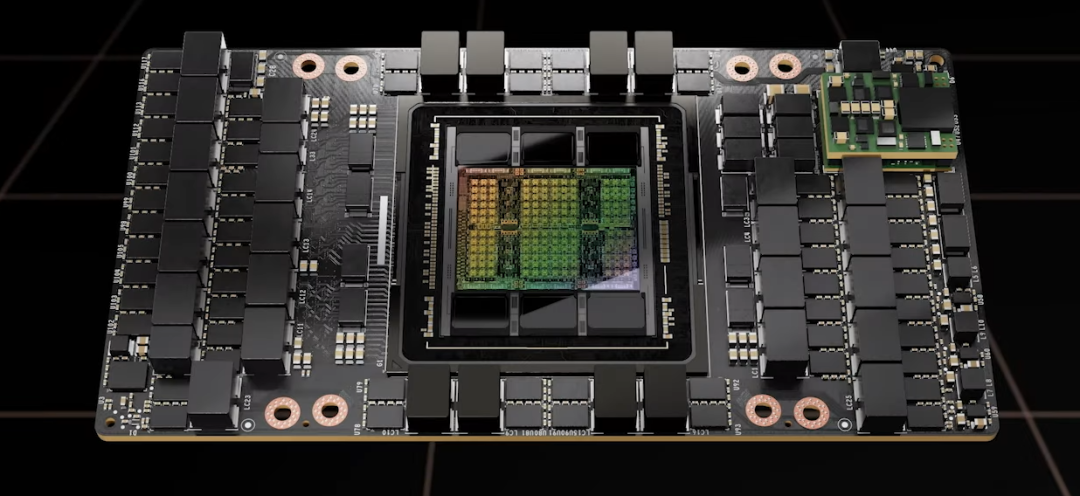

NVIDIA founder and CEO Jensen Huang (Huang Renxun) attended and unveiled NVIDIA's new-generation AI superchip, the GH200. Huang is very confident in the new flagship, describing the GH200's memory as "the fastest memory in the world."

In today's AI market, NVIDIA is regarded as the "center of the entire AI world." Whether at OpenAI, Google, Meta, Baidu, Tencent, or Alibaba, generative AI relies heavily on NVIDIA's AI chips for training.

Moreover, according to media reports, total demand for NVIDIA's H100 AI chip in August 2023 may be around 432,000 units, while the price of a single H100 on eBay has soared to $45,000, equivalent to over 300,000 RMB.

With a shortage of over 400,000 chips and a unit price of $45,000, the total comes to roughly $19 billion.
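As a quick back-of-the-envelope check, the two reported figures can simply be multiplied. The sketch below assumes, purely for illustration, that every unit of the reported demand would sell at the quoted eBay price; the function and constant names are hypothetical.

```python
# Figures as reported in the article. Treating the eBay listing price as a
# uniform unit price is a simplifying assumption, not a market estimate.
H100_DEMAND_UNITS = 432_000
H100_EBAY_PRICE_USD = 45_000


def total_market_value(units: int, unit_price_usd: int) -> int:
    """Return the total value in USD of `units` chips at `unit_price_usd` each."""
    return units * unit_price_usd


total_usd = total_market_value(H100_DEMAND_UNITS, H100_EBAY_PRICE_USD)
print(f"${total_usd:,} (about ${total_usd / 1e9:.1f} billion)")
```

Under these assumptions the total lands in the tens of billions of dollars.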

NVIDIA is riding a market frenzy even crazier than the "mining era."

AI chips are hard to come by

The so-called AI chip is actually a graphics processing unit (GPU), whose main function is to assist in running the countless calculations involved in training and deploying artificial intelligence algorithms.

In other words, the various intelligent behaviors of generative AI all come from the stacking of numerous GPUs. The more chips used, the smarter the generative AI becomes.

OpenAI has kept the training details of GPT-4 confidential, but media speculation suggests that GPT-4 required at least 8,192 H100 chips; at a price of $2 per chip per hour, completing pre-training in about 55 days would cost approximately $21.5 million (150 million RMB).
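The speculated cost can be reproduced from the three quoted inputs. This is only a sketch: it assumes the $2 figure is a rental price per GPU per hour and that all GPUs run around the clock for the full 55 days, neither of which the source states explicitly. The names used are hypothetical.

```python
# Inputs taken from the media speculation quoted in the article.
GPU_COUNT = 8_192            # speculated number of H100 chips
TRAINING_DAYS = 55           # speculated pre-training duration
USD_PER_GPU_HOUR = 2         # assumed to be a per-GPU hourly rental price


def pretraining_cost_usd(gpus: int, days: int, usd_per_gpu_hour: int) -> int:
    """Cost of running `gpus` GPUs continuously for `days` days."""
    gpu_hours = gpus * days * 24
    return gpu_hours * usd_per_gpu_hour


cost = pretraining_cost_usd(GPU_COUNT, TRAINING_DAYS, USD_PER_GPU_HOUR)
print(f"Estimated pre-training cost: ${cost:,}")
```

The result is close to the roughly $21.5 million quoted, which suggests the estimate was derived in this way.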

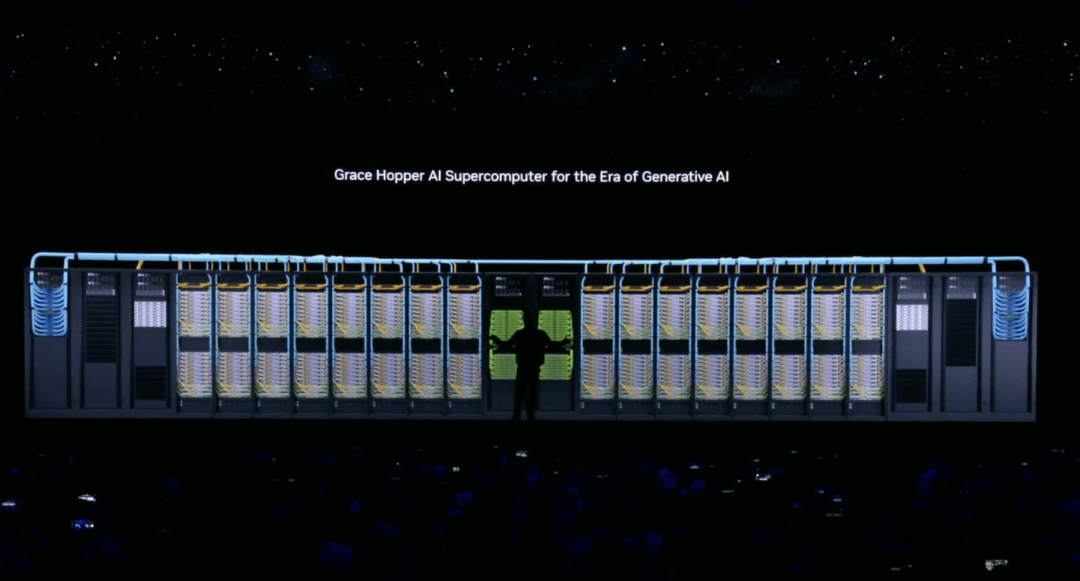

According to a Microsoft executive, the AI supercomputer behind ChatGPT, which Microsoft built in 2019 with a $1 billion investment, is equipped with tens of thousands of NVIDIA A100 GPUs; across more than 60 data centers, Microsoft has deployed hundreds of thousands of NVIDIA GPUs in total.

The number of AI chips ChatGPT needs is not fixed but keeps growing. The smarter ChatGPT becomes, the more computing power it requires. According to Morgan Stanley's prediction, GPT-5 will likely need about 25,000 GPUs, roughly three times as many as GPT-4.
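The "three times" comparison follows from the two GPU counts mentioned in this section. A minimal sketch, assuming the speculated 8,192-chip figure for GPT-4 is the baseline being compared against:

```python
# Both counts are estimates quoted in the article, not confirmed numbers.
GPT4_GPUS = 8_192    # media speculation for GPT-4
GPT5_GPUS = 25_000   # Morgan Stanley's prediction for GPT-5

ratio = GPT5_GPUS / GPT4_GPUS
print(f"GPT-5 / GPT-4 GPU ratio: {ratio:.2f}x")
```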

Meeting the demand from AI products at companies such as OpenAI and Google effectively makes NVIDIA the sole chip supplier for AI products worldwide, which is a huge test of its production capacity.

Although NVIDIA is ramping up production of AI chips, media reports say the large-scale H100 cluster capacity of cloud providers large and small is about to run out, and the "severe shortage of H100" is expected to continue at least until the end of 2024.

Currently, NVIDIA's chips in the AI market are mainly divided into two types: H100 and A100. H100 is the flagship product. In terms of technical details, H100 is about 3.5 times faster in 16-bit inference speed and about 2.3 times faster in 16-bit training speed compared to A100.

Both the H100 and the A100 are manufactured by TSMC, whose capacity limits H100 output. Some media have reported that each H100 takes about six months from production to delivery, a very slow pace.

NVIDIA has stated that in the second half of 2023, they will increase their supply capacity for AI chips, but they have not provided any quantitative information.

Many companies and buyers are calling on NVIDIA to expand foundry output by placing more orders with Samsung and Intel rather than working only with TSMC.

Faster training speed

If it is not possible to increase production capacity, the best solution is to launch higher-performance chips to win with quality.

As a result, NVIDIA has frequently released new GPUs to enhance AI training capabilities. In March of this year, NVIDIA released four AI chips: H100 NVL GPU, L4 Tensor Core GPU, L40 GPU, and NVIDIA Grace Hopper, to meet the increasing computational power needs of generative AI.

On August 8th at SIGGRAPH, before the previous generation had even been mass-produced and shipped, NVIDIA CEO Jensen Huang announced the GH200, an upgraded version of the H100.

It is understood that the all-new GH200 Grace Hopper Superchip is based on the 72-core Grace CPU, equipped with 480GB ECC LPDDR5X memory and GH100 computing GPU, paired with 141GB HBM3E memory, using six 24GB stacks and a 6144-bit memory interface.

The GH200's headline feature is that it is the world's first chip equipped with HBM3e memory, which increases its local GPU memory by 50%. This upgrade targets the artificial intelligence market specifically, since top generative AI models are very large while GPU memory capacity is limited.

Publicly available information shows that HBM3e is SK Hynix's fifth-generation high-bandwidth memory, a new technology that delivers higher data transfer rates in a smaller space. On the GH200 it provides 141GB of capacity and 5TB per second of bandwidth, 1.7 times the capacity and 1.55 times the bandwidth of the H100.

Since HBM3e's release in July, SK Hynix has become the darling of the GPU market, ahead of directly competing products such as Intel's Optane DC and Samsung's Z-NAND flash memory chips.

It is worth mentioning that SK Hynix has long been one of NVIDIA's partners. Starting with HBM3 memory, most of NVIDIA's products have used SK Hynix's memory. However, SK Hynix's production capacity for the memory that AI chips need has always been a concern, and NVIDIA has repeatedly asked SK Hynix to increase it.

When one production-constrained giant meets another production-constrained giant, it is inevitable to worry about the production capacity of GH200.

NVIDIA officially stated that, compared with the current-generation H100, the GH200 in its dual-chip configuration offers 3.5 times the memory capacity and 3 times the bandwidth. In addition, HBM3e memory will let the GH200 run AI models 3.5 times faster than current models.

Does running AI models 3.5 times faster than H100 mean that one GH200 is equivalent to 3.5 H100s? Everything still needs practical operation to find out.

But for now, one thing that can be confirmed is that as one of the biggest winners in the AI market, NVIDIA has further consolidated its leading position in chip design and market share, widening the gap with AMD and Intel.

NVIDIA's competitors

Faced with a shortage of 430,000 AI chips, no company is indifferent. NVIDIA's biggest competitors in particular, AMD and Intel, will not let a single company dominate the entire market.

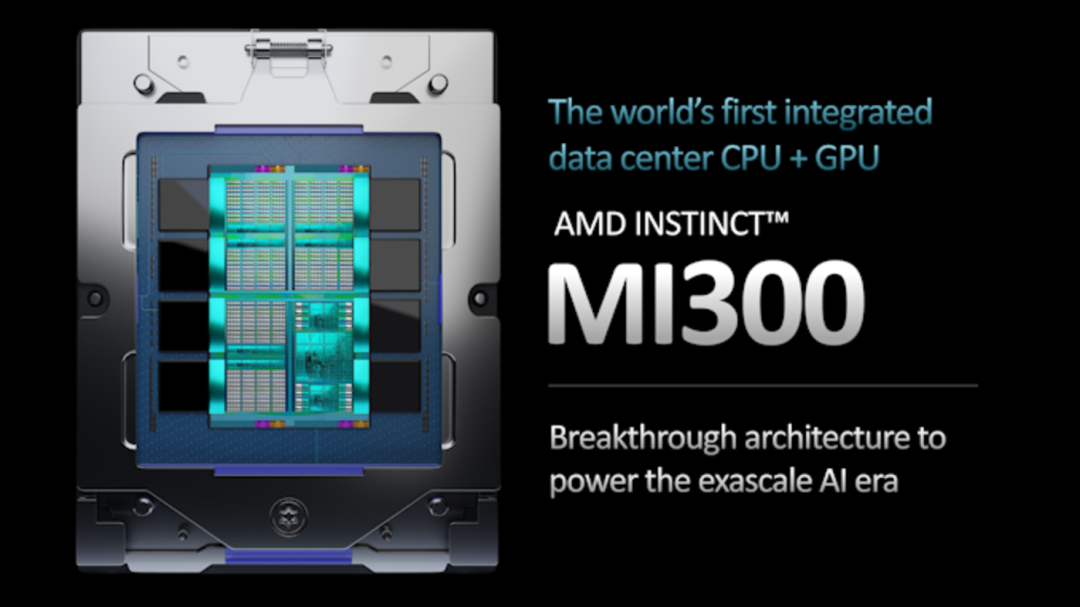

On June 14th of this year, AMD Chair and CEO Lisa Su (Su Zifeng) unveiled a series of new AI hardware and software products, including the MI300X, an AI chip designed for large language models, officially launching a direct challenge to NVIDIA in the AI market.

In terms of hardware, the AMD MI300X packs 12 chiplets with a total of 153 billion transistors and is equipped with 192GB of HBM3 memory. Its HBM density is 2.4 times that of NVIDIA's H100, and its bandwidth is 1.6 times that of the H100, meaning it can speed up generative AI processing.

Unfortunately, this flagship AI chip is not currently available and is expected to be fully mass-produced in Q4 2023.

On the other hand, another competitor, Intel, acquired the AI chip manufacturer Habana Labs for about $2 billion in 2019, entering the AI chip market.

In August of this year, on Intel's latest earnings call, CEO Pat Gelsinger said that Intel is developing its next-generation Falcon Shores AI supercomputing chip, tentatively named Falcon Shores 2 and expected to be released in 2026.

In addition to Falcon Shores 2, Intel has also launched the AI chip Gaudi2, which has already started sales, and Gaudi3 is under development.

Unfortunately, the specifications of the Gaudi2 chip are not high, making it difficult to challenge NVIDIA's H100 and A100.

In addition to the foreign semiconductor giants showing off their muscles and starting the "chip competition," domestic semiconductor companies have also begun to develop AI chips.

Among them, Kunlunxin's RG800 AI accelerator card, Iluvatar CoreX's (Tianshu Zhixin) Tiangai 100 accelerator card, and Enflame's (Suiyuan Technology) second-generation training products, the CloudBlazer (Yunsui) T20/T21, all claim to support large-model training.

In this battle of chips based on computing power and AI large models, NVIDIA, as one of the biggest winners in the AI market, has demonstrated its strength in chip design and market share.

Although domestic AI chips are slightly behind, the pace of research and market expansion has never stopped, and the future is worth looking forward to.
