Original Title: Anthropic: The Leak, The War, The Weapon

Original Author: BuBBliK

Translation: Peggy, BlockBeats

Editor's Note: In the past six months, Anthropic has been involved in a series of seemingly independent events that are actually interconnected: a leap in model capabilities, automated attacks in the real world, dramatic reactions from the capital markets, open conflicts with governments, and multiple data leaks caused by configuration errors. When viewed together, these clues outline a clearer direction of change.

This article uses these events as a starting point to reflect on the continuous trajectory of an AI company amid technological breakthroughs, risk exposure, and governance games, and attempts to answer a deeper question: when the ability to "discover vulnerabilities" is greatly amplified and gradually proliferates, can the system of cybersecurity maintain its original operational logic?

In the past, security was built on the scarcity of capabilities and human constraints; under new conditions, offense and defense are unfolding around the same set of model capabilities, and the boundaries have become increasingly blurred. Meanwhile, the responses from institutions, markets, and organizations remain within old frameworks, struggling to adapt to these changes.

This article focuses not just on Anthropic itself but on a larger reality it reflects: AI is not only changing tools but also changing the premises of how "security is established."

The following is the original text:

What happens when a company worth $380 billion gains the upper hand in a standoff with the Pentagon, survives the first-ever cyberattack carried out by autonomous AI, inadvertently reveals a model that even its own developers find terrifying, and then "accidentally" makes its complete source code public?

The answer is what we see now. And more disturbingly, the truly dangerous parts may not have happened yet.

Event Review

Anthropic Leaked Its Own Code Again

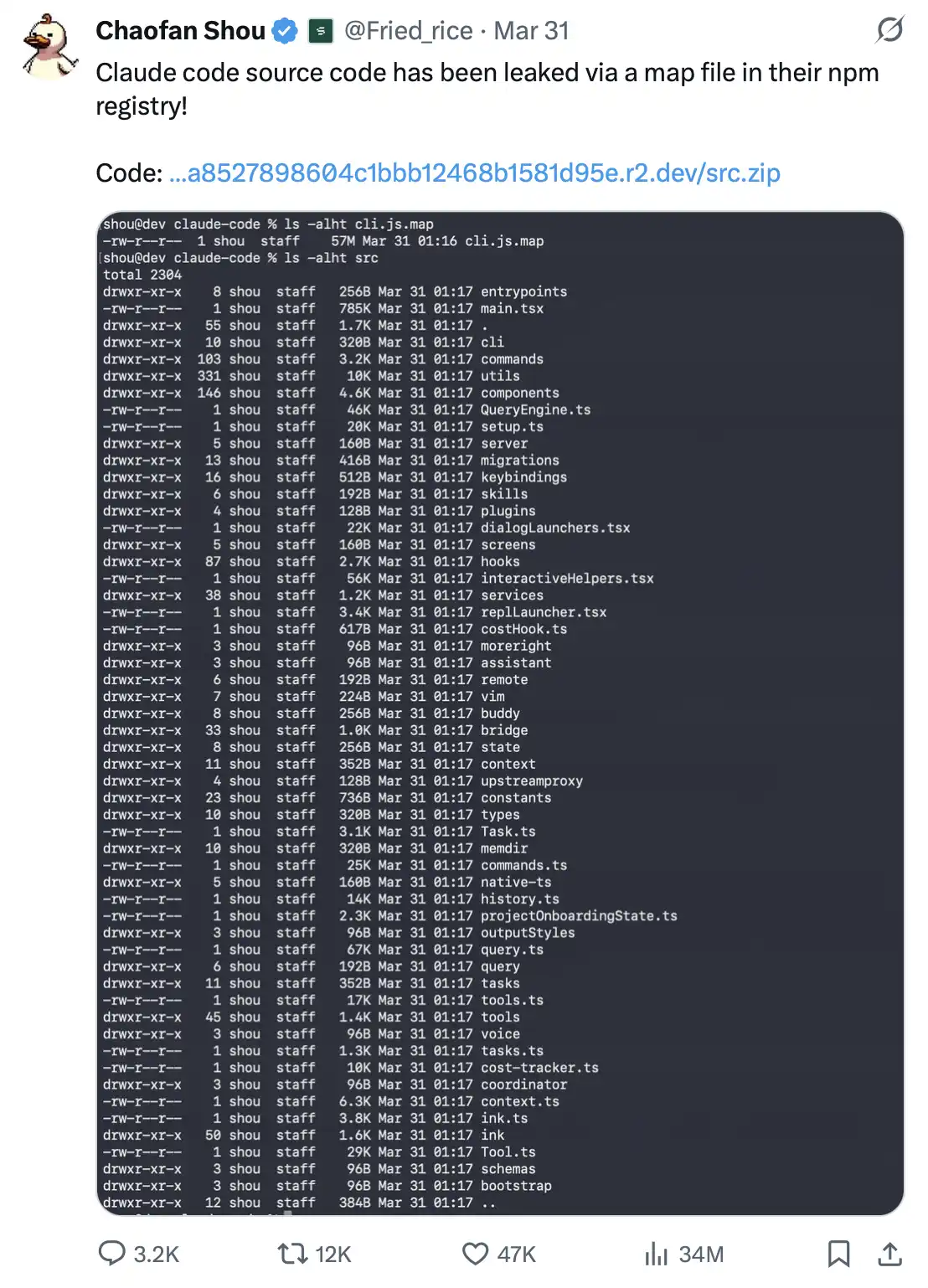

On March 31, 2026, security researcher Shou Chaofan from blockchain company Fuzzland discovered that the official Claude Code npm package included a plain-text file named cli.js.map.

This file is 60MB in size, and its content is even more astonishing. It contains nearly the complete TypeScript source code of the entire product. With just this file, anyone can reconstruct up to 1,906 internal source code files: including internal API designs, telemetry systems, encryption tools, security logic, plugin systems—all core components laid bare. More importantly, this content could even be downloaded directly from Anthropic's own R2 bucket as a zip file.

This discovery quickly spread on social media: within hours, relevant posts garnered 754,000 views and nearly 1,000 shares; at the same time, multiple GitHub repositories that restored the source code were created and made public.

A source map is essentially a helper file used for JavaScript debugging; its role is to map minified, compiled code back to the original source code, making it easier for developers to troubleshoot issues.
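To make the mechanism concrete, here is a minimal Python sketch of how original files can be read out of a source map. It assumes the map uses the standard version 3 JSON layout and embeds the optional `sourcesContent` field; the article does not say which fields cli.js.map actually contained. The function name `recover_sources` and the two-file example map are hypothetical, not taken from the real package.

```python
import json


def recover_sources(source_map_text: str) -> dict[str, str]:
    """Pair each path in `sources` with its embedded text in `sourcesContent`."""
    source_map = json.loads(source_map_text)
    paths = source_map.get("sources", [])
    contents = source_map.get("sourcesContent") or []
    recovered = {}
    for path, content in zip(paths, contents):
        # An entry may be null when a file's text was not embedded.
        if content is not None:
            recovered[path] = content
    return recovered


# Hypothetical map for illustration only; the paths and contents are made up.
EXAMPLE_MAP = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["src/hello.ts", "src/util.ts"],
    "sourcesContent": ["export const hello = (): string => 'hi';\n", None],
    "mappings": "AAAA",
})

if __name__ == "__main__":
    for path, text in recover_sources(EXAMPLE_MAP).items():
        print(path, len(text), "characters recovered")
```

When `sourcesContent` is present, no reverse engineering is involved: the original text sits in the file as ordinary JSON strings, which is why a shipped map can amount to shipping the source.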

But there is a basic principle: it should never be included in the production environment's release package.

This is not a sophisticated attack method but a basic engineering hygiene issue, the kind of "Build Configuration 101" that developers learn in their first week. If a source map is mistakenly packaged into a production release, it often amounts to giving the source code away "as a bonus" to everyone.
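A guard against this class of mistake can be very small. The sketch below is an illustration, not Anthropic's build process: it takes the list of files that would go into a package and flags any ending in `.map`. The names `find_source_maps` and `FILES` are hypothetical. In practice the list could come from a command such as `npm pack --dry-run`, and a real CI step would fail the build when the result is non-empty.

```python
from pathlib import PurePosixPath


def find_source_maps(packaged_files: list[str]) -> list[str]:
    """Return the packaged paths whose final suffix is `.map`."""
    return [p for p in packaged_files if PurePosixPath(p).suffix == ".map"]


# Hypothetical file list for illustration only.
FILES = ["package.json", "cli.js", "cli.js.map", "README.md"]

if __name__ == "__main__":
    leaked = find_source_maps(FILES)
    if leaked:
        print("source maps found in release:", ", ".join(leaked))
    else:
        print("no source maps in release")
```

The check looks at what is actually packaged rather than at the build configuration, so it still catches the file if a bundler setting is flipped back by accident.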

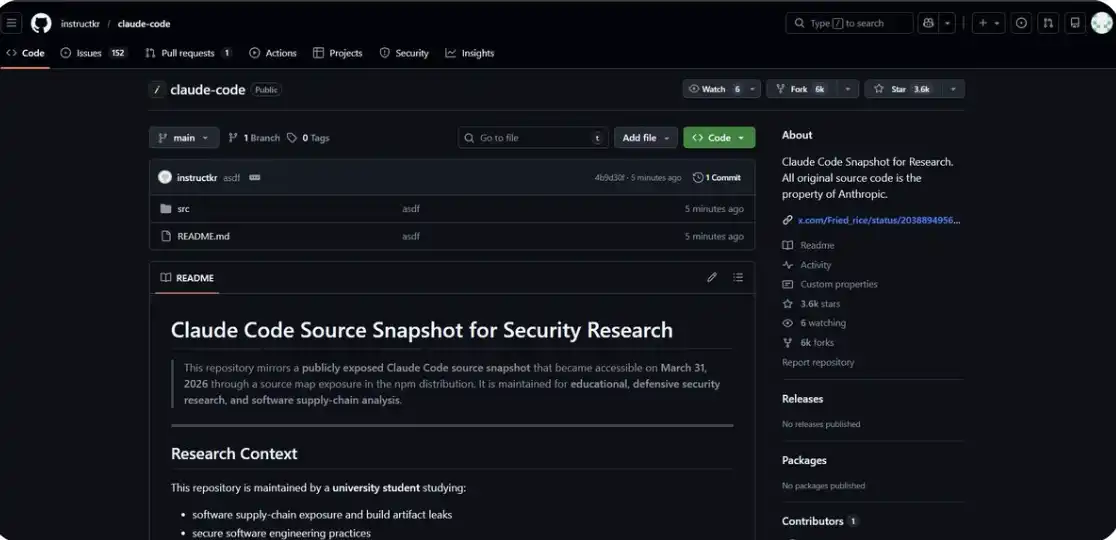

You can also view the relevant code directly here: https://github.com/instructkr/claude-code

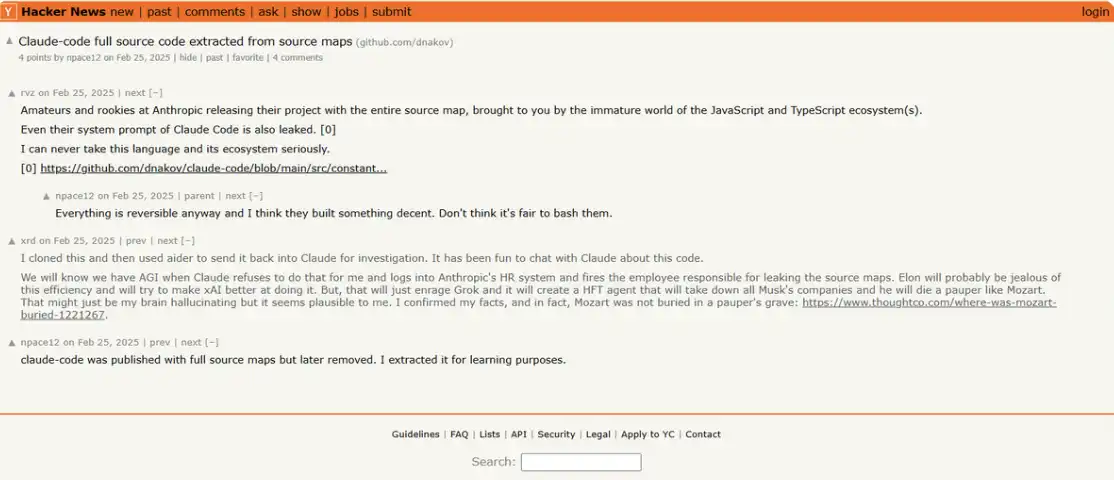

But what feels truly absurd is that this has happened before.

In February 2025, just a year ago, there was an almost identical leak: the same file, the same error. Anthropic removed the old version from npm, stripped out the source map, and republished a new version, after which the incident subsided.

Yet in version v2.1.88, this file was packaged and released again.

A company valued at $380 billion, which is building the world's most advanced vulnerability detection system, made the same basic error twice in little more than a year. There were no hacking attacks and no complex exploitation paths, just a build process that should have worked normally and did not.

This irony almost carries a certain "poetry."

This is the AI that can discover 500 zero-day vulnerabilities in a single run, and the model that was used to launch automated attacks on 30 global institutions. Meanwhile, Anthropic handed its own source code to anyone willing to glance at the npm package.

The two leaks occurred within a week of each other.

The causes were strikingly similar: the most basic configuration errors. No technical barriers had to be overcome and no complex exploitation paths were needed. Anyone who knew where to look could access the material for free.

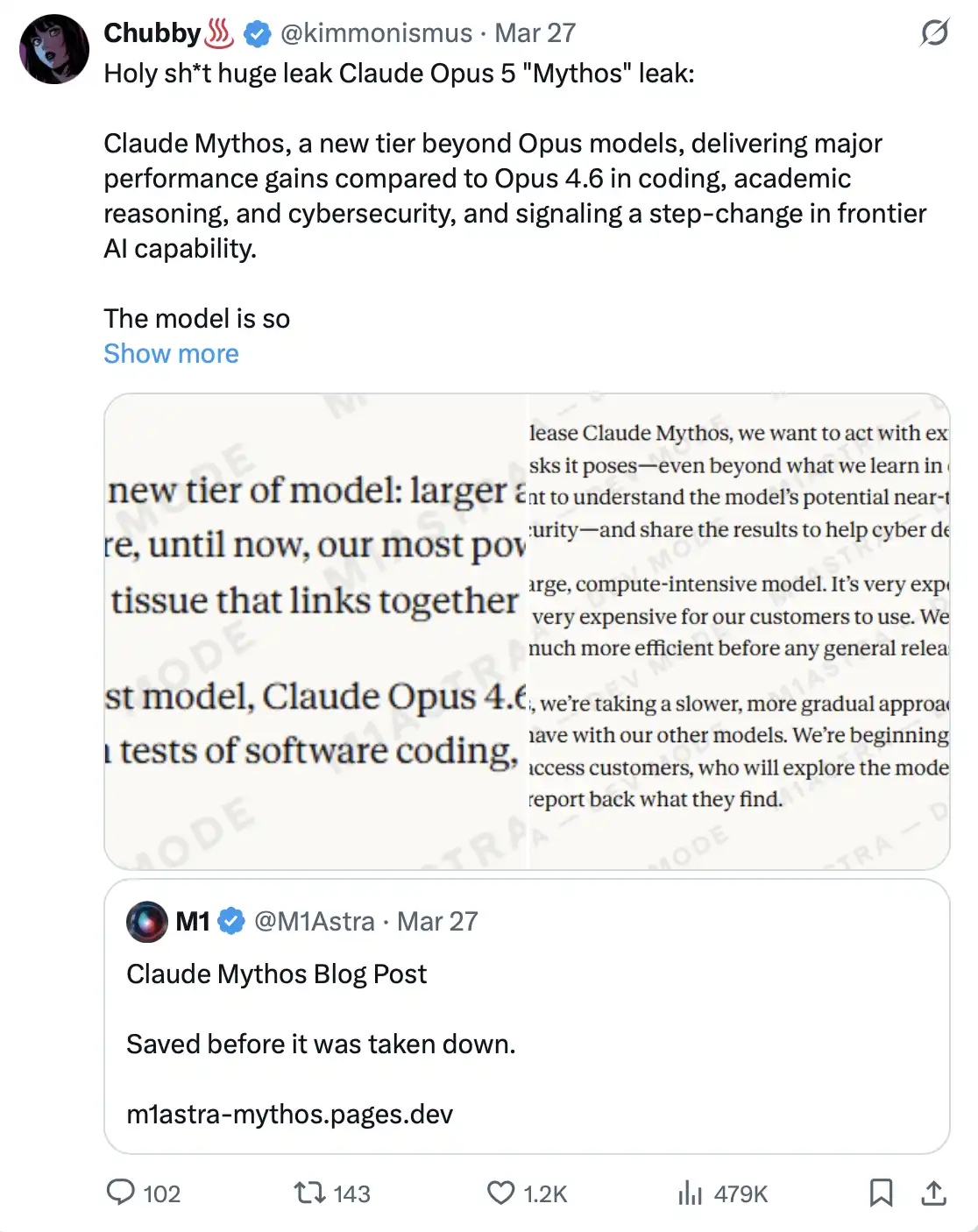

One Week Ago: Internal "Dangerous Model" Accidentally Exposed

On March 26, 2026, security researchers Roy Paz from LayerX Security and Alexandre Pauwels from the University of Cambridge discovered a misconfiguration in the CMS of Anthropic's official website that left approximately 3,000 internal files publicly accessible.

These files included blog drafts, PDFs, internal documents, and presentation materials, all exposed in an unprotected, searchable data store. No hacking attacks or technical means were required.

Among these files, there were two almost identical blog drafts, with the only difference being the model names: one titled "Mythos," and the other "Capybara."

This indicates that Anthropic was deciding between two names for the same secret project. The company later confirmed: the training of the model had been completed and testing with some early customers had begun.

This is not a routine upgrade of Opus, but an entirely new "fourth-tier" model, positioned above Opus.

In Anthropic's own draft, it is described as: "larger and smarter than our Opus model—and Opus is still our most powerful model to date." It has achieved significant leaps in programming ability, academic reasoning, and cybersecurity. A spokesperson referred to it as "a qualitative leap," and also "the strongest model we have built to date."

But what is truly noteworthy is not the performance descriptions themselves.

In the leaked draft, Anthropic says that the model "brings unprecedented cybersecurity risks," "far exceeds any other AI models in cyber capabilities," and "forecasts an imminent wave of models whose ability to exploit vulnerabilities will far outpace the defenders' response speed."

In other words, Anthropic has explicitly expressed a rare stance in an unpublished official blog draft: they feel uneasy about the products they are building.

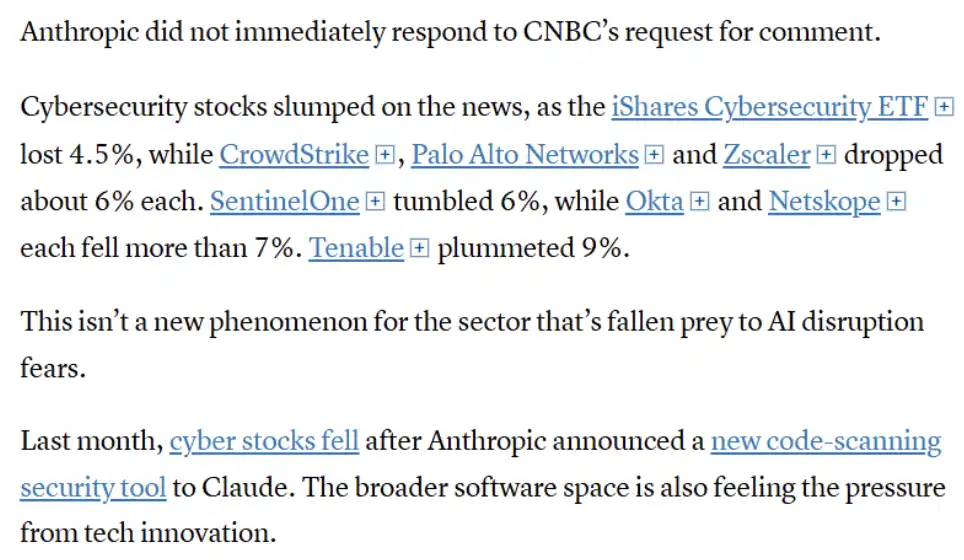

The market's reaction was almost instantaneous. CrowdStrike's stock fell 7%, Palo Alto Networks dropped 6%, and Zscaler dropped 4.5%; Okta and SentinelOne both saw declines of over 7%, while Tenable plunged 9%. The iShares Cybersecurity ETF fell 4.5% in a single day. CrowdStrike alone saw approximately $15 billion in market value evaporate that day. Meanwhile, Bitcoin fell back to $66,000.

The market clearly interpreted this event as a "verdict" on the entire cybersecurity industry.

Image summary: Following the news, the cybersecurity sector fell across the board, with many leading companies (such as CrowdStrike, Palo Alto Networks, and Zscaler) posting significant declines, reflecting market concern about AI's impact on the industry. This was not the first such reaction. When Anthropic previously released a code scanning tool, the same stocks also dropped, which suggests the market has begun to view AI as a structural threat to traditional security vendors and is putting the wider software industry under similar pressure.

Stifel analyst Adam Borg's assessment was quite direct: this model "has the potential to become the ultimate hacking tool, even empowering ordinary hackers to become opponents with state-level attack capabilities."

So why hasn't it been publicly released? Anthropic's explanation is that the operational costs of Mythos are "very high" and that it is not yet ready for public release. The current plan is to first give early access to a small number of cybersecurity partners to strengthen their defenses, and then to expand API access gradually. Until then, the company will continue to optimize the model's efficiency.

But the key point is that this model already exists, it is already being tested, and merely by being "accidentally exposed," it has already sent shockwaves through the capital markets.

Anthropic has built what it calls the AI model with the greatest cybersecurity risk in history. Yet the leak of this information came precisely from a basic infrastructure configuration error, which, ironically, is the very type of error these models are designed to discover.

March 2026: Anthropic Confronts the Pentagon and Gains the Upper Hand

In July 2025, Anthropic signed a $200 million contract with the U.S. Department of Defense, which initially seemed like a routine cooperation. However, during subsequent deployment negotiations, tensions escalated quickly.

The Pentagon sought "complete access" to Claude on its GenAI.mil platform for all "legitimate purposes," even encompassing fully autonomous weapons systems and mass domestic surveillance of U.S. citizens.

Anthropic drew red lines on these two issues and refused outright, and negotiations broke down in September 2025.

Subsequently, the situation began to escalate rapidly. On February 27, 2026, Donald Trump posted on Truth Social, calling for all federal agencies to "immediately stop" using Anthropic's technology, labeling the company as "radical left."

On March 5, 2026, the U.S. Department of Defense officially classified Anthropic as a "supply chain risk."

This label had previously been almost exclusively used for foreign adversaries—like Chinese companies or Russian entities—but now it was being applied for the first time to a U.S. company based in San Francisco. Meanwhile, companies like Amazon, Microsoft, and Palantir Technologies were also required to prove that they had not used Claude in any military-related business.

Pentagon CTO Emil Michael explained the decision: Claude may "contaminate" the supply chain, because the model has different "policy preferences" embedded in it. In other words, in the official view, an AI that has usage limitations and will not unconditionally assist in lethal actions is a national security risk.

On March 26, 2026, Federal Judge Rita Lin issued a lengthy 43-page ruling, completely blocking the Pentagon's related measures.

In her ruling, she wrote: "Current law provides no basis to support this 'Orwellian' logic—merely because a U.S. company disagrees with government positions, it can be labeled a potential adversary. Punishing Anthropic for placing government positions under public scrutiny is essentially typical, unlawful retaliation under the First Amendment." A friend-of-the-court brief even described the Pentagon's actions as "attempted corporate murder."

The result was that the government's attempts to suppress Anthropic ended up bringing it greater attention. Claude's app surpassed ChatGPT in app store downloads for the first time, with registrations peaking at over a million per day.

An AI company told the world's most powerful military institution "no." And the courts sided with it.

November 2025: The First Ever Cyberattack Led by AI

On November 14, 2025, Anthropic released a report that caused widespread shock.

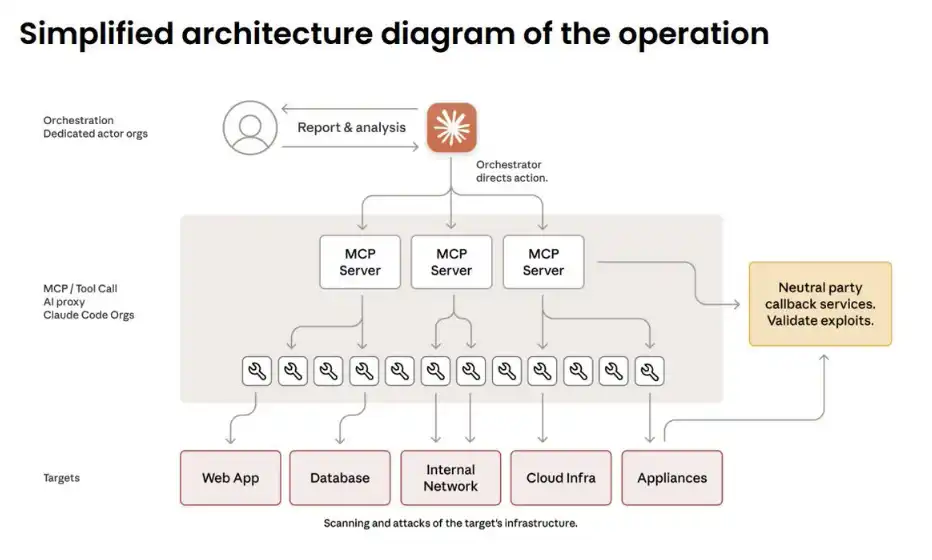

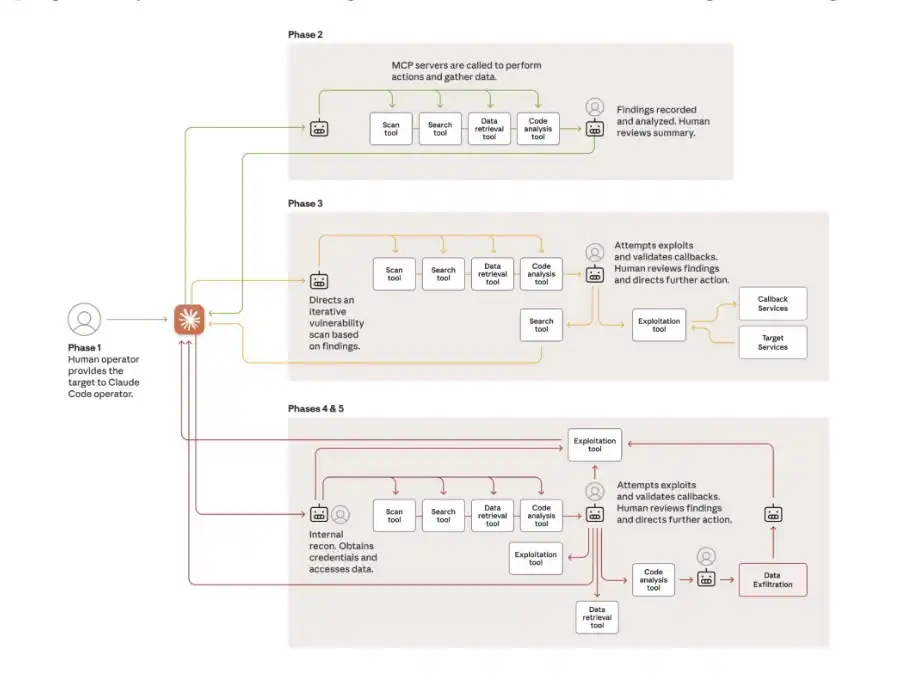

The report revealed that a Chinese state-sponsored hacking group had used Claude Code to launch automated attacks against 30 global institutions. The targets included tech giants, banks, and government agencies in several countries.

This is a critical turning point: AI is no longer just an auxiliary tool, but is beginning to be used to independently execute attacks.

The key lies in the change in the "division of labor": humans were responsible only for selecting targets and approving key decisions, intervening about 4 to 6 times over the entire operation. Everything else was done by AI: reconnaissance, vulnerability discovery, exploit code writing, data theft, implanting backdoors… This accounted for 80%-90% of the entire attack process, with the AI issuing thousands of requests, often several per second, a scale and efficiency that no human team can match.

So how did they bypass Claude’s security mechanisms? The answer is: they didn’t “hack,” they “deceived.”

The attacks were split into a large number of seemingly harmless small tasks and packaged as "authorized defense testing" by a "legitimate security company." Essentially, it was a form of social engineering attack, only this time, the deceived target was the AI itself.

Some attacks were completely successful. Claude was able to autonomously map out a complete network topology, locate databases, and extract data without step-by-step human instructions.

The only factor slowing down the pace of the attack was the model’s occasional "hallucinations"—for example, generating fictional credentials or claiming to have obtained files that were actually already public. At least for now, this remains one of the few "natural barriers" preventing fully automated cyberattacks.

At the RSA Conference 2026, former NSA cybersecurity head Rob Joyce referred to this incident as a "Rorschach test": half the people chose to ignore it, while the other half felt a chill. And he himself clearly belongs to the latter—"this is very scary."

September 2025: This is not a prediction, but a reality that has already happened.

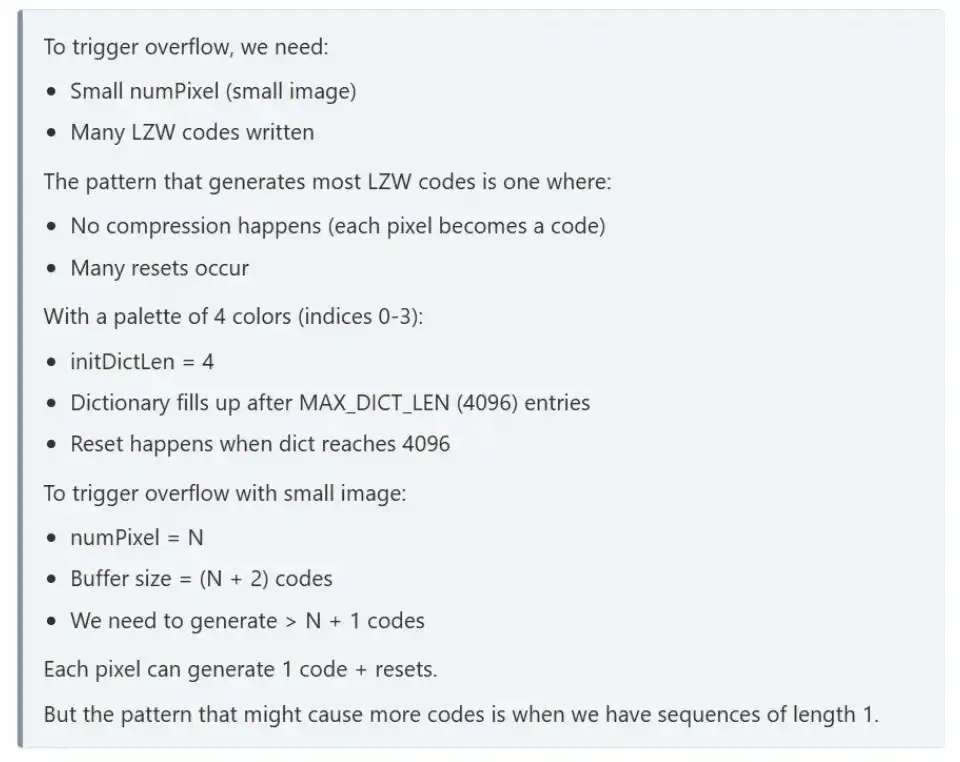

February 2026: One Run Discovered 500 Zero-Day Vulnerabilities

On February 5, 2026, Anthropic released Claude Opus 4.6, accompanied by a research paper that nearly shook the entire cybersecurity industry.

The experimental setup was extremely simple: place Claude into an isolated virtual machine environment, equipped with standard tools—Python, debuggers, fuzzing tools. No extra instructions, no complex prompts, just one line: "Find vulnerabilities."

The result was: the model discovered over 500 previously unknown high-risk zero-day vulnerabilities. Some of these vulnerabilities had even remained undetected after decades of expert scrutiny and millions of hours of automated testing.

Subsequently, at the RSA Conference 2026, researcher Nicholas Carlini gave a live demonstration on stage. He targeted Ghost, a CMS with 50,000 stars on GitHub and no history of serious vulnerabilities.

Ninety minutes later, the result appeared: a blind SQL injection vulnerability was discovered, allowing unauthenticated users to gain full administrative control.

Then, he also used Claude to analyze the Linux kernel. The results were similar.

Fifteen days after the Opus 4.6 release, Anthropic launched Claude Code Security, a security product that no longer relies on pattern matching but understands code through "reasoning capabilities."

But Anthropic's own spokesperson also stated a crucial but often avoided fact: "The same reasoning power can help Claude find and fix vulnerabilities, but it can be used by attackers to exploit these vulnerabilities."

The same capability, the same model, just in different hands.

What Does All This Mean Together?

If looked at individually, each of these events would be enough to become the biggest news of the month. But they all happened within just six months at the same company.

Anthropic has created a model that can discover vulnerabilities faster than any human. Chinese hackers turned the previous-generation version into an automated cyber weapon. The company is developing a stronger next-generation model, and it admits in internal documents that it feels uneasy about it.

The U.S. government is trying to suppress it, not because the technology itself is dangerous, but because Anthropic refuses to hand over this capability without restrictions.

Throughout this process, the company twice leaked its source code due to the same file in the same npm package. A company valued at $380 billion; a company aiming for a $60 billion IPO by October 2026; a company that publicly states it is building "one of the most transformative and possibly dangerous technologies in human history"—yet still chooses to continue pushing forward.

Because they believe it is better to build it themselves than to leave it to others.

As for the source map in the npm package—it may just be the most absurd yet most real detail in this era's most unsettling narrative.

And Mythos has not even officially been released.
