In the evolution of AI programming tools, Claude Code was long the undisputed leader that set the industry standard. With deep reasoning, end-to-end autonomous execution, and whole-codebase understanding, it moved AI programming from "code completion tools" into a new era of "autonomous development agents" and built technical and engineering barriers that competitors struggled to cross. A sudden source code leak at the end of March 2026 broke that pattern. Because of a packaging oversight, Anthropic accidentally published about 510,000 lines of core TypeScript source code, along with the complete architectural logic, toolchain design, and unreleased features, through debug source map files (.map). This "blunder leak," which involved neither a hacker attack nor an exploited vulnerability, made Claude Code's technical trump cards public and flattened the core barriers in the AI programming tool sector. All players suddenly found themselves on the same technical starting line, and the industry shifted from "barrier competition" to "security and trust competition." As AI evolves from an assistive tool into an agent that autonomously manages assets and executes transactions, the "architectural security flaws" exposed by the Claude incident have become the opening for OKX Agentic Wallet: only by rebuilding the security paradigm from the ground up can assets be safeguarded in the era of AI autonomy.

1. Claude Code: Once the Absolute Benchmark for AI Programming, Why Did Its Barriers Collapse Overnight?

(1) What is Claude Code: An Industry Revolution from Tool to Agent

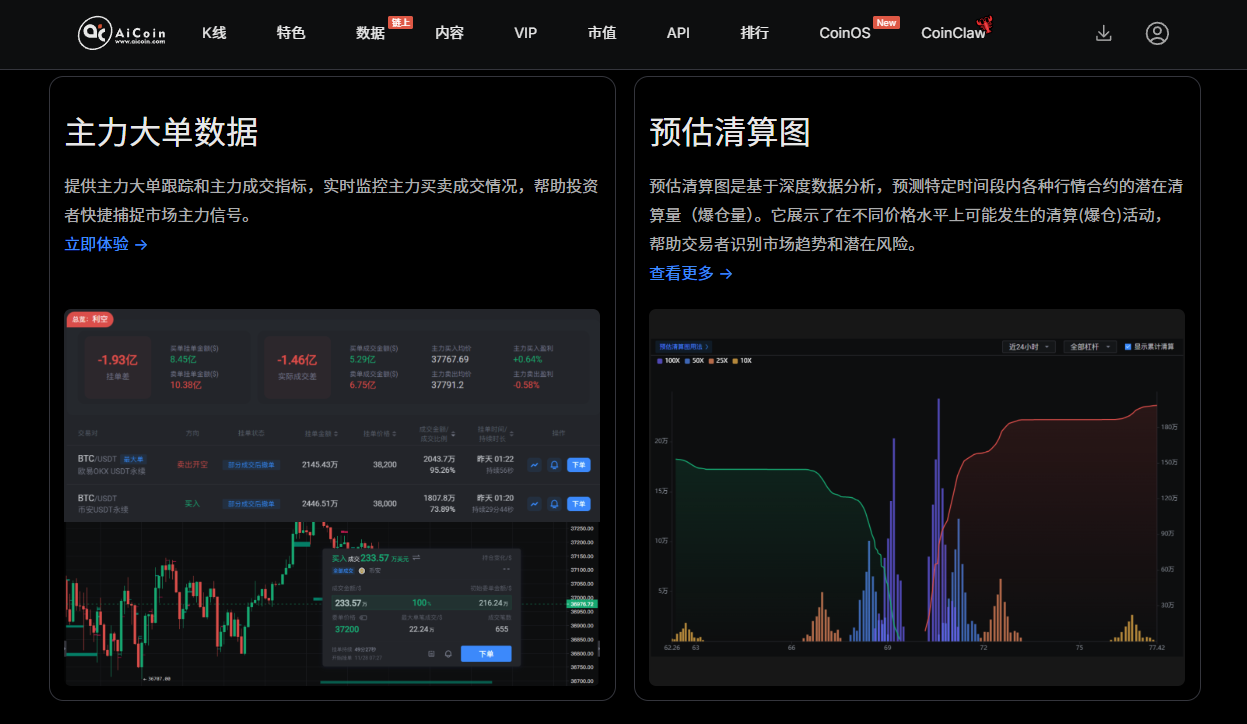

Claude Code is an end-to-end native AI programming agent developed by Anthropic on top of the Claude 3.5 Sonnet/Opus models. It entered public testing at the end of 2024, launched its official v1.0 in March 2025, and iterated to v2.0 in early 2026, quickly topping global rankings of AI programming tools. Unlike traditional tools such as GitHub Copilot and Cursor, it is not a simple code completer but an agent that can understand an entire codebase, break down tasks autonomously, work across multiple files, execute terminal commands, and carry development through to deployment:

- It has a 1-million-token context window, which lets it parse the architecture and dependencies of a large project in one pass and addresses the "context truncation" and "logical gaps" that trouble traditional tools;

- It supports system-level autonomous execution: it can read and write files directly, run tests, manage Git operations, fix complex bugs, and even complete project refactoring and deployment;

- Its code generation accuracy, multi-file refactoring quality, and bug-fixing success rate are all industry-leading (third-party evaluations show a code completion accuracy of 92%, excellent refactoring quality, and a bug-fixing success rate of 88%);

- It covers more than 50 programming languages and integrates deeply with IDEs, the terminal, desktop, and web, making it the preferred tool for professional developers and enterprise R&D teams, with annual revenue exceeding 2.5 billion dollars and a 4% share of public code commits on GitHub.

At that time, Claude Code held a commanding lead built on three core barriers: model capability, engineering architecture, and an end-to-end workflow. The engineering logic behind its agent orchestration, context management, code security verification, and multi-step task validation was among Anthropic's core secrets, forming a "technical moat" that small and medium-sized firms and competing products could not replicate.

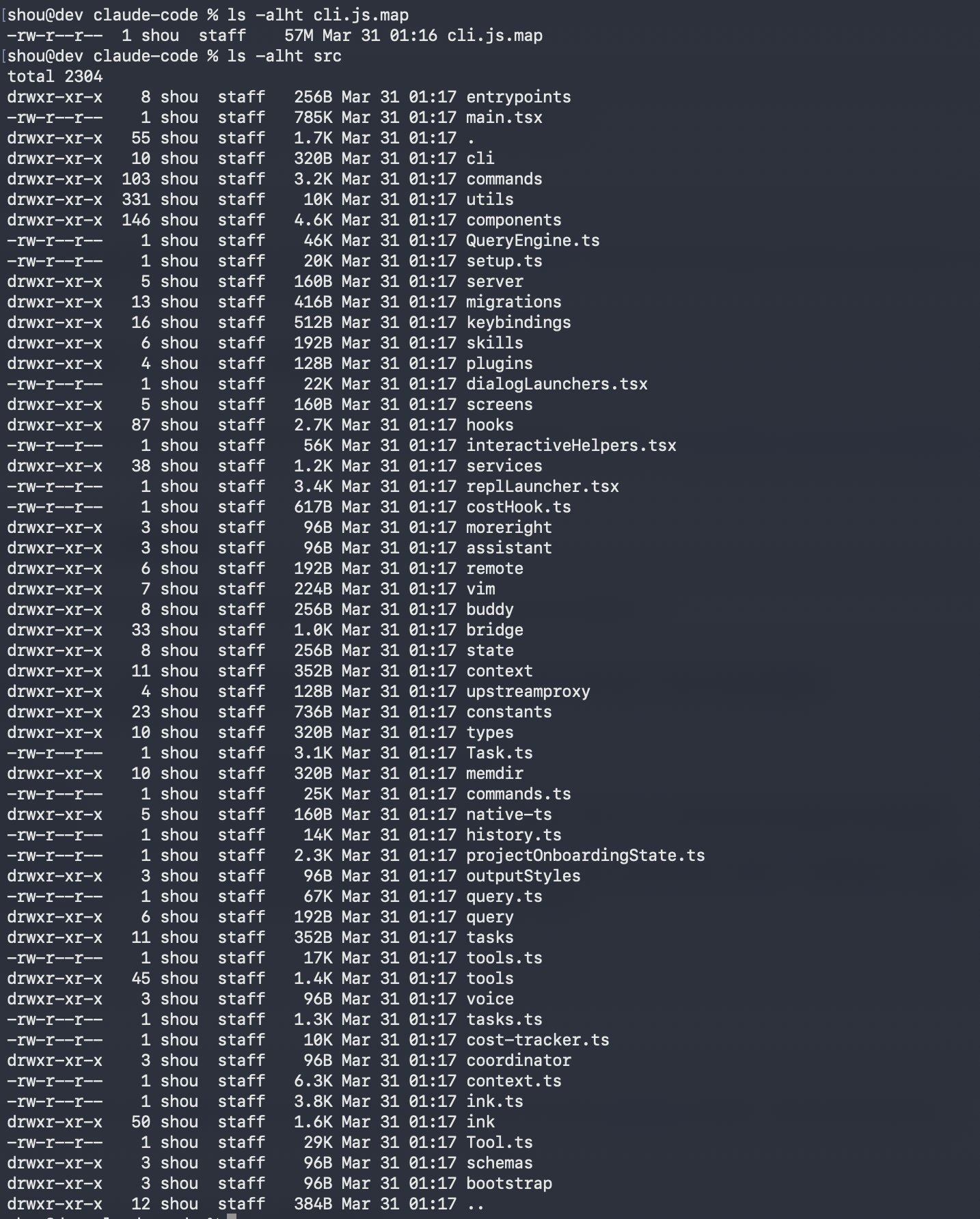

(2) The Essence of the Leak: Barriers Flattened, Industry Returns to the Same Starting Line

The leaked 512,000 lines of code, spread across more than 1,900 files, exposed the engineering details of Claude Code's client architecture, core tool modules, prompt engineering logic, permission calling mechanisms, project parsing pipeline, and unreleased features. More critically, the leaked code was quickly mirrored and rewritten by the community (as Python and Rust ports), producing an "open-source version of Claude Code" that cannot be recalled. This means:

1. The technical barriers have disappeared: any vendor or developer can study, replicate, and modify the core architecture directly, and no longer needs to build an AI programming agent engineering system from scratch;

2. Feature homogenization accelerates: unreleased features such as the "long-term memory assistant," "undercover mode," and "timed trigger agent" were quickly borrowed across the industry, significantly narrowing the functional differences between AI programming tools;

3. The focus of competition has shifted: the industry has moved from "which AI can write better code" to "which AI is safer, more controllable, and more trustworthy." Once technical capabilities converge, security vulnerabilities and architectural defects become the critical shortcomings.

The fall of Claude Code's position is fundamentally a failure of the "function first, safety deferred" model. It built robust AI capabilities on top-tier technology, but basic configuration oversights, failed permission isolation, and a lack of architectural security constraints nullified those advantages. The lesson carries over directly to AI + Web3 and AI asset agents: no matter how powerful the AI, without a foundational security architecture a minor misstep can bring down the whole system.

2. The Claude Source Code Leak: Not a Technical Vulnerability, but a Fundamental Flaw in AI Security Architecture

On the surface, the Claude leak looks like an engineering configuration error: the frontend packaging step did not strip the .map debug source map files, which exposed the complete source code, internal interfaces, permission logic, and environment configuration. Its deeper cause, however, is a set of security design shortcomings common to centralized AI systems:

1. No physical isolation between core assets and business logic: source code, keys, and permission configurations sit at the same access level, with no independent security domain. A single error can trigger a total leak, and nothing is in place to contain it;

2. Security relies on manual control rather than constraints enforced by the architecture: risk mitigation depends on developer self-inspection and procedural norms, ignoring the fact that humans inevitably make mistakes, and no technical safeguard exists that cannot be bypassed;

3. An over-concentrated permission system with no least-privilege constraints: internal accounts and high-privilege interfaces lack tiered isolation, so a leak means a complete loss of control and risk that can be neither contained nor reversed;

4. No post-incident loss mitigation, so a leak spreads permanently: once the code is public, anyone on the internet can copy, analyze, and use it, and with no way to close the loop the risk persists long-term.
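The second shortcoming above, relying on manual checks instead of enforced constraints, is the kind of gap an automated release gate can close. As a minimal sketch, assuming a hypothetical build directory and a helper named `find_source_map_leaks` (neither is taken from Anthropic's actual pipeline), a pre-publish check could refuse to ship any package that still contains `.map` files or `sourceMappingURL` comments:

```python
import tempfile
from pathlib import Path

# Bundlers typically append a comment containing this marker to point at a map file.
SOURCE_MAP_MARKER = "sourceMappingURL="
SCRIPT_SUFFIXES = {".js", ".mjs", ".cjs"}


def find_source_map_leaks(package_dir: Path) -> list[str]:
    """Return relative paths of files that would ship or reference source maps."""
    leaks = []
    for path in sorted(package_dir.rglob("*")):
        if not path.is_file():
            continue
        rel = path.relative_to(package_dir).as_posix()
        if path.suffix == ".map":
            # The map file itself can embed the original sources.
            leaks.append(rel)
        elif path.suffix in SCRIPT_SUFFIXES:
            text = path.read_text(encoding="utf-8", errors="ignore")
            if SOURCE_MAP_MARKER in text:
                leaks.append(rel)
    return leaks


def demo() -> list[str]:
    """Build a throwaway package tree (hypothetical layout) and scan it."""
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        dist = root / "dist"
        dist.mkdir()
        (dist / "cli.js").write_text(
            "console.log('hi');\n//# sourceMappingURL=cli.js.map\n", encoding="utf-8"
        )
        (dist / "cli.js.map").write_text("{}", encoding="utf-8")
        (dist / "clean.js").write_text("console.log('ok');\n", encoding="utf-8")
        return find_source_map_leaks(root)
```

Wired into a publish script, a non-empty result would abort the release, so the protection no longer depends on someone remembering to check.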

In ordinary internet products, this type of risk affects only trade secrets, but in AI + finance and AI + Web3 it translates directly into irreversible asset losses. When AI agents autonomously manage wallets, execute transactions, and sign contracts, any architectural oversight leaves assets exposed.

3. The Rise of the Agentic Economy: The Financial Security Paradigm Shift Brought by AI Autonomy

With the popularization of AI agent technology, the Agentic Economy is forming: AI no longer passively responds to instructions, but autonomously plans, executes, and interacts, deeply participating in asset circulation, transaction decisions, and on-chain operations.

This trend brings two major security transformations:

1. The source of risk shifts: risk moves from "user operational error" to "AI system vulnerabilities, configuration defects, and loss of control over permissions".

2. Risk intensity increases: AI can execute around the clock (24/7) and operate in batches, so once it goes out of control, asset losses occur instantly and leave no window for human intervention.

The Claude incident shows that traditional security approaches no longer hold up. Patches, audits, and manual verification cannot cope with the systemic risks that AI autonomy brings. True security must meet three prerequisites:

- Absolute isolation of core assets, which the AI must never touch;

- Permissions that are minimized, manageable, and revocable;

- An architecture in which no single point of failure can cause a global collapse.

This is precisely the security logic that OKX Agentic Wallet established from the very beginning of its design.

4. OKX Agentic Wallet: Redefining AI Wallet Risk Boundaries Based on Architectural Security

Faced with the security challenges of the Agentic Economy, OKX did not simply layer AI capabilities onto a traditional wallet. It rebuilt the security system from the ground up so that the fatal risks exposed by the Claude leak are eliminated at the architectural level. Its security logic is a generation apart from that of traditional AI products:

(1) TEE Hardware-Level Trusted Execution Environment: Permanently Isolating Private Keys from AI, Eliminating Leakage at the Source

The core tragedy of Claude is that keys and code were stored on the same layer. OKX Agentic Wallet instead uses a Trusted Execution Environment (TEE) for physical isolation:

- Mnemonic phrases and private keys live in a separate hardware-encrypted area that AI agents cannot access, read, or transfer out of;

- Transaction signing is completed in a secure area without exposure to the external environment;

- No matter what errors occur in frontend configurations, AI plugins, or system scripts, core assets remain unaffected.

Isolation is the highest form of security. This design fundamentally avoids the possibility of "single point errors leading to core assets being exposed."
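The interface shape this implies can be sketched in a few lines of Python. This is an illustration under stated assumptions, not OKX's implementation: the closure is only a software stand-in for a TEE (Python provides no hardware isolation, and closure internals can still be introspected), HMAC-SHA256 stands in for a real transaction signature scheme, and the names `make_enclave_signer` and `Agent` are hypothetical. The point is that the agent is handed a signing capability and never the key material:

```python
import hashlib
import hmac
import secrets


def make_enclave_signer():
    """Software stand-in for a TEE: the key exists only inside this closure."""
    key = secrets.token_bytes(32)  # never returned and never stored on an object

    def sign(message: bytes) -> bytes:
        # HMAC-SHA256 is a placeholder for a real signature scheme.
        return hmac.new(key, message, hashlib.sha256).digest()

    def verify(message: bytes, signature: bytes) -> bool:
        return hmac.compare_digest(sign(message), signature)

    return sign, verify


class Agent:
    """Hypothetical AI agent that can request signatures but holds no key."""

    def __init__(self, sign):
        self._sign = sign

    def submit(self, tx: bytes) -> tuple[bytes, bytes]:
        # The agent only ever sees the transaction and the resulting signature.
        return tx, self._sign(tx)


sign, verify = make_enclave_signer()
agent = Agent(sign)
tx, signature = agent.submit(b"transfer:10")
```

Because the agent's state contains only a callable, a bug or leak in the agent layer exposes what it can request, not the secret itself. Real hardware isolation is what makes that boundary enforceable rather than conventional.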

(2) Minimal Permission Architecture: AI Only Has Authorized Capabilities, No Implicit Permissions, No God Mode

In the Claude leak, high-privilege access was fully exposed. OKX instead implements a fine-grained permission control system:

- Users can customize trading limits, contract whitelists, target addresses, and operation types;

- AI agents can operate only within the authorized scope: they cannot exceed their authority or escalate privileges, and there are no hidden backdoors;

- Permissions can be modified, paused, and revoked at any time, achieving lifecycle control.

Security no longer relies on AI not making mistakes but on the architecture not allowing AI to make mistakes.
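A minimal sketch of such a policy check follows. The field names, the `authorize` helper, and the sample contract labels are assumptions made for illustration; the source does not describe OKX's actual data model.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass
class AgentPolicy:
    """User-defined limits for one agent (hypothetical schema)."""
    max_amount: Decimal
    allowed_contracts: frozenset[str]
    allowed_operations: frozenset[str]
    revoked: bool = False  # the user can flip this at any time


@dataclass(frozen=True)
class Action:
    operation: str
    contract: str
    amount: Decimal


def authorize(policy: AgentPolicy, action: Action) -> tuple[bool, str]:
    """Deny by default: every check must pass before the action is allowed."""
    if policy.revoked:
        return False, "policy revoked"
    if action.operation not in policy.allowed_operations:
        return False, "operation not allowed"
    if action.contract not in policy.allowed_contracts:
        return False, "contract not whitelisted"
    if action.amount > policy.max_amount:
        return False, "amount exceeds limit"
    return True, "ok"


policy = AgentPolicy(
    max_amount=Decimal("100"),
    allowed_contracts=frozenset({"dex-router"}),
    allowed_operations=frozenset({"swap"}),
)
small_swap = authorize(policy, Action("swap", "dex-router", Decimal("50")))
large_swap = authorize(policy, Action("swap", "dex-router", Decimal("500")))
```

The check runs outside the agent, so an agent that misbehaves still cannot act beyond the limits the user set, and setting `revoked` cuts off every further action.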

(3) Sandbox Isolation Mechanism: Separating the Agent from the Core Wallet, No Failure Propagation

OKX operates AI agents in a dedicated secure sandbox:

- AI logic, plugins, skills, and core asset layers are completely separated;

- Even if the AI module has vulnerabilities, anomalies, or is externally hijacked, it cannot penetrate the secure domain;

- This avoids the cascading risk in which one crash leads to global failure.

(4) End-to-End Audit and Real-Time Circuit Breaking: Risks Are Traceable, Blockable, and Terminable

Unlike the Claude leak, where nothing could be recalled once the code was public, OKX keeps complete operational logs and risk control capabilities:

- All AI actions are traceable on-chain and auditable end to end;

- Abnormal transactions, phishing authorizations, and risky contracts are intercepted in real time;

- One-click circuit breaking, emergency stops, and authorization revocation are supported, closing the loop on risk.
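The blocking and emergency-stop behavior can be sketched as a small circuit breaker. This is a hypothetical illustration: the in-memory `audit_log` stands in for the on-chain trail, the `is_suspicious` flag stands in for a real risk engine, and the rejection threshold is arbitrary.

```python
from dataclasses import dataclass, field


@dataclass
class CircuitBreaker:
    """Records every attempted action and halts the agent after repeated flags."""
    max_rejections: int = 3
    rejections: int = 0
    tripped: bool = False
    audit_log: list = field(default_factory=list)

    def execute(self, action: str, is_suspicious: bool) -> bool:
        if self.tripped:
            # Once open, the breaker blocks everything, including normal actions.
            self.audit_log.append((action, "blocked: breaker open"))
            return False
        if is_suspicious:
            self.rejections += 1
            self.audit_log.append((action, "rejected: flagged"))
            if self.rejections >= self.max_rejections:
                self.tripped = True
            return False
        self.audit_log.append((action, "executed"))
        return True

    def emergency_stop(self) -> None:
        """Manual one-click stop, independent of the rejection counter."""
        self.tripped = True
        self.audit_log.append(("emergency_stop", "breaker opened by user"))


breaker = CircuitBreaker(max_rejections=2)
results = [
    breaker.execute("swap A", is_suspicious=False),
    breaker.execute("approve unknown spender", is_suspicious=True),
    breaker.execute("approve unknown spender again", is_suspicious=True),
    breaker.execute("swap B", is_suspicious=False),
]
```

In this run the second flagged action opens the breaker, so the final, otherwise normal swap is blocked, and all four attempts appear in the log.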


5. Industry Insights: The Endgame of AI Finance Competition is a Contest of Security Architecture

The Claude source code leak points to a clear conclusion: the intelligence level of AI does not determine financial value; the security boundaries of AI determine financial trust.

Many AI wallets in the industry are still stuck in the "AI + wallet" additive model and fail to address underlying issues such as isolation, permissions, and interruption, essentially replicating Anthropic's security flaws. The core value of OKX Agentic Wallet is not that it makes AI smarter, but that it ensures AI operates intelligently within an absolutely secure framework.

In the era of the Agentic economy, the core logic of financial security has shifted:

- From "avoiding mistakes" to "allowing mistakes, but never allowing fatal consequences";

- From "manual control" to "architectural enforcement";

- From "function first" to "safety foundation first."

This is not just an innovation at the product level, but a paradigm upgrade of Web3 + AI financial infrastructure.

The leak of Claude Code's source code is a wake-up call for the AI era: technical barriers can be flattened and functional advantages can be replicated, but defects in security architecture are fatal wounds that cannot be repaired after the fact. As AI programming tools return to the same starting line because of the leak, and as the Agentic Economy becomes a mainstream trend in Web3, OKX Agentic Wallet offers an answer for AI asset security built on four core capabilities: hardware isolation, minimal permissions, sandbox protection, and real-time interruption. AI can continue to iterate and evolve, but asset security must eliminate risks at the root.

The future competition in AI finance will never be a competition of model capabilities, but a long race of security architecture. Those who can maintain the bottom line of "architecture does not allow mistakes" will truly control the future of Web3 assets in the era of AI autonomy.

This article reflects the author's personal views and does not represent the position of this platform. The opinions, conclusions, and recommendations in the article are for reference only and do not constitute any investment advice related to this platform. The market has risks; investment requires caution.

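Disclaimer: This article represents only the author's personal views and does not represent the position or views of this platform. It is provided for information sharing only and does not constitute investment advice to anyone. Any dispute between users and the author is unrelated to this platform. If an article or image published on this page involves infringement, please email the relevant proof of rights and proof of identity to support@aicoin.com, and the platform's staff will review it.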