Author: Garry's List

Translation: Deep Tide TechFlow

Deep Tide Editor's Note: Anthropic has just released the most comprehensive real-world usage study of AI Agents to date. The core data shows that software engineering accounts for nearly 50% of Agent tool calls, while the rest is spread across 16 other verticals such as healthcare, law, and education, each holding only a small single-digit share.

This is not a sign of market saturation but a map of 300 vertical AI unicorns waiting to be built. Even more valuable is a counterintuitive finding cited in the article: the models can work independently for nearly 5 hours, yet users only let them work for about 42 minutes. This "trust deficit" is itself the next product opportunity.

The full text is as follows:

Software engineering accounts for nearly 50% of all AI Agent tool call activity. The other half is scattered across 16 verticals, none of which exceeds 9%. This suggests that 300 vertical AI unicorns are waiting to be built.

If I were starting a company today, I would stare at the red area of the bar chart above until I found my future in it.

Box founder Aaron Levie stated:

This chart is a good reminder of the immense opportunity in AI Agents right now.

There will certainly be plenty of horizontal Agent opportunities, but many workflows require deep domain expertise to truly help users automate the unique processes of their vertical.

The template: build Agent software that can access proprietary data, handles workflows in a way that lets users and Agents work together effectively, does deep domain-specific context engineering, and drives change management on the customer side.

Right now, huge gaps remain in many fields.

Software engineering accounts for half of all AI Agent activity. The other half is scattered across 16 verticals, none above 9%: healthcare accounts for 1%, law for 0.9%, and education for 1.8%. These are not saturated markets; they are markets that barely exist yet.

Anthropic has just released the most comprehensive real-world usage study of AI Agents to date. Its headline finding is that software engineering accounts for 49.7% of Agent tool calls on its API. The conclusion buried underneath: everything else is blue ocean.

Deployment Lag

One data point should excite founders: the model's capabilities already far exceed what users are willing to trust it with.

METR's capability assessment shows that Claude can tackle tasks that would take a human nearly five hours to complete. In actual use, however, the 99.9th-percentile session lasts only about 42 minutes. This gap between what AI can do and what we allow it to do is a huge opportunity.

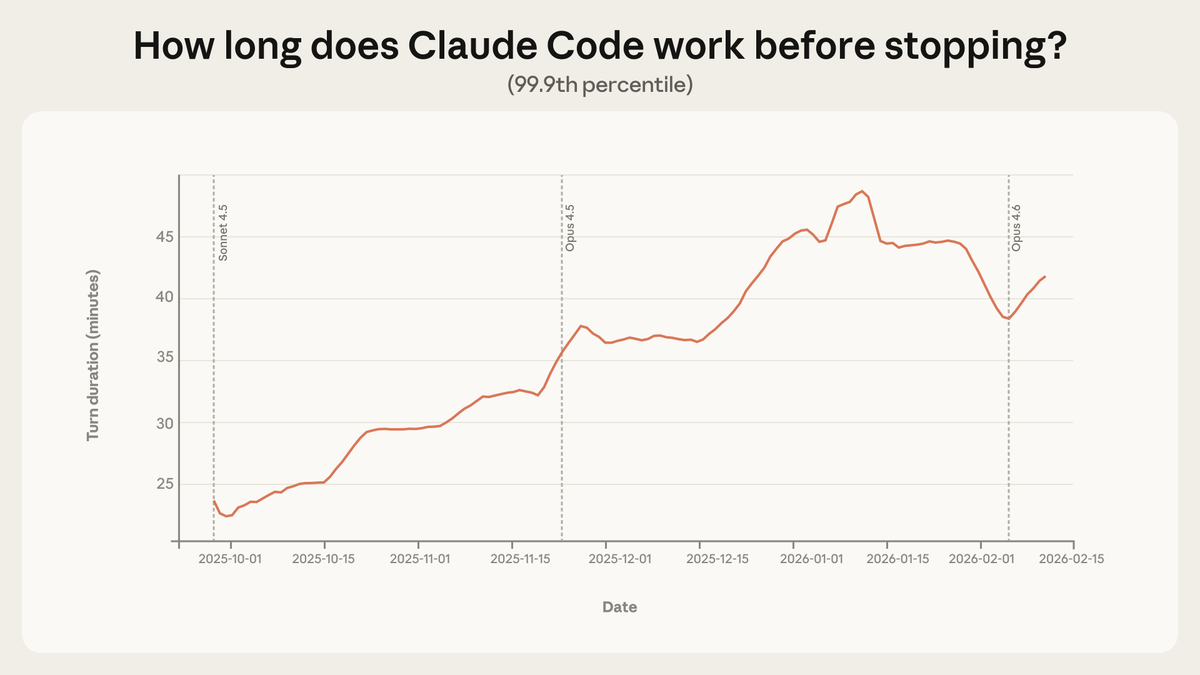

Image: The longest Claude Code sessions nearly doubled in length within three months, reflecting not only growing capability but also growing trust.

Source: x.com

Between October 2025 and January 2026, the 99.9th-percentile session duration nearly doubled, from under 25 minutes to over 45 minutes, with steady growth across model versions. This reflects not just stronger models but also users learning through repeated use and gradually extending their trust in the Agent.
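The study reports the 99.9th percentile rather than the average because a tail percentile tracks the longest-running sessions, which is where growing trust shows up first. Below is a minimal sketch of how such a figure could be computed, assuming the simple nearest-rank percentile method (Anthropic does not say which method it used) and using made-up session durations that are purely illustrative, not Anthropic data. `nearest_rank_percentile` is a hypothetical helper name.

```python
import math
from fractions import Fraction


def nearest_rank_percentile(values, p):
    """Return the p-th percentile (0 < p <= 100) using the nearest-rank method.

    Fraction avoids floating-point rounding when computing the rank,
    so 99.9% of 1,000 items is exactly rank 999.
    """
    if not values:
        raise ValueError("values must be non-empty")
    p_exact = Fraction(str(p))
    if not 0 < p_exact <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(p_exact * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


# Hypothetical session durations in minutes (illustrative only):
# 990 short sessions plus 10 long-running ones.
sessions = [5.0] * 990 + [30.0, 35.0, 40.0, 42.0, 44.0, 46.0, 50.0, 55.0, 60.0, 90.0]

median = nearest_rank_percentile(sessions, 50)
p999 = nearest_rank_percentile(sessions, 99.9)
print(f"median: {median} min, 99.9th percentile: {p999} min")
# prints: median: 5.0 min, 99.9th percentile: 60.0 min
```

In this toy data the median does not move at all when a handful of users start letting the Agent run longer, while the 99.9th percentile responds directly, which is why a tail metric suits the question of how far users are willing to extend trust.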

"From August to December, Claude Code's success rate on challenging internal tasks doubled, while the number of human interventions per session fell from 5.4 to 3.3."

The capability is already there; deployment simply has not caught up. That is not a problem but a product opportunity.

How Trust Evolves

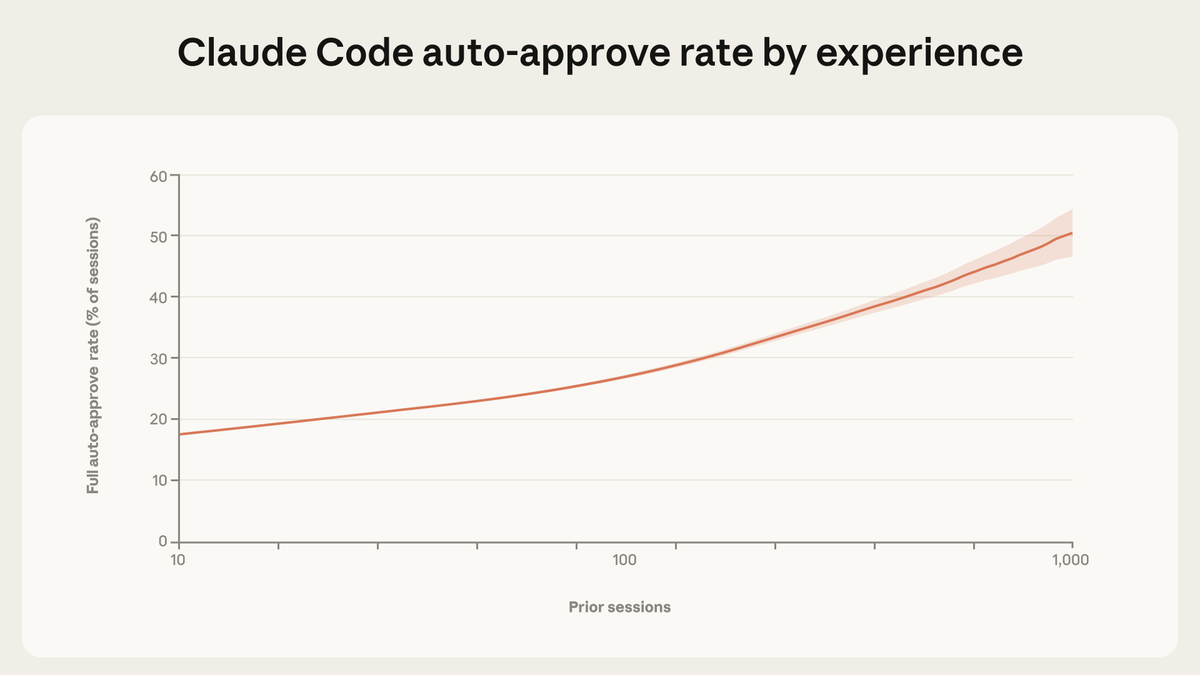

Among new users, about 20% of sessions run with full auto-approval of Claude Code's actions. By the time users reach 750 sessions, over 40% of their sessions run entirely in auto-approval mode. But there is a counterintuitive finding: experienced users intervene more often, not less. New users intervene in 5% of turns, while experienced users do so in 9%.

Image: Trust is a skill built up over time. New users auto-approve 20% of sessions; by 750 sessions, that share exceeds 40%.

Image: Anthropic

Source: x.com

This is not a contradiction but a shift in oversight strategy. Beginners approve each action before it runs, while experienced users grant broad permission and step in only when something goes wrong: they have moved from pre-approval to active monitoring.

A noteworthy safety finding: on complex tasks, Claude Code stops to ask for clarification more than twice as often as humans interrupt it. The Agent pauses for confirmation instead of pushing ahead regardless. This is a feature, not a flaw.

"The core insight from this research is that the autonomy Agents exercise in practice is co-constructed by the model, the user, and the product. Claude limits its own independence by pausing to ask questions when it is uncertain. Users build trust through collaboration with the model and adjust their oversight strategy accordingly."

Levie's Vertical AI Approach

Aaron Levie pointed to the immense value waiting to be unlocked: building Agent software that accesses proprietary data, genuinely solves real people's problems, fills the context with everything relevant to get the most out of the model's intelligence, and (the part most founders overlook) drives change management on the customer side.

This final point is precisely why vertical AI is so difficult to replicate. Anyone can set up an API wrapper, but very few can truly navigate the unique workflows, regulatory constraints, and organizational resistance inherent in medical billing, legal discovery, or building permit approvals.

SaaS has grown tenfold every decade for the past several decades, and over the last 20 years more than 40% of venture capital has flowed into SaaS companies. The industry has produced more than 170 SaaS unicorns. The logic is simple: each of these unicorns has a vertical AI counterpart waiting to emerge, and the AI version could be ten times larger because it replaces not just the software but also the people who operate it.

The Nature of Co-Construction

Anthropic's core findings merit serious attention from anyone involved in AI policy-making. Autonomy is not an inherent attribute of the model but is co-constructed by models, users, and products. Pre-deployment assessments cannot capture this; you must measure it in real use.

Anthropic officially stated:

Software engineering accounts for about 50% of Agent tool calls on our API, but we also see other industries emerging. As the boundaries of risk and autonomy continue to expand, post-deployment monitoring becomes crucial. We encourage other model developers to expand this research.

The safety numbers are reassuring: 73% of tool calls involve a human in the loop, and only 0.8% of actions are irreversible. The highest-risk scenarios, such as API key leaks or autonomous cryptocurrency trading, mostly come from safety evaluations rather than real production deployments.

"Regulatory requirements that specify particular interaction patterns—for example, requiring human approval for every action—only create friction and do not necessarily yield safety benefits."

Mandating approval of every action would kill the productivity gains without making anything safer. A better goal is to ensure that humans are able to monitor and intervene, rather than prescribing specific approval workflows.

Where Unicorns Are Hidden

The map is drawn. Software engineering is already being addressed. Healthcare, law, finance, education, customer service, logistics: 16 verticals, each with a single-digit share, are waiting for someone to genuinely embed domain expertise into Agents.

The last era produced 300 SaaS unicorns; the next 300 vertical AI unicorns are about to emerge. Founders who pick a vertical, embed domain expertise into Agents, and figure out how to drive change management will own the enterprise software market for the next decade.

The models can already work for five hours, yet users only let them work for 42 minutes. That is the signal: we are still at the very beginning, there is a lot left to build, and there are countless places where AI has not yet done even a minute of intelligent work.
