#OpenAI hasn't gone public yet, but a compute partner closely tied to it is about to IPO, which is quite interesting.🧐

Jensen Huang said earlier that inference demand will eventually grow a billionfold. Next Thursday, May 14, Cerebras ($CBRS), the dark horse of the inference era, goes public with a price range of $115 to $125 per share, raising up to $3.5 billion at a valuation of $26.6 billion. It will also be the first @MSX_CN PreIPO project to list, which is quite exciting!

Today let's break down Cerebras: where the company stands, plus a few of my own judgments and views.

To understand this OpenAI-affiliated inference-chip dark horse, you first need to understand Sam Altman's capital strategy.

We all know how dominant Nvidia is in AI chips. Model companies spend the money, cloud vendors buy the cards, startups queue for GPUs, and most of the profit flows to Nvidia, the one selling the shovels. That is the state of the industry today.

Under this near-monopoly, every major model company wants a Plan B it controls more directly. Google, for example, co-develops its TPUs with Broadcom to power Gemini, and OpenAI has long been looking to build up its own in-house allies.

So on May 6, OpenAI brought together Nvidia, AMD, Intel, Broadcom, and Microsoft, companies that are natural competitors, to create a networking protocol called MRC. On the surface it is a technical collaboration; in reality, OpenAI wants to re-slice the pie.

Dig deeper and, in my view, #OpenAI's real aim is to dismantle Nvidia's full-stack monopoly.

Previously, training, inference, networking, and cloud all sat under Nvidia's control. Now OpenAI is unbundling the stack: training and inference are treated separately, different workloads run on different chips, and each layer gets its own suppliers.

This is where #Cerebras enters the picture, and its core job is inference. The timing also coincides with the current buzz around CPUs for inference, a wave that #AMD and #INTC are riding.

🔥What is so impressive about Cerebras?

Cerebras' WSE-3 turns an entire 12-inch wafer into one giant chip. At 46,225 square millimeters (about 21.5 cm on a side), it covers roughly three-quarters of an A4 sheet of paper.

Let's compare the data with Nvidia's H100:

• Its area is 57 times that of H100

• The core count is 52 times

• On-chip memory is 880 times

• Memory bandwidth is 7,000 times

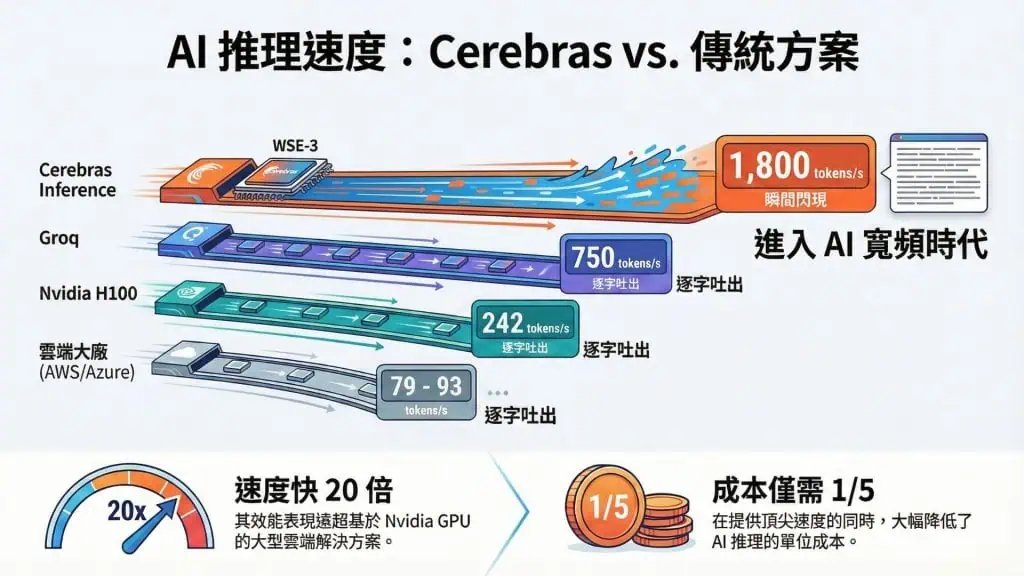
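The area comparison is easy to sanity-check. The sketch below assumes publicly reported dimensions that are not stated in the post itself: a 215 mm x 215 mm WSE-3 die, an H100 die of about 814 mm², and the standard 210 mm x 297 mm A4 sheet. It only covers area; the core, memory, and bandwidth multiples are vendor figures it does not attempt to reproduce.

```python
# Sanity check of the WSE-3 size comparisons.
# Assumed inputs (publicly reported, not taken from this post):
#   WSE-3: 215 mm x 215 mm square; H100 die: ~814 mm^2; A4: 210 mm x 297 mm.
WSE3_AREA_MM2 = 215 * 215   # 46,225 mm^2
H100_DIE_MM2 = 814          # approximate H100 die size
A4_AREA_MM2 = 210 * 297     # 62,370 mm^2


def area_ratios() -> dict:
    """Return the WSE-3 area as a multiple of the H100 die and of an A4 sheet."""
    return {
        "vs_h100": WSE3_AREA_MM2 / H100_DIE_MM2,
        "vs_a4": WSE3_AREA_MM2 / A4_AREA_MM2,
    }


ratios = area_ratios()
print(f"WSE-3 area: {WSE3_AREA_MM2:,} mm^2")
print(f"Multiple of H100 die: {ratios['vs_h100']:.1f}x")
print(f"Share of an A4 sheet: {ratios['vs_a4']:.0%}")
```

This prints about 56.8x for the H100 (the quoted 57x) and about 74% of an A4 sheet, i.e. closer to three-quarters of a page than one-third.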

These numbers 📊 look almost absurd, but the key point is not size; it is speed.

In inference workloads, especially long-form output, real-time interaction, code generation, and latency-sensitive AI agent tasks, Cerebras says its CS-3 system runs inference 21 times faster than Nvidia's DGX B200 at roughly one-third the cost and energy. That efficiency lets OpenAI serve more customers per unit of time, and the savings flow almost straight to margin.

📊Financial data is also impressive

First, the market trend: the AI industry is clearly shifting from training-centric to inference-centric. The global AI inference market is estimated at $106.2 billion in 2025 and projected to reach $255 billion by 2030, and Cerebras' technical strengths line up squarely with this trend.
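To put those two forecast figures on an annual basis, the compound annual growth rate (CAGR) they imply can be backed out directly. This is a minimal sketch using only the numbers quoted above; `implied_cagr` is a hypothetical helper name, not anything from the forecast's source.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Annual growth rate that compounds start_value into end_value over `years`."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Market-size forecasts quoted above, in billions of USD.
SIZE_2025 = 106.2
SIZE_2030 = 255.0

rate = implied_cagr(SIZE_2025, SIZE_2030, 2030 - 2025)
print(f"Implied CAGR, 2025-2030: {rate:.1%}")
```

The result is roughly 19% a year, which gives a concrete sense of what "shifting to inference" means in market-size terms.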

Second, this IPO values Cerebras at $26.6 billion, at $115 to $125 per share, which I think is relatively cheap. Yes, that is more than double (about 2.2x) the previous round's valuation of roughly $12 billion, reached in just two years, but the financials back it up.

For 2025, Cerebras' revenue is put at $510 million, up 76% from $290 million in 2024. Even more remarkable, it swung to a net profit of $87.9 million after a net loss of $485 million in 2024.
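The growth and profitability claims can be re-derived from the figures in that paragraph. The net margin below is an extra ratio computed here for context; the post itself does not quote it.

```python
# Figures quoted above, in millions of USD.
REVENUE = {2024: 290.0, 2025: 510.0}
NET_INCOME = {2024: -485.0, 2025: 87.9}

yoy_growth = REVENUE[2025] / REVENUE[2024] - 1          # revenue growth rate
net_margin_2025 = NET_INCOME[2025] / REVENUE[2025]      # profit per dollar of sales
profit_swing = NET_INCOME[2025] - NET_INCOME[2024]      # change in net income

print(f"Revenue growth 2024->2025: {yoy_growth:.1%}")
print(f"2025 net margin: {net_margin_2025:.1%}")
print(f"Year-over-year swing in net income: ${profit_swing:,.1f}M")
```

Revenue growth comes out at about 75.9% (the quoted 76%), the 2025 net margin at about 17.2%, and the swing in net income at about $572.9 million.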

At a $26.6 billion valuation, the price-to-sales (PS) ratio is about 52x. For comparison, the popular semiconductor interconnect company Astera Labs (#ALAB), which went public in 2024, traded at 81x sales on its first day. With so much speculation around the inference theme right now, I personally think Cerebras could reach a PS of 80 to 100x, which, scaling from the $125 top of the range, implies a first-day close of roughly $192 to $239, an upside of more than 50%! (That said, how the Nasdaq trades that day will also matter.)
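The mapping from a target PS multiple to a share price can be sketched as follows. This assumes (the post does not say so explicitly) that the $26.6 billion valuation corresponds to the $125 top of the range and that the share count stays fixed, so the price scales linearly with market cap. `implied_price` is a hypothetical helper for illustration only.

```python
# Assumption: the $26.6B valuation is struck at the $125 top of the range and
# the share count is fixed, so share price scales linearly with market cap.
VALUATION_B = 26.6      # IPO valuation, billions of USD
REVENUE_2025_B = 0.51   # 2025 revenue, billions of USD
IPO_PRICE = 125.0       # top of the $115-125 range, USD per share

IPO_PS = VALUATION_B / REVENUE_2025_B  # price-to-sales at the IPO price


def implied_price(target_ps: float) -> float:
    """Share price at which the stock would trade at target_ps times 2025 sales."""
    return IPO_PRICE * target_ps / IPO_PS


print(f"P/S at IPO: {IPO_PS:.1f}x")
for target in (80, 100):
    price = implied_price(target)
    upside = price / IPO_PRICE - 1
    print(f"P/S {target}x -> ${price:.0f} per share ({upside:+.0%} vs IPO price)")
```

This gives about $192 at 80x and about $240 at 100x, matching the post's $192 to $239 range up to rounding. If the deal instead prices lower in the range, the same target multiples imply the same per-share prices but a larger percentage gain.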

That said, the downside should not be ignored: Cerebras' customer concentration is high. MBZUAI in the UAE contributes 62% of revenue and G42 contributes 24%, so the top two customers account for 86%. That leaves Cerebras beholden to a few large customers and limits its autonomy. Fortunately, the OpenAI deal should rebalance the revenue mix over time, with OpenAI set to become the largest customer.

🎯Deep Binding Between OpenAI and Cerebras

According to the latest reports, OpenAI has signed a multi-year agreement with Cerebras worth more than $20 billion, under which Cerebras will provide 750 megawatts of computing capacity, to be deployed by 2028.

But this is more than a simple procurement contract. OpenAI co-founder and CEO Sam Altman, President Greg Brockman, former Chief Scientist Ilya Sutskever, and board member Adam D'Angelo have all invested in Cerebras personally.

OpenAI has also tied its long-term interests to Cerebras through loans, stock warrants, and other financial instruments. In other words, Cerebras today is effectively OpenAI's chip division.

In addition, Cerebras signed a deal with AWS in March to offer the CS-3 system on Amazon's cloud, making it one of the first third-party, non-GPU AI accelerators offered by a mainstream cloud vendor. Customers such as GlaxoSmithKline, the U.S. Department of Energy, and several national laboratories further validate its technology from multiple angles.

💡OpenAI's Capital Strategy

OpenAI's true intentions are clear:

• Continue to use Nvidia's high-end GPUs for training

• Introduce Cerebras' low-latency solutions for inference

• Source part of its GPU supply from AMD

• Push open networking protocols

• Spread cloud services across AWS, Azure, and Google Cloud

• Potentially develop its own in-house chips in the future

This is a heterogeneous compute strategy: match each workload to the system that suits it, instead of relying solely on Nvidia's full-stack solution.

OpenAI is transitioning from a model company to a compute-architecture company. It used to passively accept whatever technical path chip vendors defined; now it is proactively designing the compute mix that fits its own needs.

OpenAI aims to demote chip suppliers from "platform providers" to "module suppliers." Backing Cerebras is the most crucial piece of that strategy, which is why I expect Cerebras' stock to jump sharply on its first day!

⚡Impact on Nvidia

In the short term, Cerebras' IPO will barely dent Nvidia; it is about as significant as a pimple on the skin.

Nvidia currently holds 80-90% of the AI chip market, and its moats (the CUDA ecosystem, the GPU supply chain, and NVLink networking) will be hard to shake in the short term.

However, in the long term, the threat exists. Previously, AI companies had no choice but to use Nvidia GPUs. Now, at least in inference scenarios, customers have viable alternative solutions. The emergence of this choice weakens Nvidia's pricing power.

Once OpenAI can say, "For inference I use Cerebras; for training I use Nvidia," Nvidia loses the leverage that comes with being the one-stop provider.

The AI inference market is growing fast. One forecast puts its global compound annual growth rate at 28.9% from 2026 to 2032. Inference workloads are better suited to dedicated chips, so once the inference market outgrows the training market, Nvidia's relative weakness in inference will become a bigger problem.
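As a rough illustration of what a 28.9% compound rate means, the sketch below computes how many times larger the market would be after compounding from 2026 to 2032. Note that this forecast and the $106.2 billion to $255 billion figures cited earlier appear to come from different sources and cover different periods, so the two are not directly comparable. `growth_multiple` is a hypothetical helper name.

```python
def growth_multiple(cagr: float, years: int) -> float:
    """How many times larger a market gets after compounding at `cagr` for `years`."""
    return (1 + cagr) ** years


multiple = growth_multiple(0.289, 2032 - 2026)
print(f"28.9% CAGR over 2026-2032 -> {multiple:.2f}x")
```

Six years at 28.9% works out to about 4.59x, so the forecast implies the inference market more than quadruples over the period.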

Nvidia is transitioning from being the "only supplier" to one of the "core suppliers." This shift is not because Nvidia has weakened, but because the market has expanded, customers have become stronger, and demands have become more complex.

🧐My Judgment

What is truly noteworthy about Cerebras' IPO is not simply that another AI chip company is listing, but that OpenAI is starting to price inference as a standalone business.

Once inference is proven to be independently priceable, the AI compute market will truly begin to stratify. The different demands of training and inference will come into focus, and dedicated chips will prove their advantages in specific scenarios.

Nvidia's narrative of "one chip rules them all" will no longer fully hold. The market will move from "general GPU monopoly" to "scenario-specific chip combinations."

Moreover, it is not only OpenAI doing this; Anthropic is also forming alliances with Amazon and Google. Leading AI companies are diversifying their procurement to reduce reliance on Nvidia. The "complete solution" from a single supplier is no longer the optimal choice.

Finally, the first #MSX PreIPO project about to go public is Cerebras, which debuts on Thursday, May 14. Stay tuned!🧐

DYOR🙏

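Disclaimer: This article represents only the author's personal views and does not represent the position or views of this platform. It is for information sharing only and does not constitute investment advice to anyone. Any dispute between users and the author is unrelated to this platform. If any article or image published on this page involves infringement, please send proof of the relevant rights and proof of identity by email to support@aicoin.com, and the platform's staff will review it.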