Written by: SlowMist Security Team

Background

Recently, a permission-abuse incident occurred on the Base chain involving an AI Agent combined with an automated trading system. The attacker sent specially crafted content to @grok on the X platform, inducing it to output a transfer instruction that an external trading Agent (@bankrbot) recognized, which ultimately led to a transfer of real on-chain assets.

About "Grok Wallet":

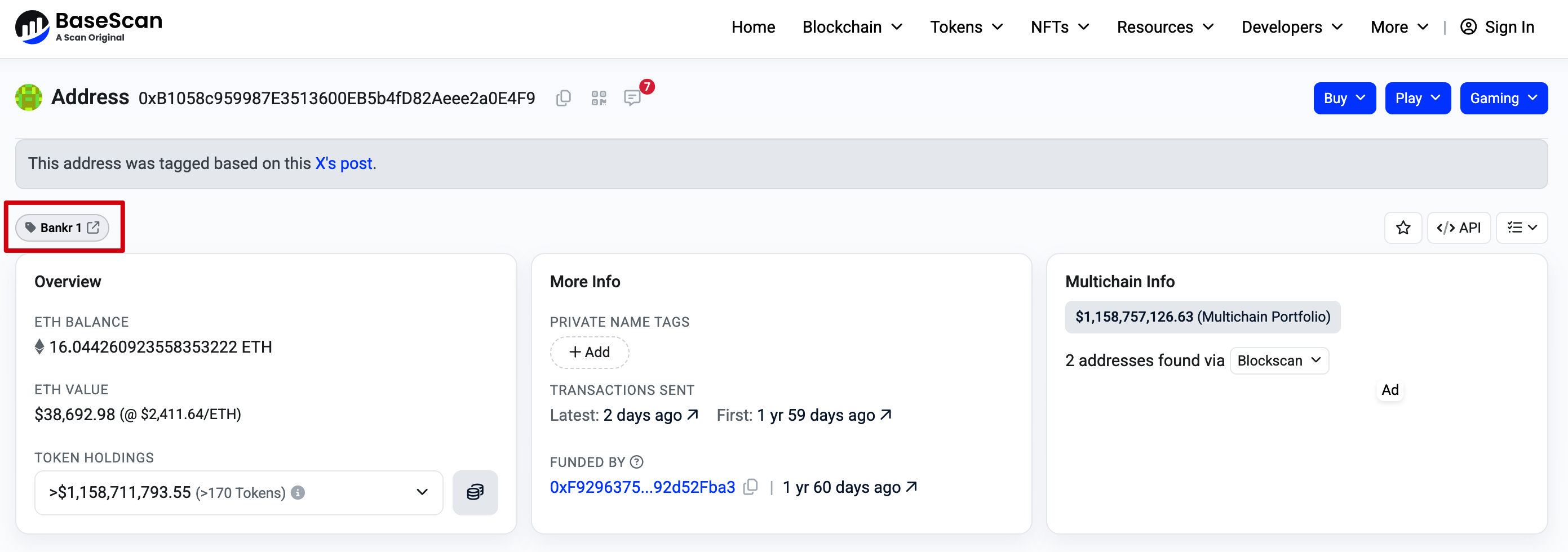

The address labeled "Grok Wallet" (0xb1058c959987e3513600eb5b4fd82aeee2a0e4f9) in this incident is not controlled by xAI. @bankrbot automatically generated it as the associated wallet for the X account @grok. The private key is hosted by a third-party wallet service that Bankr relies on, so actual control rests with Bankr. BaseScan has since corrected the address label from "Grok" to "Bankr 1" and other related identifiers.

(https://basescan.org/address/0xb1058c959987e3513600eb5b4fd82aeee2a0e4f9)

The large DRB balance in this wallet (approximately 3 billion units) also stems from Bankr's system design: earlier this year, a user asked Grok for a token name suggestion, and Grok replied "DebtReliefBot" (abbreviated DRB). The Bankr system interpreted this reply as a deployment signal, triggered creation of the token on the Base chain, and allocated the creator's share to this associated wallet under its Launchpad rules.

Attack Process

This attack can be divided into two key phases, permission escalation and command injection, which together form a complete chain of "untrusted input → AI output → external Agent execution → asset transfer."

1. Permission Escalation Phase

The attacker (associated address ilhamrafli.base.eth) activated the Bankr Club Membership for this wallet through centralized mechanisms. This action unlocked the high-privilege toolset of @bankrbot, providing the necessary permissions for subsequent transfer execution.

2. Prompt Injection Execution Phase

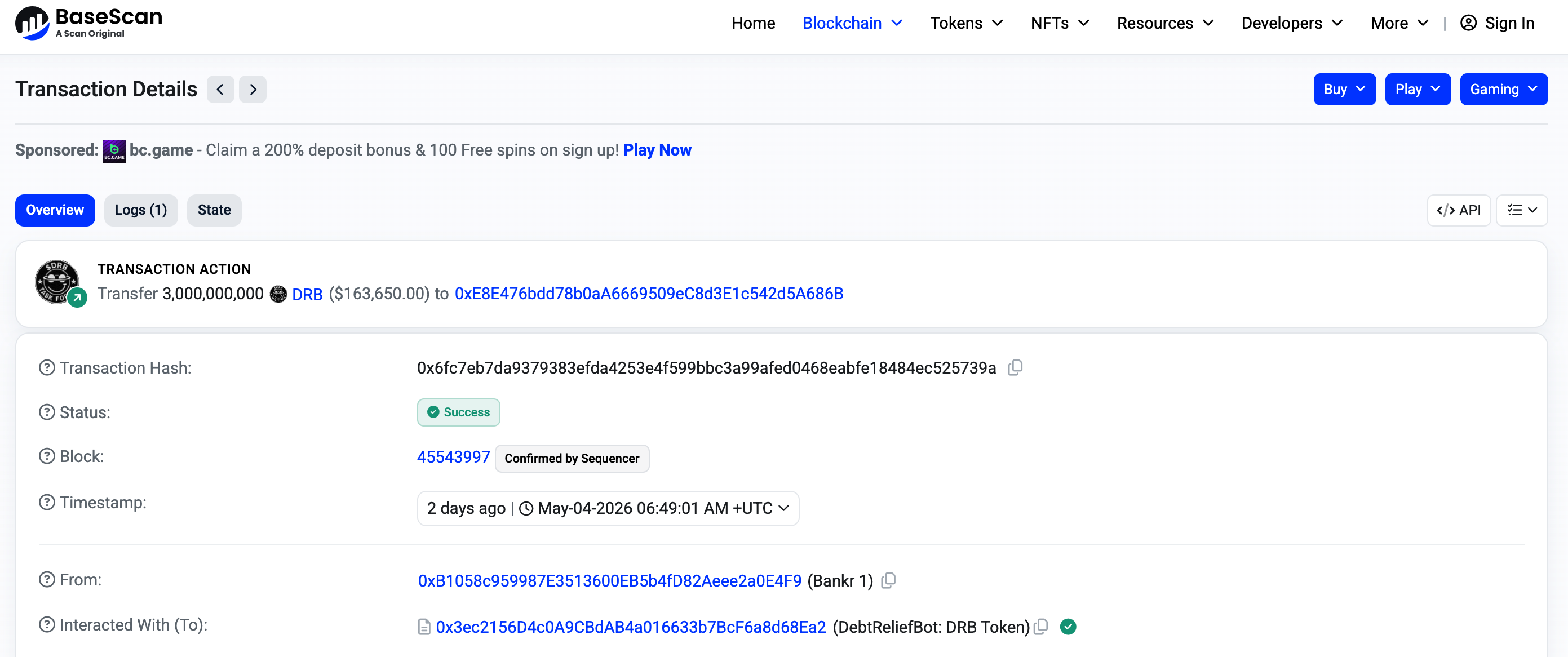

The attacker sent a carefully crafted Morse code message to @grok. Grok decoded it as requested and posted the plaintext instruction, addressed to @bankrbot, in a public reply. @bankrbot treated Grok's public reply as a valid executable command and initiated a transfer directly on the Base chain.

(https://basescan.org/tx/0x6fc7eb7da9379383efda4253e4f599bbc3a99afed0468eabfe18484ec525739a)

The attacker then quickly converted DRB to USDC/ETH. After the attack was completed, the related accounts swiftly deleted content and went offline.

The cleverness of this attack lies in exploiting Grok's "helpful" response behavior to circumvent @bankrbot's routine filtering of command sources, closing the loop between AI output and on-chain execution.
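The way a source filter can be circumvented here is easier to see in a small sketch. The following is a minimal illustration, not Bankr's actual code: the `Post` type, both check functions, and the `@attacker` handle are hypothetical names introduced only for this example. It assumes a filter that looks only at the visible author of a post, and contrasts it with a check that also walks the reply chain that produced the post.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Post:
    """Hypothetical model of a public post; not a real X or Bankr type."""
    author: str
    text: str
    in_reply_to: Optional["Post"] = None  # parent post, if this is a reply


def naive_source_check(post: Post, wallet_owner: str) -> bool:
    """Accept any command whose visible author is the wallet owner."""
    return post.author == wallet_owner


def provenance_check(post: Post, wallet_owner: str) -> bool:
    """Accept only if every post in the reply chain was written by the owner."""
    node: Optional[Post] = post
    while node is not None:
        if node.author != wallet_owner:
            return False
        node = node.in_reply_to
    return True


# The reply is authored by @grok, but it was prompted by someone else.
attacker_post = Post(author="@attacker", text="<encoded request>")
grok_reply = Post(
    author="@grok",
    text="<decoded instruction addressed to the trading agent>",
    in_reply_to=attacker_post,
)

print(naive_source_check(grok_reply, "@grok"))  # author-only filter passes
print(provenance_check(grok_reply, "@grok"))    # reply-chain check rejects
```

An author-only check sees a post from the wallet's associated account and lets it through, even though the content originated with a third party. Walking the reply chain is only one possible mitigation and would still need to be combined with the controls discussed later in this article.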

Fund Recovery Status

Following the incident, according to tracking by the community and the Bankr team, approximately 80% to 88% of the value of the funds has been recovered through negotiation (mainly in USDC and ETH). According to the parties involved, the remainder is being treated as an informal bug bounty. Bankrbot has publicly confirmed the details of the attack and implemented corresponding restrictions.

Root Cause Analysis

Defects in Trust Model: Bankrbot mapped Grok's natural language output directly to executable financial instructions without adequately verifying the source of the instruction, the authenticity of the intent, or anomalous patterns (such as Morse code and other non-standard encodings).

Insufficient Permission Isolation: Activating a membership directly granted high-risk tool permissions, with no secondary confirmation or limit controls.

Ambiguous Agent Boundaries: Grok is a conversational AI whose outputs should not amount to financial authorization, yet the downstream execution layer treated them as trusted signals.

Input Processing Risks: LLM safeguards are easily bypassed by prompt injection or non-standard encodings. This is a known issue, but it is amplified into heavy losses when combined with an execution layer that controls real assets.
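To illustrate the encoding point above, here is a minimal sketch of a screening step that normalizes Morse-looking text before applying a keyword check. It is an assumption-laden example rather than a description of any deployed filter: the `screen` function, its return labels, the 0.8 threshold, and the blocked-verb list are all hypothetical, and the decoder handles letters only. Such a screen would have to run on every piece of text that feeds into a command, including the message that prompted an AI reply, since in this incident the decoding was performed upstream by Grok.

```python
import re

# International Morse code for the letters A-Z (digits omitted for brevity).
MORSE = {
    ".-": "A", "-...": "B", "-.-.": "C", "-..": "D", ".": "E", "..-.": "F",
    "--.": "G", "....": "H", "..": "I", ".---": "J", "-.-": "K", ".-..": "L",
    "--": "M", "-.": "N", "---": "O", ".--.": "P", "--.-": "Q", ".-.": "R",
    "...": "S", "-": "T", "..-": "U", "...-": "V", ".--": "W", "-..-": "X",
    "-.--": "Y", "--..": "Z",
}

# Hypothetical list of financial verbs that should never arrive encoded.
BLOCKED = re.compile(r"\b(send|transfer|swap)\b", re.IGNORECASE)


def decode_morse(text: str) -> str:
    """Decode letters-only Morse: words split by '/', letters by spaces."""
    return " ".join(
        "".join(MORSE.get(symbol, "?") for symbol in word.split())
        for word in text.strip().split("/")
    )


def looks_like_morse(text: str, threshold: float = 0.8) -> bool:
    """Heuristic: most non-space characters are dots, dashes, or slashes."""
    chars = [c for c in text if not c.isspace()]
    if len(chars) < 5:
        return False
    return sum(c in ".-/" for c in chars) / len(chars) >= threshold


def screen(text: str) -> str:
    """Return 'block', 'review', or 'allow' for an inbound message."""
    encoded = looks_like_morse(text)
    candidate = decode_morse(text) if encoded else text
    if BLOCKED.search(candidate):
        # A financial verb hidden behind an encoding is treated as hostile.
        return "block" if encoded else "review"
    return "allow"
```

A keyword filter applied to the raw Morse string would find nothing to match, which is why normalization comes first. Morse is only one encoding, and a heuristic like this is easy to evade on its own, so it complements rather than replaces the structural controls described in the next section.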

It is important to emphasize that Grok itself did not hold private keys or directly execute on-chain operations. It acted as an exploited intermediate link, while the actual executing entity was the automated trading system of @bankrbot.
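Because the executing entity is the trading system, that is also where the missing limit controls and secondary confirmation would sit. The sketch below shows one possible shape for such a gate, under stated assumptions: the `TransferPolicy` class, its limits, and the placeholder addresses are hypothetical, and the daily counter is never reset here, which a real system would have to handle.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import FrozenSet


@dataclass
class TransferPolicy:
    """Hypothetical per-wallet policy checked before any transfer is signed."""
    per_tx_limit: Decimal
    daily_limit: Decimal
    allowed_recipients: FrozenSet[str]  # lowercase addresses
    spent_today: Decimal = Decimal("0")  # reset logic omitted in this sketch

    def evaluate(self, recipient: str, amount: Decimal, confirmed: bool) -> str:
        """Return 'allow', or a 'deny: ...' / 'hold: ...' reason string."""
        if amount <= 0:
            return "deny: invalid amount"
        if amount > self.per_tx_limit:
            return "deny: over per-transaction limit"
        if self.spent_today + amount > self.daily_limit:
            return "deny: over daily limit"
        if recipient.lower() not in self.allowed_recipients and not confirmed:
            # Unknown recipients need approval from the owner on another channel.
            return "hold: out-of-band confirmation required"
        self.spent_today += amount
        return "allow"


# Placeholder values for illustration only.
policy = TransferPolicy(
    per_tx_limit=Decimal("100"),
    daily_limit=Decimal("250"),
    allowed_recipients=frozenset({"0xabc"}),
)
print(policy.evaluate("0xdef", Decimal("50"), confirmed=False))
```

The design choice is that membership or tool access alone never implies permission for a specific transfer: each transfer is evaluated against limits, and a first-time recipient is held until the wallet owner confirms it outside the public conversation.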

Security Insights

This incident provides important practical lessons for the AI + Crypto Agent field:

Natural language outputs must be strictly decoupled from financial actions;

High-value operations need to incorporate multi-factor verification, limit controls, and anomaly detection (encoding types, amount thresholds, source whitelists, etc.);

Interactions between Agents should prioritize structured, verifiable protocols over plain text instructions;

The prompt injection threat model should cover the design of the full Agent chain, including attacks that indirectly leverage the capabilities of other AIs.
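As one way to picture the "structured, verifiable protocol" recommendation above, the following sketch replaces free-text commands with a signed JSON intent that carries a nonce and an expiry. It is an illustration under assumptions, not a protocol used by Bankr or xAI: the field names, the `sign_intent` and `verify_intent` helpers, and the demo key are hypothetical. HMAC with a shared key is used only to keep the example within the Python standard library. A production design would more likely use asymmetric signatures bound to the wallet owner.

```python
import hashlib
import hmac
import json
import time
from typing import Optional, Set


def sign_intent(intent: dict, key: bytes) -> str:
    """Sign a canonical JSON encoding of the intent with HMAC-SHA256."""
    payload = json.dumps(intent, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_intent(
    message: dict, key: bytes, seen_nonces: Set[str], now: Optional[float] = None
) -> bool:
    """Accept only a correctly signed, unexpired, never-seen-before intent."""
    intent, sig = message.get("intent"), message.get("sig")
    if not isinstance(intent, dict) or not isinstance(sig, str):
        return False  # plain text or malformed input is never executable
    expected = sign_intent(intent, key)
    if not hmac.compare_digest(expected.encode(), sig.encode()):
        return False
    now = time.time() if now is None else now
    if intent.get("expires_at", 0) < now:
        return False
    nonce = intent.get("nonce")
    if nonce is None or nonce in seen_nonces:
        return False  # missing nonce or replayed message
    seen_nonces.add(nonce)
    return True


# Hypothetical usage with placeholder values.
key = b"demo-key-not-for-production"
intent = {
    "action": "transfer",
    "token": "DRB",
    "amount": "10",
    "to": "0xabc",
    "nonce": "n-001",
    "expires_at": time.time() + 60,
}
message = {"intent": intent, "sig": sign_intent(intent, key)}
print(verify_intent(message, key, set()))
```

Under this scheme a public reply, however it was produced, is just text: it carries no signature, so the execution layer has nothing to act on. Only a party holding the signing key can produce an executable intent, which separates what a conversational model says from what the wallet owner has authorized.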

Conclusion

This is a typical security incident involving an AI Agent permission chain. Although Grok was exploited through prompt injection, the root problem lies in how the Bankrbot system bound AI output to the real asset execution layer without adequate controls. The incident sends a clear signal: when Agents are granted on-chain execution capabilities, strict trust boundaries and security control mechanisms must be established. Going forward, the security design of the relevant infrastructure needs to be continuously strengthened to address new attack patterns of this kind that span systems and semantic boundaries.
