Written by: 0xFrancis

In the past two years, I have been interacting with AI agents daily. What keeps me awake at night is not how smart they are, but whose mind they are in.

Descartes said "I think, therefore I am," and this statement has survived for four hundred years, but today it suddenly has a bug.

You open ChatGPT, input a question, it thinks for a moment, and gives you an answer. During this process, who is thinking?

You might say: of course I am the one thinking, AI is just a tool. But look closely at what you actually did. You opened its interface, followed its rules, and asked in a way it can understand. It stores the results on its server and presents them to you in its format, and if you want to continue the thought next time, you have to go back to it. Want to use another tool? Sorry, you can't take any of it with you.

You say you are "thinking with AI," but to be precise, you are "going to AI to think."

The two statements differ only slightly, and that small difference decides whether you are the master or the guest.

Thinking has a location

Heidegger described a concept called "ready-to-hand": a tool that works well is transparent. When you use a hammer to drive a nail, you are not aware of the hammer; you are only aware of the nail going into the wood. The tool disappears, leaving only you and your task.

Today's AI products are exactly the opposite. You must log in to them, enter their space, operate their way, and do your thinking on their territory. The tool has not disappeared; it has become a place you must go to.

When you look at the stars through a telescope, the telescope extends your eyes; you are still you. When you go to an observatory to see the stars, you must buy a ticket, queue up, and keep to opening hours. What you can see depends on what the observatory allows you to see.

The entire AI industry is turning what should be a telescope into an observatory.

And when your thinking must occur on someone else's territory, a deeper question arises: what happens to the things you left behind if that place suddenly shuts down?

Memory is your organ

Locke understood over three hundred years ago that a person is defined by the continuity of memory. You remember the self of yesterday, of last year, of ten years ago, and so you are you. The body may change and the cells may be replaced, but the river of memory flows on, and you remain you.

Now think of one thing.

You have been conversing with AI for two years. Two years of work decisions, thought processes, value judgments, knowledge gaps, and 3 AM anxieties are all in there. Together they form a mirror of you that is more complete and more retrievable than you are yourself: a digital cognitive archive that records how you think about problems and is more reliable than your own memory.

And then the account gets suspended.

Locke would say this is not just a loss of data; it is the death of a digital life. Your cognitive continuity has been severed, and the fragments of memory that constitute "who you are" sit on a server you cannot access, their fate decided by a company whose customer service you cannot even reach.

Do you think this is just a user experience issue? No, this is about existence. When your memory is outsourced to a third party, your identity no longer wholly belongs to you.

Memory is an organ, not luggage; luggage can be replaced, but if an organ is removed, that part of you is lost.

The most exquisite form of alienation

If Locke helps us see what you have lost, Marx helps us understand how it is taken away.

More than one hundred and fifty years ago, Marx described a structure in which the fruits of labor do not belong to the worker but instead become a force that controls the worker. You build the factory, and the factory in turn controls you. He called this alienation.

In the age of AI, a new form of alienation has emerged, more exquisite than anything Marx had seen.

Every time you ask a question, provide feedback, or choose and correct the output, you are training the model, improving it, making it stronger. Your thinking habits, ways of expression, professional knowledge, and aesthetic preferences are extracted, aggregated, and distilled into the model's parameters.

Then this capability is packaged into a subscription service costing around $100 per month, roughly the tier of Claude Max or ChatGPT Pro, and sold back to you.

You have fed a system with your cognitive labor, and then you must pay to rent back the ability to think from this system. What does this structure resemble?

Some say this is a fair trade; you use the service, you pay for it. However, the premise of a fair trade is that both parties know what they are giving up. You know you paid $100, but you don’t know you also paid with your thought patterns, decision-making trajectories, and knowledge structures. These things are not priced, but they are worth a hundred times more than $100.

The "body" and the "use" are reversed

Subjectivity has been transferred, memory has been outsourced, labor has been extracted. When viewed together, these three issues are fundamentally the same problem.

Chinese philosophy has a framework that captures this more intuitively than any Western vocabulary does.

The relationship between "body" (ti) and "use" (yong).

"Body" is the fundamental thing; "use" is the means. A knife is a use, and cutting vegetables is the body. A vehicle is a use, and reaching the destination is the body. "Use" serves "body," and "body" determines "use."

What is an LLM? It is a use, a capability, a tool.

What are your data, your memory, your identity, your intentions? They are the body, the purpose itself.

But the entire industry is built the other way around. The LLM brand has turned into the user's identity tag: "I am a ChatGPT user," "I am a Claude user." The LLM's memory system has become the user's memory, and the LLM's ecosystem has become the user's ecosystem.

"Use" has reversed its role, turning tools into spaces, identities, and platforms you have to rely on; the "body" has been marginalized.

Every time there has been a reversal of body and use in history, the same thing has happened: people become means, and tools become purposes.

We are walking into this script.

You have seen this script

Maybe you think philosophy is too distant, so let me recount something you have definitely experienced.

You wrote on Weibo for five years and built up tens of thousands of followers, and then your account was banned. The content is gone, the relationships are gone, and you start again from zero. You ran a shop on an e-commerce platform for three years and built up tens of thousands of reviews, customer relationships, and operational data. Then the platform changed the rules, and you realized that everything you had grown was planted on someone else's land.

Back then, people rallied around the slogan "data sovereignty": your data should belong to you. The slogan has been shouted for ten years and was never realized in the Web2 world, because the business model of every platform rests on the same premise: your stuff stays here, and the cost of leaving is so high that you dare not leave.

The AI industry has brought this script back to life, and this time it goes even further.

Where your data is stored and who holds the power over its fate are exactly as they were ten years ago: not in your hands.

Companies have noticed. In early 2026, multiple corporate surveys, from EY, Netskope, and others, listed data sovereignty as the biggest challenge for AI. The percentage of IT leaders acknowledging this rose from 49% last year to 72%. They are not discussing a theoretical issue; they are looking at their balance sheets.

The situation is becoming more urgent

In the past, when you only chatted with AI or had it write copy, losing that history was not painful.

Now that AI agents have arrived, they handle your schedules, do analyses, communicate with clients, and remember that detail from a meeting three months ago that you didn’t finish discussing. They accumulate work memories, business knowledge, and decision context. An agent that has been running for six months may have more knowledge stored than a new assistant can learn in three months.

Where is this brain located?

In November 2025, the open-source agent framework OpenClaw was launched and became one of the fastest-growing projects in GitHub history within 60 days. Three months later, its author was poached by OpenAI to oversee the next generation of personal agents. Both sides of this event are worth watching.

OpenClaw initially adopted a local-first approach, with agents running on your own machine and memories stored on your own hard drive. In theory, sovereignty was in your hands, which is the right direction. However, a string of security reports soon emerged: data theft and prompt injection were found in community-shared skill packs, raising the question of whether the official repository's audit mechanisms had kept pace with its growth. To preserve the agent's data sovereignty, you expose everything else on your local hard drive to unknown code. You have sovereignty, but security is gone.

You may say: then I'll get a clean machine just to run it. That is possible, but you have to handle maintenance, deal with faults, and switch models yourself. These chores are more than enough to wear you down; the average person cannot afford to manage them.

The other route is the hosted version, where your agent runs on the platform's servers. Everything it remembers about you can be seen and taken by the other party. You gain a sense of safety, but sovereignty is lost.

The local route is unsafe, the hosted route means the memory is not yours, and the real issue behind both options remains unsolved by anyone.

If your agent resides on the platform's servers, it is like an employee living in the company dormitory. The platform claims you violated a term of service you have not read; your agent gets kicked out without even having time to pack its things. All knowledge it gathered, workflows established, and contexts remembered are completely reset.

Today, their homes have been demolished

Do you think this is hypothetical?

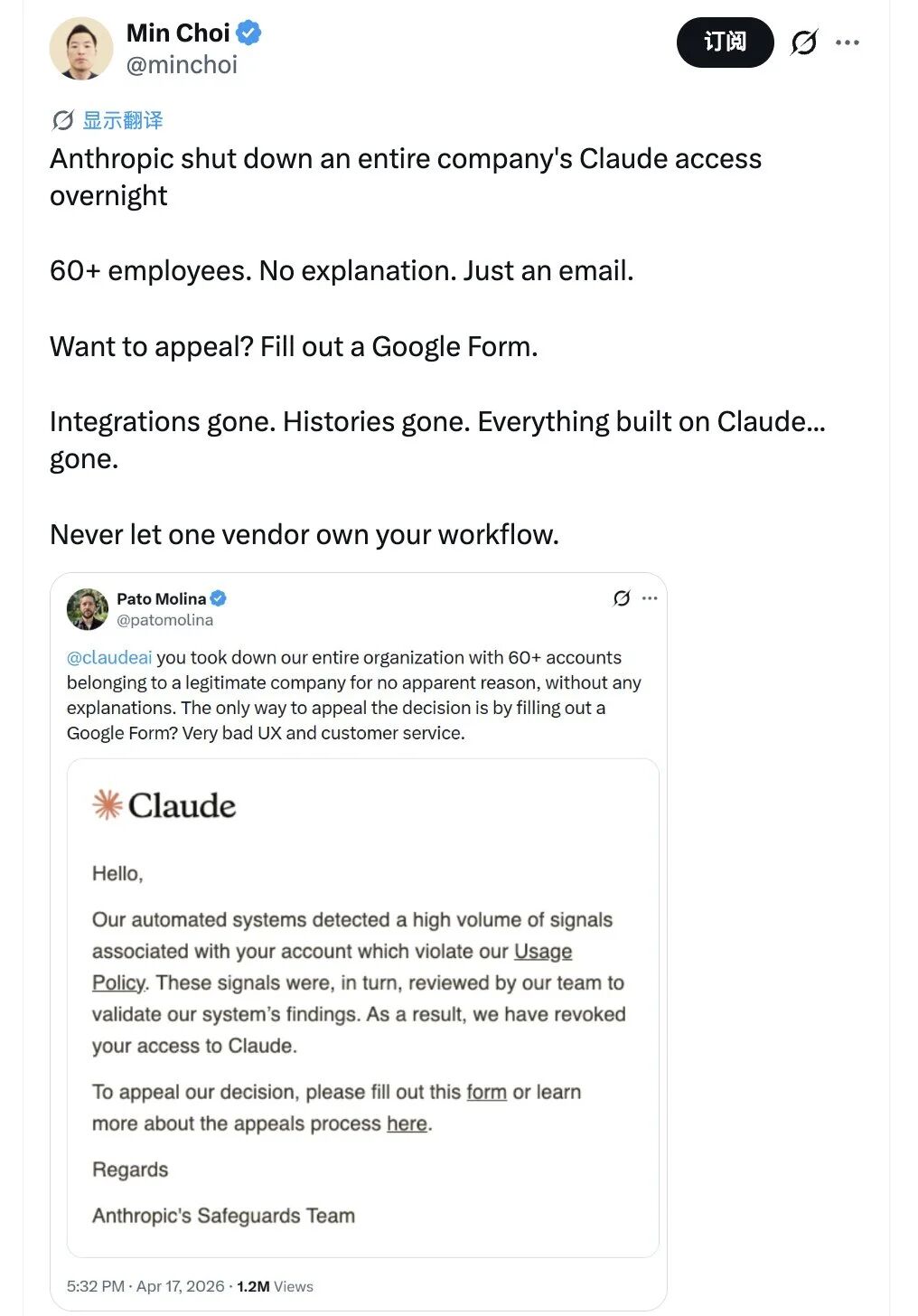

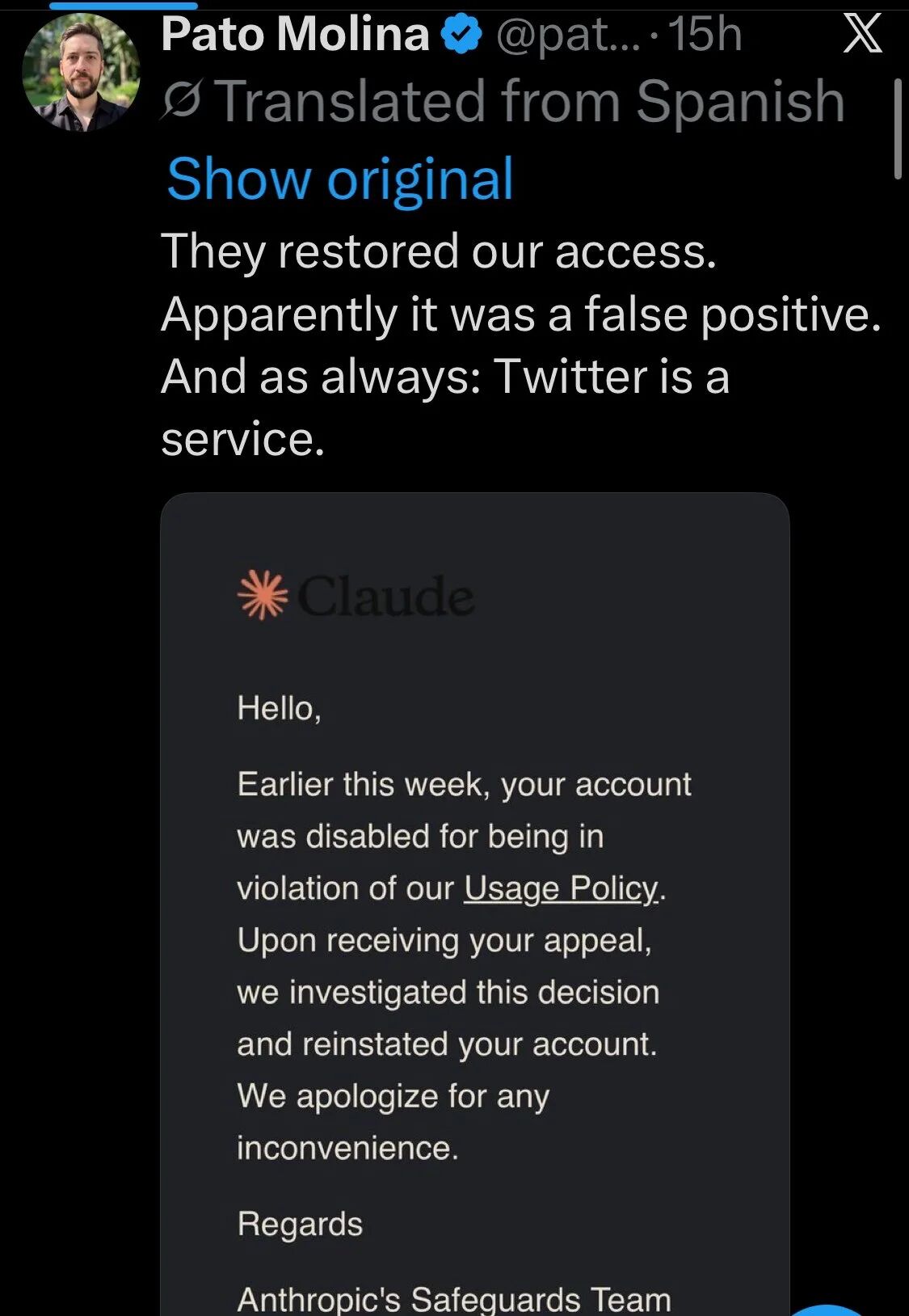

On April 19, 2026, Anthropic cut access to Claude for over 60 employees of a software company overnight, citing a violation of the terms of service without specifying which one. Want to appeal? Fill out a Google form. Will anyone read it? Who knows. Now the workflows are gone, the skills are gone, the conversation history is gone, and everything built on Claude is gone.

The owner of this company wrote a sentence on Twitter that rose to the top of the discussions in the AI circle:

Never let a vendor own your workflow.

This is the real-life version of the dormitory employee, and it does not even need any drama: a Google form is all it takes.

You think you are building a digital team, but in reality, you are building on someone else's foundation, and the foundation belongs to them. The higher you build, the harder you fall.

It should be flipped

Descartes said, "I think, therefore I am." Locke said, in effect, "I remember, therefore I am me." Marx warned, in effect, "Be careful that your labor is not taken from you." Three people separated by hundreds of years speak about the same thing: who you are depends on what you possess, and what you possess depends on what is in your own hands.

At this point, the correct direction can actually be summarized in one sentence.

Your data should stay with you, and the LLM should come to you, not the other way around.

Your memory, your knowledge, your work context should exist in a space controlled by you, invisible to anyone else, not accessible to anyone else. When you need the capability of AI, you simply call in a model to process it. Once processed, the results are written back to your space, and the model exits. You use Claude today, switch to GPT tomorrow, and run an open-source model the day after. When the model changes, everything about you remains.
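The flow described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a real product: `LocalSpace`, `run_task`, and the two stub models are hypothetical names, the store is a single unencrypted JSON file, and the stubs stand in for whatever real provider you would wrap behind the same function shape.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable

# Assumption: a "model" is any function from prompt text to reply text.
# A real provider would be wrapped behind this same shape.
Model = Callable[[str], str]


class LocalSpace:
    """Hypothetical user-controlled store: one JSON file on your own disk."""

    def __init__(self, path: Path) -> None:
        self.path = path

    def load(self) -> list[dict]:
        if not self.path.exists():
            return []
        return json.loads(self.path.read_text(encoding="utf-8"))

    def append(self, entry: dict) -> None:
        entries = self.load()
        entries.append(entry)
        self.path.write_text(json.dumps(entries, indent=2), encoding="utf-8")


def run_task(space: LocalSpace, model: Model, model_name: str, task: str) -> str:
    """Call a model in, hand it context from the local space, write the result back."""
    context = "\n".join(entry["result"] for entry in space.load())
    prompt = f"Context:\n{context}\n\nTask: {task}"
    result = model(prompt)  # the model only sees what we pass in, then exits
    space.append({"task": task, "model": model_name, "result": result})
    return result


# Stand-in models (assumptions for illustration only, no network calls).
def stub_model_a(prompt: str) -> str:
    return "A: " + prompt.rsplit("Task: ", 1)[-1]


def stub_model_b(prompt: str) -> str:
    return "B: context included" if "A:" in prompt else "B: no context"


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        space = LocalSpace(Path(tmp) / "memory.json")
        print(run_task(space, stub_model_a, "model-a", "draft plan"))
        # Switch models: the accumulated context stays in the local file.
        print(run_task(space, stub_model_b, "model-b", "review plan"))
```

The point of the design is that the only persistent state lives in `LocalSpace`; swapping `stub_model_a` for `stub_model_b` changes the capability but leaves the accumulated memory untouched.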

It is like the appliances in your home. When you change electricity companies, the food in the fridge does not disappear and the photos on the wall do not fall down, because the house is yours and electricity is just a service. But today the entire AI industry has you living in a dormitory provided by the power company. Using electricity is very convenient, but your phone, your TV, your fridge, everything belongs to the power company. Absurd, right? Indeed.

This path is not without technical precedent. One local-first note-taking product has reached 5 million deep users in three years by positioning itself as "refusing to be a cloud tenant," and 40% of them migrated from centralized tools. A few open-source agent harnesses publicly recommended by LangChain's founder are moving toward "decoupling capabilities and data." Some teams are building encrypted data spaces, while others are developing multi-signature collaboration protocols between AI and humans, under which the AI must first obtain your authorization before it can access your data assets. This direction is still early, but its outline is becoming clear.

What can be done today

First, ask yourself a question: if this platform disappears tomorrow, what will I have left of everything I have accumulated in the AI app?

If the answer makes you uncomfortable, that is the right reaction; discomfort is the starting point for change.

Demand that the AI product you are using provides a complete data export: your conversation history, your preference settings, your knowledge base, your agent configurations—all the data assets you have invested time and thought into should be portable at any time. If that is not possible, you’ll understand what its business model is really built upon.
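One way to make that demand concrete is to check an export against the list above. The sketch below is only an illustration: the category names and the assumed layout (one non-empty file or folder per category inside the export directory) are hypothetical, since real products structure their exports differently, if they offer one at all.

```python
from pathlib import Path

# Hypothetical category names, mirroring the list in the text.
REQUIRED = ["conversations", "preferences", "knowledge_base", "agent_configs"]


def audit_export(export_dir: Path) -> dict[str, bool]:
    """Report, per category, whether the export contains any data for it.

    Assumption: a category counts as present if the export holds either a
    non-empty folder named after it or a non-empty file such as
    `conversations.json`.
    """
    report = {}
    for name in REQUIRED:
        folder = export_dir / name
        has_folder = folder.is_dir() and any(folder.iterdir())
        has_file = any(
            p.is_file() and p.stat().st_size > 0
            for p in export_dir.glob(f"{name}.*")
        )
        report[name] = has_folder or has_file
    return report


def missing_categories(export_dir: Path) -> list[str]:
    """List the categories the export failed to include."""
    return [name for name, ok in audit_export(export_dir).items() if not ok]
```

Anything returned by `missing_categories` is data you have invested in but cannot currently take with you.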

Follow those who are working on "decoupling the data layer from the capability layer." Whoever is doing this is on your side.

Then spread this question, so that more people can see the whole picture of this issue.

The right relationship with AI is for it to work for you. You pay the salary, you give the tasks, it finishes the work and leaves. Those who perform well stay; those who do not get replaced. Your office, your file cabinet, all your accumulations, should always be in your own hands.

The current situation is just the opposite: you work for it, you surrender your memory and your thinking, and it decides when you have to leave.

This issue should be flipped.

The source of the story about Anthropic cutting off access for a company’s Claude.

It garnered widespread attention on the day of publication.
