In 2016, Sam Altman built a bunker underground in Wyoming. 1,200 square meters, three stories, 500 kilograms of gold, 5,000 iodine tablets, 5 tons of freeze-dried food, 100,000 rounds of ammunition. That year, OpenAI had just celebrated its first anniversary.

Ten years later, the leader of the world's most powerful AI company was attacked twice in a single weekend, first with a Molotov cocktail, then with gunfire. He wrote on his blog that he had severely underestimated "the power of narrative." Was he talking about others' narratives or his own?

48 Hours, Two Attacks

At 3:40 AM on April 10, on Chestnut Street in San Francisco, 20-year-old Daniel Moreno-Gama threw a Molotov cocktail against the metal gate of Sam Altman's apartment. A fire ignited near the outer door, and he fled immediately. About an hour later, the same man appeared near OpenAI's San Francisco office, again threatened arson, and was arrested. The charges included attempted murder and arson.

Sam Altman's San Francisco residence and the surveillance footage of the arson suspect

Two days later, at 1:40 AM on April 12, a Honda sedan stopped next to Altman's other residence in Russian Hill. A passenger extended their hand out of the window and fired a shot at the residence. Surveillance footage recorded the license plate, and police later arrested two individuals: Amanda Tom (25) and Muhamad Tarik Hussein (23). Three guns were found during a search of the residence, and the two faced charges of negligent discharge of a firearm.

One weekend, two attacks.

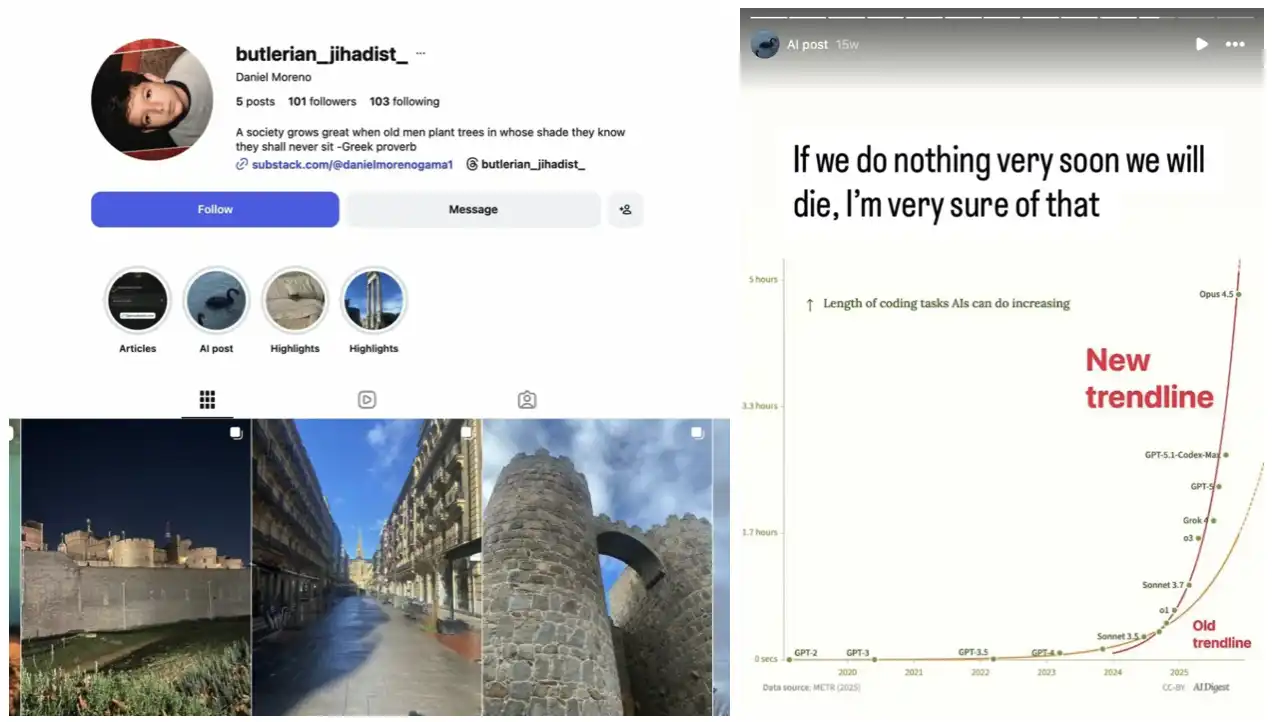

The suspect in the first case, Daniel Moreno-Gama, is an AI pessimist. He quoted the theme of humans against machines from "Dune" on social media, wrote articles arguing that AI alignment failures pose existential risks, and criticized tech leaders for betting the fate of all humanity in the pursuit of "transhumanism."

What was his argument?

Over the past five years, one of OpenAI's standard moves in constructing the AI narrative has been to repeatedly emphasize how real the "existential" threat of AGI is. The aim was to make governments take regulation seriously, to help investors grasp the size of the stakes, and to convince the entire industry that this was a race no one could afford to miss. The narrative did its job: it let OpenAI present itself at once as the company at the cutting edge of the most dangerous technology, as the most responsible actor working on it, and therefore as the one most deserving of funding.

However, the statement "this is the most dangerous technology in human history" does not stay within tech and investment circles. It trickles down, and for some individuals it becomes a literal call to action. Moreno-Gama wrote in an Instagram post, "Exponential progress combined with alignment failure equals existential risk." This line of argument originates in the mainstream literature on AI safety, much of which has been funded or endorsed by OpenAI.

Daniel Moreno-Gama's social media account

After the first attack, Altman wrote a blog post. He posted a picture with a child, expressing his hope that this photo could stop the next person from throwing a Molotov cocktail at his home. He acknowledged the "legitimate moral positions" of his opponents and called for public discourse to be "less explosive both literally and metaphorically."

He also responded to an in-depth report in The New Yorker. That article had been published days before the attacks and openly questioned his credibility as the person holding the most power over AI. He wrote, "I severely underestimated the power of public opinion narratives and words."

Two days later, his residence was shot at again.

Security Budget as a Statement, Bunker as Another

This trajectory began about a year earlier than most people realize.

On December 4, 2024, in New York, UnitedHealthcare CEO Brian Thompson was shot outside a Hilton hotel. The suspect, Luigi Mangione, an Ivy League graduate, left a handwritten statement criticizing the healthcare industry. The case triggered an unusual reaction on social media: many ordinary users openly expressed sympathy for the shooter and even idolized him as a symbol of resistance.

At that moment, some doors were opened.

After the Thompson case, executive security shifted from being a "benefit" to a "survival necessity." According to research data cited by Fortune magazine, the proportion of attacks targeting executives of large enterprises personally has increased by 225% since 2023. Among S&P 500 companies, 33.8% reported executive security expenditures in their 2025 financial statements, compared with 23.3% in 2020. The median security expenditure rose 20% to $130,000, having doubled over the past five years.

The AI industry is the latest and most obvious recipient of this trend. The ten largest tech CEOs collectively spent over $45 million on security in 2024. Zuckerberg alone spent over $27 million, more than the combined expenses of the CEOs of Apple, Google, and three others. Jensen Huang of NVIDIA is set to spend $3.5 million in 2025, an increase of 59%. Sundar Pichai of Google is at $8.27 million, with a 22% increase.

The AI industry possesses something that some other industries do not: even the builders themselves believe that this technology could destroy civilization. A 2025 survey by the Pew Research Center of 28,333 respondents worldwide found that only 16% were excited about AI development, while 34% expressed concern. A more counterintuitive finding is that the higher the level of education and income, the stronger the worries about losing control of AI. The most informed are the most afraid.

Recently, Indianapolis city councilor Ron Gibson's home was shot at 13 times by a gunman late at night, waking his 8-year-old son. A handwritten note was left at the door that read, "No data centers permitted." The FBI has intervened in the investigation. Jordyn Abrams, a researcher at George Washington University's Extremism Research Project, pointed out that data centers are becoming targets for anti-tech and anti-government extremists.

The scene of Ron Gibson's shooting

This fear is no secret within the industry; it just isn't spoken loudly.

Altman built that bunker in Wyoming in 2016. That year, OpenAI had just declared its establishment and was painting a picture of how AI would benefit humanity worldwide. Both things were concurrently true: he was publicly proclaiming that AI is humanity's greatest opportunity, while privately stockpiling enough ammunition to support an armed militia.

This is a rational dual bet: publicly betting that AI will succeed, while privately preparing for the possibility that AI will go out of control.

Altman's Boomerang

On February 27 of this year, OpenAI signed a contract with the U.S. Department of Defense, allowing the Pentagon to deploy ChatGPT on classified defense networks for "any lawful purpose." On the same day, Altman publicly expressed agreement with Anthropic's position on limiting military applications of AI. In the backlash that followed, ChatGPT uninstalls surged by 295% in one day, and one-star reviews increased by 775% within 24 hours. The QuitGPT boycott movement reportedly accumulated over 1.5 million participants.

On March 21, about 200 protesters marched in San Francisco past the offices of Anthropic, OpenAI, and xAI, demanding that the three CEOs commit to pausing advanced AI development. At the same time, London saw its largest anti-AI march to date.

Altman's bunker in Wyoming and the security he employs address two different risks: one from other people and one from what he himself is building. He takes both risks seriously in private, while acknowledging only one in public.

The same week as the first attack, The New Yorker published an in-depth report on Altman. Reporters Ronan Farrow and Andrew Marantz interviewed over 100 insiders, and the core argument came down to a single word: untrustworthy. The report quoted a former OpenAI board member as saying that Altman is "antisocial" and "not bound by the truth." Several former colleagues described him as repeatedly shifting his stance on AI safety and redefining power structures when necessary.

In his blog post response, Altman admitted to a tendency toward "conflict avoidance." He had constructed the public narrative that "AI is an existential threat" as a tool for fundraising and regulatory leverage. In the end, the tool flew out of his hands, circled around, and smashed back into his own door.
