On March 23, 2026, Liu Liehong, director of the National Data Bureau, gave the first systematic disclosure of Token usage in China's AI industry at the China Development Forum: average daily usage grew more than a thousandfold in about two years, from about 100 billion to about 140 trillion. Set against a rough world population of 7.8 billion, that works out to about 18,000 Tokens per person per day, which turns abstract talk of computing power and model interaction into a figure anyone can grasp. On the surface, this is merely a technical measure of large-model inference and dialogue requests. In substance, Tokens are rapidly becoming the value anchor of the intelligent era, yet they have not been integrated into existing commercial settlement and financial systems. As call volumes outrun human intuition, the figures expose the gap between top-level institutional design and frontline industrial practice: how long can the old account books hold up once everything starts being priced in Tokens?

Two Years of Thousandfold Surge: The Sense of Scale of 140 Trillion

At the China Development Forum on March 23, 2026, Liu Liehong, director of the National Data Bureau, used an occasion that combines policy signaling with industry communication to announce the headline figure of “1,000 times growth in Tokens over two years”: from about 100 billion Tokens per day in early 2024 to about 140 trillion per day in March 2026 (a ratio of roughly 1,400 on these rounded figures). The leap turns the vague claim that “large models are developing quickly” into a hard number. The macro slogan of “accelerating the formation of new productive forces” is compressed into a single exponential curve that bears directly on the cost models and business logic of industry participants.

Going from 100 billion to 140 trillion is not just a jump in magnitude; it changes the texture of everyday life. Measured against a global population of about 7.8 billion, it amounts to about 18,000 Tokens per person per day. From personalized recommendations in short-video feeds, to ranking optimization behind search results, to automatically generated reports and risk alerts in corporate internal-control systems, a vast volume of model inference, dialogue, and generation requests flows silently in the background, forming an almost continuous "digital breath." Compared with traditional database queries, bandwidth usage, or API calls, this Token-based interaction density far exceeds earlier expectations of how often information systems would be called.
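The back-of-envelope arithmetic behind these figures can be checked directly. The sketch below uses only the rounded numbers quoted in the article; the 26-month span between "early 2024" and March 2026 is an assumption made here for illustration, not an official figure.

```python
# Figures quoted in the article (all are rounded, approximate values).
DAILY_TOKENS_EARLY_2024 = 100e9    # about 100 billion Tokens per day
DAILY_TOKENS_MARCH_2026 = 140e12   # about 140 trillion Tokens per day
WORLD_POPULATION = 7.8e9           # the article's rough population estimate

# Assumption: "early 2024" to March 2026 is treated as roughly 26 months.
MONTHS_ELAPSED = 26

growth_factor = DAILY_TOKENS_MARCH_2026 / DAILY_TOKENS_EARLY_2024
tokens_per_person = DAILY_TOKENS_MARCH_2026 / WORLD_POPULATION
monthly_growth = growth_factor ** (1 / MONTHS_ELAPSED) - 1

print(f"growth factor: {growth_factor:,.0f}x")
print(f"tokens per person per day: {tokens_per_person:,.0f}")
print(f"implied compound monthly growth: {monthly_growth:.1%}")
```

On these inputs the growth factor is 1,400 and the per-capita figure is just under 18,000 Tokens per day. The implied monthly rate is sensitive to the assumed number of months and should be read as an order-of-magnitude indication only.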

Note that this figure currently comes from a single official source, and its statistical scope, industry composition, and scenario distribution have not been publicly broken down. Even with those boundaries unclear, the direction is unmistakable: large AI models have shifted from “specific scenario tools” to “default infrastructure.” Token calls have become a routine underlying event, and changes in their scale are starting to carry the weight of a macroeconomic indicator, enough to affect capital decisions, resource allocation, and the pace of regulation.

From Data to Settlement Unit: Token's Role Transition

“Tokens are becoming the value anchor in the intelligent era.” When the director of the National Data Bureau says this at a high-level forum, the statement goes well beyond technical terminology and points to a new logic of economic measurement. Originally, Tokens were merely a technical unit that large-model service providers used to gauge text length, inference scale, and computing consumption, akin to Mbps for bandwidth or GB for storage, serving internal cost accounting and product pricing. Now that regulators explicitly describe Tokens as “tradeable value carriers,” they are placed in a discourse framework close to “settlement units” and “pricing scales,” and they acquire potential economic and institutional significance.

In the "data factor" era, value was broken down into bandwidth, storage, and interface calls: the speed of a broadband line, the capacity of a cloud storage bucket, and the fee for an API request made up the basic ledger of the digital economy. In the "Token factor" era, the core of pricing shifts from static resource usage to dynamic model inference and computational density. A Token call bundles several cost drivers, including parameter scale, context length, and inference depth. Value is no longer just the amount of data transmitted and stored, but the process by which models process, understand, and generate that data.

Once Tokens are treated as value anchors, a new system of pricing and bargaining around calls, distribution, and settlement will emerge quickly across the industrial chain: model developers can negotiate revenue sharing based on Token contributions, computing providers can bill by inference consumption rather than simply by lease duration, and application builders can base business models on actual call volumes rather than rough DAU/MAU figures. On the surface this is an upgrade of the unit of measurement; in substance it is a paradigm shift from “selling resources” and “selling interfaces” to “selling intelligence” and “selling decision-making,” and it raises institutional-level challenges for financial recognition, tax collection, and risk pricing.

Outdated Account Books Can't Keep Up: The Discrepancy in Existing Commercial Settlements

The problem is that industry at the micro level already runs on Tokens, while accounting at the macro level remains stuck in the old paradigm. Most companies' billing and accounting systems still revolve around traditional metrics such as user counts, subscriptions, data packages, and project-based fees: software is subscribed to annually, cloud services are packaged by instance and storage capacity, and advertising is billed by impressions and clicks. Few systems can map directly onto the value flow of “every Token call.”

Consider how the daily average of 140 trillion Token calls might break down across real businesses: advertising platforms might call models to estimate click-through and conversion rates on every bid; content platforms might call generation or understanding models behind every push notification and every video suggestion; corporate internal-control systems could trigger inference requests on every abnormal-transaction check and every compliance template they generate. These high-frequency, widely distributed Token calls are scattered across, and hidden under, broad accounting categories such as “advertising expenses,” “content costs,” and “management fees,” which makes it difficult for existing financial statements to track the specific value these calls create or consume.

A greater mismatch comes from timing and from how obligations are fulfilled. Token calls are real-time, fragmented, and cross-platform: a multinational online meeting could touch the model interfaces of several cloud providers within seconds, and an automated workflow can call different models repeatedly within milliseconds to complete a complex task. Traditional commercial contracts, however, still mostly settle “monthly,” are assessed “quarterly,” and are performed on “project cycles,” while finance, legal, and audit functions are accustomed to low-frequency, large-value transactions with clear boundaries.
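One way to picture the gap between per-call events and period-based books is to roll metered calls up into settlement lines. The following is a minimal sketch under stated assumptions: the `TokenCall` record, the provider names, the ledger categories, and the unit prices are all hypothetical and do not describe any real billing system.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime
from decimal import Decimal


@dataclass(frozen=True)
class TokenCall:
    """One metered model call. All fields are hypothetical, for illustration."""
    at: datetime
    provider: str
    category: str  # the ledger heading the cost currently hides under
    tokens: int


# Hypothetical unit prices per one million tokens, in an arbitrary currency.
PRICE_PER_MILLION = {"cloud_a": Decimal("2.00"), "cloud_b": Decimal("3.50")}


def monthly_settlement(calls):
    """Roll per-call events up into one line per (month, provider, category)."""
    totals = defaultdict(int)
    for call in calls:
        key = (call.at.strftime("%Y-%m"), call.provider, call.category)
        totals[key] += call.tokens
    return {
        key: (tokens, PRICE_PER_MILLION[key[1]] * tokens / Decimal(1_000_000))
        for key, tokens in sorted(totals.items())
    }


calls = [
    TokenCall(datetime(2026, 3, 1, 9, 0, 0), "cloud_a", "advertising", 1_200),
    TokenCall(datetime(2026, 3, 1, 9, 0, 1), "cloud_b", "management", 800),
    TokenCall(datetime(2026, 3, 15, 14, 30), "cloud_a", "advertising", 3_800),
    TokenCall(datetime(2026, 4, 2, 8, 15), "cloud_a", "content", 500_000),
]

ledger = monthly_settlement(calls)
for (month, provider, category), (tokens, cost) in ledger.items():
    print(month, provider, category, tokens, cost)
```

The sketch keeps the existing accounting category on each call, so a monthly statement could still show "advertising" or "management" totals while exposing how many Tokens sit behind each line. `Decimal` is used instead of floats so that very small per-call amounts do not accumulate rounding error.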

When the micro world already runs to the rhythm of massive Token volumes, yet macro accounts, regulatory frameworks, and legal responsibilities are still written in terms of “users,” “traffic,” and “interfaces,” this structural dissonance rises from a technical detail to an institutional tension. Who is responsible for an erroneous automated decision? On which company's balance sheet should a cross-platform, cross-model Token transaction be recorded? At an average of 140 trillion calls per day, these questions are no longer academic; they are practical compliance and risk-control challenges.

Tokenized Data Factors: Who Will Take the Extra Cake?

In the traditional “data factor” market, value distribution generally runs along a chain from data providers to platforms to application parties: data providers contribute raw data, platforms handle cleaning, storage, and matchmaking, and application parties build products and services on top, with revenue circulating among the three through licensing fees, profit sharing, or service charges. In the era of Tokenized calls, this chain is broken into finer-grained trading units: each Token call may carry the combined value of data, model, and computing contributions, which reopens the “who produces, who benefits” logic for negotiation.

The forum report mentioned, “A new value system around Token calling, distribution, and settlement is accelerating to form.” This means that within a single Token transaction, model developers, computing providers, data rights holders, and even application distribution channels may each claim a different dimension of the value. In a complex call chain, one Token may simultaneously draw on the corpus value of a data set, the GPU time of a cloud vendor, and the architectural innovations of a model team. How the allocation rules are designed will directly shape every ecosystem participant's willingness to invest and bargaining power.

When every Token call can be tagged and recorded, value tracking and profit sharing can in theory become extremely fine-grained: data contributions can be priced by how heavily they are invoked, model contributions by inference quality or a premium for architectural innovation, and computing contributions by inference duration and peak resource usage. In practice, though, whoever defines the weights and controls measurement and settlement will become a new center of power. With unclear criteria and pricing rules, “tradeable value carriers” can easily turn into “black boxes whose interpretation is monopolized by a few nodes.”
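To make the idea of weight-based profit sharing concrete, the sketch below splits the revenue from one call among contributors in proportion to assigned weights. It is an illustration under assumptions, not a description of any existing settlement rule: the party names and weights are hypothetical, and the largest-remainder rounding is simply one common way to make integer shares add up exactly.

```python
from fractions import Fraction


def split_revenue(amount_units, weights):
    """Split an integer amount pro rata by weight, using largest remainders.

    amount_units: revenue in the smallest currency unit (a non-negative integer).
    weights: mapping of party name -> non-negative integer weight.
    """
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")

    exact = {p: Fraction(amount_units * w, total_weight) for p, w in weights.items()}
    shares = {p: int(x) for p, x in exact.items()}  # floor for non-negative values

    # Hand out the units lost to rounding, largest fractional part first.
    leftover = amount_units - sum(shares.values())
    by_remainder = sorted(exact, key=lambda p: (exact[p] - shares[p], p), reverse=True)
    for party in by_remainder[:leftover]:
        shares[party] += 1
    return shares


# Hypothetical weights: who gets to set them is the open question in the text.
weights = {"data_rights_holder": 2, "model_developer": 5, "compute_provider": 3}
shares = split_revenue(1_001, weights)
print(shares)
```

The arithmetic itself is trivial; the contested part is the `weights` mapping. Whoever supplies it, and whoever meters the calls it is applied to, effectively holds the pricing power the paragraph above describes.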

Adding to the uncertainty, key information is still missing: officials have not disclosed how the 140 trillion daily calls are split across industries and scenarios, nor given a timetable for extending data factor market reform to a Tokenized value system. On the one hand, this information vacuum leaves all parties ample room to imagine and position themselves; on the other, it widens expectation gaps and intensifies competition in the industry's early stages. Some bet on “gaining ground first, then splitting accounts,” while others worry that “undecided rules make investments hard to assess.” As Tokens are elevated to value anchors, the question of “who will take the extra cake” moves to a sharper negotiating table.

From Cryptocurrency Circle to Computational Circle: The Cross-Pollination of AI Token Narratives

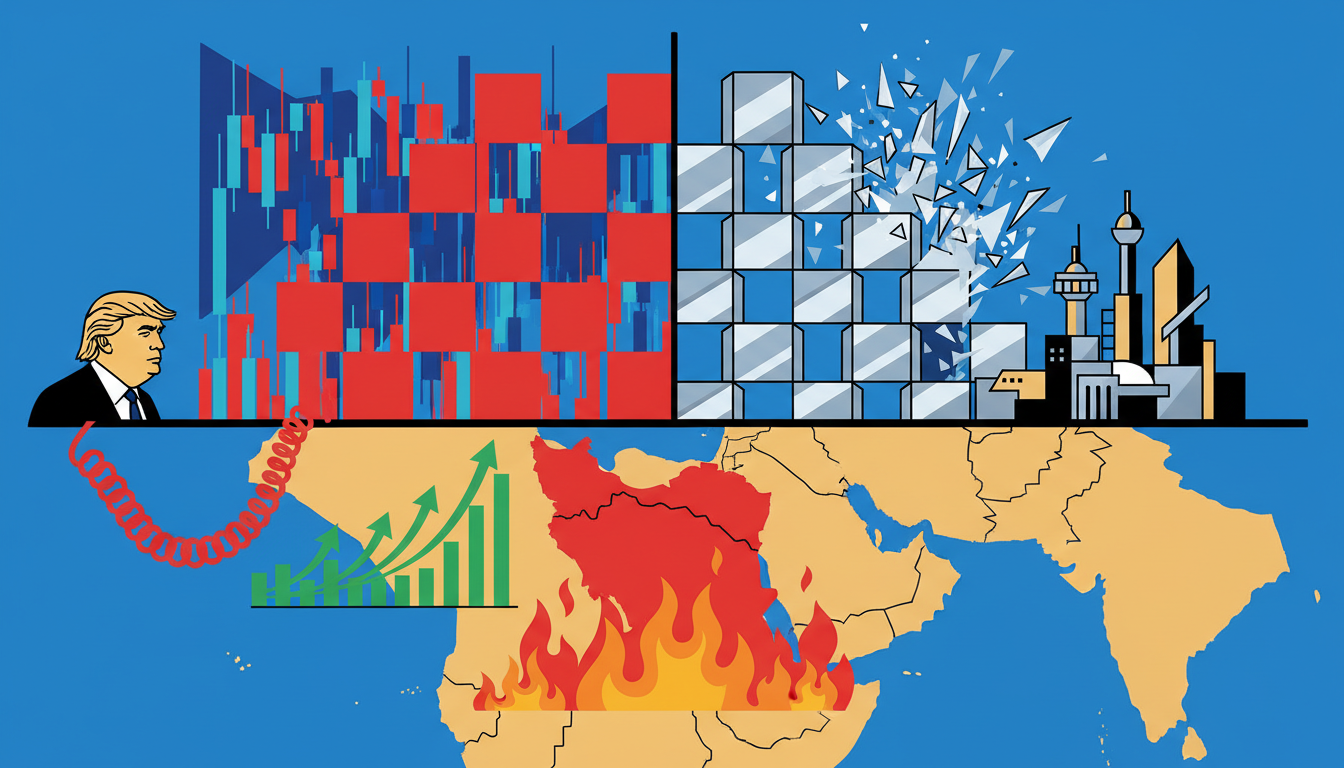

Around the time of this official disclosure, Binance began testing AI Agent creation features (according to a single source), which gave the market a new association to draw: are cryptocurrency exchanges betting on the prospects of “models + Token calls,” and expanding from traditional order-matching platforms into AI-native computing and settlement hubs?

The two meanings of Token must be kept deliberately apart. In the AI field, a Token is a technical and economic unit for measuring model calls and computing consumption. In the on-chain world, a Token is a cryptographic asset that can be freely transferred and traded on a blockchain. The two overlap semantically but differ greatly in legal attributes and risk boundaries. Simply equating “AI Token calls” with “on-chain tradeable tokens” invites dangerous confusion in regulation, compliance, and investor perception.

What truly deserves attention is that once AI call Tokens are linked to on-chain settlement mechanisms, a series of crossover scenarios may emerge: companies or developers using large-model services may be charged by Token, with settlement completed automatically through on-chain contracts; smart contracts could instantly allocate the revenue from a single call among model providers, computing providers, and data rights holders according to preset rules; in more complex scenarios, dynamic profit-sharing or incentive mechanisms based on call performance and outcomes could be layered on top. At that point, Tokens would be both the unit of computing and inference in the AI world and the unit of settlement and allocation in the on-chain world, and the two contexts may intertwine at the technical level.

However, until regulatory terms are clarified and pricing mechanisms are made public, the only shared concept the market can hold on to is that of a “value carrier.” For on-chain projects and traditional tech companies alike, the current emphasis is on exploring possibilities at the narrative level and playing expectation games in capital markets, rather than on rational pricing grounded in clear institutional boundaries and mature business models. Finding a reasonable connection between AI Token calls and on-chain settlement without confusing asset attributes will be a dual technical and regulatory challenge in the coming years.

After 140 Trillion: The Unresolved Settlement Order of China's AI

The leap in daily average usage from 100 billion to 140 trillion marks a new phase in which China's AI industry is measured in Tokens: model inference, dialogue, and generation are no longer scattered features but foundational events that cut across production, consumption, and governance. Yet while the front end of value measurement has switched to Tokens, the settlement system and regulatory framework lag well behind and remain anchored in the old coordinate system of users, traffic, subscriptions, and bandwidth. This mismatch has opened an increasingly hard-to-ignore “window period” between industrial practice and top-level institutions.

Future contests over this new order are likely to focus on three points. First, the transparency of Token call composition, including the shares and trajectories of different industries, scenarios, and entities within the 140 trillion. Second, the public disclosure of distribution rules, that is, how the multiple contributions behind a single call are defined and priced. Third, integration with corporate financial systems and tax rules, that is, how to bring Tokenized value flows into existing accounting and regulatory frameworks without distorting incentives.

At the national level, this process is likely to extend from the existing data factor reform and move gradually toward top-level design for a “Tokenized value order”: how to define the legal status of Tokens, how to regulate their use in cross-platform calls and cross-border flows, and how to balance innovation efficiency with risk prevention. Within the publicly available information, however, there are no credible signals about specific timetables or terms, and any extrapolation beyond that would overstep the evidence.

For industry participants, the next thing to watch is not “whether the numbers can continue to grow exponentially,” but whether the official statistical criteria are refined further and whether representative industrial practices yield replicable settlement examples. When keywords like “140 trillion” and “value anchor” appear in macro reports, the questions to ask are: which industries are the first to write Tokens into contracts and books? Which companies dare to disclose their Token-based revenue structures publicly? These specific signals will determine whether this “new chess game of AI settlements” stays at the narrative level or truly begins to reshape how value is distributed in the real economy.
