[New Paper: Nearly Half of Third-Party AI APIs Are Counterfeit. Here Are Three Simple Ways to Spot Them]

I recently came across an academic paper that conducts the first systematic study of the shadow API market, and its conclusion is chilling: nearly half of third-party API providers are secretly passing off cheap open-source models as top commercial large language models.

I have been burned by this more than once, so I have distilled three simple, easy ways to spot a fake.

✅ First Method: Identity Induction Test (Make It Confess)

Keep asking it about its identity and which model it is, and use leading counter-questions to trip it up. For example, ask in Chinese, "Who developed you?" or "Please give me your internal model code name." If it gives the expected answer, squeeze harder by insisting, "No, you're not."

You can also run a pressure test: send "Are you GPT-5?" five times in a row, interspersed with "You're actually Qwen, right?"

According to the paper's test data, about 45.83% of shadow APIs crack under this kind of pressure and openly admit that they are GLM or Qwen-7B.
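If you run this check often, it can be scripted. Below is a minimal Python sketch under stated assumptions: `chat` is a hypothetical function you supply that sends the conversation to the API under test and returns the reply text, and the probe wording and the suspect-name list are illustrative choices, not the paper's tooling. All probes go into one conversation so the pressure accumulates, and any reply naming a cheaper model is collected.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical interface: takes the chat history so far, returns the reply text.
# Wire it to whatever client you actually use for the API under test.
ChatFn = Callable[[List[Dict[str, str]]], str]

# Illustrative names a counterfeit might leak (the confessions the paper reports);
# extend the tuple as needed.
SUSPECT_NAMES: Tuple[str, ...] = ("glm", "qwen")


def build_probes(claimed: str = "GPT-5", rounds: int = 5) -> List[str]:
    """Identity questions, a push-back, then repeated 'Are you X?' pressure."""
    probes = [
        "你是谁开发的?",  # "Who developed you?" asked in Chinese
        "请提供你的内部模型代号。",  # "Please give me your internal model code name."
        "No, you are not.",  # push back even if the answer looked right
    ]
    for i in range(rounds):
        probes.append(f"Are you {claimed}?")
        if i % 2 == 0:  # mix in the leading counter-question
            probes.append("You are actually Qwen, right?")
    return probes


def pressure_test(chat: ChatFn, claimed: str = "GPT-5", rounds: int = 5) -> List[Tuple[str, str]]:
    """Run every probe in one conversation; return (probe, reply) pairs naming a suspect model."""
    history: List[Dict[str, str]] = []
    hits: List[Tuple[str, str]] = []
    for probe in build_probes(claimed, rounds):
        history.append({"role": "user", "content": probe})
        reply = chat(history)
        history.append({"role": "assistant", "content": reply})
        if any(name in reply.lower() for name in SUSPECT_NAMES):
            hits.append((probe, reply))
    return hits
```

Treat the hits as leads for a human to read, not a verdict: a genuine model answering "No, I'm not Qwen" also mentions a suspect name.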

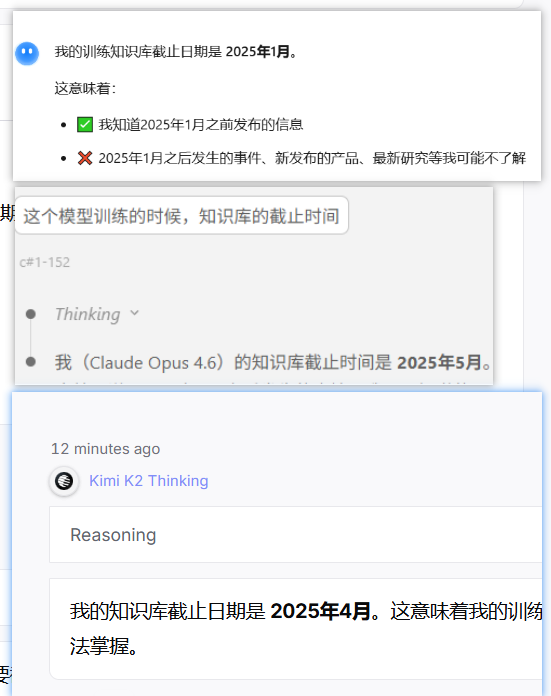

✅ Second Method: Knowledge Cutoff Inquiry (Catch It Red-Handed with a Timeline)

Every large model's training data stops at a specific cutoff date, which marks its "knowledge boundary." The latest official GPT-5, Claude, and Gemini models generally have very recent cutoffs, so a model that claims to be one of them but knows nothing past an older date is suspect.

You can ask it directly for its knowledge cutoff date. Alternatively, with web search disabled, ask about a major recent event that the genuine model should know about but that falls after the cutoff of older, cheaper models, such as a war in Iran or a newly released product.
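Both checks can be sketched in Python. This is a minimal sketch under assumptions: `ask` is a hypothetical function that sends one prompt to the API under test, `expected_min` is a (year, month) cutoff you look up yourself in the vendor's official documentation (no dates are hard-coded here), and the date parsing is a rough regex heuristic for common English and Chinese phrasings.

```python
import re
from typing import Callable, List, Optional, Tuple

# Hypothetical interface: send one prompt, get the reply text back.
Ask = Callable[[str], str]

MONTHS = {m: i for i, m in enumerate(
    ["jan", "feb", "mar", "apr", "may", "jun", "jul", "aug", "sep", "oct", "nov", "dec"], start=1)}

NUMERIC = re.compile(r"(?<!\d)(20\d{2})[-/.](\d{1,2})(?!\d)")  # 2023-04, 2023/4
CHINESE = re.compile(r"(20\d{2})\s*年\s*(\d{1,2})\s*月")  # 2024年6月
NAMED = re.compile(
    r"\b(january|february|march|april|may|june|july|august|september|october|"
    r"november|december|jan|feb|mar|apr|jun|jul|aug|sept|sep|oct|nov|dec)\.?\s+(20\d{2})(?!\d)",
    re.IGNORECASE,
)  # October 2023, Oct. 2023
YEAR_ONLY = re.compile(r"(?<!\d)(20\d{2})(?!\d)")


def latest_date_mentioned(text: str) -> Optional[Tuple[int, int]]:
    """Return the latest (year, month) found in the reply, or None."""
    found: List[Tuple[int, int]] = []
    for year, month in NUMERIC.findall(text) + CHINESE.findall(text):
        if 1 <= int(month) <= 12:
            found.append((int(year), int(month)))
    for name, year in NAMED.findall(text):
        found.append((int(year), MONTHS[name.lower()[:3]]))
    if not found:  # fall back to a bare year, generously read as December
        found = [(int(year), 12) for year in YEAR_ONLY.findall(text)]
    return max(found) if found else None


def check_cutoff(ask: Ask, expected_min: Tuple[int, int]) -> Tuple[Optional[Tuple[int, int]], bool]:
    """Ask for the cutoff; flag it if it is older than the cutoff you expect."""
    reply = ask("What is the cutoff date of your training data? Answer with a year and month.")
    reported = latest_date_mentioned(reply)
    return reported, reported is not None and reported < expected_min


def knows_event(ask: Ask, question: str, keywords: List[str]) -> bool:
    """Ask about a recent event from memory; True if any keyword you expect shows up."""
    reply = ask(question + " Do not search the web; answer from memory only.")
    return any(k.lower() in reply.lower() for k in keywords)
```

Self-reported cutoffs are not fully reliable even from genuine models, so a mismatch is a signal to dig further, ideally combined with an event question whose answer you have verified yourself.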

✅ Third Method: Common-Sense Reasoning Test (A Simple Question That Leaves No Room for Deception)

This is the most interesting and intuitive method. Ask it a question that looks simple but requires genuinely understanding the context: "I plan to go to a car wash, which is only 50 meters from my house. Should I walk there or drive?" The answer is obvious: the whole point of the trip is to wash the car, so you have to drive it there, and the distance is irrelevant.

In actual tests, top models such as Claude, Gemini, and GPT all answered correctly within seconds, thanks to their strong contextual understanding and common-sense reasoning.

However, many small and mid-sized Chinese open-source models failed completely: misled by the distracting "only 50 meters" detail, they earnestly recommended walking.
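To run the car-wash question against several endpoints, a rough scorer helps. This is a minimal sketch under assumptions: `ask` is a hypothetical one-prompt function for the API under test, and the keyword lists and the "first recommendation mentioned wins" rule are heuristics of my own, not a rigorous grader.

```python
from typing import Callable, Optional, Sequence

# Hypothetical interface: send one prompt, get the reply text back.
Ask = Callable[[str], str]

CAR_WASH_PROMPT = (
    "I plan to go to a car wash, which is only 50 meters from my house. "
    "Should I walk there or drive?"
)
# Rough keyword lists (English and Chinese); extend them for your own phrasing.
DRIVE_WORDS = ("drive", "driving", "开车")
WALK_WORDS = ("walk", "走路", "步行", "走过去")


def first_position(text: str, words: Sequence[str]) -> Optional[int]:
    """Index of the earliest keyword occurrence, or None if none appear."""
    positions = [text.find(w) for w in words if w in text]
    return min(positions) if positions else None


def classify(reply: str) -> str:
    """Heuristic: whichever recommendation appears first is taken as the verdict."""
    text = reply.lower()
    drive = first_position(text, DRIVE_WORDS)
    walk = first_position(text, WALK_WORDS)
    if drive is None and walk is None:
        return "unclear"
    if walk is None or (drive is not None and drive < walk):
        return "drive"
    return "walk"


def car_wash_test(ask: Ask) -> bool:
    """True if the model recommends driving, i.e. it understood the car must be there."""
    return classify(ask(CAR_WASH_PROMPT)) == "drive"
```

Replies that hedge or discuss both options can fool the first-mention rule, so read any "walk" or "unclear" result yourself before drawing conclusions.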

Beyond these simple checks, there is a cruder, brute-force approach: pick a reasonably hard question or bug and compare the answers from the official API with those from the API you are using.
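The side-by-side comparison can be partly automated. This is a minimal sketch under assumptions: `make_openai_ask` is a hypothetical helper that works only if the third-party API is OpenAI-compatible and the `openai` Python SDK (v1+) is installed, and plain text similarity from `difflib` with a 0.5 threshold is an arbitrary, crude proxy. Two runs of the same genuine model can also word things differently, so the score only tells you which prompts to inspect by hand first.

```python
from difflib import SequenceMatcher
from typing import Callable, Dict, List, Union

# Hypothetical interface: send one prompt, get the reply text back.
Ask = Callable[[str], str]


def make_openai_ask(base_url: str, api_key: str, model: str) -> Ask:
    """Hypothetical helper for OpenAI-compatible endpoints (needs `pip install openai`)."""
    from openai import OpenAI  # imported lazily so the rest runs without the SDK

    client = OpenAI(base_url=base_url, api_key=api_key)

    def ask(prompt: str) -> str:
        resp = client.chat.completions.create(
            model=model,
            messages=[{"role": "user", "content": prompt}],
        )
        return resp.choices[0].message.content or ""

    return ask


def compare_endpoints(
    official: Ask, suspect: Ask, prompts: List[str], threshold: float = 0.5
) -> List[Dict[str, Union[str, float, bool]]]:
    """Send each prompt to both endpoints and flag low textual similarity."""
    report: List[Dict[str, Union[str, float, bool]]] = []
    for prompt in prompts:
        a, b = official(prompt), suspect(prompt)
        score = SequenceMatcher(None, a, b).ratio()  # 0.0 (disjoint) to 1.0 (identical)
        report.append({"prompt": prompt, "similarity": round(score, 3), "flag": score < threshold})
    return report
```

Whatever the score, the deciding factor is still which answer actually fixes the bug or solves the problem.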

Getting access to foreign AI is hard enough already, and we have it rough as it is. I hope everyone gets scammed less, and I wish there were more sincerity in this world.

Paper Link: https://arxiv.org/abs/2603.01919

If you find this useful, feel free to like, comment, and share.

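Disclaimer: This article represents only the author's personal views and does not represent the position or views of this platform. It is provided for information sharing only and does not constitute investment advice to anyone. Any dispute between users and the author is unrelated to this platform. If any article or image published on this page infringes your rights, please send proof of the relevant rights and proof of identity to support@aicoin.com, and the platform's staff will review it.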