By Sleepy.txt

In February 2026, the hedge fund Situational Awareness LP submitted its quarterly holdings report, which showed that as of the end of the fourth quarter of 2025, the total market value of the fund's U.S. stock holdings was $5.517 billion.

Wall Street manages trillions of dollars in assets, and $5.5 billion is just a drop in the ocean. However, this fund managed less than $400 million just 12 months earlier, and its founder and chief investment officer is still in his mid-twenties.

His name is Leopold Aschenbrenner. 24 years old.

In just 12 months, he grew the fund from $383 million to $5.517 billion, a more than 14-fold increase. During the same period, the S&P 500 saw single-digit growth.

What’s even more surprising is his holdings. Opening the quarterly holdings report, you won't find any of the AI star companies you always see in financial news headlines. Instead, there are companies that make fuel cells, bitcoin miners that just crawled back from the brink of bankruptcy, and chip giants that are being abandoned by the entire market.

He claims his fund invests in AI, but this does not resemble the holdings of an AI fund at all; it looks more like a madman's shopping list.

But this madman happens to be one of the earliest and deepest thinkers on how AI will change the world. Before coming to Wall Street, he was a researcher at OpenAI, where his job was to think about how to keep AI from running amok once it becomes smarter than humans. He was then fired for saying things he shouldn't have said, and he wrote a 165-page manifesto predicting a future that most people find absurd.

Then he bet everything he had on it.

Breaking Down $5.5 Billion: What Did He Buy?

To understand how brilliant Leopold Aschenbrenner is at investing, the most direct way is to open his holdings report and read it line by line.

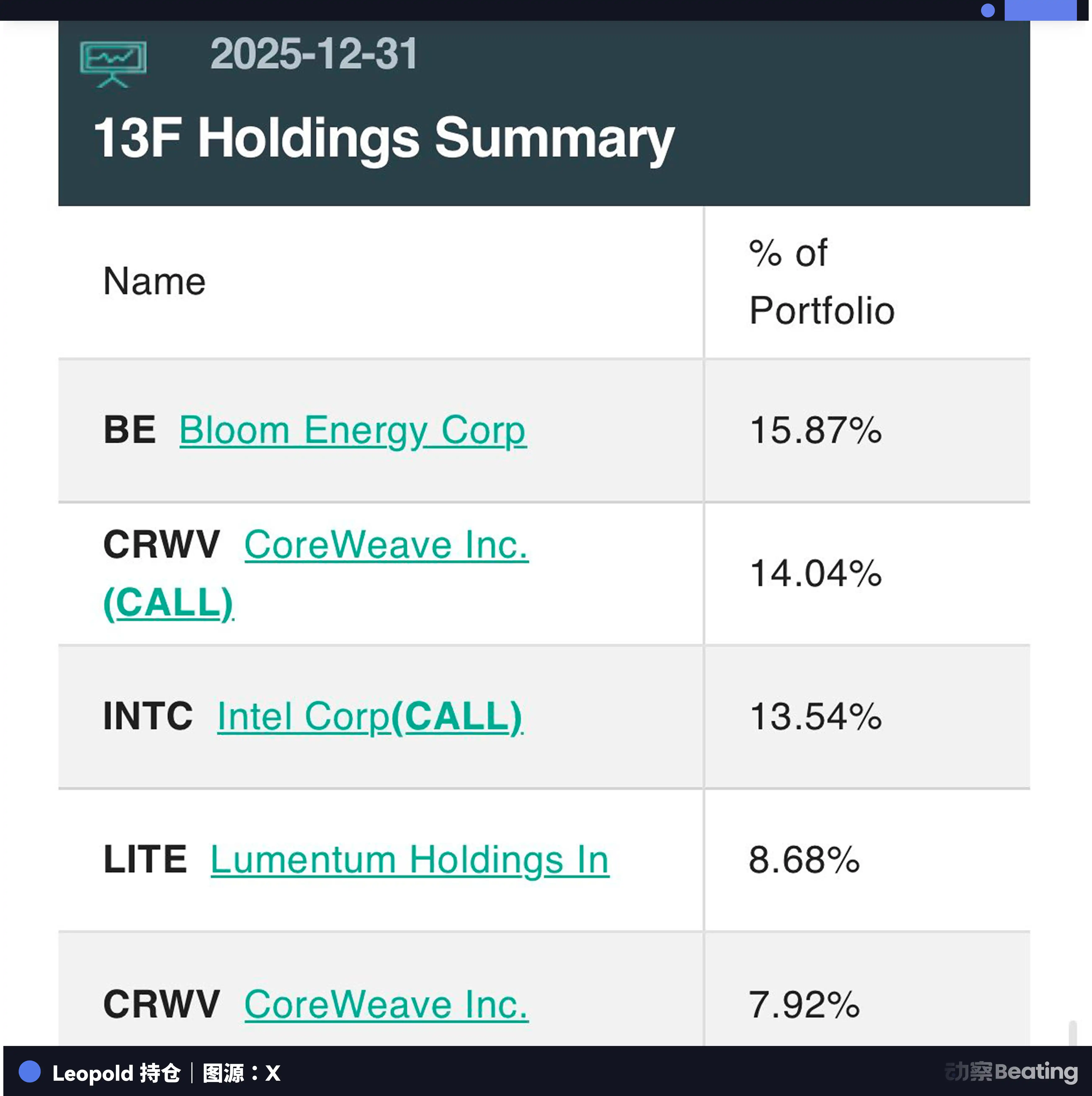

His largest position is Bloom Energy, with a holding value of $876 million, accounting for 15.87% of the total position.

This company makes fuel cells. More precisely, it produces something called "solid oxide fuel cells," which can convert natural gas directly into electricity with high efficiency. The founder, KR Sridhar, was once an engineer for NASA's Mars Exploration Program and was named by Fortune magazine as "one of the top five futurists creating the future today."

An AI fund has placed its biggest bet on a power generation company.

According to Gartner's predictions, the global power consumption of AI-optimized servers will soar from 93 terawatt-hours in 2025 to 432 terawatt-hours by 2030, nearly a fivefold increase in just five years. The power demand from U.S. data centers will nearly triple by 2030, reaching 134.4 gigawatts. Meanwhile, the average age of the U.S. power infrastructure has exceeded 25 years, with many components between 40 and 70 years old, far exceeding their design lives.

In other words, the electricity required by AI exceeds what the entire power grid can provide. And the power grid itself is already aging to the point of falling apart.

The scarcest resource in the AI era is not chips; it's electricity.
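To put the Gartner projection quoted above on an annual footing, the short Python sketch below computes the overall multiple and the compound annual growth rate implied by its two endpoints. The function name `implied_annual_growth` is illustrative, and smooth compounding between 2025 and 2030 is an assumption of the sketch, not something the forecast states.

```python
def implied_annual_growth(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that takes `start` to `end` in `years` years."""
    if start <= 0 or end <= 0 or years <= 0:
        raise ValueError("start, end and years must all be positive")
    return (end / start) ** (1 / years) - 1


# Figures quoted in the article (Gartner projection), in terawatt-hours.
START_TWH = 93.0   # AI-optimized servers, 2025
END_TWH = 432.0    # AI-optimized servers, 2030
YEARS = 5

multiple = END_TWH / START_TWH
rate = implied_annual_growth(START_TWH, END_TWH, YEARS)
print(f"{multiple:.2f}x overall, about {rate:.0%} per year")
```

On these two endpoints the multiple is about 4.65x, which works out to roughly 36% growth per year if the compounding were smooth.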

Bloom Energy's fuel cells neatly sidestep this bottleneck. They do not require connection to the grid and can generate power right next to data centers, operating continuously 24 hours a day. In 2025, Bloom Energy secured a contract with CoreWeave to provide fuel cells for its AI data center in Illinois.

Speaking of CoreWeave, it just happens to be Leopold's second-largest position.

He holds CoreWeave call options worth $774 million, plus $437 million in common stock, totaling over $1.2 billion, accounting for 22% of the total position. CoreWeave is a GPU cloud service provider that transitioned from cryptocurrency mining.

In 2017, Mike Intrator and Brian Venturo banded together to mine bitcoin. In 2018, the cryptocurrency market crashed, and mining became unprofitable. But they had a pile of GPUs. In 2019, they had an epiphany: GPUs could not only mine but also run AI.

Thus, the company transformed from a mining operation into an arms dealer of AI computing power. On March 27, 2025, CoreWeave went public on NASDAQ, raising $1.5 billion at a price of $40 per share. A company that crawled out from a mining operation became a core supplier of AI infrastructure.

Leopold is keen on the large number of GPUs CoreWeave possesses and its deep partnership with Nvidia. In an age where computational power equals productivity, whoever holds GPUs is king.

But what is truly puzzling is his third-largest position: Intel. With a holding value of $747 million, entirely in call options, it represents 13.54% of the total position.

In 2025, Intel was one of the least favored companies on Wall Street. Its stock price had halved from its peak in 2024, its market share was being eaten away by AMD and Nvidia, and the CEO had changed multiple times. Almost all analysts were declaring Intel finished.

Yet this was the moment Leopold chose to buy call options heavily. It is an extremely aggressive bet: the options could soar in value or expire worthless.

What is he betting on? One word: foundry.

In November 2024, the U.S. Department of Commerce announced that Intel would receive up to $7.86 billion in direct funding under the CHIPS and Science Act. The funding is meant to build Intel into a domestic chip foundry that can compete with TSMC.

Against the backdrop of the U.S.-China technological decoupling, the U.S. needs a "home-grown" entity to manufacture chips. Although Intel is behind, it is the only option. Leopold is betting not on Intel's technology, but on the will of the American state.

The next group of holdings is even more interesting: Core Scientific, $419 million; IREN, $329 million; Cipher Mining, $155 million; Riot Platforms, $78 million; Hut 8, $39.5 million.

These companies share a common characteristic: they are all bitcoin mining companies.

Why would an AI fund invest in a bunch of bitcoin miners?

Simply put, because bitcoin miners have the cheapest electricity and the largest data center spaces in the U.S.

Core Scientific boasts over 1,300 megawatts of power capacity. IREN plans to expand its capacity by 1.6 gigawatts in Oklahoma. These miners have already locked in the cheapest power resources globally to survive in the fierce competition for computing power, having signed long-term power purchase agreements.

And right now, what's most lacking for AI data centers is precisely electricity and space.

In 2022, Core Scientific declared bankruptcy due to the cryptocurrency market crash. It completed its restructuring in January 2024, shedding about $1 billion in debt and relisting on NASDAQ. It then signed a 12-year contract with CoreWeave, valued at over $10.2 billion, to convert its mining farms into AI data centers. To commit fully to this transformation, Core Scientific even plans to sell off all its bitcoin holdings.

IREN (formerly Iris Energy) signed a $9.7 billion AI contract with Microsoft, receiving $1.9 billion in advance. Cipher Mining entered into a 15-year lease agreement with Amazon. Riot Platforms signed a 10-year, $311 million contract with AMD.

Overnight, bitcoin miners became landlords in the AI era.

Now, let’s piece this puzzle together.

Bloom Energy provides power, CoreWeave offers GPU computing power, bitcoin mining companies provide space and cheap electricity, and Intel provides domestic chip manufacturing capacity. Added to this are the fourth-largest position Lumentum ($479 million, which produces optical components, a core component in interconnecting AI data centers), the ninth-largest position SanDisk ($250 million, for data storage), and the eleventh-largest position EQT Corp ($133 million, a natural gas producer providing fuel for the fuel cells).

This is a complete supply chain for AI infrastructure.

From power generation to transmission, to chip manufacturing, to GPU computing power, to data storage, to fiber optic interconnection. In every link, he has invested.
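The position values quoted in this section can be tallied by supply-chain layer. The Python sketch below is only a rough check: the dollar figures are the ones cited above, while the layer labels and the function name `layer_weights` are illustrative groupings, not categories from the filing.

```python
from collections import defaultdict

TOTAL_REPORTED_M = 5517.0  # total reported U.S. holdings, in $ millions (from the article)

# name -> (layer label, value in $ millions). Values are as quoted in the article;
# the layer labels are an illustrative grouping, not part of the filing.
POSITIONS_M = {
    "Bloom Energy": ("power", 876.0),
    "EQT Corp": ("power", 133.0),
    "CoreWeave": ("gpu_compute", 774.0 + 437.0),  # call options + common stock
    "Intel": ("chip_manufacturing", 747.0),
    "Lumentum": ("optical_interconnect", 479.0),
    "SanDisk": ("storage", 250.0),
    "Core Scientific": ("sites_and_cheap_power", 419.0),
    "IREN": ("sites_and_cheap_power", 329.0),
    "Cipher Mining": ("sites_and_cheap_power", 155.0),
    "Riot Platforms": ("sites_and_cheap_power", 78.0),
    "Hut 8": ("sites_and_cheap_power", 39.5),
}


def layer_weights(positions, total):
    """Sum position values per layer and express each sum as a share of total."""
    sums = defaultdict(float)
    for layer, value in positions.values():
        sums[layer] += value
    return {layer: value / total for layer, value in sums.items()}


weights = layer_weights(POSITIONS_M, TOTAL_REPORTED_M)
for layer, weight in sorted(weights.items(), key=lambda item: item[1], reverse=True):
    print(f"{layer:<24}{weight:7.2%}")

# Share of the reported total covered by the positions named in the article.
covered = sum(weights.values())
print(f"{'named positions':<24}{covered:7.2%}")
```

The positions named in the article cover about 85% of the reported total; the remainder of the filing is not itemized here.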

At the same time, he made another move that made this logic even clearer. In the fourth quarter of 2025, he fully exited Nvidia, Broadcom, and Vistra, three of the biggest star stocks of the 2024 AI rally.

He also shorted Infosys, one of India's largest IT outsourcing companies.

He sold off the hottest AI chip stocks and bought into forgotten power plants and mining sites. He shorted traditional IT outsourcing, believing that AI programming tools were making programmers more efficient, leading to a contraction in outsourcing demand.

Every transaction pointed to the same judgment: the bottleneck of AI is not in software, but in hardware; not in algorithms, but in electricity; not in cloud models, but in the physical world.

So the question arises: how did a 24-year-old form this understanding?

From the Son of an East German Doctor to the Rebel at OpenAI

Leopold Aschenbrenner was born in Germany, to parents who were both doctors. His mother grew up in East Germany, while his father came from West Germany, and they met after the fall of the Berlin Wall. This family itself carries a mark of historical rupture—Cold War, division, reunion. His later obsession with geopolitical competition may find its initial seed here.

But Germany couldn't hold him. In a later interview, he said, "I really wanted to leave Germany. If you are the most curious child in class, wanting to learn more, the teachers won’t encourage you; they will envy you and try to suppress you."

He referred to this phenomenon as "tall poppy syndrome," where whoever grows tall gets cut down.

At 15, he convinced his parents to let him fly to the U.S. alone and enter Columbia University.

Entering university at 15 is unusual anywhere. But Leopold's performance at Columbia turned the "outlier" into a "legend." He majored in economics and mathematics-statistics and won every award he could, including the Albert Asher Green Memorial Award and the Romine Economics Prize, and he was elected to the Phi Beta Kappa honor society as a junior.

At 17, he wrote a paper on economic growth and existential risk. The renowned economist Tyler Cowen commented, "When I read it, I couldn't believe it was written by a 17-year-old. If this were an MIT doctoral thesis, I would also be impressed."

At 19, he graduated from Columbia University as the valedictorian, the highest honor for undergraduates. In 2021, amidst the shadow of the pandemic, a 19-year-old German boy stood at Columbia's graduation ceremony, delivering a speech on behalf of all graduates.

Tyler Cowen gave him a piece of advice: don’t pursue a PhD in economics.

Cowen felt the academic field of economics had become somewhat "decadent," encouraging him to do bigger things. He also introduced him to the "Twitter weirdos" culture of Silicon Valley, a group fascinated by AI, effective altruism, and the long-term fate of humanity.

After graduating, Leopold first went to the Forethought Foundation, researching long-term economic growth and existential risk. He then joined the Future Fund founded by Sam Bankman-Fried (SBF), working with Nick Beckstead and William MacAskill, core figures of the effective altruism movement. His title was "Economist at the Global Priorities Institute of Oxford University."

This experience was crucial. It means that before entering the AI industry, Aschenbrenner spent several years systematically thinking about one question: what kind of event can fundamentally change the course of human civilization.

Then, he joined OpenAI.

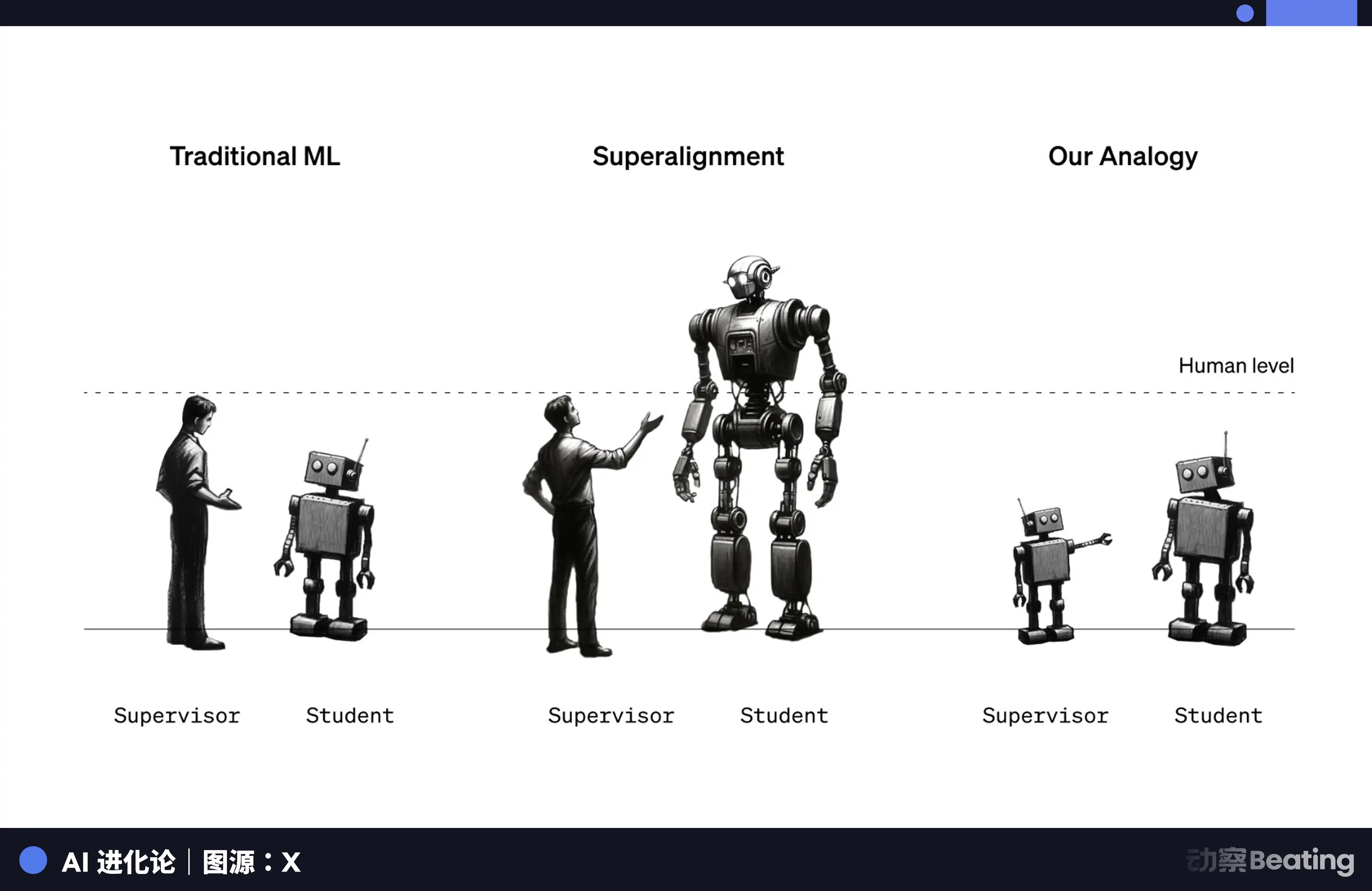

The exact timing is unclear, but he joined a special team: the "Superalignment" team. Established on July 5, 2023, it was co-led by OpenAI co-founder Ilya Sutskever and alignment team leader Jan Leike. Its goal was to solve the alignment problem for superintelligent AI within four years, ensuring that an AI much smarter than humans would still follow human instructions.

OpenAI promised to allocate 20% of its computing power to this team. But a gap lies between promises and reality.

Leopold saw some unsettling things within OpenAI. He submitted a safety memo to the board, warning that the company's safety measures were "seriously inadequate" to prevent foreign governments from stealing critical algorithm secrets. The company's reaction was unexpected. The HR department spoke to him, saying his concerns about espionage were "racist" and "unconstructive." Company lawyers interrogated him about his views on AGI and his loyalty to the team.

In April 2024, OpenAI fired him for "leaking confidential information."

The so-called "leak" was sharing a brainstorming document about AGI safety measures with three external researchers. Leopold stated that the document contained no sensitive information and sharing such documents internally for feedback was normal practice.

A month later, Ilya Sutskever left OpenAI. Three days later, Jan Leike also departed. The Superalignment team was disbanded, and the 20% of computing power that OpenAI had promised was never delivered.

A team researching "how to control superintelligence" was disbanded by the very company producing superintelligence.

The irony of this situation cannot be overstated. But for Leopold, being fired became a kind of liberation. He was no longer employed by anyone, no longer needed to word things carefully in internal memos. He could say what he really wanted to say to the whole world.

On June 4, 2024, he published a 165-page article on a website called situational-awareness.ai. The title was "Situational Awareness: The Decade Ahead."

165 Pages of Prophecy

To understand Leopold's investment logic, you must first read this manifesto. Because that $5.5 billion in holdings is the financial translation of these 165 pages of text.

The core argument of the manifesto can be summarized in one sentence: AGI (Artificial General Intelligence) is very likely to be achieved by 2027.

This assertion sounded insane in June 2024. But Leopold's way of reasoning is very straightforward: count the orders of magnitude.

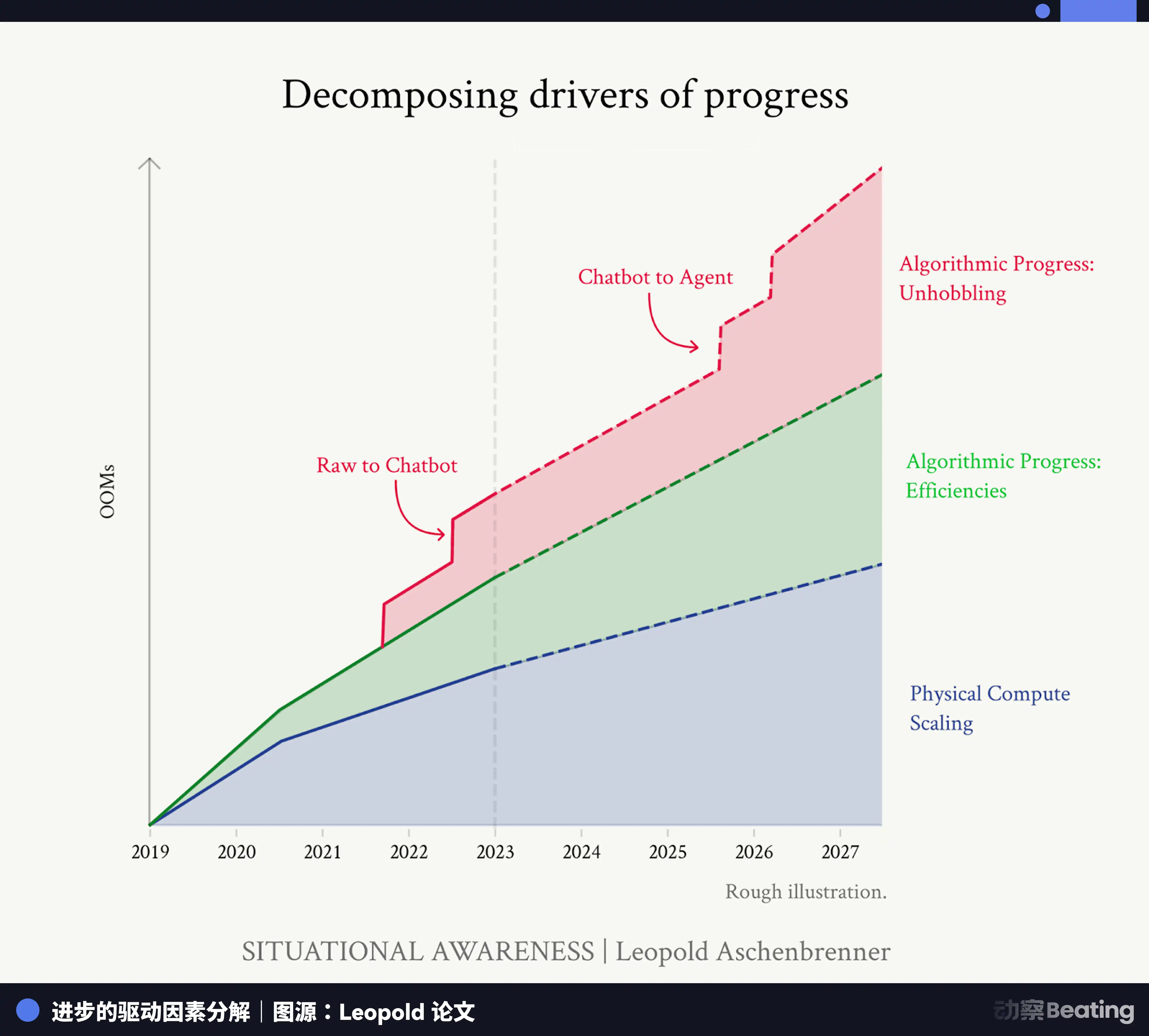

From GPT-2 to GPT-4, AI's capabilities underwent a qualitative leap, from a preschooler to a smart high school student. Behind this leap is roughly a 100,000-fold (5 orders of magnitude) growth in effective compute. This growth has three sources: more physical computing power, improvements in algorithmic efficiency, and the capabilities unlocked by what he calls "unhobbling."

His prediction is that by 2027, growth on a similar scale will happen again. In terms of physical computing power, the compute used to train frontier models will exceed GPT-4's by 100 times. Algorithmic efficiency will improve by about 0.5 orders of magnitude each year, accumulating to about 100 times over four years. Add the gains from "unhobbling," which turns AI from a chatbot into an agent that can use tools and act autonomously, and there is another order-of-magnitude leap.

Two factors of 100 and one factor of 10 multiply to another 100,000 times, another qualitative leap: from smart high school student to surpassing humanity.
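The tally can be checked by adding orders of magnitude (OOMs) and then exponentiating. The Python sketch below is a minimal check that uses only the three factors as the preceding paragraph describes them (100 times, 0.5 OOM per year for four years, and one further order of magnitude); the variable names are illustrative.

```python
import math

YEARS = 4  # horizon used in the text for the algorithmic-efficiency estimate

# Each factor is expressed in orders of magnitude (powers of ten).
physical_compute_ooms = math.log10(100)  # "100 times" more training compute
algorithm_ooms = 0.5 * YEARS             # about 0.5 OOM per year
agent_gain_ooms = 1.0                    # one further OOM from the chatbot-to-agent shift

total_ooms = physical_compute_ooms + algorithm_ooms + agent_gain_ooms
total_multiple = 10 ** total_ooms

print(f"{total_ooms:.0f} OOMs -> {total_multiple:,.0f}x effective compute")
```

Because the factors multiply, their exponents add: 2 + 2 + 1 = 5 orders of magnitude, or about 100,000 times.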

What really makes people uneasy is that starting from this prediction, he deduces a series of consequences.

The first consequence: trillion-dollar-scale computing clusters.

He wrote that in the past year, the conversation in Silicon Valley has shifted from $10 billion computing clusters to $100 billion clusters, and then recently to trillion-dollar clusters. Every six months, there is an additional zero on the boardroom agenda. By the end of this decade, hundreds of millions of GPUs will be operational.

This prediction sounded exaggerated in June 2024. But in January 2025, the Trump administration announced the Stargate project, a joint investment by SoftBank, OpenAI, Oracle, and MGX, planning to invest $500 billion in U.S. AI infrastructure over four years. The first immediate deployment was $100 billion, and construction has already begun in Texas.

What he wrote in his manifesto about "trillion-dollar clusters" became an official plan from the White House six months later.

The second consequence: an electricity crisis.

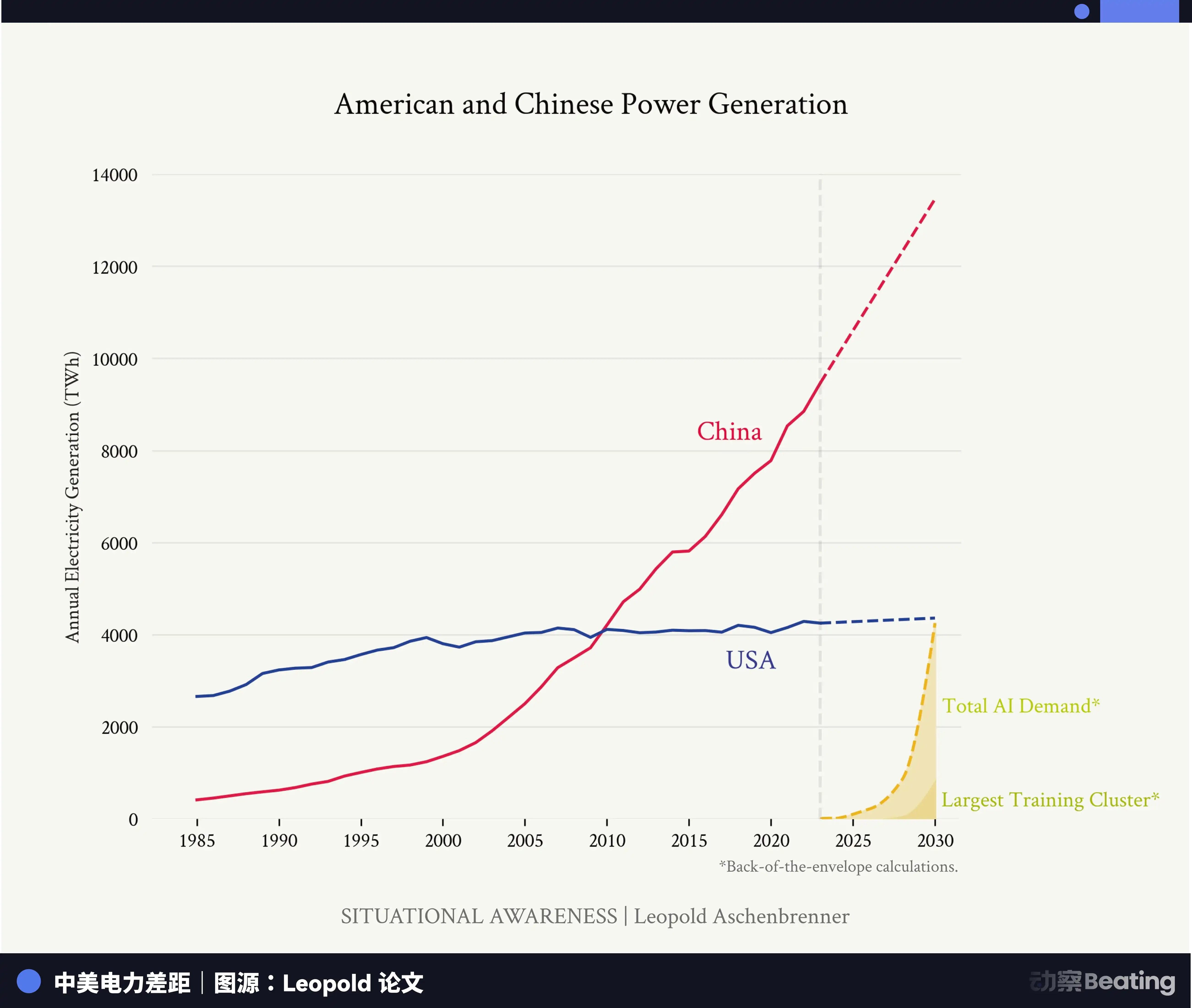

How much electricity do hundreds of millions of GPUs require? Leopold's answer is that U.S. electricity production would have to grow by tens of percent.

Data confirms his judgment. In 2024, the total capital expenditures of Amazon, Microsoft, Google, and Meta exceeded $200 billion, a 62% increase over 2023. Among them, Amazon alone spent $85.8 billion, an increase of 78% year-over-year. In 2025, Amazon's capital expenditure is expected to surpass $100 billion.

The vast majority of this money is being spent on data centers and electricity infrastructure.

Microsoft even did something unimaginable a decade ago: it signed a 20-year power purchase agreement with Constellation Energy to restart the Three Mile Island nuclear power plant.

Yes, it's the same Three Mile Island that experienced the worst nuclear accident in U.S. history in 1979.

This nuclear power plant is set to reopen in 2028, renamed the Crane Clean Energy Center, specifically to power Microsoft's data centers. Constellation Energy's CEO Joe Dominguez stated: "Providing power to critical industries, including data centers, requires ample, carbon-free and reliable energy, which nuclear power is the only source capable of consistently delivering."

When a software company begins to restart a nuclear power plant, you know that power has shifted from being an infrastructure issue to a strategic resource issue.

The third consequence: a geopolitical competition.

The most controversial part of the manifesto is that Leopold defines the AGI competition as a struggle concerning the survival of the "free world" in almost Cold War terms. He harshly criticizes the security measures of America's leading AI labs as being virtually nonexistent. He urgently calls for AI algorithms and model weights to be treated as the nation's top secrets.

He even predicts that the U.S. government will ultimately have to launch a national AGI project similar to the "Manhattan Project."

These assertions sparked intense debate. Critics argue that he oversimplifies the complexities of geopolitics, justifying unrestrained accelerated development through a narrative of panic.

But others believe he speaks the truth. Dario Amodei of Anthropic and Sam Altman of OpenAI share his belief that AGI will soon become a reality.

The true value of the manifesto lies not in whether its predictions are 100% accurate, but in that it provides a complete, actionable framework of thought.

If AGI truly arrives around 2027, then what does the world need before that? It needs massive computational power.

What does computational power need? It needs GPUs.

What do GPUs need? They need electricity.

Where does the electricity come from? From power plants, from nuclear stations, from bitcoin mines with cheap electricity.

Where are chips made? At TSMC.

But what if the U.S. and China decouple? Then Intel is needed.

How are data centers interconnected? Optical components—Lumentum.

Where is data stored? Storage—SanDisk.

You see, this is the logic of the holdings report.

The manifesto is the map; the holdings are the route. Leopold translated this 165-page macro prediction into an investment portfolio that can be wagered with real money. Each buy corresponds to a point made in the manifesto. Each sell reflects a hypothesis he believes is mispriced by the market.

But just having the map isn't enough. In the real world of investing, you also need one more thing: to continue believing in yourself when everyone tells you you're wrong.

This ability was put to the test in the harshest way on January 27, 2025.

DeepSeek Shock

On January 27, 2025, the release of DeepSeek's DeepSeek-R1 model sent Wall Street into panic. The model's performance was close to OpenAI's o1, but its operating costs were 20 to 50 times cheaper. Even more shocking was that its predecessor, DeepSeek-V3, allegedly had training costs of less than $6 million, using Nvidia H800 chips that were sanctioned by the U.S. and had limited performance.

Market logic collapsed instantly.

If the Chinese could train a top model for $6 million using crippled chips, what did that mean for the hundreds of billions that American tech giants spend annually? Did those trillion-dollar computing cluster plans still hold any meaning? Would the demand for GPUs plummet?

Panic spread like a plague. Nvidia's stock fell nearly 17%, erasing $593 billion in market value in a single day—the largest single-day loss in Wall Street history. The Philadelphia Semiconductor Index plummeted 9.2%, marking the largest single-day drop since the pandemic panic in March 2020. Broadcom dropped 17.4%, Marvell fell 19.1%, and Oracle was down 13.8%.

The decline began in Asia, transmitted to Europe, and ultimately detonated in the U.S. Within a single day, the NASDAQ 100 index constituents lost nearly a trillion dollars in market value.

Silicon Valley venture capital mogul Marc Andreessen referred to DeepSeek as AI's "Sputnik moment," stating, "This is one of the most astonishing and impressive breakthroughs I've ever seen, and as an open-source project, it's a gift to the world."

For Leopold's fund, this day should have been a disaster. His holdings were all AI infrastructure stocks, while the market was questioning the entire logic of AI infrastructure.

But according to Fortune magazine, an investor in Situational Awareness LP revealed that during that day's panicked sell-off, a large tech fund called to ask what was going on. The answer it got was four words:

"Leopold says it's fine."

Why was Leopold so calm? Because he believed that the emergence of DeepSeek not only did not overturn his logic but instead confirmed it.

One of the core arguments in his manifesto is that AI's progress will not slow down; it will only accelerate.

The improvement in algorithm efficiency is one of the three engines driving AI development. DeepSeek trained a stronger model with less money and weaker chips, which exactly proves that algorithm efficiency is rapidly improving. Higher algorithm efficiency means that the same computing power can produce stronger AI, which will stimulate more demand for computing power, not diminish it.

To phrase it using his manifesto's framework: DeepSeek didn’t prove that "we don't need so many GPUs," but that "each GPU has become more valuable." When you can train better models with less money, you won't stop; you'll train more, larger, and stronger models.

Panic stemmed from the fear that "demand will disappear." But those who truly understand AI know that falling costs never eliminate demand; they only create more demand.
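Whether cheaper compute raises or lowers total spending depends on how strongly demand responds to price. The Python sketch below illustrates this with a constant-elasticity demand curve, a textbook simplification introduced here for illustration and not a model taken from the manifesto; the function name, the elasticity values, and the tenfold cost drop are all hypothetical.

```python
def compute_spend(cost_per_unit: float, elasticity: float, scale: float = 1.0) -> float:
    """Total spend when quantity demanded = scale * cost_per_unit ** -elasticity."""
    if cost_per_unit <= 0:
        raise ValueError("cost_per_unit must be positive")
    quantity = scale * cost_per_unit ** -elasticity
    return cost_per_unit * quantity


COST_BEFORE = 1.0  # normalized cost of one unit of compute
COST_AFTER = 0.1   # hypothetical tenfold drop in cost

for elasticity in (0.5, 1.0, 1.5):
    before = compute_spend(COST_BEFORE, elasticity)
    after = compute_spend(COST_AFTER, elasticity)
    print(f"elasticity {elasticity}: spend goes from {before:.2f} to {after:.2f}")
```

In this toy model, total spending rises after the cost drop only when elasticity is above 1, which is the regime the argument above assumes for AI compute.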

In the midst of the panic, Leopold bought in against the trend. The market quickly proved him right. Nvidia and the entire AI sector rebounded rapidly in the weeks that followed, reaching levels higher than before the crash.

In the world of investing, conviction is the rarest asset. That is not because forming a conviction is hard, but because holding onto it when everyone tells you you're wrong runs almost against human nature.

The End of the Physical World

The story of Leopold Aschenbrenner can certainly be simplified into a tale of a genius teenager becoming rich. But if money is all you see, you miss the true value of this story.

What he did right was to shift his focus from the code and model parameters on the screen to the smokestacks of power plants, the substations of mining sites, and the fiber optic cables crossing continents.

In 2024, the world was discussing how powerful GPT-5 would be, how realistic videos could be generated by Sora, and when AI would replace programmers. These discussions are undoubtedly important. But Leopold pressed on a more fundamental question: how much electricity do these things need? Where does the electricity come from?

This question sounds too simple, but precisely this simple question points to the greatest investment opportunity in the AI era.

AI is growing at an exponential rate, while the physical infrastructure supporting it is stuck in the last century. Leopold saw this gap, then traced it all the way to the far end of the physical world. Every step starts from a physical bottleneck, finds a company that solves it, and bets on that company.

This methodology is not new in essence. During the California Gold Rush in the 19th century, the people who made the most money were not the prospectors but those selling shovels and jeans. Levi Strauss rose during that time.

However, knowing this principle is one thing; executing it in the AI era is another.

To execute it, you need to possess two capabilities simultaneously: one is a profound understanding of technological trends, knowing the development paths and resource needs of AI; the other is a concrete understanding of the physical world, knowing where electricity comes from, how to build data centers, and how to lay fiber optics.

The former requires you to have spent time in an OpenAI lab; the latter requires you to be willing to squat down and study the power contracts of a bankrupt mining company.

Technical people understand AI but not the electricity market. Financial people understand the market but not the physical constraints of AI. Leopold has both.

But more important than ability is perspective.

A quote from his manifesto often gets cited: "You can see the future first in San Francisco." The subtext of this statement is: the future is not evenly distributed.

The essence of investing is finding price mismatches in a future that has already arrived but is not yet evenly distributed.

Leopold has seen the capability curve of AI firsthand in an OpenAI lab; he knows that GPT-4 is not the endpoint but the starting point. He knows larger models, greater computing power, and crazier capital investments are coming next. Meanwhile, the market is still debating whether "AI is a bubble."

This is the mismatch. What he did was turn that mismatch into $5.5 billion.
