╰┈➤Starting from Pseudo AI Layer1 and Quasi AI Layer1

▌ AI Information Storage Methods Differ from Traditional Applications

In traditional applications, program code stores the behavior of applications, and the objects of these behaviors are data, which is stored in databases.

For example, in an e-commerce system, actions like adding to cart, placing an order, shipping, and confirming receipt are complex behaviors written into code and stored. The objects of these behaviors include buyer information, seller information, product information, etc., which are stored in a database. When a user interacts with the system, the program executes according to the algorithm and interacts with the database. For instance, when a buyer purchases a phone, an order record is added to the database, while the seller's inventory data decreases by one.
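The split described above, with behavior in code and objects in a database, can be sketched in a few lines. This is a minimal illustration only: the table names, column names, and the `place_order` function are hypothetical and not taken from any real e-commerce system.

```python
import sqlite3

# Hypothetical schema: table and column names are illustrative only.
conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE inventory (product TEXT PRIMARY KEY, stock INTEGER NOT NULL);
    CREATE TABLE orders (id INTEGER PRIMARY KEY, buyer TEXT, product TEXT);
    INSERT INTO inventory VALUES ('phone', 10);
    """
)


def place_order(db: sqlite3.Connection, buyer: str, product: str) -> bool:
    """Behavior lives in code: decrement stock and record the order atomically."""
    with db:  # commits on success, rolls back if an exception is raised
        cur = db.execute(
            "UPDATE inventory SET stock = stock - 1 WHERE product = ? AND stock > 0",
            (product,),
        )
        if cur.rowcount == 0:
            return False  # unknown product or out of stock
        db.execute(
            "INSERT INTO orders (buyer, product) VALUES (?, ?)", (buyer, product)
        )
    return True


place_order(conn, "alice", "phone")
stock = conn.execute(
    "SELECT stock FROM inventory WHERE product = 'phone'"
).fetchone()[0]
order_count = conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
print(stock, order_count)
```

The program logic never changes as users shop; only the rows in the database do.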

However, AI information storage differs from traditional applications; AI simulates the human brain and possesses learning and thinking capabilities. AI's learning and thinking behaviors are executed through algorithms, and the objects of learning and thinking are the parameters of the AI. Regardless of whether it is algorithm programs or parameter data, both are stored within the AI model.

For example, when a user communicates with a large language model, teaching it about Chinese poetry, explaining concepts like tonal patterns and the structure of quatrains and regulated verse, the model compresses this learned knowledge and stores it as parameters.

Thus, the scale of AI models is vast and continues to grow. Current mainstream AI models are approximately 100GB to 1TB in size (data source: Claude).

This scale is difficult for blockchain to accommodate.

Ethereum has an average block capacity of about 125KB, making it challenging for the blockchain to store a complete AI model.
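A back-of-the-envelope calculation makes the mismatch concrete. The sketch below uses the figures quoted above (a 100GB model and about 125KB per block) and additionally assumes decimal units, Ethereum's 12-second slot time, and that every byte of every block could be devoted to the model, which is unrealistic in practice.

```python
import math

MODEL_SIZE_BYTES = 100 * 10**9   # 100GB, the low end of the range quoted above
BLOCK_SIZE_BYTES = 125 * 10**3   # ~125KB average block capacity quoted above
BLOCK_TIME_SECONDS = 12          # assumption: Ethereum's 12-second slot time


def blocks_needed(model_bytes: int, block_bytes: int) -> int:
    """Blocks required if every byte of every block held model data."""
    return math.ceil(model_bytes / block_bytes)


blocks = blocks_needed(MODEL_SIZE_BYTES, BLOCK_SIZE_BYTES)
days = blocks * BLOCK_TIME_SECONDS / 86_400

print(f"{blocks:,} blocks, about {days:.0f} days of uninterrupted block space")
```

Under these assumptions, even the smallest model in the quoted range would occupy the entire chain for months.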

▌ Existing AI + Blockchain

◆ AI Fine-tuning on the Blockchain

Although it is difficult for the blockchain to store a complete AI model, AI fine-tuning may be stored on the blockchain.

For example, suppose a large language model has already been taught about poetic structures. During a conversation with Claude, it became clear that the model had learned only modern tonal patterns, which differ slightly from the ancient ones: more than 20 characters are pronounced differently in modern and ancient usage, and around 200 characters have the opposite tonal classification. This knowledge was then taught to Claude.

At this point, the large language model can fine-tune its parameters accordingly, and the scale of this fine-tuning is small enough to be written to the blockchain.

Bittensor has subnetworks specifically for AI fine-tuning, which write an AI model's fine-tuning data to the blockchain as a record of how the model was fine-tuned.

◆ AI Incentive and Coordination Layer

In addition to the AI fine-tuning subnet, most of the Bittensor blockchain is used for incentivizing and coordinating AI.

The training and application of AI models do not occur on the Bittensor chain. Although different AI models can share data, that data is not on the Bittensor chain either. None of the three components of AI (training data, AI models, and AI applications) is on the Bittensor chain. Some so-called Bittensor subnetworks are essentially AI applications themselves, which is somewhat controversial.

However, the incentives associated with AI data sharing, AI training, and AI operation are on the Bittensor chain. Through decentralized incentives, the training and application of AI are promoted.

The storage methods for AI data and AI models in different Bittensor subnetworks vary, but most are centralized storage methods.

In the ecosystem, IP registration and management are on-chain. When AI models use IP works, it triggers an incentive mechanism, and the incentives are executed on-chain. This also belongs to the AI incentive and coordination layer, where the input data for AI, which is the IP works themselves, remains in a centralized storage method, and the AI models and generated AI works are also stored in a centralized manner.

▌ Incomplete Decentralization

AI fine-tuning on the blockchain and the AI incentive and coordination layer represent an incomplete decentralization of AI.

AI fine-tuning on the blockchain records the fine-tuning of AI models during training and inference in a decentralized manner, but the AI training data and AI models are still stored in a centralized manner, which should be classified as Quasi AI Layer1.

If only the AI incentive layer is decentralized, while the AI training data, AI models, and AI fine-tuning processes are all centralized, this may resemble Pseudo AI Layer1 more closely.

Indeed, enterprise-level AI training and inference have higher efficiency. However, the key issue with Quasi AI Layer1 or Pseudo AI Layer1 is the incompleteness of decentralization.

╰┈➤Decentralized AI Layer1

▌ What is the Significance of Decentralized AI Layer1?

Unless an AI has the background and resources of GPT, centralization may trap it in an information cocoon. Most AI models trained in a centralized way are likely to rely on limited private data, which can leave them flawed or even biased. If AI training and application are viewed as a form of social production, the significance of decentralized AI is twofold:

On one hand, AI training data and AI models, as means of production, are freed from centralized monopoly. Decentralization allows more training data to be shared, which can make AI models more complete.

On the other hand, decentralized relations of production break the monopoly value of AI computing power, data, and other resources, allowing AI startups to survive and grow at lower cost and giving AI consumers the chance to reduce their usage costs.

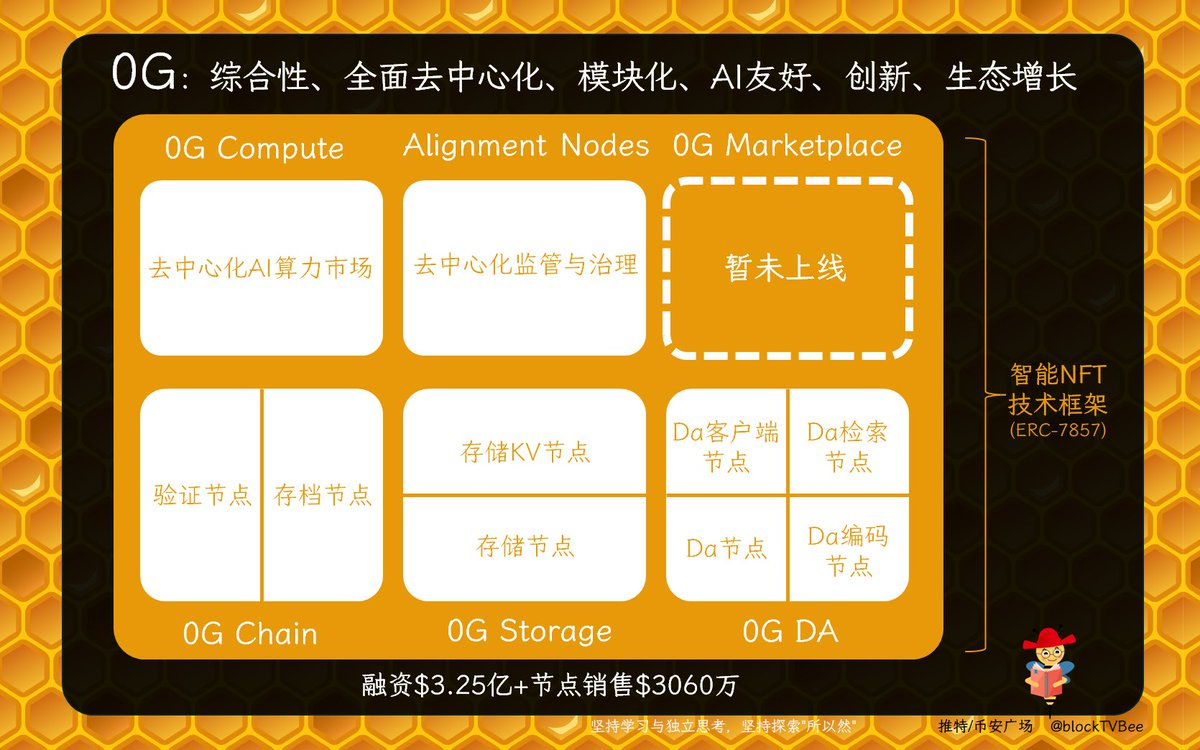

▌ 0G deAIOS

0G is a project dedicated to building a fully decentralized AI ecosystem, from technical infrastructure to ecological infrastructure, creating a fully decentralized AI operating system called deAIOS.

The first feature of 0G is more comprehensive decentralization, which covers the following:

AI-related economic incentives and financial settlements,

Distribution, regulation, and coordination of AI ecological resources,

Storage and sharing of AI data,

Training, inference, and storage of AI models…

The second feature of 0G is modular design, where both technical and ecological infrastructures consist of several modules, each of which is further composed of various functional modules. This allows 0G's deAIOS to adapt better to the development of AI technology; whenever various functions need to be added, removed, or modified, only the corresponding module needs to be scaled or updated. On the other hand, each module of 0G can also be independently applied to Web3 AI, Web2 AI, and non-AI application scenarios.

╰┈➤0G's Decentralized Underlying Infrastructure

0G employs a comprehensive modular design at the technical infrastructure layer, including 0G Chain, 0G Storage, and 0G DA modules.

▌ 0G Chain More Suitable for AI Applications

0G Chain can achieve decentralized fine-tuning functions and decentralized incentive coordination layer functions similar to Bittensor and Camp Layer1.

◆ Fully Compatible EVM Modular High-Speed Public Chain

0G Chain leverages the modular advantages of Cosmos SDK for continuous optimization and innovation. First, it integrates the Ethermint module to achieve high compatibility with EVM. Second, it optimizes algorithm parameters to enhance consensus execution efficiency, achieving TPS of over 2500.

Third, according to 0G's official Twitter, "TPS of 11000 per shard has been achieved on 50 distributed validators."

Fourth, the 0G roadmap mentions a DAG-based consensus algorithm. Once implemented, it can replace the blockchain's serial execution with parallel block processing, further improving efficiency.

At the consensus layer, 0G Chain does not require as many validators (1 million) as Ethereum; it only needs 50-200 validators, reducing excessive redundancy and improving the execution efficiency of blockchain consensus, making it more suitable for AI application needs. It is hard to imagine that after an AI application sends a transaction request, it would need to wait for 1 million nodes to collaborate for validation, which would take a long time.

At the application execution layer, 0G Chain is highly compatible with EVM, allowing smart contract developers and on-chain users to develop or participate in the ecosystem according to their previous habits.

Even various node programs of 0G achieve EVM compatibility through the integration of Geth, allowing node operators to participate in the ecosystem using EVM wallets.

◆ Validator Nodes and Archival Nodes

0G Chain operates based on distributed validator nodes and archival nodes.

- Distributed validator nodes are responsible for the consensus layer, coordinating and producing blocks according to the consensus algorithm, ensuring the security of 0G Chain.

- Distributed archival nodes are responsible for the execution layer, running EVM, executing smart contracts, computing and processing transactions, and storing state data.

This again reflects the modular characteristics of 0G, where the consensus module and execution module can be independently upgraded, optimized, or replaced. This provides long-term sustainable development space for technological exploration and progress within the 0G ecosystem.

▌ 0G Storage Designed for AI Applications

Decentralized storage can accommodate the scale required for AI training data and AI models.

0G Storage allows AI data to be stored in a decentralized manner, providing security, resistance to censorship, and other benefits. It effectively fills the gaps of Quasi AI Layer1 and Pseudo AI Layer1, completing the final piece of the AI Layer1 puzzle.

Although other AI Layer1s can collaborate with existing decentralized storage networks, the performance and cost models of current decentralized storage networks are not suitable for AI applications. AI applications often require high-frequency read and write operations, which networks like IPFS, Filecoin, and Arweave struggle to meet.

◆ Decentralized Storage Structure Supporting Fast Read and Write

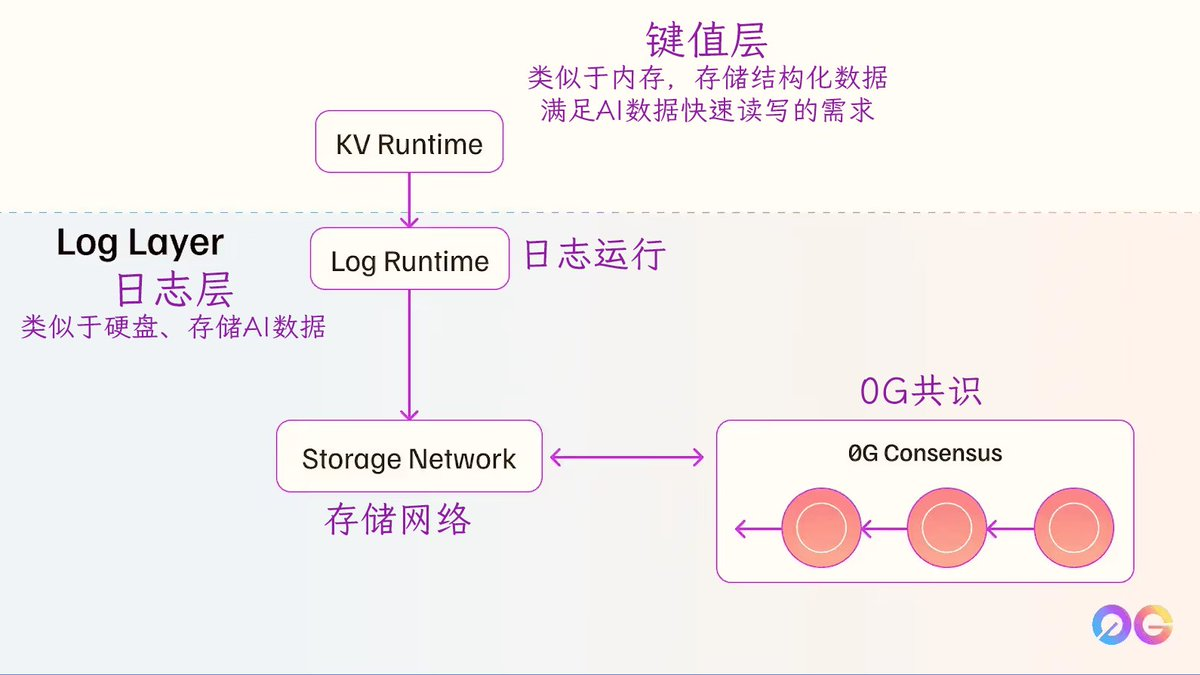

0G Storage is specifically developed for AI, consisting of a log layer and a key-value layer, both of which are modular and can be used independently.

The log layer is similar to a hard drive, storing AI input data (datasets for training, testing, and validation), AI output data, and complete AI models, as well as data from the AI training and inference processes. Additionally, the storage in the log layer is permanently written according to the consensus algorithm, with written data timestamped, possessing characteristics of decentralization and immutability typical of blockchain.

The key-value layer is akin to memory, where necessary data from the log layer is stored in a key-value data structure. For example:

AI_100 = {
    name=XXX,
    path="log://cluster1/models/xxx/v2/"
    …
}

This way, a caller can use AI_100[path] to quickly read the storage path of the model named XXX.

It is this design that allows 0G Storage to read and write data quickly, meeting the needs of AI applications.
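The relationship between the two layers can be sketched as an append-only log plus a mutable index over it. This is a toy, single-process illustration: the class names, method names, and the use of a list position as an address are assumptions made for the example and do not reflect 0G Storage's actual interfaces.

```python
import time
from dataclasses import dataclass, field


@dataclass
class LogLayer:
    """Append-only store: entries are never modified after being written."""
    entries: list = field(default_factory=list)

    def append(self, data: bytes) -> int:
        self.entries.append((time.time(), data))  # timestamp each write
        return len(self.entries) - 1              # position acts as the address

    def read(self, position: int) -> bytes:
        return self.entries[position][1]


@dataclass
class KeyValueLayer:
    """Mutable index mapping keys to metadata about log entries."""
    log: LogLayer
    index: dict = field(default_factory=dict)

    def put(self, key: str, name: str, data: bytes) -> None:
        position = self.log.append(data)
        self.index[key] = {"name": name, "position": position}

    def get(self, key: str) -> bytes:
        return self.log.read(self.index[key]["position"])


log = LogLayer()
kv = KeyValueLayer(log)
kv.put("AI_100", "XXX", b"model weights v1")
kv.put("AI_100", "XXX", b"model weights v2")  # new log entry; old one is retained
print(kv.get("AI_100"), len(log.entries))
```

Updating a key only moves the pointer in the index, so lookups stay fast while the full history remains in the log.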

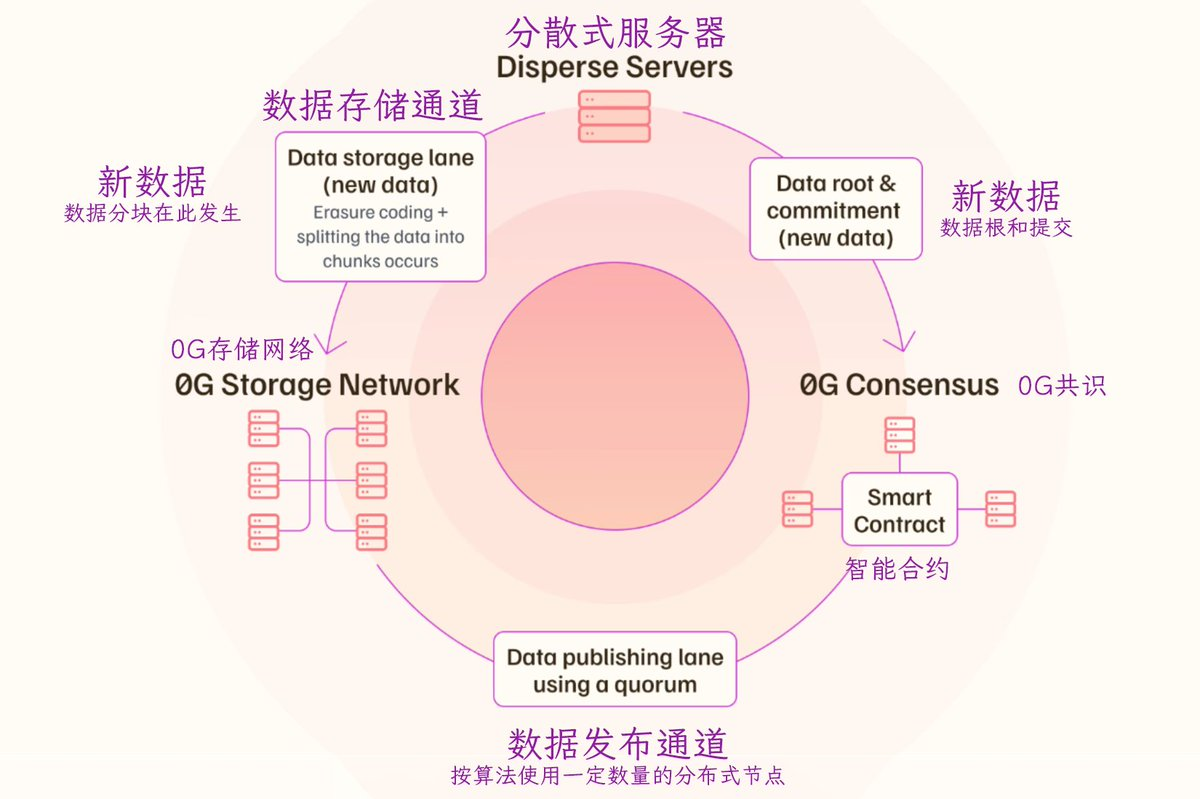

The data storage channel is solely responsible for storing data, using erasure coding technology to divide the storage objects into several segments distributed across different nodes. Additionally, some redundant segments are calculated and stored on other nodes according to the erasure coding algorithm, which are used to recover file segments in case of node failures. The erasure coding algorithm used by 0G Storage ensures that even if 30% of the nodes are inaccessible, the complete storage object can still be read.
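The idea of recovering lost segments from redundant ones can be shown with the simplest possible erasure code, a single XOR parity segment. This is deliberately much weaker than what the text describes: it repairs only one lost segment, whereas tolerating 30% of nodes being offline requires a stronger code. Reed-Solomon is a common choice for that, though the text does not say which algorithm 0G Storage uses. The function names are illustrative.

```python
from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def encode(data: bytes, k: int) -> list[bytes]:
    """Split data into k equal segments and append one XOR parity segment."""
    seg_len = -(-len(data) // k)                      # ceiling division
    padded = data.ljust(seg_len * k, b"\0")
    segments = [padded[i * seg_len:(i + 1) * seg_len] for i in range(k)]
    return segments + [reduce(xor_bytes, segments)]


def decode(segments: list, original_len: int) -> bytes:
    """Rebuild the data when at most one segment is missing (None)."""
    missing = [i for i, s in enumerate(segments) if s is None]
    if len(missing) > 1:
        raise ValueError("single parity can only repair one lost segment")
    if missing:
        present = [s for s in segments if s is not None]
        segments = list(segments)
        # XOR of all surviving segments equals the missing one.
        segments[missing[0]] = reduce(xor_bytes, present)
    return b"".join(segments[:-1])[:original_len]


payload = b"model weights and training data"
stored = encode(payload, k=4)
stored[2] = None                      # simulate one unreachable node
recovered = decode(stored, len(payload))
print(recovered == payload)
```

Each element of `stored` would live on a different node; losing any one of the five still leaves enough information to rebuild the object.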

The data publishing channel, upon receiving new data, is responsible for generating the corresponding metadata, creating and verifying the availability proof of the storage, and writing the relevant information into the blockchain smart contract (which could be 0G Chain or another blockchain, as the 0G network adopts a modular structure, allowing 0G Chain and 0G Storage to independently connect to external ecosystems).

The dual-channel separation of data storage and publishing not only enhances data security but also allows for the parallel processing of most transactions in these two channels that are not related, thereby improving the efficiency of AI data storage.

◆ Storage Nodes and Storage KV Nodes

0G Storage operates based on distributed storage nodes and storage KV nodes.

- The distributed storage nodes (Storage Node) are responsible for the log layer, storing data that needs to be permanently retained.

- The distributed storage KV nodes (Storage KV) are responsible for the key-value layer, processing and storing the structured data corresponding to the permanently stored data.

▌ 0G DA Serving More Scenarios

0G DA (Data Availability) is a technical module providing decentralized DA services for AI applications, Rollups, and more scenarios.

◆ Non-Permanent Storage

DA is essentially data storage, similar to the data storage channel of 0G Storage, using erasure coding technology to divide data into several segments distributed across nodes, storing a certain amount of redundant segments according to the erasure coding algorithm, ensuring that complete data is available even if some nodes fail.

The functional difference between the two is that 0G Storage is for permanent storage, while 0G DA is for non-permanent storage.

The implementation difference is that 0G Storage achieves key data indexing and efficient data reading in decentralized storage through the key-value layer, while 0G DA retrieves DA data through retrieval nodes.

◆ Four Types of Nodes in 0G DA

0G DA is based on distributed DA nodes, DA encoding nodes, DA retriever nodes, and DA client nodes.

- The distributed DA nodes (DA Node) validate the segments into which DA data is divided and execute aggregate signatures; once more than 2/3 of the signatures have been obtained, the DA nodes store the DA data.

- The distributed DA encoding nodes (DA Encoder) are responsible for executing the erasure coding algorithm to segment the DA data.

- The DA retriever nodes (DA Retriever) are responsible for retrieving DA data.

- The DA client nodes (DA Client) are responsible for uploading and downloading DA data according to the needs of the service objects. Currently, the 0G DA client node program supports DA services for Arbitrum Nitro Rollup and OP Stack Rollup, connecting to Arbitrum and OP Stack networks to fulfill their DA needs.
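The "more than 2/3 of the signatures" rule for DA nodes reduces to a simple threshold check. The sketch below shows only that check, with a hypothetical function name; it does not model signature aggregation or verification.

```python
def has_quorum(signed: int, total: int) -> bool:
    """True when strictly more than 2/3 of the DA nodes have signed."""
    if not 0 <= signed <= total:
        raise ValueError("signed must be between 0 and total")
    return 3 * signed > 2 * total   # integer arithmetic avoids float rounding


for signed in (66, 67):
    print(signed, has_quorum(signed, total=100))
```

With 100 nodes, 66 signatures fall short and 67 are enough.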

╰┈➤0G's Decentralized Service Infrastructure

Based on the technical infrastructure, 0G has built a broader AI ecosystem. The 0G ecological infrastructure includes the 0G Compute, 0G Marketplace, and Alignment Nodes modules.

▌ Decentralized AI Computing Power Market—0G Compute

0G Compute is a decentralized AI computing power resource market.

GPU providers first register their services in the smart contracts of 0G Chain, including service types, prices, and some computing power metrics.

After AI users prepay fees, they issue computing requests to the smart contracts.

The smart contracts match suitable GPU services based on the specific needs of AI computation.

After GPU providers complete the computing tasks, they receive corresponding rewards.

The entire matching of AI computing power and financial settlement is executed by the smart contracts of 0G Compute, and the entire process is decentralized.
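The register, prepay, match, and settle flow above can be sketched off-chain in plain Python. Everything here is hypothetical: the class, the field names, the "cheapest eligible provider" matching rule, and the token units are assumptions for illustration, not the logic of 0G Compute's actual smart contracts.

```python
from dataclasses import dataclass


@dataclass
class Provider:
    name: str
    service_type: str
    price: int        # price per task, in arbitrary token units
    tflops: int       # advertised compute metric


class ComputeMarket:
    def __init__(self) -> None:
        self.providers: list[Provider] = []
        self.escrow: dict[str, int] = {}     # user -> prepaid balance
        self.earnings: dict[str, int] = {}   # provider -> rewards received

    def register(self, provider: Provider) -> None:
        self.providers.append(provider)

    def prepay(self, user: str, amount: int) -> None:
        self.escrow[user] = self.escrow.get(user, 0) + amount

    def match(self, user: str, service_type: str, min_tflops: int) -> Provider:
        """Pick the cheapest registered provider that meets the request."""
        candidates = [
            p for p in self.providers
            if p.service_type == service_type
            and p.tflops >= min_tflops
            and p.price <= self.escrow.get(user, 0)
        ]
        if not candidates:
            raise LookupError("no affordable provider meets the request")
        return min(candidates, key=lambda p: p.price)

    def settle(self, user: str, provider: Provider) -> None:
        """Release payment from escrow once the task is reported complete."""
        self.escrow[user] -= provider.price
        self.earnings[provider.name] = (
            self.earnings.get(provider.name, 0) + provider.price
        )


market = ComputeMarket()
market.register(Provider("gpu-a", "inference", price=8, tflops=100))
market.register(Provider("gpu-b", "inference", price=5, tflops=60))
market.prepay("alice", 10)
chosen = market.match("alice", "inference", min_tflops=80)
market.settle("alice", chosen)
print(chosen.name, market.escrow["alice"], market.earnings)
```

Because the user's funds are escrowed before matching, the provider can be paid without trusting the user, and the user's balance only moves when a task is settled.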

Additionally, 0G Compute has established cooperative relationships with other decentralized computing DePIN networks. Currently, Aethir and io.net are global GPU providers for 0G Compute.

Compared to traditional centralized computing service providers, the DePIN network can offer lower-cost AI computing power services due to its decentralized nature.

▌ Decentralized AI Products and Services Market—0G Marketplace

0G Marketplace is a necessary module for the AI ecosystem, serving as a trading platform for AI models, AI Agents, AI applications, and other AI solutions, similar to fetch.ai.

This module is still under development, and once completed, it will also be facilitated by smart contracts to match supply and demand and execute settlements.

▌ Decentralized Regulation and Governance Module—Alignment Nodes

Alignment Nodes are a key module in the 0G ecosystem, providing decentralized regulation of the ecosystem. The name emphasizes alignment, that is, consistency in how the 0G ecosystem is regulated.

The so-called consistency includes the alignment of node goals and work, the consistency of AI models with expectations, and the consistency of AI output results with quality standards.

Alignment Nodes also supervise the work of various nodes, promptly identifying performance issues or misconduct. They participate in decentralized governance to advance the 0G ecosystem.

╰┈➤0G's AI Innovation Enhancement Technology Framework

0G is committed to achieving a better AI Layer1 ecosystem, innovating more technology frameworks suitable for AI.

▌ NFT and AI Metadata Binding

0G has innovatively developed the ERC-7857 standard, which binds node-related metadata to the node operation permission NFT. When the node operation permission NFT is transferred, the related metadata is also transferred, and sensitive metadata can be transferred in an encrypted form.

The binding of NFTs and AI metadata forms smart NFTs, which can be seamlessly integrated into the 0G Chain, 0G Storage, 0G DA, and 0G Compute modules, among others.

This integration ensures that AI products like AI Agents maintain their functionality and privacy while retaining complete decentralized attributes within the 0G ecosystem.

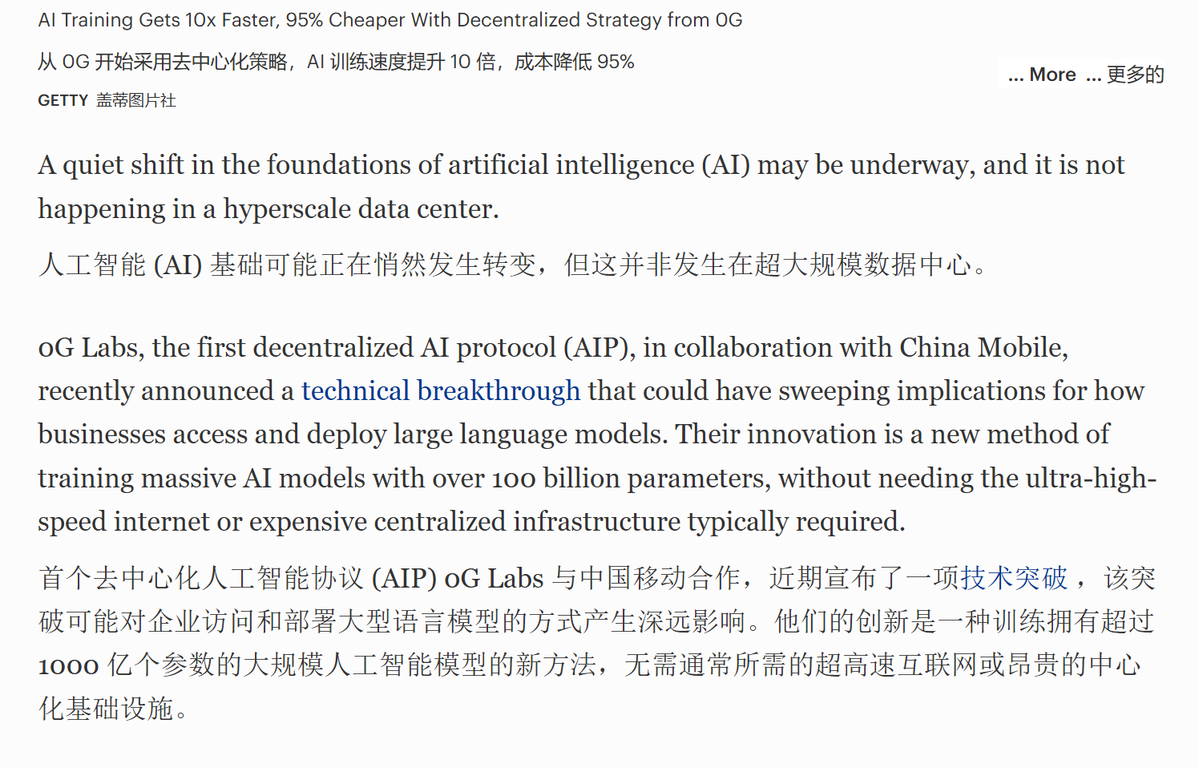

▌ High-Speed, Low-Cost AI Training Framework

According to reports from Forbes Digital Assets Channel, 0G has partnered with China Mobile to announce a high-speed, low-cost AI training framework—DiLoCoX.

The DiLoCoX (Distributed Low Communication Exchange) framework provides a theoretical basis for distributed training of AI, primarily considering communication during the distributed training process. The technical logic involves segmenting the AI model across nodes and adaptive gradients.

After segmenting the AI model, it allows for parallel computation and communication, enabling local updates of the AI model by nodes without waiting for all nodes to synchronize, thus improving training speed and inter-node communication speed.

The so-called adaptive gradient refers to selecting more suitable parameters during AI model training based on the current situation, thereby reducing the frequency or data scale of inter-node communication and lowering communication costs.
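The core idea of local updates with infrequent synchronization can be illustrated with a toy example. This is not the DiLoCoX algorithm: it omits model segmentation and adaptive gradients entirely and simply has several workers take gradient steps on their own data, averaging their parameters only every `sync_every` steps. The function name, the quadratic objective, and all values are assumptions chosen for illustration.

```python
def train(targets: list[float], total_steps: int, sync_every: int, lr: float = 0.1):
    """Each worker minimises (w - target)^2 / 2 locally; workers average
    their parameters every sync_every steps."""
    weights = [0.0 for _ in targets]      # one local copy of the parameter per worker
    sync_rounds = 0
    for step in range(1, total_steps + 1):
        # Local update: no communication needed.
        weights = [w - lr * (w - t) for w, t in zip(weights, targets)]
        if step % sync_every == 0:
            mean = sum(weights) / len(weights)   # the only communication step
            weights = [mean] * len(weights)
            sync_rounds += 1
    return weights[0], sync_rounds


targets = [1.0, 2.0, 3.0, 6.0]            # optimum of the averaged objective is 3.0
frequent, frequent_rounds = train(targets, total_steps=200, sync_every=1)
sparse, sparse_rounds = train(targets, total_steps=200, sync_every=10)
print(frequent_rounds, sparse_rounds)
print(round(frequent, 3), round(sparse, 3))
```

In this deliberately simple linear problem, synchronizing ten times less often reaches the same answer. Real models are not this forgiving, which is why frameworks in this family need additional techniques to keep accuracy while cutting communication.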

╰┈➤0G Ecosystem Construction

The positive trends of AI projects like Bittensor and fetch.ai are not necessarily due to robust infrastructure or powerful technology, but rather because there are real AI products participating in the ecosystem.

0G possesses a strong infrastructure and technical framework, making ecosystem construction based on this foundation crucial.

▌ Testnet

Although 0G is in the testing phase, the scale of the ecosystem is already beginning to take shape.

The 0G Chain testnet has 24 million wallets participating, generating nearly 6 million blocks, with over 300 million on-chain interactions and a total of 16.8 million smart contracts.

The total number of miners in the 0G Storage testnet is 12,798, with 567 active miners. The total storage capacity is 2.5TB, with over 7 million uploaded files.

▌ Node Sales

As of August 2025, over 85,000 0G alignment nodes have been sold to 8,500 operators worldwide, raising over $30.6 million.

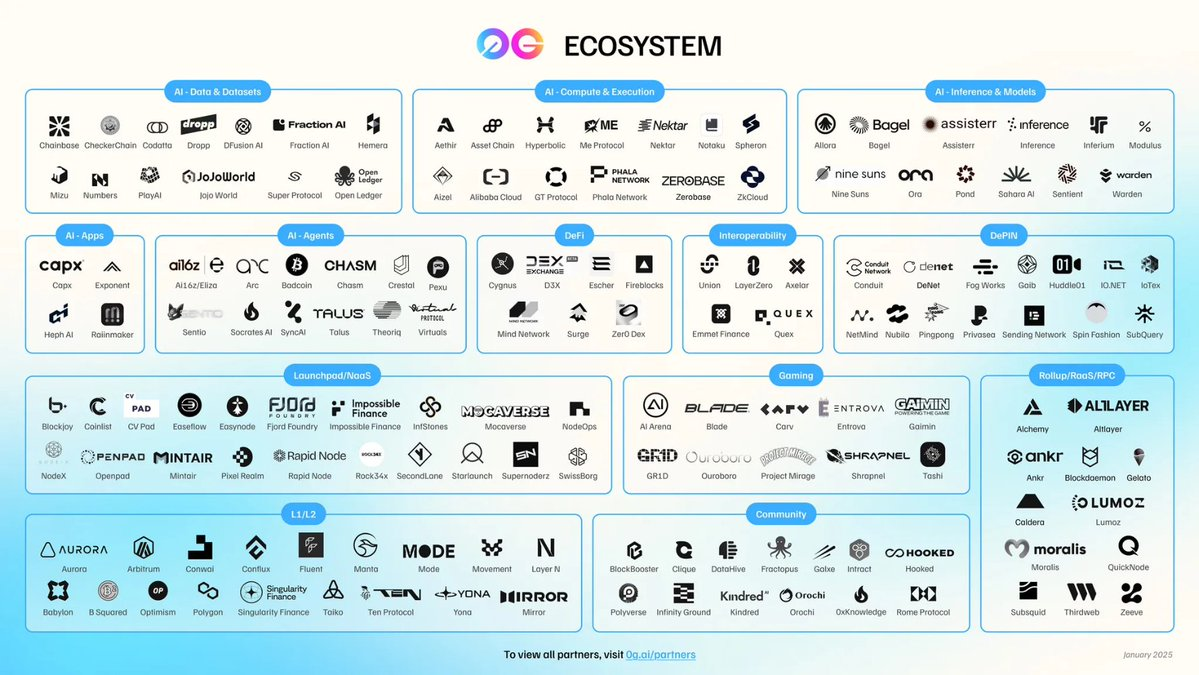

▌ Cooperation and Application Construction

In addition to AI-related projects, 0G has established partnerships with various projects in L1, L2, infrastructure, interoperability, DePIN, DeFi, gaming, and more. There are over 300 cooperative projects, with more than 450 integrations. Among them, 0G Chain has over 130 integrations, 0G Storage has over 80, 0G DA has over 80, and 0G Compute has over 60.

As of the first half of 2025, AI applications based on the 0G infrastructure include, but are not limited to:

- 16 AI projects for enterprise procurement (Oro), intelligent driving (Warden), monetizable open-source AI model training (Bagel), etc.

- 10 AI reasoning and model projects such as Sentient.

- 14 AI Agent projects such as Virtuals and Eliza OS.

- 19 AI data and analytics projects such as Public AI and Mind Network…

▌ Hackathon

At the 2025 ETHGlobal Hackathon, 0G sponsored a $5,000 reward, and 12 fully functional projects successfully integrated with 0G. Among them, 11 projects used 0G Compute, and 3 projects used 0G Storage.

▌ Accelerator and Ecosystem Incentives

0G has launched an ecosystem accelerator and growth program, which serves as a boost and driving force for the development of the 0G ecosystem. The accelerator provides technical, marketing, and other support for ecosystem projects. The growth program includes an ecosystem fund of $88.88 million.

╰┈➤ Final Thoughts

Why is 0G considered a better AI Layer1 ecosystem?

First, 0G is a vast ecosystem composed of a complex technical system and ecological products. In terms of positioning, it can be compared to Bittensor today and, in the future, to Bittensor plus fetch.ai.

Second, 0G has a modular structure from the overall to the local level, allowing for flexible updates and replacements, better matching the needs of the AI industry. At the same time, the modules can also be used independently, connecting to AI ecosystems and non-AI ecosystems outside of 0G.

Third, 0G Chain is highly compatible with EVM while also possessing the high efficiency of an enterprise-level blockchain, allowing it to better respond to the requirements of AI products.

Fourth, 0G Storage and 0G DA fill the gap in decentralized storage for AI Layer1, achieving a fully decentralized AI Layer1. The design of 0G's decentralized storage technology is more suitable for serving AI, surpassing Bittensor on the infrastructure technology level.

Fifth, the innovation of smart NFTs and AI training frameworks enables 0G to better serve AI applications and models.

Sixth, the management and incentives of the 0G ecosystem are advancing the AI ecosystem of 0G. Data on cooperation and product construction, testnets, and node sales indicate that 0G's AI ecosystem is striving to catch up…
