Text | Sleepy.md

In the era when telegrams were charged by the word, ink and paper were money. People learned to compress thousands of words down to the bare minimum: a terse "reply quickly" was worth more than a long letter, and a single "safe" was the weightiest reassurance of all.

Later, the telephone entered homes, but long-distance calls were charged by the minute. Our parents' long-distance calls were always brief. They hung up as soon as the important matters were settled, and if the conversation started to drag, the thought of the phone bill cut off any budding small talk.

Later still, broadband entered homes, and internet access was charged by the hour. People watched the timer on the screen, opening and closing web pages quickly and daring only to download videos, since streaming was a luxury at the time. At the end of every download progress bar lay both the longing to connect with the world and the dread of an "insufficient balance" warning.

The units of billing changed over and over, but the instinct to save money has remained unchanged throughout history.

Today, tokens have become the currency of the AI era. Yet most people have not learned to be frugal with them, because we have not figured out how to weigh gains and losses inside algorithms we cannot see.

When ChatGPT first came out in 2022, almost no one cared what tokens were. It was an all-you-can-eat era for AI: a mere $20 a month let you chat as much as you wished.

Recently, though, as AI Agents have taken off, tracking token spending has become something every Agent user must pay attention to.

Unlike a simple question-and-answer chat, a single Agent task flow can involve hundreds or even thousands of API calls, and every bit of the Agent's independent thinking comes at a cost. Each self-correction and each tool call moves the numbers on your bill. Before long, you find that the balance you topped up has run out, and you still don't know what the Agent actually did.

In real life, everyone knows how to save money. At the market, we know to clean off the muddy rotten leaves before weighing them; on the taxi ride to the airport, experienced drivers know to avoid the elevated roads during rush hour.

The logic of saving money in the digital world is no different, except the billing units have changed from "pounds" and "kilometers" to tokens.

In the past, saving was driven by scarcity; in the AI era, saving is about precision.

We hope this article can help you establish a method for saving money in the AI era, so that every penny you spend is used effectively.

Clean the rotten leaves before weighing

In the AI era, the value of information is no longer determined by its breadth, but by its purity.

AI is billed by how much text it reads, measured in tokens. Whether you feed it genuine insight or meaningless boilerplate makes no difference: if it reads it, you pay for it.

Therefore, the first way to save tokens is to ingrain the concept of "signal-to-noise ratio" into your subconscious.

Every word, picture, and line of code you feed to AI costs money. So before handing anything over, ask yourself: how much of this does the AI really need, and how much of it is muddy rotten leaves?

For example, long openings like "Hello, please help me...", repetitive background introductions, and stale, uncleaned code comments are all muddy rotten leaves.

The most common waste of all, though, is simply throwing whole PDFs or webpage screenshots at AI. You may think this saves effort, but in the AI era "saving effort" often means "paying more."

Besides the main content, a complete PDF also contains headers, footers, charts, hidden watermarks, and a large amount of formatting data used for typesetting. None of this helps AI understand your problem, yet all of it incurs costs.

Next time, convert the PDF into clean Markdown text before feeding it to AI. When a 10MB PDF becomes a 10KB clean text file, you not only cut the bulk of the cost but also let the AI process it much faster.
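A converter does most of the work, but even plain extracted text usually still carries running headers, footers, and page numbers. The sketch below assumes you already have each page's text as a string, whatever extraction tool produced it. It drops lines that repeat on most pages, drops bare page numbers, and gives a rough token estimate based on the common rule of thumb of about 4 characters per token for English. That heuristic is an approximation and does not reproduce any particular model's tokenizer.

```python
import re
from collections import Counter


def clean_extracted_text(pages: list[str], min_repeat_ratio: float = 0.6) -> str:
    """Strip running headers/footers and bare page numbers from per-page text."""
    # Count each distinct non-empty line once per page it appears on.
    counts = Counter()
    for page in pages:
        counts.update({line.strip() for line in page.splitlines() if line.strip()})

    # A line that shows up on most pages is almost certainly a header or footer.
    threshold = max(2, int(len(pages) * min_repeat_ratio))
    repeated = {line for line, n in counts.items() if n >= threshold}

    kept = []
    for page in pages:
        for line in page.splitlines():
            text = line.strip()
            if text in repeated:
                continue
            # Bare page numbers such as "12", "Page 3" or "3/40".
            if re.fullmatch(r"(page\s*)?\d+(\s*/\s*\d+)?", text, flags=re.IGNORECASE):
                continue
            kept.append(text)

    # Collapse the runs of blank lines that the removals leave behind.
    return re.sub(r"\n{3,}", "\n\n", "\n".join(kept)).strip()


def rough_token_estimate(text: str) -> int:
    # Rule of thumb for English: about 4 characters per token. Real tokenizers differ.
    return max(1, len(text) // 4)


pages = [
    "ACME Annual Report\nRevenue grew 12%.\n1",
    "ACME Annual Report\nCosts fell 3%.\n2",
    "ACME Annual Report\nOutlook stable.\nPage 3",
]
cleaned = clean_extracted_text(pages)
print(cleaned)
print(rough_token_estimate(cleaned), "tokens (rough)")
```

Both rules are blunt instruments. The page-number rule also drops a legitimate line that consists only of a number, and a genuinely repeated content line, such as a recurring table header, is removed along with the real headers. Check the output before relying on it.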

Images are another money-consuming beast.

In the logic of visual models, AI does not care whether your photo is beautiful; it only cares how many pixels the image contains.

Claude's official formula is a good example: the tokens an image consumes ≈ (width in pixels × height in pixels) ÷ 750.

A 1000×1000-pixel image consumes about 1,334 tokens. At Claude Sonnet 4.6's input price of $3 per million tokens, that comes to about $0.004 per image. Compress the same image to 200×200 pixels and it consumes only about 54 tokens, costing about $0.00016: a 25-fold difference.

Many people simply throw high-definition phone photos or 4K screenshots at AI, unaware that the tokens a single such image consumes could pay for the AI to read several pages of text. Suppose the task is only to recognize text in the image or make a simple visual judgment, such as reading the amount on an invoice, reading text in a manual, or checking whether there is a traffic light in the picture. Then 4K resolution is pure waste, and compressing the image to the smallest resolution that is still legible is enough.
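The arithmetic above is easy to automate. The sketch below applies Claude's (width × height) ÷ 750 approximation, rounding up to match the figures quoted. It also computes an aspect-preserving downscale so that the longer side fits a chosen limit. The 800-pixel limit in the example is an arbitrary illustration, not a recommended value. Anthropic's vision documentation also states that images whose long edge exceeds roughly 1568 pixels are scaled down before processing, so Claude's bill for a huge original is capped at about 1,600 tokens. Other providers use different formulas.

```python
import math


def image_tokens(width_px: int, height_px: int) -> int:
    """Approximate Claude image tokens: (width × height) / 750, rounded up."""
    return math.ceil(width_px * height_px / 750)


def image_cost_usd(width_px: int, height_px: int, usd_per_million_input: float = 3.0) -> float:
    """Input cost of one image; the $3 default is the Sonnet input price quoted above."""
    return image_tokens(width_px, height_px) * usd_per_million_input / 1_000_000


def fit_within(width_px: int, height_px: int, max_long_edge: int) -> tuple[int, int]:
    """Shrink (never enlarge) so the longer side is at most max_long_edge, keeping the aspect ratio."""
    scale = min(1.0, max_long_edge / max(width_px, height_px))
    return max(1, round(width_px * scale)), max(1, round(height_px * scale))


for w, h in [(1000, 1000), (200, 200)]:
    print(f"{w}x{h}: {image_tokens(w, h)} tokens, ${image_cost_usd(w, h):.5f}")

# A 4000x3000 phone photo of an invoice, shrunk to an illustrative 800-pixel long edge.
small = fit_within(4000, 3000, 800)
print(small, image_tokens(*small), "tokens")
```

Whether a given size is still legible depends on the image. Shrink a test image step by step and keep the smallest version the model still reads correctly.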

However, the easiest way to waste tokens on the input side is not file format but inefficient phrasing.

Many people treat AI like a real person and fall into a chatty, social style. They start with "help me write a webpage," wait for AI to produce a rough draft, then add details and go back and forth again and again. This toothpaste-squeezing style of dialogue forces AI to regenerate content over and over, and every round of revisions adds to the token bill.

Engineers at Tencent Cloud have found that, for the same request, a toothpaste-squeezing multi-turn dialogue often consumes 3 to 5 times as many tokens as stating the request clearly in one go.

The real way to save money is to drop this inefficient social probing and state your requirements, boundary conditions, and reference examples clearly in one go. Don't spend effort explaining "what not to do," since negations are often harder for the model to act on than affirmations. Tell it directly "how to do it," and give a clear, correct example.
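A fixed template makes "say it all in one go" a habit. The sketch below is a hypothetical helper. Its section names are illustrative and do not follow any platform's required format. It packs the task, the requirements, constraints phrased as what to do, and a reference example into a single message.

```python
def build_one_shot_prompt(task: str, requirements: list[str],
                          constraints: list[str], example: str) -> str:
    """Assemble the whole request into one message: goal, specifics, limits, and a model answer."""
    lines = [f"Task: {task}", "", "Requirements:"]
    lines += [f"- {item}" for item in requirements]
    lines += ["", "Constraints (stated as what to do):"]
    lines += [f"- {item}" for item in constraints]
    lines += ["", "Example of the expected output:", example]
    return "\n".join(lines)


prompt = build_one_shot_prompt(
    task="Write a single-page product landing page",
    requirements=["One self-contained HTML file", "Hero section with an email signup form"],
    constraints=["Use plain CSS in a <style> tag", "Keep the file under 150 lines"],
    example='<section class="hero"><h1>Product name</h1><form>...</form></section>',
)
print(prompt)
```

The constraints are written as positive instructions ("use plain CSS") rather than prohibitions, following the advice above.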

At the same time, if you know where the target is, clearly state it to AI; do not let AI act as a detective.

When you tell AI to "find the user-related code," it has to search, analyze, and guess extensively in the background. When you tell it directly to "look at the file src/services/user.ts," it uses far fewer tokens. In the digital world, handing over the information you already have is the biggest saving of all.

Don't pay for AI's "politeness"

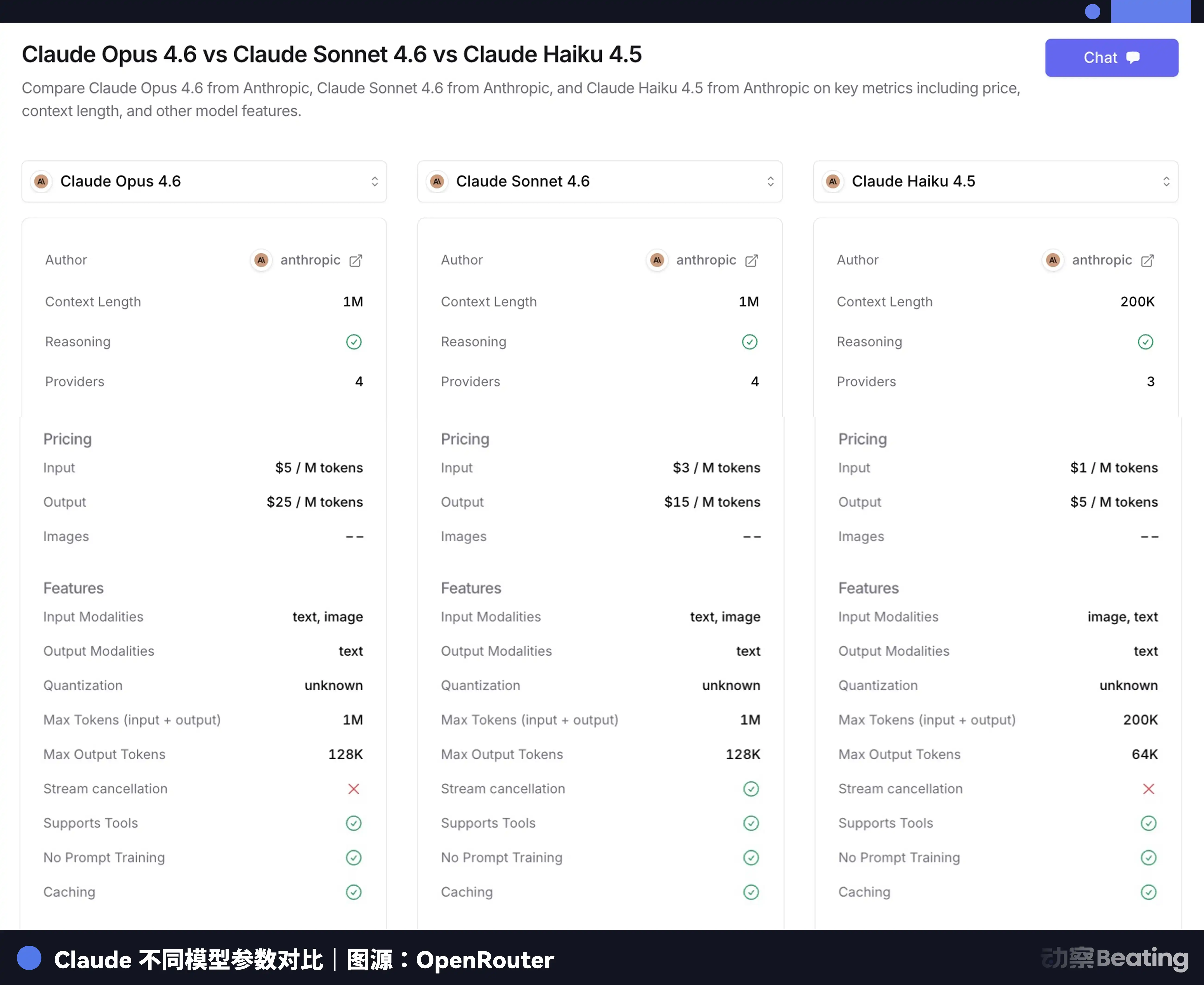

There is an unwritten rule in model billing that many people don't know about: output tokens usually cost 3 to 5 times as much as input tokens.

This means that what AI says is far more expensive than what you say to it. With Claude Sonnet 4.6's pricing, for example, input costs only $3 per million tokens, while output jumps to $15: a fivefold difference.
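To see how the asymmetry plays out on a single call, here is a small calculator. It uses the Sonnet prices quoted above as defaults. The token counts in the example are made up for illustration.

```python
def call_cost_usd(input_tokens: int, output_tokens: int,
                  usd_per_m_input: float = 3.0, usd_per_m_output: float = 15.0) -> float:
    """Cost of one API call; prices are USD per million tokens."""
    return (input_tokens * usd_per_m_input + output_tokens * usd_per_m_output) / 1_000_000


# A call that reads 2,000 tokens and writes 1,000.
total = call_cost_usd(2_000, 1_000)
output_part = call_cost_usd(0, 1_000)
print(f"total ${total:.4f}, of which output ${output_part:.4f} ({output_part / total:.0%})")
```

The reply is only half as long as the input, yet it accounts for about 71% of the cost of the call.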

Polite openings like "Okay, I fully understand your request; now I will start answering for you..." and courteous endings like "I hope the above content helps you" are good manners in human conversation. On an API bill, though, they cost money while adding no information.

The most effective way to cut output waste is to set rules for AI. Use the system prompt to tell it plainly: no small talk, no explanations, no restating the request, just give the answer.

These rules only need to be set once to take effect in every dialogue; it is a real "one-time investment, perpetual benefit" financial management strategy. However, when establishing these rules, many fall into another trap: piling on long natural language instructions.

Engineers' practical data indicates that what makes instructions effective is not word count but density. One team compressed a 500-word system prompt to 180 words by cutting meaningless politeness, merging repeated commands, and restructuring paragraphs into concise bullet lists. Output quality barely changed, while the system prompt's token cost on every call dropped by 64%.
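The 64% is the saving on the system prompt itself. The saving on a whole call is smaller whenever the call also carries conversation history or documents. The quick check below treats word counts as a stand-in for tokens, which is only an approximation, and assumes 1,500 tokens of other input as an arbitrary example.

```python
def input_saving(old_prompt: float, new_prompt: float, rest_of_input: float) -> float:
    """Fraction of a call's input tokens saved when only the system prompt is shortened."""
    return (old_prompt - new_prompt) / (old_prompt + rest_of_input)


print(input_saving(500, 180, 0))      # the system prompt on its own
print(input_saving(500, 180, 1_500))  # plus 1,500 tokens of conversation and documents
```

The first figure reproduces the 64%. The second shows that the same edit saves 16% of the input on a busier call. That is still worthwhile, because the saving repeats on every call.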

Another, more proactive control is to limit output length. Many people never set an output cap and let AI express itself freely, and this unrestrained output often blows the budget. You may need just one succinct sentence, yet AI produces an 800-word essay to demonstrate some "intellectual sincerity."
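With Anthropic's Messages API, both controls map onto request fields: standing rules go in `system`, and `max_tokens` is a hard ceiling on generated tokens. The sketch below only builds the JSON request body. The model ID is a placeholder and the rule wording is illustrative. Sending the request requires an API key and an HTTP client or the official SDK.

```python
import json

# Standing rules as short bullets; the wording is illustrative.
SYSTEM_RULES = "\n".join([
    "- Answer directly. No greeting, no restating the question, no closing remarks.",
    "- No explanations unless asked.",
    "- If the answer is a single value or a list, return only that.",
])


def build_request(question: str, model: str = "YOUR_MODEL_ID", max_tokens: int = 300) -> dict:
    """JSON body for a Messages API call with fixed rules and an output cap."""
    return {
        "model": model,            # placeholder: substitute a real model ID
        "max_tokens": max_tokens,  # generation stops here, which bounds the output cost
        "system": SYSTEM_RULES,    # written once, reused by every call built with this helper
        "messages": [{"role": "user", "content": question}],
    }


print(json.dumps(build_request("What is the capital of Australia?"), indent=2))
```

At $15 per million output tokens, a 300-token cap limits each reply to at most $0.0045. If the cap is too tight, the reply is cut off mid-sentence, and the API reports a `stop_reason` of `"max_tokens"`. Size the cap to the kind of answer you expect.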

If you want pure data, make AI return it in a structured format rather than as lengthy natural-language description. For the same information, JSON usually consumes far fewer tokens than prose paragraphs, because structured data drops redundant conjunctions, modal phrasing, and explanatory modifiers and keeps only the dense logical core. In the AI era, what you pay for is the value of the result, not the AI's meaningless self-explanations.
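Here is a minimal sketch, assuming you ask for a fixed set of keys. The format rule goes into the request, and the reply is parsed strictly, so any prose wrapped around the JSON raises an error instead of slipping through. The keys, the invoice values, and the sample prose reply are all invented for illustration. Character counts are only a rough proxy for tokens.

```python
import json

# Appended to the request; the wording and keys are illustrative.
FORMAT_RULE = ('Return only a JSON object with the keys "invoice_no", "amount", '
               '"currency" and "due". No other text.')


def parse_reply(reply: str) -> dict:
    """Strict parse: json.loads raises ValueError if the model wrapped the object in prose."""
    return json.loads(reply)


reply = '{"invoice_no":"INV-0042","amount":1280.5,"currency":"USD","due":"2025-07-01"}'
prose = ("Sure! I have reviewed the invoice. The invoice number is INV-0042, the amount due "
         "is 1,280.50 US dollars, and payment is due on July 1, 2025. Let me know if you need anything else.")

data = parse_reply(reply)
print(data["amount"], "|", len(reply), "characters as JSON vs", len(prose), "as prose")
```

Indented, pretty-printed JSON carries more whitespace than the compact form shown here. If you send structured data back to the model, `json.dumps(data, separators=(",", ":"))` produces the compact form.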

Additionally, AI's "overthinking" is also consuming your account balance at a furious pace.

Some advanced models offer an "extended thinking" mode, in which they carry out extensive internal reasoning before answering. These reasoning tokens are billed at the output rate, so they are expensive.

This mode is designed for complex tasks that need deep logical reasoning, yet many people leave it on for simple questions too. For tasks that don't need deep reasoning, tell AI plainly "no explanation needed, just give the answer," or turn extended thinking off manually, and you can save a lot of money.
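On Claude's API, as documented at the time of writing, extended thinking is off unless the request includes a `thinking` object with a `budget_tokens` value. That budget must be at least 1,024 tokens and lower than `max_tokens`. The sketch below turns thinking on only for tasks the caller flags as complex. Deciding what counts as complex is up to you, and the default budget and answer sizes are arbitrary.

```python
def request_options(task_is_complex: bool, answer_tokens: int = 1024,
                    thinking_budget: int = 4096) -> dict:
    """Generation settings for a Messages API call: extended thinking only for complex tasks."""
    if not task_is_complex:
        # No "thinking" field: the model answers directly, with no billed reasoning tokens.
        return {"max_tokens": answer_tokens}
    budget = max(1024, thinking_budget)  # the documented minimum budget is 1,024 tokens
    return {
        # budget_tokens must be lower than max_tokens, so reserve room for the visible answer on top.
        "max_tokens": budget + answer_tokens,
        "thinking": {"type": "enabled", "budget_tokens": budget},
    }


print(request_options(False))
print(request_options(True))
```

Merge these options into the request body alongside `model`, `system`, and `messages`.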

Don't let AI dig up old accounts

Large models do not have true memory; they are just furiously sifting through old accounts.

This is a foundational mechanism many people are unaware of. Every time you send a new message in a conversation window, AI does not start understanding from your latest sentence, but rather re-reads all the content you’ve discussed previously—including every round of dialogue, every piece of code, and every referenced document—before providing you with an answer.

In the token bill, this "reviewing" is by no means free. Each new message makes the AI reread the entire history, so even a one-word follow-up costs more with every round, and the total for the whole conversation grows roughly with the square of the number of rounds. The heavier the conversation history, the more expensive each of your questions becomes.

Someone tracked 496 real conversations of more than 20 messages each. At the 1st message, the AI read 14,000 tokens on average, at a cost of about 3.6 cents per message. By the 50th message, the average had risen to 79,000 tokens, at about 4.5 cents per message, roughly 25% more. Meanwhile the context kept growing: by the 50th message, AI had to reprocess a context 5.6 times as large as at the 1st.
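A toy model shows why splitting conversations helps. It assumes, purely for illustration, that every turn adds a fixed 1,326 tokens to a 14,000-token start, a figure chosen so that turn 50 lands near the 79,000 tokens reported above. It also assumes the second window needs none of the first window's history. It ignores caching, which is one reason real per-message costs rise more slowly than raw token counts.

```python
def context_reads(turns: int, first_context: int, added_per_turn: int) -> list[int]:
    """Tokens the model rereads at each turn when the full history is resent every time."""
    return [first_context + added_per_turn * turn for turn in range(turns)]


one_long = context_reads(50, 14_000, 1_326)
two_short = context_reads(25, 14_000, 1_326) * 2  # the same 50 turns split across two windows

print(one_long[0], one_long[-1])     # tokens read at the first and last turn
print(sum(one_long), sum(two_short)) # total tokens read over all 50 turns
```

Per-turn reads grow linearly, so the total grows with the square of the number of turns. In this model, splitting the same 50 turns into two windows cuts the tokens read from about 2.32 million to about 1.50 million, roughly 36% less.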

The simplest solution to this problem is to build one habit: one task, one conversation window.

Once a topic is finished, promptly open a new conversation; don't treat AI as an always-open chat window. This habit sounds simple, yet many people struggle with it, worrying "what if I need to refer back to old content?" In practice, those "what if" moments rarely come up, while keeping the window open for them means paying several times more for every new message.

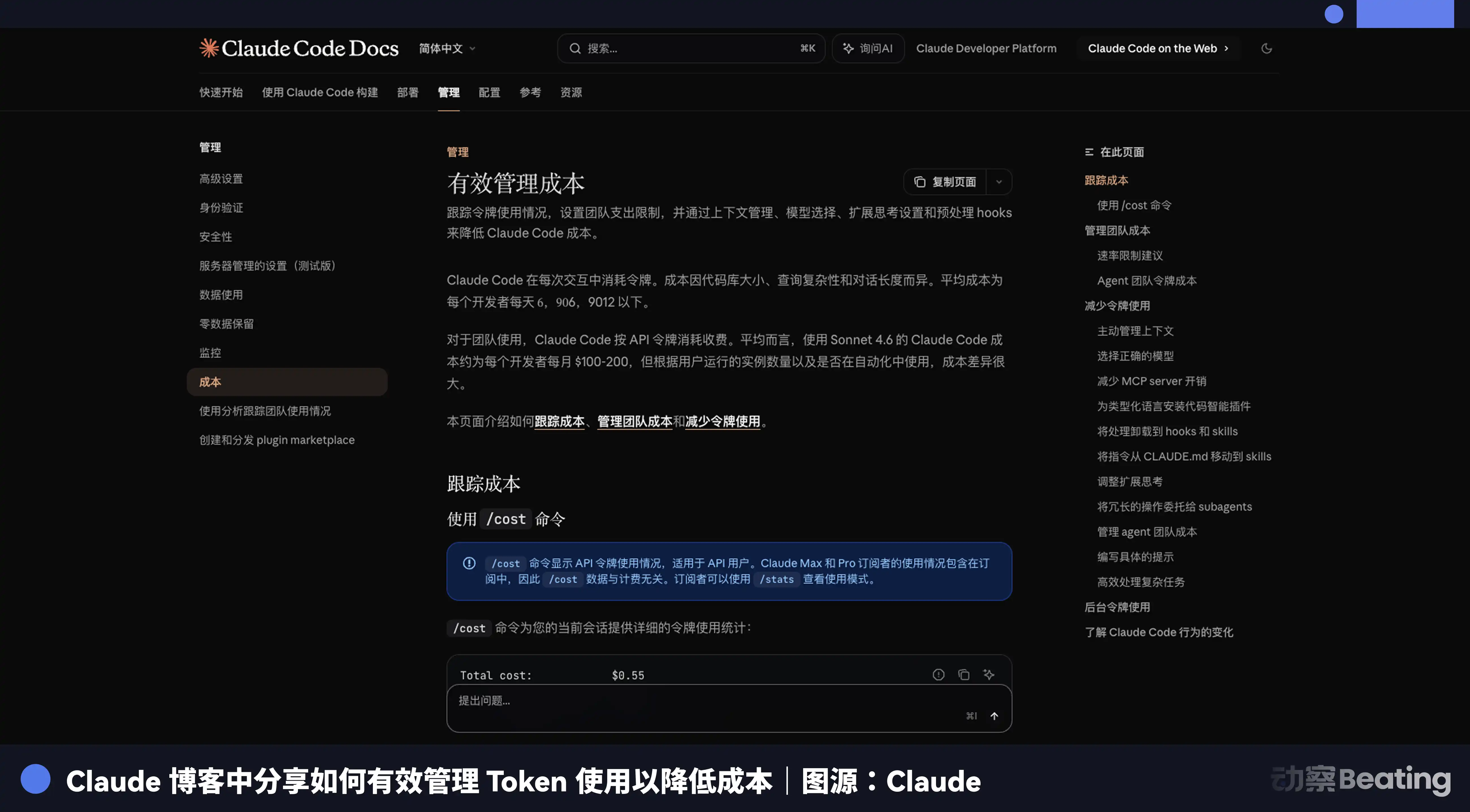

When a conversation really does need to continue but its context has grown long, use tools that offer compression. Claude Code has a /compact command that condenses lengthy conversation history into a brief summary, a kind of cyber decluttering.

Another saving mechanism is called Prompt Caching. If every conversation reuses the same system prompt or refers to the same reference document, the platform can cache that content and charge a small cache-read fee on later calls instead of the full price each time.

Anthropic's official pricing shows that cache hits are priced at 1/10 of the normal price. OpenAI's Prompt Caching can similarly reduce input costs by about 50%. A paper published in January 2026 on arXiv tested long tasks across various AI platforms and found that Prompt Caching could reduce API costs by 45% to 80%.

This means that the first time you feed a given piece of content to AI, you pay full price. On Anthropic you pay slightly more, since writing to the cache costs 1.25 times the base input price. Each later call that hits the cache pays only one-tenth. For users who reuse the same standard documents or system prompts every day, this feature can save a significant number of tokens.
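A worked comparison makes the trade-off concrete. The multipliers follow Anthropic's published pricing for its default five-minute cache at the time of writing: writes cost 1.25 times the base input price and hits cost 0.1 times. The sketch assumes every call after the first is a hit. That holds only if calls arrive before the cache entry expires, since by default it lasts about five minutes and is refreshed on each hit. The call count and prefix size are arbitrary examples.

```python
def repeated_prefix_cost(calls: int, prefix_tokens: int, usd_per_m_input: float = 3.0,
                         write_mult: float = 1.25, hit_mult: float = 0.10) -> tuple[float, float]:
    """USD spent on a prefix resent on every call: (without caching, with caching).

    Assumes the first call writes the cache and every later call is a hit.
    """
    per_token = usd_per_m_input / 1_000_000
    uncached = calls * prefix_tokens * per_token
    cached = prefix_tokens * per_token * (write_mult + hit_mult * (calls - 1))
    return uncached, cached


uncached, cached = repeated_prefix_cost(calls=20, prefix_tokens=10_000)
print(f"without cache ${uncached:.4f}, with cache ${cached:.4f}")
```

For 20 calls with a 10,000-token prefix, the prefix costs about $0.09 instead of $0.60, roughly 84% less. The saving applies to the cached prefix only, not to the new messages or the output.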

However, Prompt Caching has a prerequisite: your system prompt and reference documents must stay identical in content and order, and they must sit at the very beginning of the prompt. Any change to that prefix invalidates the cache and sends you back to full-price billing. So if you have a fixed set of work standards, write them down once and avoid casual edits.
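On Anthropic's API, the cached prefix is marked explicitly with a `cache_control` block. OpenAI applies its caching automatically to long enough prompts, so there the only requirement is the stable prefix. Below is a sketch of an Anthropic-style request body with placeholder text and a placeholder model ID. Prefixes shorter than a model-specific minimum length are not cached at all.

```python
STANDARDS = "...your fixed work standards, several thousand tokens long..."  # placeholder text


def build_cached_request(question: str, standards: str = STANDARDS,
                         model: str = "YOUR_MODEL_ID") -> dict:
    """Messages API body whose stable prefix (the standards) is marked for caching."""
    return {
        "model": model,  # placeholder model ID
        "max_tokens": 1024,
        "system": [
            {
                "type": "text",
                "text": standards,  # must be identical on every call to keep hitting the cache
                "cache_control": {"type": "ephemeral"},  # cache the prompt up to and including this block
            }
        ],
        # Only the part that changes goes after the cached prefix.
        "messages": [{"role": "user", "content": question}],
    }


first = build_cached_request("Review this function for naming issues: ...")
second = build_cached_request("Summarize today's changes: ...")
assert first["system"] == second["system"]  # identical prefix, so the second call can reuse the cache
```

Editing even one character of `STANDARDS` produces a new prefix. The next call then pays the cache-write price again.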

The last context-management tip is on-demand loading. Many people love to stuff every specification, document, and important note into the system prompt "just in case."

But that means loading thousands of words of rules even for a relatively simple task, wasting a pile of tokens. Claude Code's official documentation recommends keeping the CLAUDE.md file within 200 lines. It also recommends splitting specialized rules for different scenarios into separate skill files that load only when the relevant scenario comes up. Keeping context lean is the highest respect you can show for computational power.
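Here is a minimal sketch of the on-demand idea, assuming hypothetical rule files and simple keyword triggers. It is not Claude Code's own mechanism for selecting skills. It only shows the same principle: load a scenario's rules when the task calls for them, and nothing otherwise.

```python
# Hypothetical layout: one rule file per scenario, plus trigger keywords.
RULE_FILES = {
    "frontend": "rules/frontend.md",
    "database": "rules/database.md",
    "release": "rules/release.md",
}
TRIGGERS = {
    "frontend": ("css", "component", "layout"),
    "database": ("sql", "migration", "schema"),
    "release": ("deploy", "changelog", "version bump"),
}


def rules_for(task: str) -> list[str]:
    """Return only the rule files whose trigger words appear in the task description."""
    text = task.lower()
    return [RULE_FILES[name] for name, words in TRIGGERS.items()
            if any(word in text for word in words)]


print(rules_for("Add a migration for the users schema"))  # database rules only
print(rules_for("Fix a typo in the README"))              # nothing extra to load
```

Substring matching is crude and can misfire on words that merely contain a trigger. A real setup would use clearer triggers or let the model choose from short descriptions.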

Don't drive a Porsche to buy vegetables

Different AI models have vastly different prices.

Claude Opus 4.6 charges $5 per million input tokens and $25 per million output tokens, while Claude Haiku 3.5 charges only $0.80 and $4: a difference of more than 6 times. Using the top-tier model for data collection and formatting is not only slow but also very expensive.

A smarter approach is to bring the familiar idea of division of labor into AI and assign different tasks to models at different price points.

In the real world, you wouldn't hire a six-figure expert to lay bricks on a construction site, and the same goes for AI. Claude Code's official documentation suggests using Sonnet for most programming tasks, Opus for complex architectural decisions and multi-step reasoning, and Haiku for simple sub-tasks.

A more concrete plan is a two-stage workflow. In the first stage, use a free or inexpensive base model for the initial grunt work: data collection, formatting cleanup, draft generation, and simple classification and summarization. In the second stage, feed the distilled, high-purity result to a top model for core decisions and deep refinement.

For example, if you need to analyze a 100-page industry report, you could first use Gemini Flash to extract key data and conclusions from the report and compile it into a 10-page summary, then pass that summary to Claude Opus for in-depth analysis and judgment. This two-stage workflow allows you to greatly reduce costs without compromising quality.

A step beyond simple stage-by-stage processing is a deeper division of labor based on task decomposition. A complex engineering task can often be broken into several independent sub-tasks, each matched with the most suitable model.

In a coding task, for instance, a cheap model could first handle the scaffolding and template code, leaving only the core logic to the expensive model. Each sub-task has a clean, focused context, which makes the results more accurate and the costs lower.
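Below is a minimal routing sketch under stated assumptions. The sub-task labels and model IDs are hypothetical. The tier prices reuse the Haiku 3.5, Sonnet 4.6, and Opus 4.6 figures quoted in this article; the report example above uses Gemini Flash for the first stage, so the cheap tier here is only a stand-in. The token counts loosely mirror the report example and are invented.

```python
from typing import Optional

# Illustrative tiers: model IDs are placeholders; prices are USD per million input/output tokens.
TIERS = {
    "cheap":   {"model": "CHEAP_MODEL_ID",   "in": 0.80, "out": 4.0},
    "default": {"model": "DEFAULT_MODEL_ID", "in": 3.00, "out": 15.0},
    "top":     {"model": "TOP_MODEL_ID",     "in": 5.00, "out": 25.0},
}
# Which tier handles which kind of sub-task (hypothetical labels).
ROUTES = {
    "extract": "cheap", "format": "cheap", "classify": "cheap", "boilerplate": "cheap",
    "code": "default",
    "architecture": "top", "core_logic": "top", "final_analysis": "top",
}


def plan_cost(subtasks: list[tuple[str, int, int]], force_tier: Optional[str] = None) -> float:
    """Total USD for (kind, input_tokens, output_tokens) sub-tasks; force_tier sends all to one tier."""
    total = 0.0
    for kind, tokens_in, tokens_out in subtasks:
        tier = TIERS[force_tier or ROUTES.get(kind, "default")]  # unknown kinds go to the default tier
        total += (tokens_in * tier["in"] + tokens_out * tier["out"]) / 1_000_000
    return total


# A cheap pass condenses ~60k tokens of report into ~6k, then the top model analyzes the summary.
report_job = [("extract", 60_000, 6_000), ("final_analysis", 6_000, 2_000)]
print(f"routed ${plan_cost(report_job):.3f} vs all-top ${plan_cost(report_job, 'top'):.3f}")
```

Routed, this job costs about $0.15, against $0.53 when everything goes to the top tier. The real saving depends on how much the first stage shrinks the input and on whether its output is good enough for the second stage to build on.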

Don't spend tokens you don't need to

Everything above tackles the tactical question of "how to save money," but many people overlook a more fundamental question: does this action really require spending tokens at all?

The ultimate saving isn't about optimizing algorithms but rather about decision-making clarity. We have become accustomed to seeking universal solutions from AI, forgetting that in many scenarios, calling expensive large models is like using a cannon to shoot mosquitoes.

For instance, letting AI handle your email automatically means it treats each email as a separate task to understand, classify, and reply to, which is a massive token drain. Instead, take 30 seconds to scan your inbox, manually filter out the emails that clearly don't need AI, and hand only the rest over. The cost then drops to a small fraction of the original.
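The article describes a quick manual scan. A few plain rules can take over part of that filtering at no token cost before anything reaches the model. The sketch below is illustrative only. The `Email` type, the sender prefixes, and the subject phrases are hypothetical stand-ins for whatever your own inbox makes obvious.

```python
from dataclasses import dataclass


@dataclass
class Email:
    sender: str
    subject: str


# Hypothetical rules for mail that clearly needs no AI handling.
SKIP_SENDERS = ("noreply@", "no-reply@", "newsletter@")
SKIP_SUBJECT_PHRASES = ("unsubscribe", "receipt", "your order has shipped")


def needs_ai(mail: Email) -> bool:
    """Cheap, token-free check run before any model call."""
    if mail.sender.lower().startswith(SKIP_SENDERS):
        return False
    subject = mail.subject.lower()
    return not any(phrase in subject for phrase in SKIP_SUBJECT_PHRASES)


def triage(inbox: list[Email]) -> list[Email]:
    """Only the emails that survive the rule pass are sent to the model."""
    return [mail for mail in inbox if needs_ai(mail)]


inbox = [
    Email("noreply@shop.example", "Your order has shipped"),
    Email("alice@corp.example", "Question about the contract"),
    Email("news@site.example", "Weekly digest - unsubscribe anytime"),
]
print([mail.sender for mail in triage(inbox)])
```

Rules like these only remove the obvious cases. Anything ambiguous should still go to you or to the model.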

People in the telegram era knew exactly what each extra word cost, so weighing their words became an instinctive sense of scarce resources. The same holds in the AI era. Once you truly know what one more sentence from AI costs, you will naturally ask whether a task is worth handing to AI at all, whether it needs a top model or a cheaper one, and whether the current context is still useful.

This weighing is the most cost-effective skill of all. As computational power grows ever more expensive, the smartest use of AI is not to replace humans but to let AI and humans each do what they do best. When sensitivity to tokens becomes a reflex, you will have truly moved from being a servant of computational power to being its master.
