Original title: Who Signs? The Anthropic Paradox and the $40 Trillion Choice

Original author: Ashwin Gopinath

Compiled by: Peggy, BlockBeats

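Editor's note: How the future of AI agents diverges does not hinge on a leap in model capability. It hinges on a more basic design variable: who actually bears responsibility.

The author argues that "augmenting humans" and "replacing humans" are not two separate technical paths but two outcomes of the same system under different design choices. When a decision still requires a human signature and responsibility can be traced to a specific individual, AI is an amplifier; when that step is removed (auto-approval, skipped permissions), the system naturally slides toward replacement.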

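The article goes on to argue that the real value of an AI agent is not "getting the work done" but compressing a complex world into a "signable decision unit", so that a human can understand it and then take on the consequences. In practice, though, "permission fatigue" leads users to give up review step by step, from approving item by item to consenting by default, until the system bypasses the human altogether. This is a cognitive mechanism, not an individual failing.

The article therefore proposes two key constraints: first, every important decision must map to a specific person who is able to refuse; second, whoever profits from an agent's autonomy must answer for it when something goes wrong.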

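Once responsibility returns to the builder, the system's default logic changes. Under this framework the commercial narrative of AI is rewritten as well: rather than a market of a few giants that "replace half of all jobs", it becomes a distributed market of tools that "amplify human productivity", sized against roughly $40 trillion in global knowledge-labor income rather than against enterprise software spending.

In the end, the article reduces the question to a minimal but pointed choice: does AI serve people, or is it an end in itself? That answer is quietly being decided by every product design detail.

The original text follows: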

TL;DR

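· The "augmented future" and the "replacement future" use the same models and the same tools. What separates them is a design choice about who the consequences finally land on.

· An agent's real job is not to finish tasks for people. It is to compress a complex world into the smallest faithful "decidable unit" that some person can put their name to. If that compression is done right, everything else follows.

· That "some person" must be a specific, clearly identifiable individual. Vague, generalized responsibility collapses quickly under load, so every action with real consequences must be traceable to one person who had a genuine right to refuse.

· "Permission fatigue" makes agent systems drift toward "replacing humans" along their own evolutionary path. The "augmented future" is therefore not what happens by default; it has to be deliberately designed against that tendency.

· If you build an agent and profit from its autonomy, you should also bear the corresponding liability when that autonomy goes wrong. Once the cost truly lands on the builder, the default behavior of the whole system changes.

· The market that forms when "humans must still bear responsibility" is likely an order of magnitude larger than the heavily funded narrative of "vertical agents replacing half of all jobs", because it is anchored to the total wages of high-skill labor rather than to enterprise software budgets.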

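Claude Code provides a flag named --dangerously-skip-permissions. The name is honest; the flag does exactly what it says. An agent running with the flag is no more capable than one running without it. What changes is that a path that used to run through a human now goes around the human.

The flag is itself a confession. It admits that, with identical underlying capability, the same system can run in a mode that "augments humans" or in a mode that "quietly replaces humans". The replacement mode does not need a different model; it only needs the "consent" step moved out of the way.

That is the argument in compressed form. In the most capable agent systems shipping today, much of the gap between "augmentation" and "effective replacement" comes from removing approvals, not from inventing a new category of capability. Whether the next decade looks more like "a world of augmented humans" or "a world where autonomous agents act on our behalf" depends less on model capability than on whether the people building these systems treat the "human in the loop" as the core of the system or as friction.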

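Does AI Augment People, or Route Around Them?

Beneath every technical question sits a non-technical one that few people are willing to ask in public: is AI meant to augment humans, or is AI itself the goal?

The two answers imply genuinely different futures. The "augmentation" position holds that value lives in the human, and that the agent's job is to let that person go further and decide better. The "AI as the goal" position holds that intelligence in the world is itself the value, and that humans are ultimately just an inefficient carrier for it. Most agent products silently encode one of these positions, and surprisingly few founders have been asked directly which camp they belong to.

Capability design and consent design are both still evolving. This essay focuses on the consent side because that is the variable builders can actually control today, and because once generation becomes cheap, what retains economic value are the properties that cannot be stripped from a person: judgment, taste, relationships, responsibility, and the willingness to sign a decision and bear its consequences. Of these, liability is the most concrete, and the only one already backed by centuries of enforcement infrastructure.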

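Liability: The Dividing Line Between Augmentation and Replacement

The structural rule that separates the "augmented future" from the "replacement future" can be stated roughly as follows: any action with real consequences that an agent carries out must be traceable, through a recorded chain, to a specific person who saw the relevant context and genuinely had the chance to say "no".

Generalized responsibility fails this test quickly. "The company is responsible" covers nothing specific in operational terms. "The user clicked agree" is not consent to anything in particular. "A human reviewed the process" lets that person review something entirely different from what finally shipped. What is needed is a specific, named person who had the decision placed in front of them, had the option to refuse, and chose not to.

This sounds like bureaucracy until you notice that liability has properties no alternative has. Capability gains cannot optimize it away; a smarter model does not change who ends up sued, fined, or jailed. It forces the interface to expose a "refusal point". It scales naturally with the stakes. And it is the strongest constraint that applies across domains, with enforcement infrastructure already in place: courts, insurers, professional boards, regulators. Licensing regimes, fiduciary duty, and industry regulation do real work too, but their scope is narrower, and all of them assume the question of who is liable has already been settled.

By contrast, the AI-layer alternatives fail the same test. "Alignment" is not enforceable; we cannot even agree on what it means. "Explainability" can be satisfied in form while remaining unsatisfied in substance. "Human in the loop" has been hollowed out into "there is a person somewhere". Liability binds because the enforcement infrastructure behind it was built centuries before the technology arrived.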

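Permission Fatigue Pushes the System Toward "Replacement"

There is a gradient that pushes the system toward "replacement", and it pushes hard. Every permission prompt costs attention. The agent is usually right. For any single decision, the expected payoff of approving without reading is often positive. So a rational user learns to click approve faster, then to approve in batches, then to turn on auto-approve for one class of actions, then to extend it to more classes, then to flip the dangerous switch for one session, and finally to forget the switch exists.

I flipped the switch in my second week of using Claude Code, and by the third week I no longer noticed it. Every developer I know who has used Cursor or Devin for a long time has a similar story. The same pattern shows up with cookie banners, EULAs, TLS warnings, and mobile permission requests. Repeated low-stakes consent decisions eventually converge on "unconditional consent". This is a cognitive trait, not a moral failing.

The "augmented future" will not happen automatically. An agent system that is not carefully designed heads toward replacement by default, because users themselves will choose the replacement path one convenience at a time. The other future has to be designed against that gradient.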

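An Agent's Value Is Not Execution but Getting a Human to "Sign"

What is truly valuable about an agent is not completing the work itself but compressing the work into a form that can be signed.

A frontier model can easily write a 4,000-line code commit, draft a 30-page contract, produce a clinical note, or execute a trade. But the bottleneck on these artifacts having real effect is not generating them; it is whether a human is able to bear the consequences once they land. A commit nobody truly understands becomes a liability the moment it is merged. A contract nobody read becomes a time bomb the moment it is signed. A clinical note that no licensed physician actually stands behind does not even count as a valid record in most regulated healthcare systems.

Under the "augmentation" framing, the agent does everything except the signing: it reads ten thousand pages of context, writes four thousand lines of code, works through thirty plausible options, and then compresses all of it into the smallest faithful representation from which a person can decide "yes" or "no" and put their name at the bottom of the document.

Think of the agent as a press secretary. The president signs; the press secretary's job is all the preparation that comes before the signature.

This is actually a harder engineering problem than "let the system do the work autonomously". The ability to generate content is improving fast, while the ability to "faithfully compress a decision" lags far behind. The teams that win in the "augmentation market" will be the ones that can deliver the shortest, most faithful decision summary for high-liability situations.

The genuinely unsolved part of that sentence is the word "faithful". A summary a human can read is only valuable if the compression has not distorted the information. Whether that can be verified programmatically is the truly hard technical problem of the "augmented future", and most people have not even begun to confront it.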

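Some basic methods are emerging:

· Teach-back tests, which confirm that the human's understanding matches the original content

· Forcing minority or dissenting views to appear in the summary

· Counterfactual tests ("If you refuse, what will the agent do?")

· Reproducibility checks (can another agent produce the same summary from the same context?)

None of these is close to solved. The teams that solve them first will build a moat that improvements in model capability will not easily erode.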

Building Liability Tiers for AI Actions

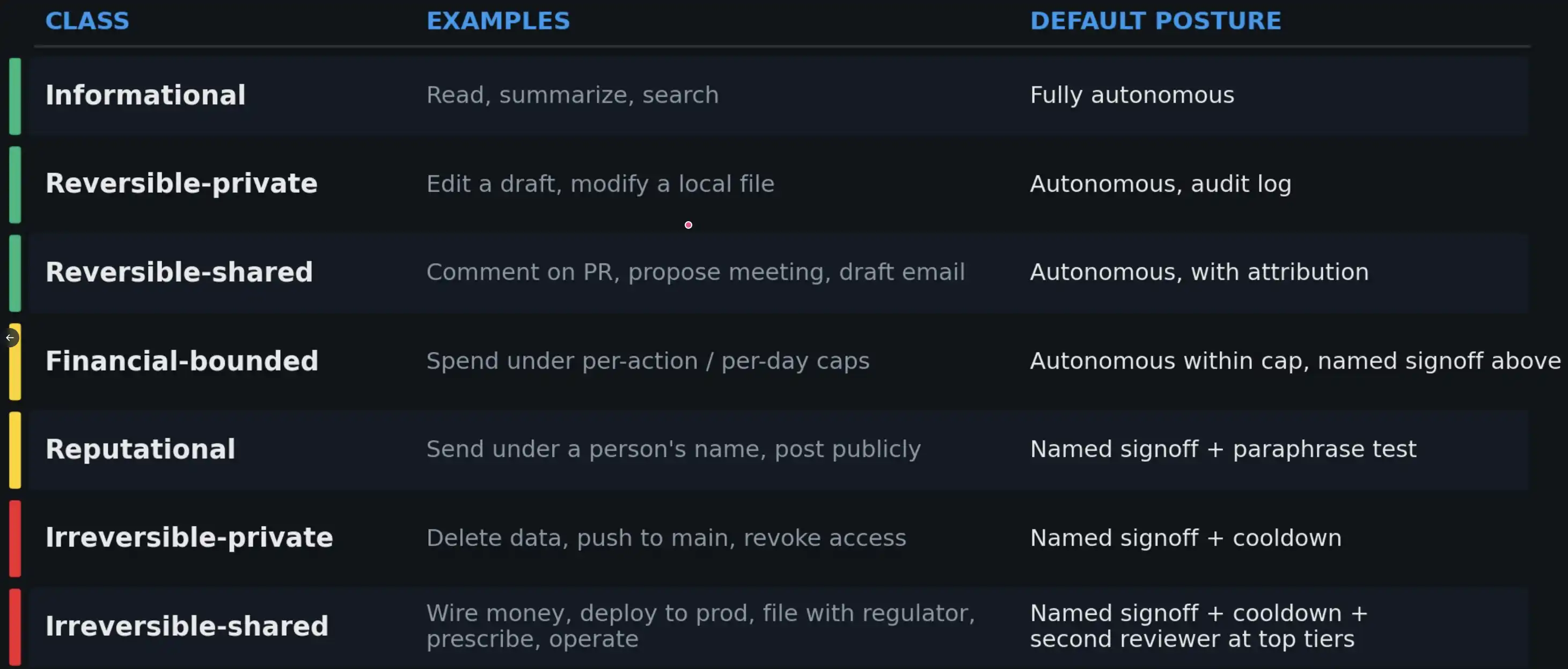

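If liability is doing structural work, then every action an agent carries out should carry a "liability tier", and that tier should determine the minimum sign-off mechanism the action requires.

No such standard has been widely established yet, but it probably should be.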

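From top to bottom, the figure lists seven tiers in order of rising risk:

1. Informational: for example, reading, summarizing, searching. Fully automatic.
2. Reversible-private: for example, editing drafts or local files. Automatic, with logging.
3. Reversible-shared: for example, commenting on a PR, sending a meeting invite, writing an email. Automatic, with attribution showing who sent it.
4. Financial-bounded: for example, small payments under a cap. Automatic within the cap; a human signature above it.
5. Reputational: for example, publishing content under your name or posting publicly. Requires confirmation by the person themselves, plus demonstrated understanding (a teach-back test).
6. Irreversible-private: for example, deleting data, pushing to the main branch, revoking access. Human signature plus a cooldown period.
7. Irreversible-shared (public, high risk): for example, wiring money, deploying to production, medical decisions, regulatory filings. Signature by the person themselves, plus a cooldown period, plus a second reviewer.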
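The tier list above can be sketched as a lookup from tier to minimum sign-off. This is only an illustrative sketch, not an existing standard: the names `Tier`, `Signoff`, and `required_signoff`, and the `amount`/`cap` parameters, are hypothetical, and the mapping simply mirrors the seven tiers as described in the text.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    """Hypothetical encoding of the seven tiers, ordered by rising risk."""
    INFORMATIONAL = 1
    REVERSIBLE_PRIVATE = 2
    REVERSIBLE_SHARED = 3
    FINANCIAL_BOUNDED = 4
    REPUTATIONAL = 5
    IRREVERSIBLE_PRIVATE = 6
    IRREVERSIBLE_SHARED = 7


@dataclass(frozen=True)
class Signoff:
    """Minimum mechanisms an action needs before it may run."""
    human_signature: bool = False
    log: bool = False
    attribution: bool = False
    teach_back: bool = False
    cooldown: bool = False
    second_reviewer: bool = False


# Fixed requirements for every tier except the financial one,
# which depends on the amount relative to its cap.
_FIXED = {
    Tier.INFORMATIONAL: Signoff(),
    Tier.REVERSIBLE_PRIVATE: Signoff(log=True),
    Tier.REVERSIBLE_SHARED: Signoff(attribution=True),
    Tier.REPUTATIONAL: Signoff(human_signature=True, teach_back=True),
    Tier.IRREVERSIBLE_PRIVATE: Signoff(human_signature=True, cooldown=True),
    Tier.IRREVERSIBLE_SHARED: Signoff(
        human_signature=True, cooldown=True, second_reviewer=True
    ),
}


def required_signoff(tier: Tier, amount: float = 0.0, cap: float = 0.0) -> Signoff:
    """Return the minimum sign-off for an action in the given tier."""
    if tier == Tier.FINANCIAL_BOUNDED:
        # Automatic within the cap, human signature above it.
        return Signoff(human_signature=amount > cap)
    return _FIXED[tier]
```

For example, `required_signoff(Tier.FINANCIAL_BOUNDED, amount=150, cap=100)` asks for a human signature, while the same call with `amount=50` does not. Keeping the mapping in data rather than scattered conditionals makes the policy itself something a named person can review and sign.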

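An "approval posture" matched to consequences is the only realistic way to manage permission fatigue. The higher tiers need more binding forms of active engagement (teach-back tests, cooldown periods, a second reviewer), because in those situations the real failure mode is not the agent recommending the wrong thing but the human approving without thinking.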

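Do You Care?

All of the above points to one fundamental, founder-level question: do you care whether humans remain part of this future? Many current design decisions in agent products are in effect a "silent vote" on that question, cast by people who often will not admit they are making a choice.

If you do care, the design constraints are not vague. Build a liability tier system. Make "refuse" a first-class feature. Measure the quality of the summary the agent hands to a human, not how autonomously it completes tasks without intervention. Bind every action with real consequences to a specific person in a tamper-resistant log.

The technical work is real and doable. The hard part is being willing to do it, because the "augmentation" path demos less impressively and is less aggressive under per-seat pricing than the alternative.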
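One way to picture "binding each consequential action to a specific person in a tamper-resistant log" is a hash-chained record. The sketch below is an assumption-laden illustration, not a description of any product's log format: `SignedActionLog`, its field names, and the sample identities are all hypothetical, and a real system would additionally need authenticated signatures and durable storage.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(body: dict) -> str:
    """Hash an entry body deterministically."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


@dataclass
class SignedActionLog:
    """Append-only log where each entry commits to the one before it."""
    entries: list = field(default_factory=list)

    def append(self, action: str, signer: str, context_digest: str, approved: bool) -> dict:
        """Record who decided on an action, what context they saw, and their answer."""
        body = {
            "action": action,
            "signer": signer,                  # a named person, not "the company"
            "context_digest": context_digest,  # hash of the summary the signer was shown
            "approved": approved,              # refusals are recorded too
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Return False if any entry was altered or the chain was reordered."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

Because each entry embeds the hash of its predecessor, rewriting who signed an earlier action invalidates every later entry, which is what makes after-the-fact edits detectable rather than impossible.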

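The Anthropic Paradox: Most Vocal on Safety, Yet Easiest to Route Around the Human

Anthropic is a very clear case study of how this field drifts from within. That is not because it is unusually careless. On the contrary, it is because Anthropic is the most articulate about safety, which makes the gap between its "framework" and its "product surface" the easiest to see. Its Responsible Scaling Policy and its Constitutional AI work mainly constrain model behavior at training time. The default autonomy settings of the agents built on those models belong to a separate policy regime, and the convenient "dangerous switch" can be enabled from the default state with a single keystroke.

The pattern exists in most mainstream coding agents; Anthropic's case is simply the easiest to observe. This is the "Anthropic paradox": the lab that writes the clearest safety frameworks in the industry also offers the shortest path from "augmentation" to "replacement", and we can see the latter precisely because the former is so clear.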

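To be fair, in March of this year they launched "auto mode" as a middle path between manual approval and the dangerous switch. In this mode, every action is reviewed by a Sonnet 4.6 classifier before it executes. Their official write-up names the problem directly, calling it "approval fatigue", and gives a figure: in manual mode, users accept 93% of prompts. That is permission fatigue, quantified, and the diagnosis matches the analysis in this essay.

On the remedy, however, I part ways with them. "Auto mode" replaces human approval with model approval, which means the gradient "sliding toward replacement" has not stopped; it has only moved up a layer. The classifier can indeed block dangerous actions, but for the actions it lets through, no specific person actually bears responsibility. Anthropic itself acknowledges that "auto mode" does not eliminate risk and recommends running it in isolated environments. In other words, the question of who is liable remains open.

An obvious objection: if final responsibility has to land on an individual, is that not just manual mode, which is exactly what fatigue breaks through? The reason "liability on the builder" escapes the gradient is that it changes who pays for over-approval. Under the current structure, the user pays the cost of every careful read and the builder pays nothing, so defaults tilt toward reducing user friction and externalizing risk. Shift the cost of unreviewed actions to the builder and the whole calculation flips: the builder now has a direct economic incentive to design liability tiers, teach-back tests, and approval mechanisms that make low-risk decisions cheaper to sign and high-risk decisions more costly to sign. The gradient does not disappear, but its direction changes. No major lab has actually done this yet, including the one that has come closest to recognizing the problem.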

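If You Build the Agent, You Bear the Liability

If an agent's explicit purpose is to take actions in place of a human who used to take them, then the company that builds and operates the agent should bear the same liability the human would. The principle is not radical. It already applies to every industry that acts in the physical world: Toyota answers for its brakes, Boeing for its flight-control systems, Pfizer for its drugs, the bridge engineer for the bridge, the physician for the prescription. This model of liability exists in almost every legal system.

AI, however, currently enjoys a kind of "implicit immunity". Model providers claim to be mere tool suppliers. Application companies claim to be thin wrappers around the model. Users, for their part, signed everything away up front through arbitration clauses. When an agent system fails in a cascade (the Air Canada chatbot case, the Replit production-database deletion, or something like the 2012 Knight Capital trading glitch that lost $440 million in 45 minutes), the party that ends up absorbing the loss is often the one least able to bear it: the user. This allocation of liability will not survive the first major incident that comes with real sums and real paperwork.

The fix is simple to state: whoever builds the agent and profits from its autonomy should bear the consequences when it gets out of control. Once liability truly lands on the builder, permission prompts stop being "friction" and start being "insurance". The dangerous switch gets renamed, and the defaults change with it.

Willingness to accept liability for your own system is what separates a real industry from an "extractive" one.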

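Regulation as a "Steering Mechanism"

The market will not move toward the "augmented future" on its own. What actually steers it is usually regulators and insurance underwriters, and on the whole that is not necessarily a bad thing.

Europe is likely to be the earliest regulatory channel. The EU has clear precedent in rule-making (GDPR, the AI Act, the DMA), and its rules tend to be followed globally by default, because maintaining a separate product for non-EU markets usually costs more than simply complying with the European standard. A floor requiring that "every action with real consequences be ultimately confirmed by a named human with the right to refuse" is closer to a car crash-test standard than to an obstacle to technical progress.

The more immediate push comes from the insurance industry. Underwriters who price errors and omissions (E&O), directors and officers (D&O), and cyber coverage have to answer one question: when an agent acts under a user's authorization and causes a loss, how is liability determined? The structure that is easiest to make insurable is one with a named human in the chain. Systems without that structure will see the higher risk premium reflected in their insurance costs. For builders who would rather define the rules themselves than have regulators or insurers define them, the window is not wide.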

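The Market Logic Hidden by the Mainstream Narrative

The current mainstream narrative holds that vertical agents will absorb roughly half the jobs in the industries they touch, and that value will concentrate in a handful of vertically integrated agent companies: an "Anthropic" for law, an "Anthropic" for healthcare, an "Anthropic" for accounting. Nearly every multi-billion-dollar AI financing of the past year and a half rests on this assumption to some degree. It is the replacement logic in commercial dress, but its read on market structure is wrong, and that error directly shapes how capital is allocated.

The "augmentation" framing implies a different market shape. If every action with real consequences must ultimately land on a named person, then the unit being sold is not an "autonomous agent" but "amplified human capability". The physician who can handle three times the caseload at higher accuracy is the buyer. So is the lawyer who can cover ten times the deal flow, the engineer who ships five times faster, and behind them the accountants, underwriters, analysts, architects, surgeons, teachers, loan officers, journalists, and pharmacists.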

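This market is larger because it depends on broad distribution rather than on concentration. The sensible valuation anchor is not the enterprise software budget but the total wages of the labor being "amplified". Global enterprise IT spending is about $4 trillion a year (Gartner data); total compensation for the world's skilled, licensed, and knowledge workers is roughly an order of magnitude higher, about $40 trillion (an estimate based on International Labour Organization data, excluding the low-skill portion). AI companies will obviously not capture the whole wage pool, but they can capture part of the productivity dividend. Capturing even a single-digit percentage share would support a market comparable in size to the entire enterprise software market today, and that is a floor, not a ceiling. How large the market finally gets depends on one key design decision: who actually bears responsibility.

The eventual winners look more like tools than replacements. They price on "the human amplified", not "the job replaced"; they embed in existing professional workflows instead of displacing them; and there will be thousands of them, not a handful. The final shape of this market is closer to SaaS than to cloud infrastructure. We are still at the very early stage of the deployment curve: the familiar adoption chart, on an axis that extends another decade, is just the leftmost few pixels. And the shape of those "pixels" is being set right now by design choices in a small number of products.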
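The sizing argument is simple arithmetic, and it can be checked with a few lines. The sketch below uses only the two figures quoted in the text (about $4 trillion in enterprise IT spending and about $40 trillion in skilled-labor compensation); the function name and the three sample shares are illustrative assumptions, not estimates from the author.

```python
def captured_revenue(wage_pool_trillions: float, share_percent: int) -> float:
    """Revenue in trillions of dollars for a given percentage share of the wage pool."""
    return wage_pool_trillions * share_percent / 100


ENTERPRISE_IT_SPEND = 4.0   # trillions USD per year, figure cited in the text
KNOWLEDGE_WAGE_POOL = 40.0  # trillions USD per year, figure cited in the text

for share in (1, 5, 9):  # single-digit shares, chosen only for illustration
    revenue = captured_revenue(KNOWLEDGE_WAGE_POOL, share)
    ratio = revenue / ENTERPRISE_IT_SPEND
    print(f"{share}% of the wage pool = ${revenue:.1f}T, {ratio:.2f}x enterprise IT spend")
```

Under these figures, a mid-single-digit share of the wage pool works out to a few trillion dollars a year, which is the same order of magnitude as the enterprise IT benchmark the text compares against.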

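The Choice: Keep Humans Accountable, or Make Them Disappear?

Keeping humans liable forces the system architecture to organize itself around "augmenting humans". Remove the human from the chain of responsibility and the system slides toward "replacement" by default, even though everyone in the room, if asked outright, might not choose that outcome.

The real question is not whether some actions should be fully automated. The framework above already grants that; purely informational reads, for example, can be automated. The question is how that boundary moves as risk rises, and who decides where it sits. In today's most advanced agent systems, the path from "augmentation" to "effective replacement" is remarkably short, often a single flag or a single default setting. The work that matters is making sure that switch is always treated as the "dangerous option" rather than gradually becoming the default under pressure from convenience.

If builders do this work on their own initiative, we will enter an "augmented future" relatively smoothly. If they do not, regulators and insurance underwriters will do it for them, and we will end up in the same place.

Whether you care is a design choice, and that choice determines what you are building. Every founder shipping an agent product today has to answer, in public, a question they seem reluctant to face: is what you are building augmentation, or replacement?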

