Original | Odaily Planet Daily (@OdailyChina)

Author | Azuma (@azuma_eth)

On the evening of February 27, OpenAI announced that it had closed its latest funding round of $110 billion at a pre-money valuation of $730 billion.

The round was funded by three major backers. Amazon committed $50 billion (an initial $15 billion, with the remaining $35 billion to follow in the coming months once certain conditions are met), NVIDIA committed $30 billion (which will flow back to NVIDIA through OpenAI's procurement of a total of 5 GW of computing power), and SoftBank also committed $30 billion.
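As a quick sanity check on these figures, the arithmetic can be reproduced in a few lines of Python. The amounts are those reported above, in billions of US dollars. The post-money valuation and the implied stake are not stated in the text; they are derived here from the standard definition (post-money = pre-money + new investment), under the assumption that the full $110 billion counts as new money in this round.

```python
# All figures in billions of US dollars, as reported in the article.
PRE_MONEY = 730

amazon_initial = 15        # paid up front
amazon_conditional = 35    # paid later, once conditions are met
nvidia = 30
softbank = 30

amazon_total = amazon_initial + amazon_conditional
round_total = amazon_total + nvidia + softbank

# Derived, not reported: assumes the whole round is new money.
post_money = PRE_MONEY + round_total
implied_stake_pct = round(round_total / post_money * 100, 1)

print(f"Amazon total: {amazon_total}")
print(f"Round total:  {round_total}")
print(f"Post-money:   {post_money}")
print(f"Implied stake of new money: {implied_stake_pct}%")
```

Under that assumption, the three commitments add up to the announced $110 billion, and the round implies a post-money valuation of $840 billion.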

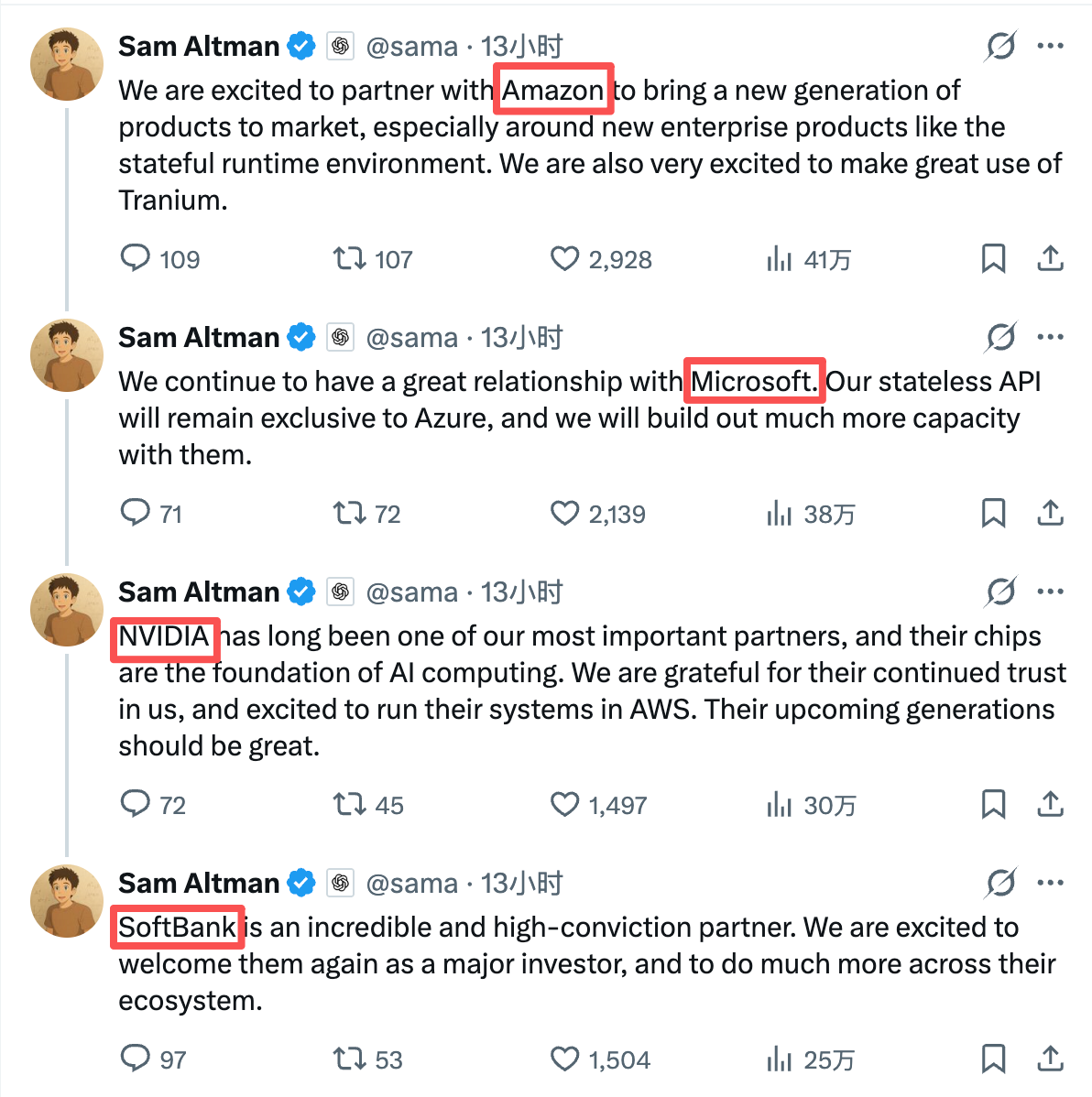

After the round closed, OpenAI co-founder and CEO Sam Altman thanked the three backers on his personal X account. Notably, the order in which he gave thanks was Amazon, Microsoft, NVIDIA, SoftBank. Microsoft, the "old" shareholder and key partner that did not put in money this time, was named right after Amazon, which committed the largest investment.

Aakash Gupta, an overseas blogger who has long tracked the AI sector, pointed out that while most people are fixated on the astronomical $110 billion figure, the most important information in Sam Altman's post lies in two overlooked technical terms, "Stateless API" and "Stateful Runtime Environment," which were secured by Microsoft and Amazon respectively.

The Backdrop of Technical Terms: The Present and Future of AI

The core difference between Stateless API and Stateful Runtime Environment lies in the key words "Stateless" and "Stateful."

The "Stateless" in Stateless API means that the server keeps no persistent state across requests. Each invocation completes one inference: you ask once, the AI responds once, and when the lifecycle of that request ends, the system retains no context and does not keep running. The "Stateful" in Stateful Runtime Environment means an execution environment that persists over time: Agents have historical memory, can stay alive between requests, collaborate across tasks, and carry out long-running work.
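The contrast can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not any vendor's actual API: `fake_model`, `stateless_call`, and `StatefulSession` are hypothetical names invented here, and the "model" simply reports how many messages it was shown.

```python
from dataclasses import dataclass, field


def fake_model(messages: list[str]) -> str:
    """Hypothetical stand-in for a model: reports how much context it saw."""
    return f"reply to {messages[-1]!r} (saw {len(messages)} message(s))"


def stateless_call(prompt: str) -> str:
    """Stateless: the request carries only its own prompt; nothing is kept."""
    return fake_model([prompt])


@dataclass
class StatefulSession:
    """Stateful: the environment outlives each request and keeps its history."""
    history: list[str] = field(default_factory=list)

    def send(self, prompt: str) -> str:
        self.history.append(prompt)        # remember the request
        reply = fake_model(self.history)   # the model sees all prior context
        self.history.append(reply)         # remember the response as well
        return reply


if __name__ == "__main__":
    # Two stateless calls: the second knows nothing about the first.
    print(stateless_call("Summarize the contract."))
    print(stateless_call("Now translate it."))

    # Two calls in one session: the second sees the earlier exchange.
    session = StatefulSession()
    print(session.send("Summarize the contract."))
    print(session.send("Now translate it."))
```

In the stateless case the caller must resend any context it wants the model to see, whereas in the stateful case the environment itself holds that context, which is what allows an Agent to continue a task across many steps.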

Stateless API is currently the mainstream form of LLM commercialization. Industries such as finance, retail, manufacturing, and healthcare mostly adopt AI by embedding it into existing systems in this form (for example, Q&A assistants, document summarization, and search enhancement). The advantage of this model is that enterprises can layer AI capabilities onto their existing structures with little friction, improving functionality without significantly restructuring their organization or processes. However, as model capabilities converge, computing costs keep falling, and price competition intensifies, the token-billed Stateless API is likely to move toward standardization and commoditization, with its margins at risk of continued compression.

In contrast, the Stateful Runtime Environment is still limited in commercial scale, but it represents more than simple "functional optimization." It is a shift in business paradigm: it can not only answer questions but also act as digital labor that actually executes tasks. This means the budget it draws on will extend beyond interface invocation fees to cover automation, process management, and even part of labor costs. For this reason, market expectations for the Stateful Runtime Environment far exceed its current scale.

Aakash Gupta also noted that in 2026 and 2027, nearly all enterprise roadmaps will revolve around "autonomous agent workflows" rather than one-off API calls. Companies that invest heavily in AI will increasingly lean toward buying systems that can run continuously, collaborate across tools, and maintain context over the long term.

In the simplest terms, Stateless API represents the present, while Stateful Runtime Environment represents the future.

What Do Microsoft and Amazon Each Gain?

On the day the financing was completed, Microsoft and Amazon each announced their latest collaboration agreements with OpenAI.

Microsoft said in a statement that the terms of the partnership it announced with OpenAI in October 2025 remain unchanged (those terms include OpenAI purchasing $250 billion worth of Azure services). Azure remains the exclusive cloud provider for OpenAI's Stateless API, and any Stateless API calls arising from OpenAI's collaboration with any third party (including Amazon) will be hosted on Azure. OpenAI's first-party products, including Frontier, will continue to be hosted on Azure.

Amazon, in its own announcement, said AWS will work with OpenAI to build a Stateful Runtime Environment powered by OpenAI models and offer it to AWS customers through Amazon Bedrock, helping businesses build generative AI applications and Agents at production scale. AWS will also become the exclusive third-party cloud distribution provider for OpenAI Frontier. The existing long-term agreement between AWS and OpenAI, worth $38 billion, will be expanded to $100 billion over 8 years, with OpenAI consuming 2 GW of Trainium computing power through AWS infrastructure to support the Stateful Runtime Environment, Frontier, and other advanced workloads. OpenAI and Amazon will also develop customized models to support Amazon's customer-facing applications.

Comparing the two announcements makes the current picture clear.

Microsoft is locking in today's cash flow with a $250 billion agreement and exclusive service rights. Every time OpenAI's Stateless API is invoked, Azure collects behind the scenes: regardless of who the customer is or which channel they come through, the traffic ultimately returns to Azure. This creates a highly predictable cash flow, but the problem is the shrinking margin on the Stateless API. Invocation volumes may keep growing, yet actual profits may not hold up over the long term.

Amazon, for its part, has secured the underlying hosting rights of the AI Agent era with $50 billion in cash and a $100 billion expanded agreement. Once Agents become the core carriers of corporate productivity, the resources that are consumed over the long term (computing power, storage, scheduling systems, workflow orchestration, and cross-tool collaboration) will all be embedded in the AWS operating environment.

One controls the current cash flow, while the other bets on the future productivity structure.

OpenAI's Distributed Betting

Until that future actually arrives, no one knows whether Microsoft's and Amazon's choices are right or wrong. What is certain is that under these two agreements, each with clearly delineated interests, OpenAI's leverage is growing significantly.

In recent years, OpenAI has been highly dependent on Microsoft for cloud infrastructure. Microsoft is not only a major shareholder with a 27% stake but also the controller of that infrastructure. This tie gave OpenAI a real resource advantage early on, but it also meant that bargaining power naturally tilted toward Microsoft. Amazon's forceful entry will inevitably put Microsoft and Amazon in direct competition over the right to serve OpenAI in the future.

For OpenAI, this is a typical distributed betting strategy: avoid deep ties with any single cloud service provider, prevent future growth from being constrained entirely by one party, and use future business as a bargaining chip to negotiate better terms.

Neither Microsoft nor Amazon can afford to abandon OpenAI at present. When neither side is able to walk away, bargaining power naturally returns to OpenAI's hands.

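Disclaimer: This article represents only the author's personal views and does not represent the position or views of this platform. It is provided for information sharing only and does not constitute investment advice to anyone. Any dispute between users and the author is unrelated to this platform. If any article or image published on this page involves infringement, please email the relevant proof of rights and proof of identity to support@aicoin.com, and the platform's staff will review it.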